A major update to Image2Docker was released last week, which adds ASP.NET support to the tool. Now you can take a virtualized web server in Hyper-V and extract a Docker image for each website in the VM – including ASP.NET WebForms, MVC and WebApi apps.

Image2Docker is a PowerShell module which extracts applications from a Windows Virtual Machine image into a Dockerfile. You can use it as a first pass to take workloads from existing servers and move them to Docker containers on Windows.

The tool was first released in September 2016, and we’ve had some great work on it from PowerShell gurus like Docker Captain Trevor Sullivan and Microsoft MVP Ryan Yates. The latest version has enhanced functionality for inspecting IIS – you can now extract ASP.NET websites straight into Dockerfiles.

In Brief

If you have a Virtual Machine disk image (VHD, VHDX or WIM), you can extract all the IIS websites from it by installing Image2Docker and running ConvertTo-Dockerfile like this:

Install-Module Image2Docker

Import-Module Image2Docker

ConvertTo-Dockerfile -ImagePath C:\win-2016-iis.vhd -Artifact IIS -OutputPath c:\i2d2\iis

That will produce a Dockerfile which you can build into a Windows container image, using docker build.

How It Works

The Image2Docker tool (also called “I2D2”) works offline; you don’t need to have a running VM to connect to. It inspects a Virtual Machine disk image – in Hyper-V VHD or VHDX format, or Windows Imaging WIM format. It looks at the disk for known artifacts, compiles a list of all the artifacts installed on the VM and generates a Dockerfile to package the artifacts.

The Dockerfile uses the microsoft/windowsservercore base image and installs all the artifacts the tool found on the VM disk. The artifacts which Image2Docker scans for are:

IIS & ASP.NET apps

MSMQ

DNS

DHCP

Apache

SQL Server

Some artifacts are more feature-complete than others. Right now (as of version 1.7.1) the IIS artifact is the most complete, so you can use Image2Docker to extract Docker images from your Hyper-V web servers.

Installation

I2D2 is on the PowerShell Gallery, so to use the latest stable version just install and import the module:

Install-Module Image2Docker

Import-Module Image2Docker

If you don’t have the prerequisites to install from the gallery, PowerShell will prompt you to install them.

Alternatively, if you want to use the latest source code (and hopefully contribute to the project), then you need to install the dependencies:

Install-PackageProvider -Name NuGet -MinimumVersion 2.8.5.201

Install-Module -Name Pester,PSScriptAnalyzer,PowerShellGet

Then you can clone the repo and import the module from local source:

mkdir docker

cd docker

git clone https://github.com/sixeyed/communitytools-image2docker-win.git

cd communitytools-image2docker-win

Import-Module .\Image2Docker.psm1

Running Image2Docker

The module contains one cmdlet that does the extraction: ConvertTo-Dockerfile. The help text gives you all the details about the parameters, but here are the main ones:

ImagePath – path to the VHD | VHDX | WIM file to use as the source

Artifact – specify one artifact to inspect, otherwise all known artifacts are used

ArtifactParam – supply a parameter to the artifact inspector, e.g. for IIS you can specify a single website

OutputPath – location to store the generated Dockerfile and associated artifacts

You can also run in Verbose mode to have Image2Docker tell you what it finds, and how it’s building the Dockerfile.
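As a minimal sketch of how those parameters fit together, the call can be written with PowerShell splatting. The parameter names are the ones listed above; the paths are example values, and the guard around the call is only there so the snippet does nothing harmful on a machine where the module has not been imported.

```powershell
# Parameter names come from the list above; the paths are example values.
$convertParams = @{
    ImagePath  = 'C:\i2d2\win-2016-iis.vhd'   # source disk image (VHD, VHDX or WIM)
    Artifact   = 'IIS'                         # inspect only the IIS artifact
    OutputPath = 'C:\i2d2\iis'                 # where the Dockerfile is written
    Verbose    = $true                         # report what the tool finds
}

# Only call the cmdlet when the module is actually loaded.
if (Get-Command -Name ConvertTo-Dockerfile -ErrorAction SilentlyContinue) {
    ConvertTo-Dockerfile @convertParams
}
else {
    Write-Warning 'Image2Docker is not imported; run Import-Module Image2Docker first.'
}
```

Adding an ArtifactParam entry to the hashtable would narrow the extraction to a single website.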

Walkthrough – Extracting All IIS Websites

This is a Windows Server 2016 VM with five websites configured in IIS, all using different ports:

Image2Docker also supports Windows Server 2012, with support for 2008 and 2003 on its way. The websites on this VM are a mixture of technologies – ASP.NET WebForms, ASP.NET MVC, ASP.NET WebApi, together with a static HTML website.

I took a copy of the VHD, and ran Image2Docker to generate a Dockerfile for all the IIS websites:

ConvertTo-Dockerfile -ImagePath C:\i2d2\win-2016-iis.vhd -Artifact IIS -Verbose -OutputPath c:\i2d2\iis

In verbose mode there’s a whole lot of output, but here are some of the key lines – where Image2Docker has found IIS and ASP.NET, and is extracting website details:

VERBOSE: IIS service is present on the system

VERBOSE: ASP.NET is present on the system

VERBOSE: Finished discovering IIS artifact

VERBOSE: Generating Dockerfile based on discovered artifacts in

:C:\Users\elton\AppData\Local\Temp\865115-6dbb-40e8-b88a-c0142922d954-mount

VERBOSE: Generating result for IIS component

VERBOSE: Copying IIS configuration files

VERBOSE: Writing instruction to install IIS

VERBOSE: Writing instruction to install ASP.NET

VERBOSE: Copying website files from

C:\Users\elton\AppData\Local\Temp\865115-6dbb-40e8-b88a-c0142922d954-mount\websites\aspnet-mvc to

C:\i2d2\iis

VERBOSE: Writing instruction to copy files for aspnet-mvc site

VERBOSE: Writing instruction to create site aspnet-mvc

VERBOSE: Writing instruction to expose port for site aspnet-mvc

When it completes, the cmdlet generates a Dockerfile which turns that web server into a Docker image. The Dockerfile has instructions to install IIS and ASP.NET, copy in the website content, and create the sites in IIS.

Here’s a snippet of the Dockerfile – if you’re not familiar with Dockerfile syntax but you know some PowerShell, then it should be pretty clear what’s happening:

# Install Windows features for IIS

RUN Add-WindowsFeature Web-server, NET-Framework-45-ASPNET, Web-Asp-Net45

RUN Enable-WindowsOptionalFeature -Online -FeatureName IIS-ApplicationDevelopment,IIS-ASPNET45,IIS-BasicAuthentication…

# Set up website: aspnet-mvc

COPY aspnet-mvc /websites/aspnet-mvc

RUN New-Website -Name 'aspnet-mvc' -PhysicalPath "C:\websites\aspnet-mvc" -Port 8081 -Force

EXPOSE 8081

# Set up website: aspnet-webapi

COPY aspnet-webapi /websites/aspnet-webapi

RUN New-Website -Name 'aspnet-webapi' -PhysicalPath "C:\websites\aspnet-webapi" -Port 8082 -Force

EXPOSE 8082

You can build that Dockerfile into a Docker image, run a container from the image and you’ll have all five websites running in a Docker container on Windows. But that’s not the best use of Docker.

When you run applications in containers, each container should have a single responsibility – that makes it easier to deploy, manage, scale and upgrade your applications independently. Image2Docker supports that approach too.

Walkthrough – Extracting a Single IIS Website

The IIS artifact in Image2Docker uses the ArtifactParam flag to specify a single IIS website to extract into a Dockerfile. That gives us a much better way to extract a workload from a VM into a Docker Image:

ConvertTo-Dockerfile -ImagePath C:\i2d2\win-2016-iis.vhd -Artifact IIS -ArtifactParam aspnet-webforms -Verbose -OutputPath c:\i2d2\aspnet-webforms

That produces a much neater Dockerfile, with instructions to set up a single website:

# escape=`

FROM microsoft/windowsservercore

SHELL ["powershell", "-Command", "$ErrorActionPreference = 'Stop';"]

# Wait-Service is a tool from Microsoft for monitoring a Windows Service

ADD https://raw.githubusercontent.com/Microsoft/Virtualization-Documentation/live/windows-server-container-tools/Wait-Service/Wait-Service.ps1 /

# Install Windows features for IIS

RUN Add-WindowsFeature Web-server, NET-Framework-45-ASPNET, Web-Asp-Net45

RUN Enable-WindowsOptionalFeature -Online -FeatureName IIS-ApplicationDevelopment,IIS-ASPNET45,IIS-BasicAuthentication,IIS-CommonHttpFeatures,IIS-DefaultDocument,IIS-DirectoryBrowsing

# Set up website: aspnet-webforms

COPY aspnet-webforms /websites/aspnet-webforms

RUN New-Website -Name 'aspnet-webforms' -PhysicalPath "C:\websites\aspnet-webforms" -Port 8083 -Force

EXPOSE 8083

CMD /Wait-Service.ps1 -ServiceName W3SVC -AllowServiceRestart

Note – I2D2 checks which optional IIS features are installed on the VM and includes them all in the generated Dockerfile. You can use the Dockerfile as-is to build an image, or you can review it and remove any features your site doesn’t need, which may have been installed in the VM but aren’t used.

To build that Dockerfile into an image, run:

docker build -t i2d2/aspnet-webforms .

When the build completes, I can run a container to start my ASP.NET WebForms site. I know the site uses a non-standard port, but I don’t need to hunt through the app documentation to find out which one; it’s right there in the Dockerfile: EXPOSE 8083.

This command runs a container in the background, exposes the app port, and stores the ID of the container:

$id = docker run -d -p 8083:8083 i2d2/aspnet-webforms
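Because the port is declared in the Dockerfile, it can also be read by a script rather than copied by hand. The following is a minimal sketch: Get-ExposedPort is a hypothetical helper, not part of Image2Docker, and it only handles the simple one-port-per-line form of EXPOSE that the generated Dockerfiles in this post use.

```powershell
# Hypothetical helper (not part of Image2Docker): list the ports a Dockerfile exposes.
function Get-ExposedPort {
    param(
        [Parameter(Mandatory)]
        [string[]] $DockerfileLine
    )
    foreach ($line in $DockerfileLine) {
        # Match lines such as "EXPOSE 8083" and capture the port number.
        if ($line -match '^\s*EXPOSE\s+(\d+)') {
            [int] $Matches[1]
        }
    }
}

# Sample lines taken from the single-site Dockerfile shown earlier.
$sample = @(
    'COPY aspnet-webforms /websites/aspnet-webforms',
    'EXPOSE 8083',
    'CMD /Wait-Service.ps1 -ServiceName W3SVC -AllowServiceRestart'
)
$ports = @(Get-ExposedPort -DockerfileLine $sample)
$ports   # 8083
```

Against a real output folder, the same function could be fed with Get-Content, for example Get-ExposedPort -DockerfileLine (Get-Content .\Dockerfile).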

When the site starts, you’ll see in the container logs that the IIS Service (W3SVC) is running:

> docker logs $id

The Service 'W3SVC' is in the 'Running' state.

Now you can browse to the site running in IIS in the container, but because published ports on Windows containers don’t do loopback yet, if you’re on the machine running the Docker container, you need to use the container’s IP address:

$ip = docker inspect --format '{{ .NetworkSettings.Networks.nat.IPAddress }}' $id

start "http://$($ip):8083"

That will launch your browser and you’ll see your ASP.NET Web Forms application running in IIS, in Windows Server Core, in a Docker container:

Converting Each Website to Docker

You can extract all the websites from a VM into their own Dockerfiles and build images for them all, by following the same process – or scripting it – using the website name as the ArtifactParam:

$websites = @("aspnet-mvc", "aspnet-webapi", "aspnet-webforms", "static")

foreach ($website in $websites) {

    ConvertTo-Dockerfile -ImagePath C:\i2d2\win-2016-iis.vhd -Artifact IIS -ArtifactParam $website -Verbose -OutputPath "c:\i2d2\$website" -Force

    cd "c:\i2d2\$website"

    docker build -t "i2d2/$website" .

}

Note. The Force parameter tells Image2Docker to overwrite the contents of the output path, if the directory already exists.

If you run that script, you’ll see that from the second image onwards the docker build commands run much more quickly. That’s because of how Docker images are built from layers. Each Dockerfile starts with the same instructions to install IIS and ASP.NET, so once those instructions are built into image layers, the layers get cached and reused.

When the builds finish, I have four i2d2 Docker images:

> docker images

REPOSITORY                    TAG      IMAGE ID       CREATED              SIZE

i2d2/static                   latest   cd014b51da19   7 seconds ago        9.93 GB

i2d2/aspnet-webapi            latest   1215366cc47d   About a minute ago   9.94 GB

i2d2/aspnet-mvc               latest   0f886c27c93d   3 minutes ago        9.94 GB

i2d2/aspnet-webforms          latest   bd691e57a537   47 minutes ago       9.94 GB

microsoft/windowsservercore   latest   f49a4ea104f1   5 weeks ago          9.2 GB

Each of my images has a size of about 10GB but that’s the virtual image size, which doesn’t account for cached layers. The microsoft/windowsservercore image is 9.2GB, and the i2d2 images all share the layers which install IIS and ASP.NET (which you can see by checking the image with docker history).

The physical storage for all five images (four websites and the Windows base image) is actually around 10.5GB. The original VM was 14GB. If you split each website into its own VM, you’d be looking at over 50GB of storage, with disk files which take a long time to ship.
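To make the gap between virtual and physical size concrete, here is a small worked sum. It is only a sketch: the sizes are the ones printed by docker images above, and the 10.5GB physical figure is the approximate value quoted in the text rather than something the script measures.

```powershell
# Virtual sizes in GB, copied from the docker images listing above.
$virtualSizes = [ordered]@{
    'i2d2/static'                 = 9.93d
    'i2d2/aspnet-webapi'          = 9.94d
    'i2d2/aspnet-mvc'             = 9.94d
    'i2d2/aspnet-webforms'        = 9.94d
    'microsoft/windowsservercore' = 9.2d
}

# Sum as decimals to avoid floating-point rounding noise.
$virtualTotal = 0d
foreach ($size in $virtualSizes.Values) {
    $virtualTotal += $size
}

# Physical storage figure quoted in the text (approximate, not measured here).
$physicalTotal = 10.5d

"Sum of virtual sizes: $virtualTotal GB"
"Reported physical storage: $physicalTotal GB"
"Difference accounted for by shared layers: $($virtualTotal - $physicalTotal) GB"
```

The virtual sizes add up to 48.95GB, so almost all of that total is the same base and IIS layers counted once per image.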

The Benefits of Dockerized IIS Applications

With our Dockerized websites we get increased isolation with a much lower storage cost. But that’s not the main attraction – what we have here is a set of deployable packages that each encapsulate a single workload.

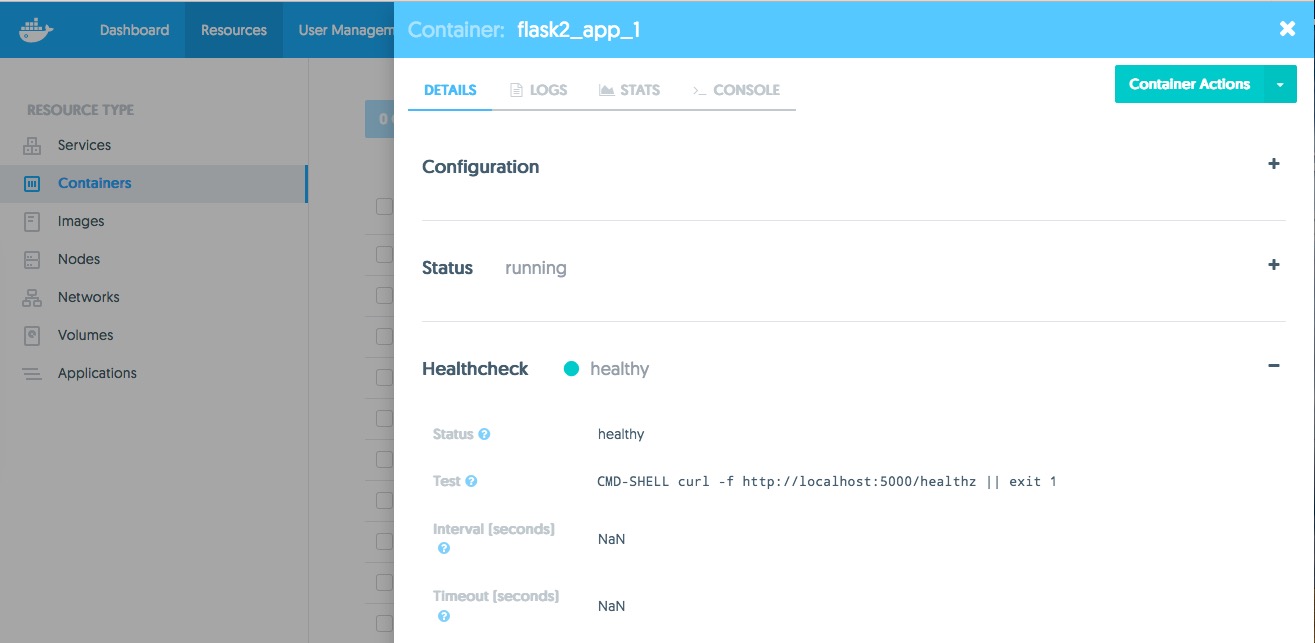

You can run a container on a Docker host from one of those images, and the website will start up and be ready to serve requests in seconds. You could have a Docker Swarm with several Windows hosts, and create a service from a website image which you can scale up or down across many nodes in seconds.

And you have different web applications which all have the same shape, so you can manage them in the same way. You can build new versions of the apps into images which you can store in a Windows registry, so you can run an instance of any version of any app. And when Docker Datacenter comes to Windows, you’ll be able to secure the management of those web applications and any other Dockerized apps with role-based access control, and content trust.

Next Steps

Image2Docker is a new tool with a lot of potential. So far the work has been focused on IIS and ASP.NET, and the current version does a good job of extracting websites from VM disks to Docker images. For many deployments, I2D2 will give you a working Dockerfile that you can use to build an image and start working with Docker on Windows straight away.

We’d love to get your feedback on the tool – submit an issue on GitHub if you find a problem, or if you have ideas for enhancements. And of course it’s open source so you can contribute too.

Additional Resources

Image2Docker: A New Tool For Prototyping Windows VM Conversions

Containerize Windows Workloads With Image2Docker

Run IIS + ASP.NET on Windows 10 with Docker

Awesome Docker – Where to Start on Windows

The post Convert ASP.NET Web Servers to Docker with Image2Docker appeared first on Docker Blog.

Source: https://blog.docker.com/feed/