Using Google’s cloud networking products: a guide to all the guides

Posted by Mike Truty, Cloud Solutions Architect

I’m a relative newcomer to Google Cloud Platform. After nine years working in Technical Infrastructure, I recently joined the team to work hand-in-hand with customers building out next-generation applications and services on the platform. In this role, I realized that my privileged understanding of how we build our systems can be hard to come by from outside the organization. That is, unless you know where to look.

I recently spent a bunch of time hopping around the Google Cloud Networking pages under the main GCP site, looking for materials that could help a customer better understand our approach.

What follows is a series of links for anyone who may want an introduction to Google Cloud Networking, presented in digestible pieces and ordered to build on previous content.

Getting started

First, for a quick 15-minute background, I recommend this Google Cloud Platform Overview. It’s a one-page survey of the concepts you need to work in Cloud Platform. Then, you may want to scan the related Cloud Platform Services doc, another one-pager that introduces the primary customer-facing services (including networking services) that you might need. It’s not obvious, but Cloud Platform networking also lays the foundation for the newer managed services mentioned there, including Google Container Engine (Kubernetes) and Cloud Dataflow. After all that, you’ll have a good idea of the landscape and be ready to actually do something in GCP!


Networking Codelabs

Google has an entire site devoted to Codelabs, my favorite way to learn nontrivial technical concepts. Within the Cloud Codelabs there are two really excellent networking Codelabs: Networking 101 and Networking 102. I recommend them highly for a few reasons: each one takes only about 90 minutes end-to-end; each is a quick survey of a few of the most commonly used features in cloud networking; both include really helpful hints about performance; and, most importantly, after completing them you’ll have a really good sandbox for experimenting in cloud networking on Google Cloud Platform.

Google Cloud Networking references

Another question you may have: what are the best Google Cloud Networking reference docs? The Google Cloud Networking feature docs are split between two main landing pages: the Cloud Networking Products page and the Compute Engine networking page. The products page introduces the main product feature areas: Cloud Virtual Network, Autoscaling and Load Balancing, Global DNS, Cloud Interconnect and Cloud CDN. Be sure to scroll down to the end, because there are some really valuable links to guides and resources at the very bottom of each page that a lot of people miss.

The Compute Engine networking page is a treasure trove of all kinds of interesting details that you won’t find anywhere else. It includes the picture I hold in my mind for how networks and subnetworks are related to regions and zones, details about quotas, default IP ranges, default routes, firewall rules, details about internal DNS, and some simple command line examples using gcloud.

An example of the kind of gem you’ll find on this page is a little blurb on measuring network throughput that links to PerfKitBenchmarker, an open-source benchmark tool for comparing cloud providers (more on that below). I return to this page frequently and find things explained that previously confused me.

For future reference, the Google Cloud Platform documentation also maintains a list of networking tutorials and solutions documents with some really interesting integration topics. And you should definitely check out Google Cloud Platform for AWS Professionals: Networking, an excellent, comprehensive digest of networking features.

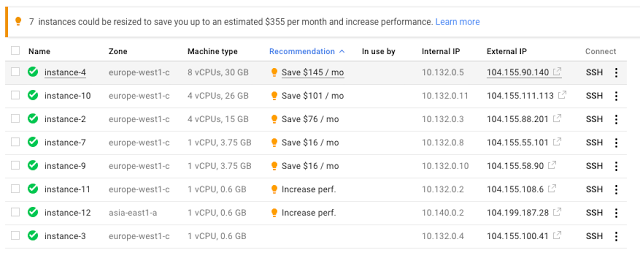

Price and performance

Before you do too much, you might want to get a sense of how much of your free quota it will cost you to run through more networking experiments. Get yourself acquainted with the Cloud Platform Pricing page as a reference (notice the “Free credits” link at the bottom of the page). Then, you can find the rest of what you need under Compute Engine Pricing. There, you can see rates for the standard machine types used in the Codelabs, and also a link to General network pricing. A little further down, you’ll find the IP address pricing numbers. Finally, you may find it useful to click through the link at the very bottom to the estimated billing charges invoice page for a summary of what you spent on the Codelabs.
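If you want a rough number before you start, a back-of-the-envelope estimate is easy to script. The sketch below is only an illustration: the function name, the VM count, the egress volume and both rates are placeholders I made up, not real GCP prices, so substitute the current figures from the pricing pages.

```python
def estimate_cost(vm_hours, vm_hourly_rate, egress_gb, egress_rate_per_gb):
    """Return an estimated charge in dollars for VM time plus network egress."""
    return vm_hours * vm_hourly_rate + egress_gb * egress_rate_per_gb


# Placeholder rates, NOT real GCP prices: look them up on the pricing pages.
PLACEHOLDER_VM_RATE = 0.05      # dollars per VM-hour (assumed)
PLACEHOLDER_EGRESS_RATE = 0.10  # dollars per GB of egress (assumed)

# Assumed workload: two 90-minute Codelabs with three VMs each,
# i.e. 2 * 1.5 hours * 3 VMs = 9 VM-hours, plus 5 GB of egress traffic.
total = estimate_cost(
    vm_hours=9,
    vm_hourly_rate=PLACEHOLDER_VM_RATE,
    egress_gb=5,
    egress_rate_per_gb=PLACEHOLDER_EGRESS_RATE,
)
print(f"Estimated cost: ${total:.2f}")
```

Comparing an estimate like this with the billing summary afterwards is a quick way to check that you understood the pricing pages.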

Once you’ve done that, you can start thinking about the simple performance and latency tests you completed in the Codelabs. There’s a very helpful discussion on egress throughput caps buried in the Networking and Firewalls doc, and you can run your own throughput experiments with PerfKitBenchmarker (sources). This tool does all the heavy lifting of spinning up instances and understands how different cloud providers define regions, which makes for relevant comparisons. Also, with PerfKitBenchmarker, someone else has already done the hard work of identifying the accepted benchmarks in various areas.

Real world use cases

Now that you understand the main concepts and features behind Google Cloud Networking, you might want to see how others put them all together. A common first question is how to set things up securely. Securely Connecting to VM Instances is a really good walkthrough that includes more overviews of key topics (firewalls, HTTPS/SSL, VPN, NAT, serial console), some useful gcloud examples and a nice picture that reflects the jumphost setup in the Codelab.

Next you should watch two excellent videos from GCP Next 2016: Seamlessly Migrating your Networks to GCP and Load Balancing, Autoscaling & Optimizing Your App Around the Globe. What I like about these videos is that they hit all the high points for how people talk about public cloud virtual networking, and offer examples of common approaches used by large early adopters.

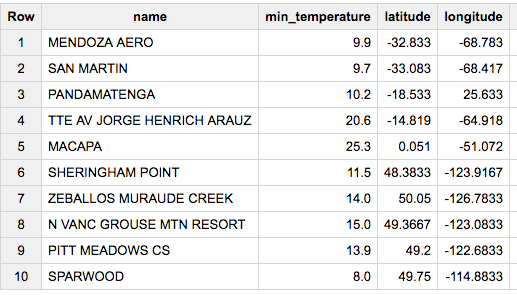

A common question about cloud networking technologies is how to distribute your services around the globe. The Regions and Zones document explains specifically where GCP resources reside, and Google’s research paper Software Defined Networking at Scale (more below) has pretty map-based pictures of Google’s Global CDN and inter-Datacenter WAN that I really like. This Google infrastructure page has zoomable maps that mark Google’s data centers around the world, and you can read here how Google uses its four undersea cables, with more ‘under’ the horizon, to connect them.

Finally, you may want to check out this sneaky-useful collection of articles discussing approaches to geographic management of data. I plan to go through the solutions referenced at the bottom of this page to get more good ideas on how to use multiple regions effectively.

Another thing that resonated with me from both GCP Next 2016 videos was the discussion about how easy it is to set up and manage services in GCP to serve from the closest, low-latency instances using a single global Anycast VIP. For more on this, the Load Balancing and Scaling concept doc offers a really nice overview of the topic. Then, for some initial exploration of load balancing, check out Setting Up Network Load Balancing.
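To make the "serve from the closest instance" idea concrete, here is a toy sketch of the selection step: given a latency per region and a set of healthy regions, pick the healthy one with the lowest latency. This is purely an illustration of the concept, not how Google's load balancer is implemented; the function name and the latency numbers are made up for the example.

```python
def pick_closest_region(latencies_ms, healthy):
    """Return the healthy region with the lowest latency, or None if none is healthy."""
    candidates = {region: ms for region, ms in latencies_ms.items() if region in healthy}
    if not candidates:
        return None
    return min(candidates, key=candidates.get)


# Made-up latencies from one hypothetical client to three regions.
latencies = {"us-central1": 120.0, "europe-west1": 18.0, "asia-east1": 250.0}

# With everything healthy, the client lands on the lowest-latency region.
print(pick_closest_region(latencies, {"us-central1", "europe-west1", "asia-east1"}))

# If that region becomes unhealthy, traffic falls back to the next closest one.
print(pick_closest_region(latencies, {"us-central1", "asia-east1"}))
```

With a single global VIP, the client never has to make this choice itself: it always connects to the same address, and the decision happens inside the network.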

And in case you were wondering from exactly where Google peers and serves CDN content, visit the Google Edge Network/Peering site and PeeringDB for more details. The peering infrastructure page has zoomable maps where you can see Google’s Edge PoPs and nodes.

Best practices

There’s also a wealth of documents about best practices for Google Cloud Networking. I really like the Best Practices for Networking and Security within the Best Practices for Enterprise Organizations document, and the DDoS Best Practices doc provides more useful ways to think about building a global service.

Another key concept to wrap your head around is Cloud Identity & Access Management (IAM). In particular, check out the Understanding Roles doc for its introduction to network- and security-specific roles. Service accounts play a key role here. Understanding Service Accounts walks you through the considerations, and Using IAM Securely offers some best practices checklists. Also, for some insight into where this all leads, check out Access Control for Organizations using IAM [Beta].

A little history of Google Cloud Networking

All this research about Google Cloud Networking may leave you wanting to know more about its history. I checked out the research papers referenced in the previously mentioned video Seamlessly Migrating your Networks to GCP and — warning — they’re deep, but they’ll help you understand the fundamentals of how Google Cloud Networking has evolved over the past decade, and how its highly distributed services deliver the performance and competitive pricing for which it’s known.

Google’s network-related research papers fall into two categories:

Cloud Networking fundamentals

Enter the Andromeda zone – Google Cloud Platform’s latest networking stack, a 2014 blog post that details the fundamentals of network virtualization.

Jupiter Rising: A Decade of Clos Topologies and Centralized Control in Google’s Datacenter Network. This 2015 paper provides an excellent description of the evolution of datacenter networking at Google. It even comes with a video.

Maglev: A Fast and Reliable Software Network Load Balancer, a 2016 paper that presents an overview of distributed load balancing.

Networking background

A Guided Tour of Data-Center Networking. This 2012 article provides a high-level system overview.

B4: Experience with a Globally Deployed Software Defined WAN. Read this 2013 paper for a detailed look at Google’s quest for a simpler and more efficient WAN.

Software Defined Networking at Scale, slides from 2014 about SDN models.

A look inside Google’s Data Center Networks. “Jupiter fabrics…can deliver more than 1 Petabit/sec…enough for 100,000 servers to exchange information at 10Gb/s each, enough to read the entire…Library of Congress in less than 1/10th of a second.”
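The headline number in that last quote is easy to sanity-check. A minimal sketch of the arithmetic, using decimal units (1 petabit = 1,000,000 gigabits) and only the two figures from the quote:

```python
SERVERS = 100_000                  # servers, from the quote
PER_SERVER_GBPS = 10               # Gb/s per server, from the quote
GIGABITS_PER_PETABIT = 1_000_000   # decimal (SI) units

total_gbps = SERVERS * PER_SERVER_GBPS            # 1,000,000 Gb/s
total_pbps = total_gbps / GIGABITS_PER_PETABIT    # 1.0 Pb/s

print(f"{total_gbps:,} Gb/s = {total_pbps} Pb/s")
```

So 100,000 servers at 10 Gb/s each works out to exactly 1 Petabit/sec, which matches the quoted figure.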

The Andromeda network architecture (source)

I hope this post is useful, and that these resources help you better understand the ins and outs of Google Cloud Networking. If you have any other good resources, be sure to share them in the comments.

Source: Google Cloud Platform