How GKE surge upgrades improve operational efficiency

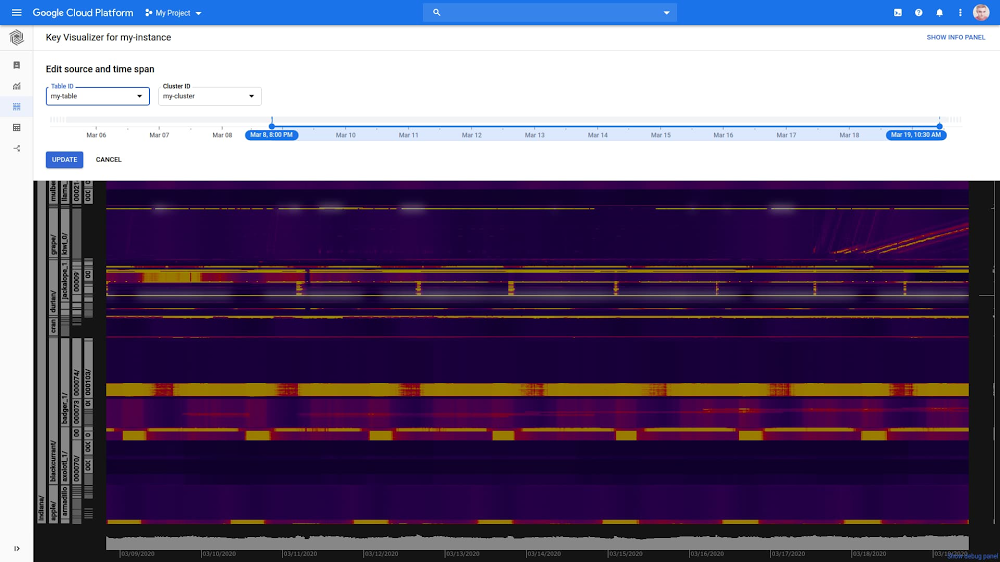

A big part of keeping a Kubernetes environment healthy is performing regular upgrades. At Google Cloud, we automatically upgrade the cluster control plane for Google Kubernetes Engine (GKE) users, but you’re responsible for upgrading the cluster’s individual nodes, as well as any additional software installed on the nodes. And while you can choose to enable node auto-upgrade to perform these updates behind the scenes, we recently introduced a ‘surge nodes upgrade’ feature that gives you fine-grained control over the upgrade process, to minimize the risk of disruption to your GKE environment, as well as to expedite the upgrade process. This is particularly important at a time when external forces are pushing many organizations to transition to a digital-only business model, where availability is key for business continuity. Surge upgrade reduces disruption to existing workloads while keeping clusters up-to-date with the latest version, security patches, and bug fixes.The importance of node upgradesNodes are where your Kubernetes workloads run. Open-source Kubernetes releases a new minor version approximately every three months, and patches more frequently. GKE follows this same release schedule, providing regular security patches and bug fixes, so you can reduce your exposure to security vulnerabilities, bugs, and version skew between control plane and nodes.Enabling node auto-upgrade is a popular choice for performing this important task. The node pool upgrade process recreates every VM in the node pool with a new (upgraded) VM image in a rolling update fashion. To do so, it shuts down all the pods running on the given node. And while most customers run workloads with sufficient redundancy and Kubernetes helps with the process of moving and restarting pods, in practice, the temporarily reduced number of replicas may not be sufficient to serve all your traffic, resulting in production incidents.Simply enabling node auto-upgrade isn’t enough for some GKE users. FACEIT provides an independent online competitive gaming platform that lets players create communities and compete in tournaments, leagues, and matches. With over a million monthly active users, FACEIT relies on GKE, benefitting from the platform’s agility and simple and automated scalability. But to eliminate the chance of downtime, FACEIT wasn’t using the node auto-upgrade feature. Instead, it used the following manual process:Create a new identical node pool, running on the new versionCordon off the nodes in the old node poolStart evicting pods by draining the nodes, causing Kubernetes to reschedule the pods on nodes in the new node poolFinally, remove the old node pool once all the nodes were drainedWith this manual process, FACEIT was able to balance upgrade speed and avoid disruptions. Introducing surge upgradesTo help ensure that all node upgrades complete successfully and in a timely fashion, we’re excited to offer surge upgrades for GKE. Surge upgrades reduces the potential for disruption by starting up new nodes before it drains the old ones, and supports upgrading multiple nodes concurrently. Upgrades are only initiated after all the required resources (VMs) are secured, ensuring that surge upgrades can complete successfully. Surge upgrades will be enabled by default on April 20, 2020, and we will also migrate existing node pools later in the quarter.The new surge upgrades feature helps to reduce workload disruption in two key ways.1. No decreased capacity during node upgradesThanks to surge upgrades, a node pool cannot transition into a state where it has less capacity than it had at the start of the upgrade process (assuming maxUnavailable is set to 0).In contrast, without surge upgrades, the node upgrade happens by recreating the node. This means there is a period during the upgrade process when the node is not available to the cluster. If there is sufficient redundancy in the cluster, this in itself may not cause any disruption to the workloads. However, any other failure with the workloads or with the infrastructure—for example, an unrelated node failure—may result in disruption.2. No evicted pod will remain unscheduled due to a lack of capacityThis feature of surge upgrades is a consequence of the above. Since there is equivalent additional capacity available (i.e., the surge node), it is always possible to schedule the evicted pod.As mentioned before, node upgrades happen by recreating Compute Engine instances with a new instance template, then evicting the pods and rescheduling them—assuming there’s the capacity to schedule them. Whenever one or more pods remain unscheduled, that means one or more workloads are running a lower number of replicas than desired. This may impact workload health (i.e., the system may not be able to tolerate the loss of the replica). Regardless, to ensure high availability, the time a workload is running with a reduced number of replicas should be shortened.The lack of surge nodes during an upgrade does not necessarily result in pods becoming unschedulable; If the node pool has sufficient available capacity, it will use it. In the picture below, the left node pool has enough capacity to be able to reschedule all the evicted pods immediately when the first node is drained. The right node pool has some available capacity, but not enough, so only two of the three evicted pods can be rescheduled immediately; Pod3 will need to wait for the node upgrade to complete, then it will be scheduled again.Let’s see how the presence of a surge node changes the situation. In the case of the less utilized node pool, the surge node does not help with pod scheduling, since the pods already had enough capacity. But in the case of the more utilized node pool, the extra capacity is necessary to be able to schedule the evicted pods right away.It’s worth noting that surge capacity can be useful for more than just upgrades; it can also be ‘spent’ on other demands like scaling up an application faster in case of a spike in load during the upgrade. Control how you upgrade, not just whenGKE’s node auto-upgrade feature helps administrators ensure that their environment stays up-to-date with the latest patches and updates. Now, with surge upgrades, you can know those upgrades will occur successfully, and without impacting production workloads. Early adopters like FACEIT report that this enhanced upgrade process is not only more reliable, but that it’s also faster, as it allows concurrent node upgrades. “Before surge upgrades, an upgrade of one environment required around seven hours to complete, multiplied by the number of environments. We used to spend roughly two weeks upgrading all of FACEIT’s environments,” said Emanuele Massara, VP Engineering, FACEIT. “With surge upgrades, the entire process takes less than a day, freeing up the team to focus on other tasks.” FACEIT has since turned down its manual upgrade process.Using surge upgrades in conjunction with correctly configured PDB (Pod Disruption Budget) can also help ensure the availability of applications during the upgrade process, said Bradley Wilson-Hunt, DevOps & Service Delivery Manager. “Without a PDB in place, Kubernetes can reschedule the pods of a deployment without waiting for the new pod to be ready, which could lead to a service disruption.”To learn more about using surge upgrades, read these guidelines on how to determine the parameters to configure your upgrades. You can also try surge upgrades yourself, using a demo application that follows this tutorial.

Quelle: Google Cloud Platform