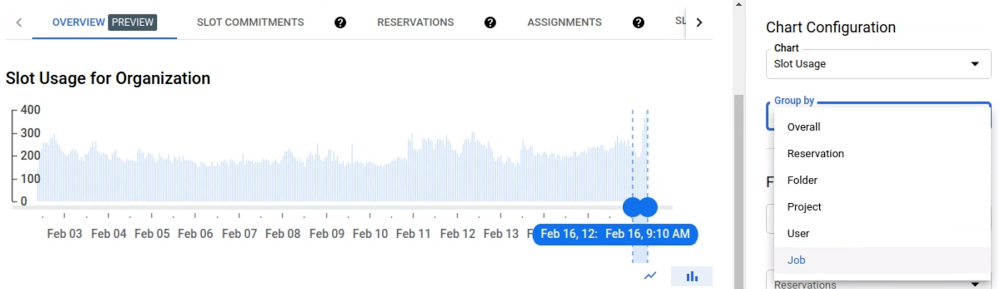

Telecommunications companies sit on a goldmine of data they can use to drive new business opportunities, improve customer experiences, and increase efficiencies. There is so much data, in fact, that a significant challenge lies in ingesting, processing, refining, and using it efficiently enough to inform decision-making as quickly as possible, often in near real time.

According to a new study by Analysys Mason, telecommunications data volumes are growing worldwide at a 20% CAGR, and network data traffic is expected to reach 13 zettabytes by 2025. To stay relevant as the industry evolves, communications service providers (CSPs) need to manage and monetize their data more effectively to:

- Deliver new user experiences and B2B2X services, with the “X” being customers and entities in previously untapped industries, and unlock new revenue streams.
- Transform operations by harnessing data, automation, and artificial intelligence (AI)/machine learning (ML) to drive new efficiencies, improved network performance, and decreased CAPEX/OPEX across the organization.

Here are four key data management and analytics challenges CSPs face, and how cloud solutions can help.

1. Reimagining the user experience means CSPs need to solve for near-real-time data analytics challenges.

Consider being able to suggest offers to customers at the right place and time, based on their interactions. Or imagine being able to maximize revenue by dynamically adjusting offers to macro and micro groups based on trends you discover during a campaign. These types of programs, which reduce churn and increase up-sell and cross-sell, become possible when you can correlate your data across systems and get actionable insights in near real time.

Now, when it comes to effective decision-making in near real time, speed is critical.
Low latency is required for use cases like delivering location-based offers while customers are still on-site, or detecting fraud fast enough during a transaction to minimize losses.

Cloud vendors can offer the speed and scale to handle the streaming data required for near-real-time processing. At Google, we understand these requirements because they are core to our business, and we’ve developed the technologies to meet them at scale. Google Cloud’s BigQuery, for example, is a serverless and highly scalable cloud data warehouse that supports streaming ingestion and fast queries at petabyte scale. The Google infrastructure technologies that underpin BigQuery, such as Dremel, Colossus, Jupiter, and Borg, were developed to address Google’s global data scalability challenges. Google Cloud’s full stream analytics solution is built on Pub/Sub and Dataflow, and supports the ingestion, processing, and analysis of fluctuating volumes of data for near-real-time business insights. CSPs can also take advantage of Google Cloud Anthos, which offers the ability to place workloads closer to the customer, whether within an operator’s own data center, across clouds, or even at the edge, enabling the speed required for latency-sensitive use cases.

What’s more, according to Justin van der Lande, principal analyst at Analysys Mason, “real-time use cases require an action to take place based on changes in streaming data, which predicts or signifies a fresh action.” They also require constant model validation and optimization. Using ML tools like TensorFlow in the cloud can therefore help improve models and prevent them from degrading. Cloud-based services also let CSP developers build, deploy, and train ML models through APIs or a management platform, so models can be deployed quickly with the appropriate validation, testing, and governance. Google Cloud AutoML enables users with limited ML expertise to train high-quality models specific to their business needs.

2. Driving CSP operational efficiencies requires streamlining fragmented and complex sets of tools.

Over time, many CSPs have built up highly fragmented and complex sets of software tools, platforms, and integrations for data management and analysis. Years of M&A activity mean that different departments or operating companies may have their own tools, which adds to the complexity of procuring and maintaining them and can also limit an operator’s ability to make changes and roll out new functionality quickly.

Cloud providers offer CSPs access to advanced data and analytics tools with rich capabilities that are continuously updated. Google Cloud, for instance, offers Looker, which enables organizations to connect, analyze, and visualize data across Google Cloud, Azure, AWS, or on-premises databases, and is ideal for streaming applications. In addition, hyperscale cloud vendors work with a wide ecosystem of technology partners, enabling operators to adopt more standardized data tools that support a wider variety of use cases and are more open to new requirements.

For example, Google Cloud partnered with Amdocs to help CSPs consolidate, organize, and manage data more effectively in the cloud to lower costs, improve customer experiences, and drive new business opportunities. Amdocs DataONE extracts, transforms, and organizes data using a telco-specific and TM Forum-compliant Amdocs Logical Data Model. The solution runs on Google Cloud SQL, a fully managed and scalable relational database solution that allows you to more efficiently organize your operational and analytical data and improve its accessibility, availability, and visibility. The Amdocs data solution can also integrate with BigQuery to take advantage of built-in ML. Finally, Amdocs Cloud Services offers a practice to help CSPs migrate, manage, and organize their data so they can extract the strategic insights needed to maximize business value.

3. Leveraging cloud and automation can help CSPs reduce cost and overhead as data volumes continue to rise.

One of the most powerful motivations for CSPs to adopt a cloud-based data infrastructure may be the prospect of lowering operational and capital costs. Analysys Mason predicts that IT and software capital spending for CSPs will approach $45 billion by 2025, and IT operational expenses will be more than double that amount. These costs are set to rise as operators support new digital services and growing data volumes.

With cloud services, you pay for the capacity you use, not the servers you own. This saves on infrastructure-related capital costs and takes advantage of the efficiencies cloud computing achieves through scale. It also means that all maintenance and updates are built into a predictable monthly bill.

Additionally, CSPs experience daily and annual demand peaks and valleys, driven by busy internet traffic hours and high-audience events like the Super Bowl. Building infrastructure to accommodate these peaks, however, wastes resources and reduces your return on capital. Customer demand may also fluctuate beyond these expected cycles, and large workloads like big data queries or ad hoc analytics and reports make it even harder to predict your capacity needs. Cloud computing offers fast scaling up and down, including autoscaling, which isn’t always easy to achieve with on-premises systems.

4. Increasing customer lifetime value requires high-quality and complete data for timely decision-making.

Finally, CSPs need to use data and analytics to better understand how to engage with customers and deliver better, more personalized services in order to increase overall customer lifetime value. This requires the ability to analyze and act on a complete set of quality data quickly enough to inform sound decision-making.
For example, without high-quality and timely data on your most valued customers, you may not be able to spot customers who are about to churn. Conversely, you may offer discounts to customers who were not about to churn in the first place.

According to van der Lande, there are five main attributes required of a good data set: data quality, governance, speed, completeness, and shareability (see Chart 1). Put another way, your data is only as good as how fast you can capture, transform, and load it from a myriad of back-end systems, front-end systems, and networks, how complete it is, and how easily you can share a 360° view with the right decision-makers. It is also important to consider how well that data is governed. Considerations such as data lineage, data source, categorization of PII data, and regulatory requirements are very important as you look to build trust in the data quality and, ultimately, the insights. What’s more, as data volumes grow, it becomes more difficult to ensure quality, governance, and completeness.

Chart 1: The main CSP challenges related to data (Source: Analysys Mason)

Operators can create a single operational data store in the cloud and use ML-driven preparation tools to improve data quality and completeness. Cloud vendors can also provide enterprise-grade security tools with the ability to manage access rights, as well as automated administration to ensure proper governance. The cloud supports near-real-time, end-to-end streaming pipelines for big data analytics that would otherwise quickly strain in-house systems.
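
To make the completeness and speed attributes above more concrete, here is a minimal Python sketch of a check that decides whether a customer record is complete and fresh enough to act on. It is an illustration only, not something taken from the Analysys Mason study or from any Google Cloud or Amdocs product: the field names, the `updated_at` timestamp, the five-minute freshness window, and the helper names are all hypothetical assumptions.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical required fields for a customer record (assumed for illustration).
REQUIRED_FIELDS = ("customer_id", "plan", "monthly_spend", "last_interaction")


def completeness(record):
    """Return the fraction of required fields that are present and non-empty."""
    filled = sum(1 for f in REQUIRED_FIELDS if record.get(f) not in (None, ""))
    return filled / len(REQUIRED_FIELDS)


def is_fresh(record, now, max_age):
    """Return True if the record's 'updated_at' timestamp is within max_age of now."""
    updated = record.get("updated_at")
    return updated is not None and now - updated <= max_age


def usable_for_decisions(record, now, max_age=timedelta(minutes=5),
                         min_completeness=1.0):
    """A record is usable only if it is both complete enough and fresh enough."""
    return completeness(record) >= min_completeness and is_fresh(record, now, max_age)


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    record = {
        "customer_id": "c-001",
        "plan": "5G-unlimited",
        "monthly_spend": 40.0,
        "last_interaction": "store_visit",
        "updated_at": now - timedelta(minutes=2),
    }
    print(completeness(record), usable_for_decisions(record, now))
```

In practice the thresholds would depend on the use case: a location-based offer might tolerate only minutes of staleness, while a churn model could plausibly work with older but more complete records.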
In addition, solutions like Google Cloud’s BigQuery Omni powered by Anthos give CSPs a consistent data analysis and infrastructure management experience, regardless of their deployment environment.

The telecommunications industry has a unique opportunity to mine the massive amount of data its systems generate to improve customer experiences, operate more efficiently, create innovative new products, and uncover use cases to generate new revenue opportunities faster. But as long as CSPs rely on rigid on-premises infrastructure, they’re unlikely to capitalize on this valuable resource. In a world where near-real-time decision-making is more critical than ever, the cloud can help provide the agility, scale, and flexibility necessary to process and analyze this growing volume of data to remain not just relevant, but competitive.

Download the complete Analysys Mason whitepaper, co-sponsored with Amdocs and Intel, to learn more.

Source: Google Cloud Platform