A practitioner’s view on how Docker enables security by default and makes developers work better

This blog post was written by Docker Captains, experienced professionals recognized for their expertise with Docker. It shares their firsthand, real-world experiences using Docker in their own work or within the organizations they lead. Docker Captains are technical experts and passionate community builders who drive Docker’s ecosystem forward. As active contributors and advocates, they share Docker knowledge and help shape Docker products. To learn how to become a Docker Captain, or to contact one, visit the Docker Captains’ website.

Security has long been a primary concern for organizations of every kind, and it has remained one through every era of technology. First we had mainframes, then servers, then the cloud, each with its public and private variations. With each evolution, security concerns grew and compliance became harder.

Once we advanced into the world of distributed systems, security teams had to deal with the faster evolution of the environment. New programming languages, new libraries, new packages, new images, new everything.

To handle security correctly, security engineers need a strong, well-designed security architecture that does not get in the way of Developer Experience. And that’s where Docker comes in!

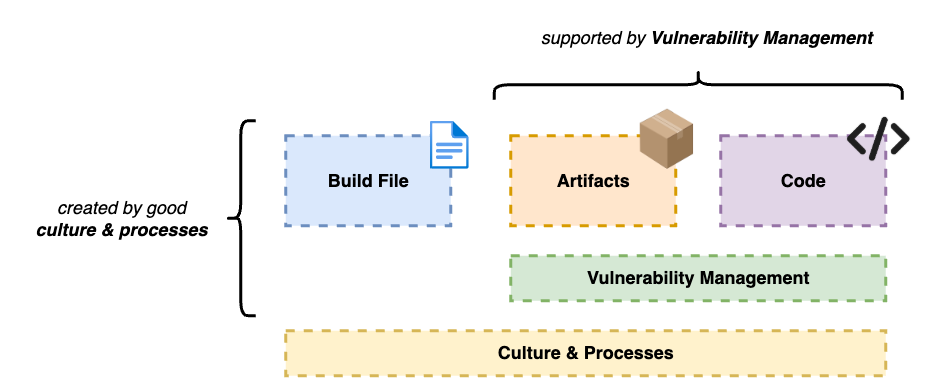

Container Security Basics

Container security covers a wide range of different topics. The field is so broad that there are entire books written exclusively about this subject. But when entering an enterprise environment, we can narrow it down to a few specific topics that need to be prioritized:

Artifacts

Code

Build file (e.g. Dockerfile) creation

Vulnerability management

Culture/Processes

Let’s get a little more in depth with those topics.

Artifacts

The first step to a secure environment is having trustworthy resources available for your engineers.

To reduce friction between security teams and developers, security engineers have to make secure resources available, so developers can just pull their images, libraries and other dependencies and start using them in their systems.

Docker Hardened Images (which we’ll discuss a couple of sections later in this article) can help you with that.

In enterprise environments, we usually see a centralized repository for approved artifacts. This helps teams manage resources and the components used in their environments, while also helping developers know where to look when they want something.
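As a minimal sketch of how a pipeline could enforce the centralized repository described above, the check below extracts the registry from an image reference and compares it against an allowlist. The registry name `registry.example.com` and the function names are hypothetical; the parsing follows Docker's convention that a reference without an explicit registry host resolves to Docker Hub.

```python
# Hypothetical allowlist; replace with your organization's approved registries.
APPROVED_REGISTRIES = {"registry.example.com"}


def registry_of(image_ref: str) -> str:
    """Return the registry host of an image reference.

    Docker treats the first path component as a registry only if it
    contains a '.' or ':' or equals 'localhost'; otherwise the image
    is resolved against Docker Hub (docker.io).
    """
    first, sep, _rest = image_ref.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return "docker.io"


def is_approved(image_ref: str, approved=APPROVED_REGISTRIES) -> bool:
    """True if the image would be pulled from an approved registry."""
    return registry_of(image_ref) in approved


if __name__ == "__main__":
    for ref in ("registry.example.com/team/app:1.0", "nginx:1.27"):
        print(ref, "->", "approved" if is_approved(ref) else "not approved")
```

A check like this can run against every `FROM` line or deployment manifest, so developers get immediate feedback when an image comes from outside the approved repository.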

Code

Everything really starts with the code that’s written. Having problematic code pushed into production might not seem bad at first, but in the long run it will cause you a lot of trouble.

In security, every surface has to be considered. We can create the most secure build file in the world, have the most robust process for managing assets, have great IAM (Identity and Access Management) workflows, but we are exposed if our code isn’t well written.

Beyond relying only on the developer’s expertise, we need to create guardrails that identify and mitigate problems as they are found. This adds a second layer of protection over all the work that’s done. Having tools in place can catch mistakes developers might not see at first.

Having well trained developers and the right controls in the CI/CD pipelines our code goes through allows us to rest easy at night knowing we’re not sending bad code into production.

A couple of controls that can be applied to the pipelines:

SCA (Software Composition Analysis)

SAST/DAST/IAST

Secret Scanning

Dependency Scanning
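To make one of these controls concrete, here is a minimal sketch of secret scanning. The three patterns are illustrative assumptions, not a complete rule set; dedicated scanners ship far more rules and reduce false positives in ways this example does not attempt.

```python
import re

# Illustrative patterns only; real scanners use much larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
    "hardcoded_credential": re.compile(
        r"(?i)(?:password|passwd|secret|api_key|token)\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line matching a pattern."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, rule))
    return findings


if __name__ == "__main__":
    sample = 'user = "alice"\npassword = "correct-horse-battery"\n'
    for lineno, rule in scan_text(sample):
        print(f"line {lineno}: {rule}")
```

In a pipeline, a non-empty result would typically fail the job before the commit ever reaches a shared branch.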

Build file

At the beginning of the SDLC (Software Development Life Cycle), our engineers have to create a build file (usually a Dockerfile) that downloads their application’s dependencies and packages the application as a container image.

Creating a build file is easy, as it’s just a sequence of steps. You download something (e.g. a package or a library), install it, create a folder or a file, then download the next component, install it, and so on until all the steps have been completed. But even though the default values and settings usually get the job done, they don’t have all the security guardrails and best practices applied by default. Because of that, you need to be careful with what’s being pushed into production.

While coding a build file, it’s crucial to ensure:

That there aren’t any secrets hard coded in it;

That the container is not configured to run as root, which could allow an attacker to escalate their privileges and gain access to the host;

That there aren’t any sensitive files copied to your container (like certificates and credentials).

Taking these steps at the beginning and starting strong helps keep the rest of the SDLC minimally exposed.
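The three checks above can be sketched as a small Dockerfile linter. This is a simplified illustration under stated assumptions: the rule names, the secret-name pattern and the list of sensitive file names are hypothetical, and line continuations and the JSON form of `COPY`/`ADD` are not parsed.

```python
import posixpath
import re

# Illustrative rule data; tune it to your own policy.
SECRET_NAME = re.compile(r"(?i)(password|passwd|secret|token|api_?key)")
SENSITIVE_SUFFIXES = (".pem", ".key", ".p12", ".pfx")
SENSITIVE_NAMES = {".env", "id_rsa", "credentials"}


def lint_dockerfile(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule) findings for a Dockerfile's text."""
    findings = []
    user, user_line = None, 0
    for lineno, raw in enumerate(text.splitlines(), start=1):
        parts = raw.strip().split(None, 1)
        if not parts or parts[0].startswith("#"):
            continue  # skip blank lines and comments
        instruction = parts[0].upper()
        args = parts[1] if len(parts) > 1 else ""
        if instruction == "FROM":
            # A new stage starts with whatever user the base image defines.
            user, user_line = None, lineno
        elif instruction == "USER":
            user, user_line = args.strip(), lineno
        elif instruction in ("ENV", "ARG"):
            for token in args.split():
                name, sep, value = token.partition("=")
                if sep and value and SECRET_NAME.search(name):
                    findings.append((lineno, "hardcoded-secret"))
        elif instruction in ("COPY", "ADD"):
            paths = [t for t in args.split() if not t.startswith("--")]
            for source in paths[:-1]:  # the last path is the destination
                base = posixpath.basename(source)
                if base in SENSITIVE_NAMES or base.endswith(SENSITIVE_SUFFIXES):
                    findings.append((lineno, "sensitive-file"))
    # Without a USER in the final stage, the base image's default applies,
    # which is often root; the linter cannot inspect the base image itself.
    if user is None or user.split(":")[0] in ("root", "0"):
        findings.append((user_line, "no-non-root-user"))
    return findings
```

Because the linter only reads the Dockerfile, it flags a missing `USER` instruction even when the base image already defines a non-root user; treat that finding as a prompt to verify rather than a verdict.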

Vulnerability management

Now we start to move away from the code and the artifacts our engineers deliver.

Vulnerabilities can be found everywhere: in technologies, in processes, in everything. We need good vulnerability management to keep the engine running.

Companies need well-established processes to identify vulnerabilities as they appear, fix them and, when necessary, accept them. Usually we have frameworks developed internally to decide whether a risk is worth taking or should be fixed before moving on.

Those vulnerabilities can be new or already known. They can be in libraries used in the code, in container images used in our systems and in versions of solutions used in our environment.

They are everywhere! Be sure to identify them, keep a record of them and fix them when needed.
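To illustrate what such an internal framework might look like, here is a deliberately small triage sketch. The policy itself (which severities block a release, and how exposure and fix availability are weighed) is a hypothetical assumption for illustration; every organization defines its own rules.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    FIX_NOW = "fix or mitigate before release"
    SCHEDULE_FIX = "schedule a fix"
    ACCEPT = "accept and record the risk"


@dataclass(frozen=True)
class Finding:
    identifier: str        # e.g. a CVE ID taken from a scanner report
    severity: str          # "critical", "high", "medium" or "low"
    fix_available: bool
    internet_facing: bool


def triage(finding: Finding) -> Decision:
    """Apply a hypothetical policy; real frameworks weigh more factors."""
    if finding.severity in ("critical", "high"):
        # Assumed rule: serious issues block the release when a fix exists
        # or when the affected service is exposed to the internet.
        if finding.fix_available or finding.internet_facing:
            return Decision.FIX_NOW
        return Decision.SCHEDULE_FIX
    if finding.fix_available:
        return Decision.SCHEDULE_FIX
    return Decision.ACCEPT
```

Whatever the rules are, encoding them keeps decisions consistent and leaves a record of why a given risk was accepted.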

Culture/Processes

Technology is not the only risk to enterprise security. Poorly trained engineers and bad processes also represent a real threat to a company’s security structure.

A flaw in a process might result in the wrong code being pushed into production, or in the wrong version of a container image being used in a system.

If we consider how people, processes and technology are related, we might understand why a problem in the vulnerability assessment of a library might cause an entire cluster to be compromised. Or why a role that was wrongly assigned to a user presents a serious risk to the integrity of an entire cloud environment.

These are exaggerated examples, but they serve to show us that in tech, everything is connected, even if we don’t see it.

That’s why processes are so important. Solid processes mean we are focused on set outcomes instead of pleasing stakeholders. It’s important to take feedback into consideration and to make adjustments as we move forward, but we need to ensure these processes are followed, even when there isn’t unanimous agreement.

To have successful processes established, we have to:

Design guardrails

Implement steps

Train teams

Repeat

That’s the only way to enable teams effectively!

How Docker protects engineers and companies

Docker has been an ally of software engineers and security teams for a while now, not only by enabling the success of distributed systems, but also by improving how developers write and containerize their applications.

As the Docker platform evolved, security became its number one priority, just as it is for its customers.

Today, developers have access to different Docker security solutions in different parts of the platform.

Docker Scout

Docker Scout is a service created by Docker to analyze container images and their layers for known vulnerabilities. It checks against publicly known CVEs and provides the user with information regarding vulnerabilities in their images. To also help with mitigation, Docker Scout provides the user with a “fixable” value, indicating whether a vulnerability can be fixed.

This is very useful in a corporate environment because it lets security teams recognize the risks an image brings to the organization and decide whether that amount of risk can be taken or not.

We all love the CLI, but sometimes having a GUI (Graphical User Interface) might help. Docker knows what developers like, and for that reason, Scout is available in both. Your developers can use it to scan their images and see a quick summary in their terminal, or they can enjoy the features provided by Docker Desktop and see a complete report with links and explanations of the vulnerabilities found in their images.

Docker Scout terminal report

Docker Scout Desktop report

With those reports, users can make smarter choices when adopting different libraries and packages into their applications and can also work closely with the security teams to get faster feedback on whether a technology is safe to use or not.
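For teams that want the terminal scan in an automated step, the sketch below builds and runs a `docker scout cves` command from Python. The wrapper functions and the image name used in the example are hypothetical; the flags (`--only-severity`, `--only-fixed`, `--exit-code`) follow the Docker Scout CLI reference as we understand it, so confirm them with `docker scout cves --help` for your installed version.

```python
import subprocess


def scout_cves_command(image, severities=("critical", "high"),
                       only_fixed=True, fail_on_findings=True):
    """Build the argument list for a `docker scout cves` scan."""
    cmd = ["docker", "scout", "cves"]
    if severities:
        cmd += ["--only-severity", ",".join(severities)]
    if only_fixed:
        # Report only vulnerabilities that already have a fixed version.
        cmd.append("--only-fixed")
    if fail_on_findings:
        # Ask the CLI for a non-zero exit code when vulnerabilities are found.
        cmd.append("--exit-code")
    cmd.append(image)
    return cmd


def scan(image: str) -> bool:
    """Run the scan; True means the command exited with status 0.

    A non-zero status can also mean the scan itself failed (for example,
    Docker is not running), so inspect the output before drawing conclusions.
    """
    result = subprocess.run(scout_cves_command(image), check=False)
    return result.returncode == 0


if __name__ == "__main__":
    # "myorg/app:1.0" is a placeholder image name.
    print(" ".join(scout_cves_command("myorg/app:1.0")))
```

Filtering on fixable, high-severity findings keeps the gate actionable: developers are only blocked by issues they can resolve with an upgrade.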

Docker Hardened Images

To provide engineers and companies with safe, recommended resources, Docker recently announced Docker Hardened Images (DHI), a catalog of near-zero-CVE images and optimized resources for you to start building your applications.

Even though it’s common in large organizations to have private container registries to store safe images and dependencies, DHI provides a safer starting point for security teams, since the resources available have been through extensive examination and auditing.

Docker Hardened Images report

DHI is a very helpful resource not only for enterprises but also for independent and open source software developers. Docker-backed images make the internet and the cloud safer, allowing businesses to build trustworthy and reliable platforms for their customers!

From an engineer’s perspective, the true value of Docker Hardened Images is the trust we have in Docker and the value that this security-ready solution brings us. Managing image security is hard if you have to do it all yourself, end to end. It’s hard to keep images ready to use, and the difficulty only increases when developers are requesting newer versions every day. By using Hardened Images, we’re able to provide our end users (developers and engineers) with the latest versions of the most popular solutions available and take that load off the security team.

Final Thoughts

We can approach security in a lot of different ways, but the main thing is: Security CANNOT slow down engineers. We need to design our controls so that they cover everything, closing all identified gaps while still allowing developers to deliver code fast.

Guarantee your engineers have the best of both worlds with Docker.

Security DevEx

Get in touch with the authors:

Pedro Ignácio:

Blog

Denis Rodrigues:

Blog

Learn more about Docker’s security solutions:

Docker Desktop

Docker Scout

Docker Hardened Images

Source: https://blog.docker.com/feed/