How Digitec Galaxus delivers personalized newsletters with reinforcement learning and Google Cloud

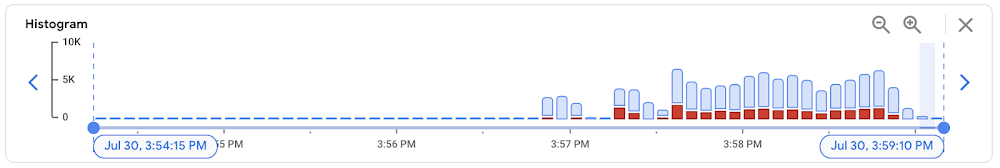

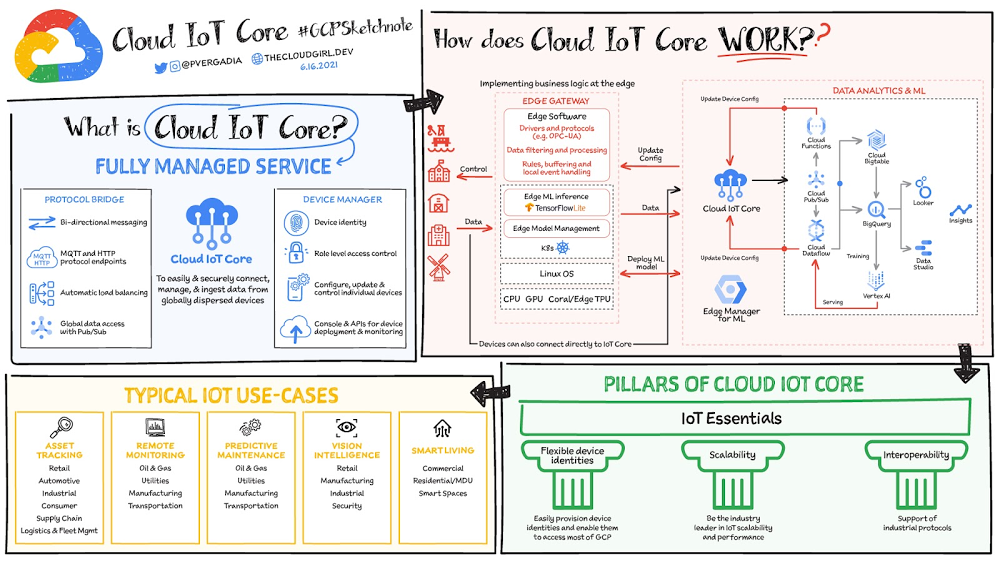

Digitec Galaxus AG is the biggest online retailer in Switzerland, operating two online stores: Digitec, Switzerland's online market leader for consumer electronics and media products, and Galaxus, the largest Swiss online shop, with a steadily growing range of consistently low-priced products for almost all daily needs. Known for its efficient, personalized shopping experiences, Digitec Galaxus clearly understands what it takes to deliver a platform that is interesting and relevant to customers every time they shop.

The problem: Personalizing decisions for every situation

When Digitec Galaxus reached out to Google Cloud, they had already established an engine to personalize experiences for shoppers. They had multiple recommendation systems in place and were also early, extensive adopters of Recommendations AI, which already enabled them to offer personalized content in places like their homepages, product detail pages, and their newsletter. But with so many systems, it was sometimes difficult to understand how best to combine and optimize them to create the most personalized experiences for their shoppers. Their requirements were threefold:

- Personalization: They have over 12 recommenders they can display in the newsletter, but they wanted to contextualize this choice and pick different recommenders (which in turn select the items) for different users. They also wanted to exploit existing trends as well as experiment with new ones.
- Latency: The solution had to be architected so that the ranked list of recommenders can be retrieved with under 50 ms of latency.
- End-to-end, easy-to-maintain, generalizable, and modular architecture: Digitec Galaxus wanted the solution to be built on an easy-to-maintain, open source stack, complete with all the MLOps capabilities required to train and use contextual bandit models. It was also important to them that the system be modular, so that it could easily be adapted to other use cases they have in mind, such as recommendations on the homepage, Smartags, and more.

To take their personalization to the next level, they asked us to help them implement a contextual bandit-based machine learning (ML) recommender system on Google Cloud that takes all of the above factors into consideration.

Contextual bandit algorithms are a simplified form of reinforcement learning. They aid real-world decision making by factoring in additional information about the visitor (the context) to learn what is most engaging for each individual. They excel both at exploiting trends that already work well and at exploring new, untested trends that could yield even better results.

For instance, imagine that you are personalizing a homepage image where you could show either a comfy living room couch or pet supplies. Without a contextual bandit algorithm, one of these images would be shown at random, without considering information you may have observed about the visitor during previous visits. Contextual bandits let businesses take outside context into account, such as previously visited pages or past purchases, and then observe the outcome (a click on the image) to determine what works best.

Creating a personalization system with contextual bandits

While Digitec Galaxus heavily personalizes their website homepages, those pages are very sensitive, and changing them requires more cross-team collaboration. Together with the Digitec Galaxus team, we decided to narrow the scope and first build a contextual bandit personalization system for the newsletter. The Digitec Galaxus team has complete control over newsletter decisions, and running ML experiments in a newsletter carries less risk of adverse revenue impact than running them on a website homepage.
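To make the couch-versus-pet-supplies example concrete, here is a minimal sketch of a tabular epsilon-greedy contextual bandit. Epsilon-greedy is one of the simplest contextual bandit strategies and is used here purely for illustration; it is not necessarily the algorithm Digitec Galaxus runs. The contexts, images, click-through rates, and the `EpsilonGreedyContextualBandit` and `simulate` names are all hypothetical.

```python
import random
from collections import defaultdict


class EpsilonGreedyContextualBandit:
    """Tabular epsilon-greedy contextual bandit (illustrative sketch only).

    Keeps a running mean reward for every (context, arm) pair. With
    probability `epsilon` it explores a random arm; otherwise it exploits
    the arm with the highest estimated reward for the given context.
    """

    def __init__(self, arms, epsilon=0.1, seed=0):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = defaultdict(int)    # (context, arm) -> times shown
        self.values = defaultdict(float)  # (context, arm) -> mean observed reward

    def select(self, context):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)  # explore
        return self.best_arm(context)          # exploit

    def update(self, context, arm, reward):
        key = (context, arm)
        self.counts[key] += 1
        # Incremental mean: v <- v + (r - v) / n
        self.values[key] += (reward - self.values[key]) / self.counts[key]

    def best_arm(self, context):
        return max(self.arms, key=lambda arm: self.values[(context, arm)])


# Hypothetical true click-through rates; the bandit never sees this table.
TRUE_CTR = {
    ("browsed_furniture", "couch"): 0.50,
    ("browsed_furniture", "pet_supplies"): 0.05,
    ("bought_pet_food", "couch"): 0.05,
    ("bought_pet_food", "pet_supplies"): 0.60,
}


def simulate(rounds=5000, seed=42):
    """Show one image per visit and learn from simulated clicks."""
    env = random.Random(seed)
    bandit = EpsilonGreedyContextualBandit(["couch", "pet_supplies"], epsilon=0.1)
    for _ in range(rounds):
        context = env.choice(["browsed_furniture", "bought_pet_food"])
        arm = bandit.select(context)
        clicked = env.random() < TRUE_CTR[(context, arm)]
        bandit.update(context, arm, 1.0 if clicked else 0.0)
    return bandit


bandit = simulate()
print(bandit.best_arm("browsed_furniture"))
print(bandit.best_arm("bought_pet_food"))
```

After enough visits, the greedy choice differs by context: visitors who browsed furniture see the couch, while pet-food buyers see pet supplies. The 10% exploration rate keeps sampling the other image, so the estimates can recover if behavior shifts.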
The main goal was to architect a system that could easily be ported to the homepage and other Digitec Galaxus services with minimal adaptation. It also needed to satisfy the functional and non-functional requirements of the homepage and of other internal use cases.

Below is a diagram of how the newsletter's personalization recommendation system works:

The system is given context features about the newsletter subscriber, such as their purchase history and demographics. (Features are sometimes referred to as variables or attributes, and can vary widely depending on what data is being analyzed.) The contextual bandit model is trained on those context features and the 12 available recommenders (the potential actions). The model then estimates which action is most likely to maximize the reward (the user clicking on content in the newsletter) while minimizing the penalty (the user unsubscribing). It also balances exploiting well-known trends with exploring new ones that could bring higher user engagement.

Distinguishing clicks on newsletter content from unsubscribes enabled the system to optimize for more clicks while avoiding showing the user non-relevant content (click-bait). This enabled Digitec Galaxus to exploit popular trends while also exploring potentially better-performing ones.

How Google Cloud helps

The newsletter's context-driven personalization system was built on Google Cloud, using the ML training and prediction services available within its ecosystem. Below is a diagram of the high-level architecture used:

The architecture covers three phases of generating context-driven ML predictions:

ML Development: Designing and building the ML models and pipeline

Vertex Notebooks are used as data science environments for experimentation and prototyping. Notebooks are also used to implement model training, scoring components, and pipelines.
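As an example of the kind of scoring logic that might be prototyped in such a notebook, the sketch below encodes the reward design described earlier: a click earns a reward and an unsubscribe incurs a penalty. The weights (+1 per click, 5 per unsubscribe), the recommender names, and the estimated rates are hypothetical; the values Digitec Galaxus actually uses are not stated.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical reward weights: a click is worth +1, an unsubscribe costs 5.
CLICK_REWARD = 1.0
UNSUBSCRIBE_PENALTY = 5.0


def reward(clicked: bool, unsubscribed: bool) -> float:
    """Observed reward for one newsletter send."""
    return CLICK_REWARD * float(clicked) - UNSUBSCRIBE_PENALTY * float(unsubscribed)


@dataclass
class RecommenderEstimate:
    """Estimated event rates for one recommender in one subscriber's context."""
    name: str
    click_rate: float        # estimated P(click)
    unsubscribe_rate: float  # estimated P(unsubscribe)

    def expected_reward(self) -> float:
        # Linearity of expectation: E[r] = p_click * CLICK_REWARD - p_unsub * UNSUBSCRIBE_PENALTY
        return self.click_rate * CLICK_REWARD - self.unsubscribe_rate * UNSUBSCRIBE_PENALTY


def rank_recommenders(estimates: List[RecommenderEstimate]) -> List[RecommenderEstimate]:
    """Order recommenders by expected reward, best first."""
    return sorted(estimates, key=lambda e: e.expected_reward(), reverse=True)


# Hypothetical estimates for a single subscriber.
CANDIDATES = [
    RecommenderEstimate("flash_deals", click_rate=0.12, unsubscribe_rate=0.02),
    RecommenderEstimate("personal_picks", click_rate=0.08, unsubscribe_rate=0.002),
    RecommenderEstimate("new_arrivals", click_rate=0.05, unsubscribe_rate=0.001),
]

for est in rank_recommenders(CANDIDATES):
    print(f"{est.name}: {est.expected_reward():.3f}")
```

In this toy setup, `flash_deals` attracts the most clicks but ranks last, because its unsubscribe penalty outweighs them. This is the click-bait case the reward design is meant to avoid.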
The source code is version controlled in GitHub. A continuous integration (CI) pipeline is set up to automatically run unit tests, build pipeline components, and store the container images in Container Registry.

ML Training: Large-scale training and storing of ML models

The training pipeline is executed on Vertex Pipelines. In essence, the pipeline trains the model on new training data extracted from BigQuery and produces a trained, validated contextual bandit model that is stored in the model registry. In our system, the model registry is a curated Cloud Storage bucket. The training pipeline uses Dataflow for large-scale data extraction, validation, processing, and model evaluation, and Vertex Training for large-scale distributed training of the model. AI Platform Pipelines also stores the artifacts produced by the various pipeline steps, such as trained models, in Cloud Storage. Information about these artifacts is then stored in an ML metadata database in Cloud SQL. To learn more about how to build a continuous training pipeline, read the documentation guide.

ML Serving: Deploying new algorithms and experiments in production

The pipeline uses batch prediction, run with AI Platform Pipelines, to generate many predictions at once, allowing Digitec Galaxus to score large datasets. It uses the most recent contextual bandit model in the model registry to score the inference dataset in BigQuery, produces a ranked list of the best newsletters for each user, and persists the predictions in Cloud Datastore for consumption.
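The batch-then-lookup serving pattern described above can be sketched in a few lines. In this sketch a plain dictionary stands in for Cloud Datastore, and a hand-written linear scorer stands in for the trained contextual bandit model. The feature names, recommender names, weights, and the `batch_rank` and `get_ranking` helpers are all hypothetical.

```python
from typing import Dict, List

Features = Dict[str, float]

# Hypothetical linear reward model per recommender (arm), standing in for
# the trained contextual bandit model loaded from the model registry.
WEIGHTS: Dict[str, Features] = {
    "personal_picks": {"recent_purchases": 0.6, "newsletter_clicks": 0.3},
    "top_sellers": {"recent_purchases": 0.1, "newsletter_clicks": 0.2},
    "new_arrivals": {"newsletter_clicks": 0.5},
}

# Fallback for users without a precomputed ranking (e.g. new subscribers).
DEFAULT_RANKING: List[str] = ["top_sellers", "new_arrivals", "personal_picks"]


def predicted_reward(recommender: str, features: Features) -> float:
    """Dot product of the recommender's weights with the user's context features."""
    weights = WEIGHTS[recommender]
    return sum(w * features.get(name, 0.0) for name, w in weights.items())


def batch_rank(users: Dict[str, Features]) -> Dict[str, List[str]]:
    """Offline step: score every recommender for every user and store the ranking.

    The returned dict plays the role of the key-value store (Datastore).
    """
    store: Dict[str, List[str]] = {}
    for user_id, features in users.items():
        store[user_id] = sorted(
            WEIGHTS,
            key=lambda rec: predicted_reward(rec, features),
            reverse=True,
        )
    return store


def get_ranking(store: Dict[str, List[str]], user_id: str) -> List[str]:
    """Online step: what a serving endpoint would do, a single key lookup."""
    return list(store.get(user_id, DEFAULT_RANKING))


# Hypothetical context features, e.g. extracted from BigQuery.
USERS: Dict[str, Features] = {
    "u1": {"recent_purchases": 1.0, "newsletter_clicks": 0.0},
    "u2": {"recent_purchases": 0.0, "newsletter_clicks": 1.0},
}

store = batch_rank(USERS)
print(get_ranking(store, "u1"))
print(get_ranking(store, "u2"))
print(get_ranking(store, "new_user"))
```

Because all scoring happens offline, the online path reduces to one key lookup, which is one way to keep retrieval within a tight latency budget such as the 50 ms target. This sketch ranks greedily by predicted reward; a bandit policy in production would also reserve some traffic for exploration.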
A Cloud Function provides a REST/HTTP endpoint that retrieves the precomputed predictions from Datastore.

All components of the code and architecture are modular and easy to use, which means they can be adapted to several other use cases within the company as well.

Better newsletter predictions for millions

The newsletter prediction system was first deployed in production in February, and Digitec Galaxus has been using it to personalize millions of newsletters a week for subscribers. The results have been impressive: 50% higher than the initial baseline. The collaboration is ongoing to improve the results even further.

"Working at this level in direct exchange with Google's machine learning experts is a unique opportunity for us. The use of contextual bandits in the targeting of our recommendations enables us to pursue completely new approaches in personalization by also personalizing the delivery of the respective recommender to the user. We have already achieved good results in our newsletter in initial experiments and are now working on extending the approach to the entire newsletter by including more contextual data about the bandits arms. Furthermore, as a next step, we intend to apply the system to our online store as well, in order to provide our users with an even more personalized experience. To build this scalable solution, we are using Google's open source tools such as TFX and TF Agents, as well as Google Cloud Services such as Compute Engine, Cloud Machine Learning Engine, Kubernetes Engine and Cloud Dataflow."

Christian Sager, Product Owner, Personalization (Digitec Galaxus)

Because the system is dynamic, it automatically adapts to new behaviours, trends, and users. As a result, Digitec Galaxus plans to reuse the same components and extend the existing system to improve the personalization of their homepage and other current use cases within the company.
Beyond clicks and user engagement, the system's flexibility also allows for future optimization of other criteria. It's a very exciting time and we can't wait to see what they build next!

Source: Google Cloud Platform