Editor’s note: Today we’re hearing from Google Cloud Platform (GCP) customer Otto Group data.works GmbH, a services organization holding one of the largest retail user data pools in the German-speaking area. They offer e-commerce and logistics SaaS solutions and conduct R&D with sophisticated AI applications for Otto Group subsidiaries and third parties. Read on to learn how Otto Group data.works GmbH recently migrated its on-premises big data Hadoop data lake to GCP and the lessons they learned along the way.

At Otto Group, our business intelligence unit decided to migrate our on-premises Hadoop data lake to GCP. Using managed cloud services for application development, machine learning, data storage and transformation instead of hosting everything on-premises has become popular for tech-focused business intelligence teams like ours. But actually migrating existing on-premises data warehouses and surrounding team processes to managed cloud services brings serious technical and organizational challenges.

The Hadoop data lake and included data warehouses are essential to our e-commerce business. Otto Group BI aggregates anonymized data like clickstreams, user interactions, product information, CRM data, and order transactions of more than 70 online shops of the Otto Group.

On top of this unique data pool, our agile, autonomous, and interdisciplinary product teams (consisting of data engineers, software engineers, and data scientists) develop machine learning-based recommendations, product image recognition, and personalization services.
The many online retailers of the Otto Group, such as otto.de and aboutyou.de, integrate our services into their shops to enhance customer experience.

In this blog post, we’ll discuss the motivation that drove us to consider moving to a cloud provider, how we evaluated different cloud providers, why we decided on GCP, the strategy we used to move our on-premises Hadoop data lake and team processes to GCP, and what we have learned so far.

Before the cloud: an on-premises Hadoop data lake

We started with an on-premises infrastructure consisting of a Hadoop cluster-based data lake design, as shown below. We used the Hadoop distributed file system (HDFS) to stage click events, product information, transaction and customer data from those 70 online shops, never deleting raw data.

Overview of the previous on-premises data lake

From there, pipelines of MapReduce jobs, Spark jobs, and Hive queries cleaned, filtered, joined, and aggregated the data into hundreds of relational Hive database tables at various levels of abstraction. That let us offer harmonized views of commonly used data items in a data hub to our product teams. On top of this data hub, the teams’ data engineers, scientists, and analysts independently performed further aggregations to produce their own application-specific data marts.

Our purpose-built open-source Hadoop job orchestrator Schedoscope handled the declarative, data-driven scheduling of these pipelines, as well as managing metadata and data lineage.

In addition, this infrastructure used a Redis cache and an Exasol EXAsolution main-memory relational database cluster for key-based lookup in web services and fast analytical data access, respectively. Schedoscope seamlessly mirrored Hive tables to the Redis cache and the Exasol databases as Hadoop processing finished.

Our data scientists ran their IPython notebooks and trained their models on a cluster of GPU-equipped compute nodes.
These models were then usually deployed as dockerized Python web services on virtual machines offered by a traditional hosting provider.

What was good…

This on-premises setup allowed us to quickly grow a large and unique data lake. With Schedoscope’s support for iterative, lean-ops rollout of new and modified data processing pipelines, we could operate this data lake with a small team. We developed sophisticated machine learning-driven web services for the Otto Group shops. The shops were able to cluster purchase and return history of customers for fit prediction; get improved search results through intelligent search term expansion; sort product lists in a revenue-optimizing way; filter product reviews by topic and sentiment; and more.

…And what wasn’t

However, as the data volume, number of data sources, and services connected to our data lake grew, we ran into various pain points that were hampering our agility, including lack of team autonomy, operational complexity, technology limitations, costs, and more.

Seeing the allure of the cloud

Let’s go through each of those pain points, along with how cloud could help us solve them.

Limited team autonomy: A central Hadoop cluster running dependent data pipelines does not lend itself well to multi-tenancy. Product teams constantly needed to coordinate, in particular with the infrastructure team responsible for operating the cluster. This not only created organizational bottlenecks that limited productivity; it also worked directly against the very autonomy our product teams are supposed to have. Because resources had to be shared, teams could not take full responsibility for their services and pipelines from development, to deployment, to monitoring. This created even more pressure on the infrastructure team.
Cloud platforms, on the other hand, allow teams to autonomously launch and destroy infrastructure components via API calls, without affecting other teams and without having to pass through a dedicated team managing centrally shared infrastructure.

Operational complexity: Operating Hadoop clusters and compute node clusters as well as external database systems and caches created significant operational complexity. We had to operate and monitor not only our products and data pipelines, but also the Hadoop cluster, operating system, and hardware. The cloud offers managed services for data pipelines, storing data, and web services, so we do not need to operate at the hardware, operating system, and cluster technology level.

Limited tech stack: Technologically, we were limited to what the Hadoop stack offered. While our teams could achieve a lot with Hive, MapReduce, and Spark jobs, we often felt that our teams couldn’t use the best technology for the product but had to fit a design into rigid organizational and technology constraints. The cloud offers a variety of managed data stores like BigQuery or Cloud Storage, data processing services like Cloud Dataflow and Cloud Composer, and application platforms like App Engine, plus it’s constantly adding new ones. Compared to the Hadoop stack, this could significantly expand the options our teams have for designing the right solution.

Mismatched languages and frameworks: Python machine learning frameworks and web services are usually not run on YARN. Hive and HDFS are not well-suited for interactive or random data access. For a data scientist to reasonably work with data in a Hadoop cluster, Hive tables must be synced to external data stores, adding more complexity.
By offering numerous kinds of data stores suitable for analytics, random access, and batch processing, as well as by separating data processing from data storage, cloud platforms make it easier to process and use data in different contexts with different frameworks.

Emerging stream processing: We started tapping into more streaming data sources, but this was at odds with Hadoop’s batch-oriented approach. We had to deploy a clustered message broker, Kafka, for persisting data streams. While it is possible to run Spark streaming on YARN and connect to Kafka, we found Flink more suitable as a streaming-native processing framework, which only added another cluster and layer of complexity. Cloud platforms offer managed message brokers as well as managed stream processing frameworks.

Expansion velocity: The traditional enterprise procurement process we had to follow made adding nodes to the cluster time- and resource-consuming. It was common that we had to wait three to four months from RFP and purchase order to delivery and operations. With a cloud platform, infrastructure can be added within minutes by API calls. The challenge with cloud is to set up enterprise billing processes so that varying invoices can be handled every month without the constant involvement of procurement departments. However, this challenge has to be solved only once.

Expansion costs: A natural reaction to slow enterprise procurement processes is to avoid having to go through them too often. Slow processes mean that team members tend to wait and batch demand for new nodes into larger orders. Larger orders not only increase the corporate politics that come along with them, but also reduce the rate of innovation, as large order volumes discourage teams from building (and possibly later scrapping) prototypes of new and intrinsically immature ideas. The cloud lets us avoid hanging on to infrastructure we no longer need, freeing us from such inhibitions.
Moreover, many frameworks offered by cloud platforms support autoscaling and automating expansion, so expansion naturally follows as new use cases arise.

Starting the cloud evaluation process

Given this potential, we started to evaluate the major cloud platforms in April 2017. We decided to move the Otto Group BI data lake to GCP about six months later. We effectively started the migration in January 2018, and finished migrating by February 2019.

Our evaluation included three main areas of focus:

1. Technology. We created a test use case to evaluate provider technology stacks: building customer segments based on streaming web analytics data using customer search terms.

Our on-premises infrastructure team implemented this use case with the managed services of the cloud providers under evaluation (on top of doing its day-to-day business). Our product teams were involved, too, via three multi-day hackathons where they evaluated the tech stacks from their perspective and quickly developed an appetite for cloud technology.

Additionally, the infrastructure team kept product teams updated regularly with technology demos and presentations.

As the result of this evaluation, we favored GCP. In particular, we liked:

- The variety of managed data stores offered, especially BigQuery and Cloud Bigtable, and their simplicity of operation;
- Cloud Dataflow, a fully managed data processing framework that supports event time-driven stream processing as well as batch processing in a unified manner;
- Google Kubernetes Engine (GKE), a managed distributed container orchestration system, making deployment of Docker-based web services simple; and
- Cloud ML Engine as a managed TensorFlow runtime, and the various GPU and TPU options for machine learning.

2. Privacy. We involved our legal department early to understand the ramifications of moving privacy-sensitive data from our data lake to the cloud.

We now encrypt and anonymize more data fields than was needed on-premises.
With the move to streaming data and increased encryption needs, we also ported the existing central encryption clearinghouse to a streaming architecture in the cloud. (The on-premises implementation of the clearinghouse had reached its scaling limit and needed a redesign anyway.)

3. Cost. We did not focus on pricing between different cloud providers. Rather, we compared cloud cost estimates against our current on-premises costs. In this regard, we found it important to not just spec out a comparable Hadoop cluster with virtual machines in the cloud and then compare costs. We wanted managed services to reduce our many pain points, not just migrate these pain points to the cloud.

Instead of comparing a Hadoop cluster against virtual machines in the cloud, we compared our on-premises cluster against native solution designs for the managed services. It was more difficult to come up with realistic cost estimates, since the designs hadn’t been implemented yet. But extrapolating from our experiences with our test use case, we were confident that cloud costs would not exceed our on-premises costs, even after applying solid risk margins.

Now that we’ve finished the migration, we can say that this is exactly what happened: We are not exceeding on-premises costs. This we already consider a big win. We have not only gained development velocity; the operational stability of the product teams has increased noticeably, and so has the performance of their products. Also, the product teams have focused so far on migrating their products and not yet on optimizing costs, so we expect our cloud costs to go further below on-premises costs as time goes on.

Going to the cloud: Moving the data lake to GCP

There were a few areas to tackle when we started moving our infrastructure to GCP.

1. Security

One early goal was to establish a state-of-the-art security posture.
We had to continue to fulfill the corporate security guidelines of Otto Group, while at the same time granting a large degree of autonomy to the product teams to create their own cloud infrastructure and eliminate the collaboration pain points.

To balance a high security standard, and the restrictiveness it implies, against team autonomy, we came up with the motto of “access frugality.” Teams can work freely in their GCP projects. They can independently create infrastructure like GKE clusters or use managed services such as Cloud Dataflow or Cloud Functions as they like. But they are also expected to be restrictive about resources like IAM permissions, external load balancers and public buckets.

In order to get the teams started with the cloud migration as soon as possible, we took a gradual approach to security. Building our entire security posture before teams could start with the actual migration was not an option. So we agreed on the most relevant security guidelines with the teams, then established the rest during migration. As the migration proceeded, we also started to deploy processes and tools to enforce these guidelines and provide guardrails for the teams.

We came up with the following three main themes that our security tooling should address (see more in the image below):

Cloud security monitoring: This theme is about transparency of cloud resources and configuration. The idea is to protect teams from security issues by detecting them early and, in the best-case scenario, preventing them entirely. At the same time, monitoring must allow for exceptions: teams might consciously want to expose resources such as API endpoints publicly without being bothered by security alerts all the time. The key objective of this theme is to instill a profound security awareness in every team member.

Cloud cost controls: This theme covers financial aspects of the security posture.
Misconfigurations can unintentionally lead to significant unwanted costs. For example, BigQuery queries can go rogue over large datasets if users are not forced to provide partition time restrictions, and external financial DDoS attacks can drive up costs in an autoscaling environment.

Cloud resource policy enforcement: Security monitoring tools can detect security issues. A logical next step is to automatically undo obvious misconfigurations as they are detected. As an example, tooling could automatically revert public access on a storage bucket. Again, such tooling must allow for exceptions.

Main themes of our security posture.

Since there are plenty of security-related features within Google Cloud products and there is a large variety of open-source cloud security tools available, we didn’t want to reinvent the wheel.

We decided to make use of GCP’s inherent security policy configuration options and tooling where we could, such as organization policies, IAM conditions and Cloud Security Command Center.

As a tool for cloud security monitoring, we evaluated Security Monkey, developed by Netflix. Security Monkey scans cloud resources periodically and alerts on insecure configurations. We chose it for its maturity and the simple extensibility of the framework with custom watchers, auditors and alerters. With these, we implemented security checks we didn’t find in GCP, mostly around the time-to-live (TTL) of service account keys and around organizational policies that disallow public data in either BigQuery datasets or Cloud Storage buckets. We set up three different classes of issues: compliance, security, and cost optimization-related issues.

Security Monkey is used by all product teams here. Team members use the Security Monkey UI to view the identified potential security issues and either justify them right there or resolve the issue in GCP.
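
To illustrate the kind of check described above, here is a minimal sketch in plain Python of a TTL audit for service account keys. It does not use Security Monkey’s watcher or auditor interfaces or any GCP API; the `ServiceAccountKey` record, the `find_expired_keys` function, the example account names, and the 90-day limit are all assumptions made purely for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class ServiceAccountKey:
    """Hypothetical, simplified record of a service account key."""
    service_account: str
    key_id: str
    created_at: datetime  # timezone-aware creation timestamp


def find_expired_keys(
    keys: Iterable[ServiceAccountKey],
    max_age: timedelta,
    now: Optional[datetime] = None,
) -> List[ServiceAccountKey]:
    """Return the keys older than max_age, oldest first."""
    current = now if now is not None else datetime.now(timezone.utc)
    expired = [key for key in keys if current - key.created_at > max_age]
    return sorted(expired, key=lambda key: key.created_at)


if __name__ == "__main__":
    # Illustrative inventory; in practice this would come from a resource scan.
    inventory = [
        ServiceAccountKey("etl@example-project", "key-old",
                          datetime(2018, 11, 1, tzinfo=timezone.utc)),
        ServiceAccountKey("web@example-project", "key-new",
                          datetime(2019, 2, 15, tzinfo=timezone.utc)),
    ]
    audit_time = datetime(2019, 3, 1, tzinfo=timezone.utc)
    # The 90-day TTL is an assumed policy value, not one stated in this post.
    for key in find_expired_keys(inventory, timedelta(days=90), now=audit_time):
        print(f"Key {key.key_id} of {key.service_account} exceeds the TTL")
```

Passing `now` explicitly keeps the check deterministic, which makes it easy to test and to rerun against historical snapshots of the environment.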
We also use a whitelisting feature to filter for known configurations, like default service account IAM bindings, to make sure we only see relevant issues. Excessive issues and alerts in a dynamic environment like GCP can be intimidating and quickly become overwhelming.

To improve the transparency of cloud resources, we built several dashboards on top of the data gathered by Security Monkey to visualize the current and historical state of the cloud environment. While adapting Security Monkey to our needs, we found working with the Security Monkey project to be a great experience. We were able to submit several bug fixes and feature pull requests and get them merged into master quickly.

We are now shifting our focus from passive cloud resource monitoring towards active cloud resource policy enforcement where configuration is automatically changed based on detected security issues. Security Monkey as well as the availability of near real-time audit events on GCP offer a good foundation for this.

We believe that cloud security cannot be considered a simple project with a deadline and a result; rather, it is an aspect that must be considered during each phase of development.

2. Approaches to data lake migration

There were a few possible approaches to migrating our Hadoop data lake to GCP. We considered lift-and-shift, simply redeploying our Hadoop cluster to the cloud as a first step. While this was probably the simplest approach, it wouldn’t have addressed the pain points we had identified. We’d have to get productivity gains later by rearchitecting yet again to improve team autonomy, reduce operational complexity, and benefit from tech advancements.

At the other end of the spectrum, we could port Hadoop data pipelines to GCP managed services, reading and storing data to and from GCP managed data stores and then turning off the on-premises cluster infrastructure after porting finished.
But that would take much longer to see benefits, since the product teams would have to wait until porting ended and all historical data was processed before they could use the new cloud technology.

3. Our approach: Mirror data first, then port pipelines

So we decided on an incremental approach to porting our Hadoop data pipelines, embracing the existence of our on-premises cluster while it was still there.

As a first step, we extended Schedoscope with a BigQuery exporter, which makes it easy to write a Hive table partition to BigQuery efficiently in parallel. We also added the capability to perform additional encryptions via the clearinghouse during export to satisfy the needs of our legal department. We then augmented our on-premises Hadoop data pipelines with additional export steps. In this way, our on-premises Hive data was encrypted and mirrored to BigQuery with only a little delay after it had been computed.

Second, we exported the historical Hive table partitions over the course of four weeks. As a result, we ended up with a continuously updated mirror of our on-premises data in BigQuery. By summer 2018, our product teams could start porting their web services and models to the cloud, even though the core data pipelines of the data lake were still running on-premises, as shown here:

Otto Group’s gradual data sync

In fact, product teams were able to finish migrating essential services (such as customer review sentiment analysis, intelligent product list sorting, and search term expansion) to GCP by the end of 2018, even before all on-premises data pipelines had been ported.

Next, we ported the existing Hadoop data pipelines from Schedoscope to managed GCP services.
We went through each data source and no longer staged it to Hadoop but to GCP, either to Cloud Storage for batch sources or to Cloud Pub/Sub for new streaming sources.

We then redesigned the data pipelines originating from the data source with GCP managed services, usually Cloud Dataflow, to bring the data to BigQuery. Once the data was in BigQuery, we also used simple SQL transformations and views. Generally, we orchestrate batch pipelines using Cloud Composer, GCP’s managed Airflow service. Streaming pipelines are mostly designed as chains of Cloud Dataflow jobs decoupled by Cloud Pub/Sub topics, as shown here:

Migrating jobs into GCP managed services

One problem, however, was that aggregations still performed on-premises sometimes depended on data from sources that had already been migrated. As a temporary measure, we created exports from the cloud back to on-premises to feed downstream pipelines with the required data until these pipelines were migrated, as shown below:

The on-premises backport, a temporary measure during migration

This implies, however, that data sources of similar type might be processed both on-premises and in the cloud. As an example, web tracking of one shop may already have been ported to GCP while another shop’s tracking data was still being processed on-premises.

Since redesigning data pipelines during migration could involve changes to data models and structures, similar data types could end up in BigQuery in heterogeneous forms: some natively processed in the cloud, others mirrored to the cloud from the Hadoop cluster.

To deal with this problem, we required our product teams to design their cloud-based services and models such that they could take data from two different heterogeneously modeled data sources. This is not as difficult a challenge as it may seem, though: There is no need to create a unified representation of heterogeneous data for all use cases.
Instead, data from two sources can be combined in a use case-specific way.

Finally, on March 1, 2019, all sources and their pipelines had been ported to GCP managed services. We cut off the on-premises exports, disabled the backport of cloud data to on-premises, shut down the cluster, and removed any duplicate logic introduced into the product teams’ services during the data porting step.

After the migration, our architecture now looks like this:

The finished cloud migration

After the cloud: What have we learned moving to GCP?

Our product teams started getting the benefits of the cloud pretty quickly. We were surprised to learn that the point of no return in our cloud migration process came in the fall of 2018, even though the main data pipelines were still running on-premises.

Once our product teams had gained autonomy, we were no longer able to take it back even if we wanted to. Moreover, the product teams had experienced considerable productivity gains, as they were free to work at their own pace with more technology choices and managed services with high SLAs. Going back to a central on-premises Hadoop cluster was no longer an option.

There are a few other key takeaways from our cloud migration:

1. We’ve moved closer to a decentralized DevOps culture. While moving our data pool and the various web services of the product teams to managed cloud services, we automatically started to develop a DevOps culture, scripting the creation of our cloud infrastructure and treating infrastructure as code. We want to further build on this by creating a blameless culture with shared responsibilities, minimal risks due to manual changes, and reduced delivery times. To reach this goal, we’re adding a high degree of automation and increasing knowledge sharing.
Product team members no longer rely on a central infrastructure team, but create infrastructure on their own.

What has remained a central task of the infrastructure team, however, is the bootstrapping of cloud environments for the product teams. The required information to create a GCP project is added to a central, versioned configuration file. Such information includes team and project name, cost center, and a few technical topics such as selecting a network in a shared VPC, VPN access to Otto Group campus, and DNS zones. From there, a continuous integration (CI) pipeline creates the project, assigns it to the correct billing account, and sets up basic permissions for the team’s user groups with default IAM policies. This process takes no more than 10 minutes. Teams take over control of the created project and its infrastructure from there and can start work right away.

2. Some non-managed services are still necessary. While we encourage our product teams to make use of GCP’s managed cloud services as much as possible, we do host some services ourselves. The most prominent example of such a service is our source code management and CI/CD system that is shared between the product teams.

While we would love to use a hosted service for this, our legal department regards the source code of our data-driven products as proprietary and requires us to manage it ourselves. Consequently, we have set up a GitLab deployment on GKE running in the Europe West region. The configuration of GitLab is fully automated via code to provide groups, repositories and permissions for each team.

The implications of a self-hosted GitLab deployment are that we have to take care of regular database backups and also have a process for disaster recovery. We have to guarantee a reasonably high availability and have to follow GitLab’s lifecycle management for patching or updating system components.

3. Cloud is not one-size-fits-all.
We have quickly learned that team autonomy really means team autonomy. While teams often face similar problems, superimposing central tools threatens team productivity by introducing team interdependencies and coordination overhead. Central tools should only be established if it cannot be avoided (see the motivation for hosting our own CI/CD system above) or if collaboration benefits outweigh the coordination overhead introduced (being able to look at the code of other teams, for example).

For example, even though all teams need to deal with the repetitive task of scheduling recurring jobs, we have not set up a central job scheduler as we did with Schedoscope on-premises. Each team decides on the best solution for their products by either using their own instance of a GCP managed service like Cloud Composer (managed Airflow) or even building their own solution like our recently published CLASH tool.

Instead of sharing tooling between teams, we have moved on to sharing knowledge. Teams share their perspectives on those solutions, lessons learned, and best practices in our regular internal “GCP 3D” presentations.

The road ahead

Migrating our Hadoop data lake to the cloud was a bold decision, but we are totally satisfied with how it turned out and how quickly we were able to pull it off. Of course, the freedom of a cloud environment enjoyed by autonomous teams comes with the challenge of global transparency. Security monitoring and cost control are two main areas in which we’ll continue to invest.

A further pressing topic for us is metadata management. Not only is data stored using different datastore technologies (or not stored at all in the case of streaming data), data is also spread across the teams’ GCP projects. We’ll continue to explore how to provide an overview of all the data available and how to ensure data security and integrity.

As a company, one of our core values is excellence in development and operations.
With our migration, we’ve found that moving to a cloud environment has brought us significantly further towards these goals.

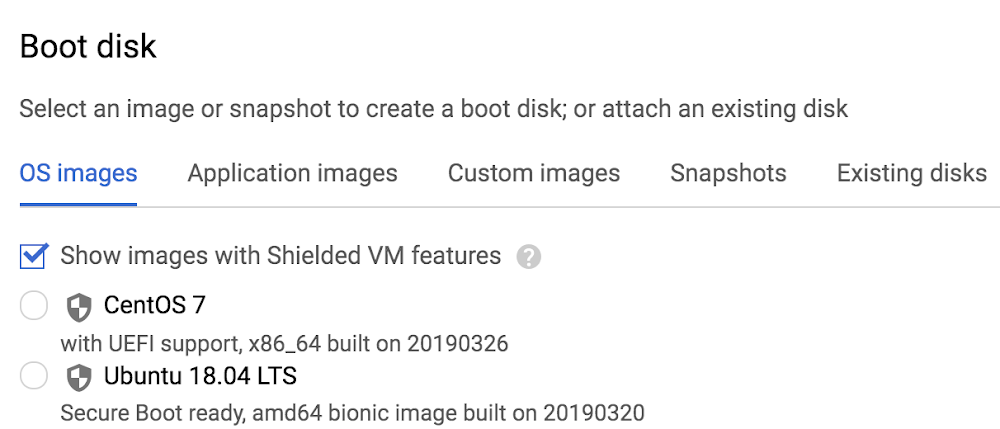

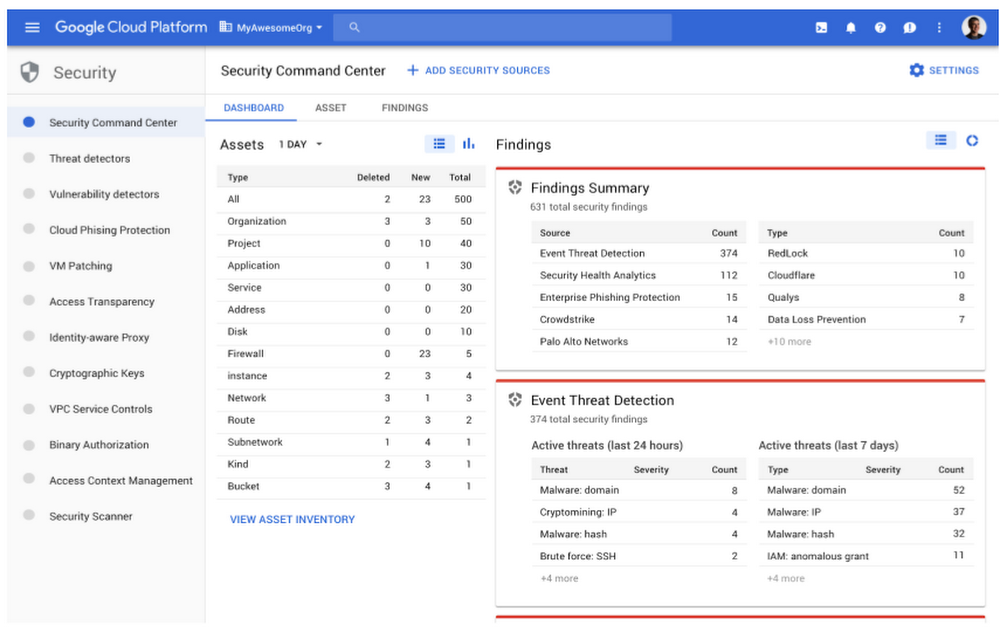

Source: Google Cloud Platform