Application management made easier with Kubernetes Operators on GCP Marketplace

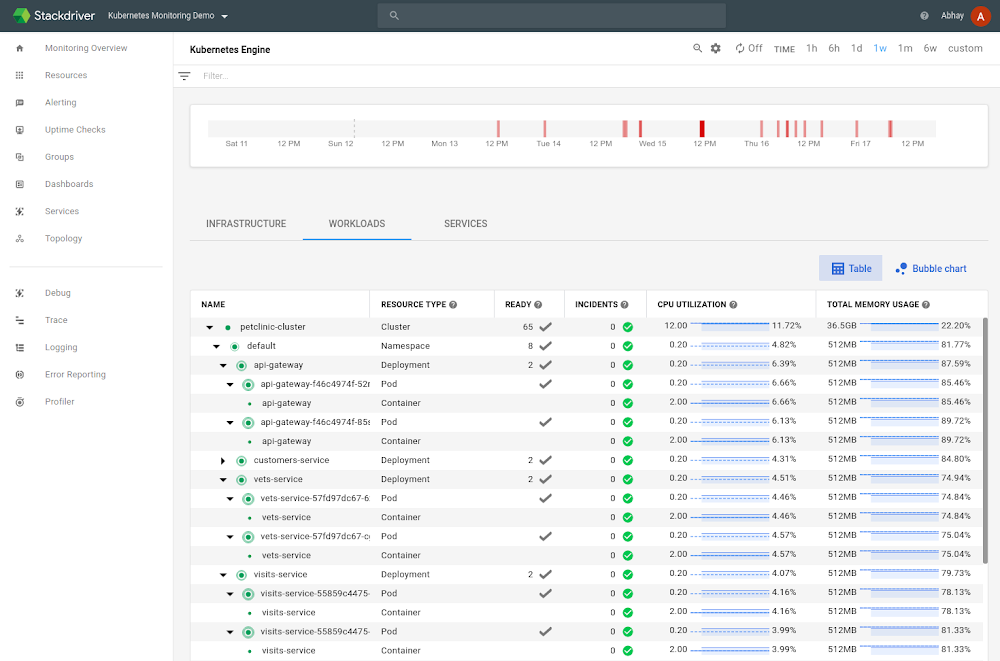

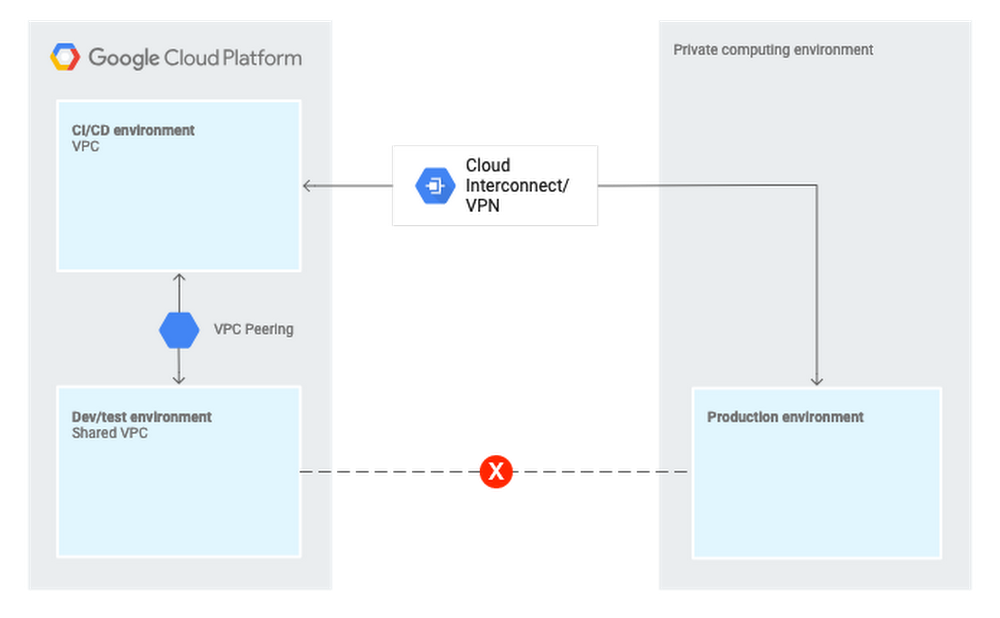

More and more enterprises use Google Kubernetes Engine (GKE) to containerize and manage their applications and to deliver new features to their end users. However, application and cluster admins often struggle with authoring, releasing, and managing applications on top of Kubernetes. To make this easier, we have published a set of Kubernetes Operators to our marketplace that encapsulate best practices and end-to-end solutions for specific applications.

Figure: Lifecycle of a Kubernetes application

For example, Operators automate the creation of core resources such as pods, containers, persistent volumes, and services, as well as workload resources including deployments and statefulsets, and enable application-specific visibility and orchestration.

We worked with the Kubernetes open-source community in the Apps Special Interest Group (SIG) to introduce and standardize an Application resource that defines the various resources within an application and manages them as a group. The Application resource includes a standard API for creating, viewing, and managing applications in Kubernetes, making it easy to perform health checks, do garbage collection, and manage application dependencies. Moreover, it provides a standard mechanism for viewing and managing apps on the GKE UI and other UI dashboards.

Figure: Application view in GKE UI

Operators exercise one of the most valuable capabilities of Kubernetes, its extensibility, through the use of custom resource definitions (CRDs) and custom controllers. Operators extend the Kubernetes API to support the modernization of different categories of workloads and provide improved lifecycle management, scheduling, and more.

Kubernetes applications on GCP Marketplace recently became generally available, and are an excellent place to find ISV-supported Operators.

Operators by Google

In addition to helping define the application standard, we’ve also created several critical Operators.
These highlight the possibilities of extending Kubernetes and demonstrate best practices for authoring and managing the lifecycle of a Kubernetes application.

Java Operator

Java is one of the most popular programming languages on Earth, with 10 million developers worldwide writing apps for 15 billion Java-enabled devices (source). Java apps rely on a Java virtual machine (JVM), which lets a computer run Java and other related programs. Examples of containerized apps that run in a JVM, and that are often deployed on GKE, include Spark, Elasticsearch, Kafka, and Cassandra. However, there are several challenges in running JVM applications on Kubernetes. The JVM is often not fully aware of the isolation mechanisms that containers use internally, leading to unexpected behavior between different environments (such as test and production).

To solve these challenges, we created a Java Operator that automatically configures various aspects of a JVM application running in a Kubernetes cluster, including the JVM memory, garbage collection logging, monitoring, and debugging. You can find this Java Operator on Google Cloud Platform (GCP) Marketplace.

Spark Operator

Apache Spark is a popular analytics engine for large-scale batch and streaming data processing and machine learning. We recently launched an open-source Kubernetes Operator for Apache Spark in beta that simplifies lifecycle management of Spark applications running on Kubernetes in a Kubernetes-native way. You can find it on GCP Marketplace.

Airflow Operator

Apache Airflow allows programmatic management of complex workflows as directed acyclic graphs for dependency management and scheduling.
We published the open-source Airflow Operator, which simplifies the installation and management of Apache Airflow on Kubernetes and is available on GCP Marketplace.

Building your own Operator

While we provide several high-quality extensions and applications on GCP Marketplace, you may also want to write your own extensions for custom use cases. To do so, you can follow these best practices and use Kubebuilder, reducing development time from months to weeks or days. To learn more, check out the Kubebuilder book.

Automatic updates for your Kubernetes apps

To simplify the management experience, Managed Updates on GCP Marketplace lets you easily update your Kubernetes apps and automatically roll them back, guided by health checks. You no longer need to stop your applications, search for the right patches and releases, verify and validate them, and finally update them manually. With Managed Updates, we update applications for the latest features and security patches, removing a significant operational burden. We are working with partners on GCP Marketplace to enable seamless updates of their applications.

Managing Kubernetes apps made easy

Creating, configuring, and deploying apps to run on top of Kubernetes doesn’t have to be hard. Operators simplify the process of deploying many common applications directly from GCP Marketplace and the CLI, and if you can’t find what you need, you can build it yourself. Check out GCP Marketplace for the full catalog of pre-configured Kubernetes apps that are ready to deploy into your cluster today.
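
If you do decide to build your own Operator, it helps to picture what a custom controller does at its core: it compares the state you declared with the state it observes and works out the steps that close the gap. The sketch below illustrates that idea in plain Python under simplifying assumptions. It is not Kubebuilder code and does not call the Kubernetes API; the `Action` class, the `reconcile` function, and the resource names are hypothetical and exist only for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """One step a controller would take to converge on the desired state."""
    verb: str  # "create", "update", or "delete"
    name: str  # name of the resource the step applies to


def reconcile(desired: dict[str, dict], observed: dict[str, dict]) -> list[Action]:
    """Return the steps needed to make the observed state match the desired state.

    Both arguments map a resource name to its spec. They are plain dicts here
    (an assumption for illustration); a real controller would read them from
    the Kubernetes API server.
    """
    actions = []
    # Create anything that is missing and update anything that has drifted.
    for name, spec in sorted(desired.items()):
        if name not in observed:
            actions.append(Action("create", name))
        elif observed[name] != spec:
            actions.append(Action("update", name))
    # Delete anything that exists but is no longer declared.
    for name in sorted(observed):
        if name not in desired:
            actions.append(Action("delete", name))
    return actions


if __name__ == "__main__":
    # Hypothetical resources, used only to exercise the function.
    desired = {"web-deployment": {"replicas": 3}, "web-service": {"port": 80}}
    observed = {"web-deployment": {"replicas": 2}, "old-job": {"image": "legacy"}}
    for action in reconcile(desired, observed):
        print(action.verb, action.name)
```

A real controller runs a comparison like this repeatedly, so the application keeps converging on its declared state even when something in the cluster changes underneath it.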

Source: Google Cloud Platform