How SRE teams are organized, and how to get started

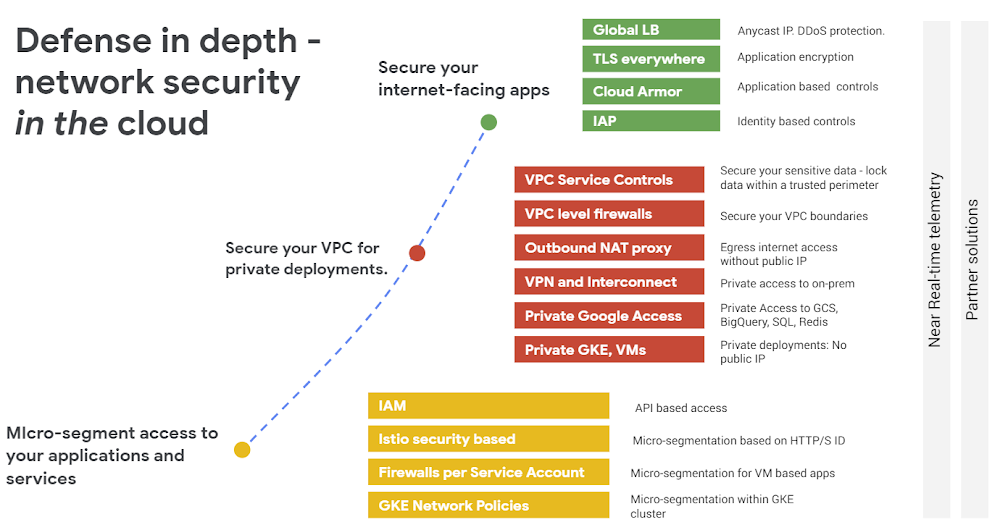

At Google, Site Reliability Engineering (SRE) is our practice of continually defining reliability goals, measuring those goals, and working to improve our services as needed. We recently walked you through a guided tour of the SRE workbook. You can think of that guidance as what SRE teams generally do, paired with when the teams tend to perform these tasks given their maturity level. We believe that many companies can start and grow a new SRE team by following that guidance.

Since then, we have heard that folks understand what SREs generally do at Google and understand which best practices should be implemented at various levels of SRE maturity. We have also heard from many of you how you’re defining your own levels of team maturity. But the next step, how the SRE teams are actually organized, has been largely undocumented, until now!

In this post, we’ll cover how different implementations of SRE teams establish boundaries to achieve their goals. We describe six different implementations that we’ve experienced, and what we have observed to be their most important pros and cons. Keep in mind that your implementations of SRE can be different; this is not an exhaustive list. In recent years, we’ve seen all of these types of teams here in the Google SRE organization (i.e., a set of SRE teams) except for the “kitchen sink.” The order of implementations here is a fairly common path of evolution as SRE teams gain experience.

Before you begin implementing SRE

Before choosing any of the implementations discussed here, do a little prep work with your team. We recommend allocating some engineering time from multiple people and finding at least one part-time advocate for SRE-related practices within your company. This type of initial, less formal setup has some pros and cons:

Pros

- Easy to get started on an SRE journey without organizational change.
- Lets you test and adapt SRE practices to your environment at low cost.

Cons

- Balancing time between day-to-day job demands and adoption of SRE practices can be difficult.

Recommended for: Organizations without the scale to justify dedicated SRE team staffing, and/or organizations experimenting with SRE practices before broader adoption.

Types of SRE team implementations

1. Kitchen Sink, a.k.a. “Everything SRE”

This describes an SRE team where the scope of services or workflows covered is usually unbounded. It’s often the first (or only) SRE team in existence, and may grow organically, as it did when Google SRE first got started. We’ve since adopted a hybrid model, including the implementations listed below.

Pros

- No coverage gaps between SRE teams, given that only one team is in place.
- Easy to spot patterns and draw similarities between services and projects.
- SRE tends to act as a glue between disparate dev teams, creating solutions out of distinct pieces of software.

Cons

- There is usually a lack of an SRE team charter, or the charter states everything in the company as being possibly in scope, running the risk of overloading the team.
- As the company and system complexity grows, such a team tends to move from being able to have deep positive impact on everything to making a lot more shallow contributions. There are ways to mitigate this phenomenon without completely changing the implementation or starting another team (see tiers of service, below).
- Issues involving such a team may negatively impact your entire business.

Recommended for: A company with just a couple of applications and user journeys, where adoption of SRE practices and demand for the role has outgrown what can be staffed without a dedicated SRE team, but where the scope remains small enough that multiple SRE teams cannot be justified.

2. Infrastructure

These teams tend to focus on behind-the-scenes efforts that help make other teams’ jobs faster and easier.
Common implementations include maintaining shared services (such as Kubernetes clusters) or maintaining common components (like CI/CD, monitoring, IAM or VPC configurations) built on top of a public cloud provider like Google Cloud Platform (GCP). This is different from SREs working on services related to products, i.e., customer-facing code written in house.

Pros

- Allows product developers to use DevOps practices to maintain user-facing products without divergence in practice across the business.
- SREs can focus on providing a highly reliable infrastructure. They will often define production standards as code and work to smooth out any sharp edges to greatly simplify things for the product developers running their own services.

Cons

- Depending on the scope of the infrastructure, issues involving such a team may negatively impact your entire business, similar to a Kitchen Sink implementation.
- Lack of direct contact with your company’s customers can lead to a focus on infrastructure improvements that are not necessarily tied to the customer experience.
- As the company and system complexity grows, you may be required to split the infrastructure teams, so the cons related to product/application teams apply (see below).

Recommended for: Any company with several development teams, since you are likely to have to staff an infrastructure team (or consider doing so) to define common standards and practices. It is common for large companies to have both an infrastructure DevOps team and an SRE team. The DevOps team will focus on customizing FLOSS and writing their own software (think features) for the application teams, while the SRE team focuses on reliability.

3. Tools

A tools-only SRE team tends to focus on building software to help their developer counterparts measure, maintain, and improve system reliability or any other aspect of SRE work, such as capacity planning. One can argue that tools are part of infrastructure, so the SRE team implementations are the same.
It’s true that these two types of teams are fairly similar. In practice, tools teams tend to focus more on support and planning systems that have a reliability-oriented feature set, as opposed to shared back ends on the serving path that are normally associated with infrastructure teams. As a side effect, there’s often more direct feedback to infrastructure SRE teams; a tooling SRE team runs the risk of solving the wrong problems for the business, so it needs to work hard to stay aware of the practical problems of the teams tackling front-line reliability.

The pros and cons of infrastructure and tools teams tend to be similar. Additionally, for tools teams:

Cons

- You need to make sure that a tools team doesn’t unintentionally turn into an infrastructure team, and vice versa.
- There’s a high risk of an increase in toil and overall workload. This is usually contained by establishing a team charter that’s been approved by your business leaders.

Recommended for: Any company that needs highly specialized reliability-related tooling that’s not currently available as FLOSS or SaaS.

4. Product/application

In this case, the SRE team works to improve reliability of a critical application or business area, but the reliability of ancillary services such as batch processors is the sole responsibility of a different team, usually developers covering both dev and ops functions.

Pros

- Provides a clear focus for the team’s effort and allows a clear link from business priorities to where team effort is spent.

Cons

- As the company and system complexity grows, new product/application teams will be required. The product focus of each team can lead to duplication of base infrastructure or divergence of practices between teams, which is inefficient and limits knowledge sharing and mobility.

Recommended for: As a second or nth team for companies that started with a Kitchen Sink, infrastructure, or tools team and have a key user-facing application with high reliability needs that justifies the relatively large expense of a dedicated set of SREs.

5. Embedded

These SRE teams have SREs embedded with their developer counterparts, usually one per developer team in scope. Embedded SREs usually share an office with the developers, but the embedded arrangement can be remote. The work relationship between the embedded SRE(s) and developers tends to be project- or time-bounded. During embedded engagements, the SREs are usually very hands-on, performing work like changing code and configuration of the services in scope.

Pros

- Enables focused SRE expertise to be directed to specific problems or teams.
- Allows side-by-side demonstration of SRE practices, which can be a very effective teaching method.

Cons

- It may result in lack of standardization between teams, and/or divergence in practice.
- SREs may not have the chance to spend much time with peers to mentor them.

Recommended for: This implementation works well to either start an SRE function, or to scale another implementation further. When you have a project or team that needs SRE for a period of time, this can be a good model. This type of team can also augment the impact of a tools or infrastructure team by driving adoption.

6. Consulting

This implementation is very similar to the embedded implementation described above. The difference is that consulting SRE teams tend to avoid changing customer code and configuration of the services in scope.

Consulting SRE teams may write code and configuration in order to build and maintain tools for themselves or for their developer counterparts.
If they are performing the latter, one could argue that they are acting as a hybrid of consulting and tools implementations.

Pros

- It can help with further scaling an existing SRE organization’s positive impact by being decoupled from directly changing code and configuration (see also influence on reliability standards and practices below).

Cons

- Consultants may lack sufficient context to offer useful advice.
- A common risk for consulting SRE teams is being perceived as hands-off (i.e., little incurred risk), given that they typically don’t change code and configuration, even though they are capable of having indirect technical impact.

Recommended for: We’d recommend waiting to staff a dedicated SRE team of consultants until your company or complexity is considered to be large, and when demands have outgrown what can be supported by existing SRE teams of other various implementations. Keep in mind that we recommend staffing one or a couple of part-time consultants before you staff your first SRE team (see above).

Common modifications of SRE team implementations

We’ve seen two common modifiers to most of the implementations described above.

1. Reliability standards and practices

An SRE team may also act as a “reliability standards and practices” group for an entire company. The scope of standards and practices may vary, but usually covers how and when it’s acceptable to change production systems, incident management, error budgets, etc. In other words, while such an SRE team may not interact with every service or developer team directly, it’s often the team that establishes what’s acceptable elsewhere within their area of expertise.
We’ve seen adoption of such standards and practices approached in two different ways:

- Influence relies on mostly organic adoption and showing teams how these standards and practices can help them achieve their goals.
- Mandates rely on organizational structure, processes, and hierarchy to drive adoption of reliability standards and practices.

The effectiveness of mandates varies based on the organizational culture combined with the SRE team’s experience, seniority, and reputation. A mandated approach may be effective in an organization where strict processes are already expected and common in other areas, but is highly unlikely to succeed in an organization where individuals are given high levels of autonomy. In either case, a brand new team, even if composed of experienced individuals, is likely to have more difficulty establishing company-wide standards than a team with a history and reputation of achieving high reliability through strong practices.

We’ve also observed software development to be an effective tool for balancing these approaches. In this case, the SRE team develops a zero-configuration approach, where one or more reliability standards and practices can be adopted with zero additional setup cost, if the service or target team happens to be using a predetermined system. Once they see the benefits (typically time savings) that they can achieve by using that system, development teams are influenced to adopt the practices through the provided tooling. As adoption of such a system grows, the approach can then shift to target improvements for SREs and set mandates through reliability-related conformance tests.

2. Tiers of service

Regardless of which SRE team model defines the scope of the team, any SRE team also has a decision to make about the depth of their engagement with the software and services within their area. This is particularly true when there are more development teams, applications, or infrastructure than can be fully supported by the SRE team.
A common approach to addressing this challenge is to offer tiers of SRE engagement. Doing so expands the binary approach of “not in scope for us or not yet seen by SRE” and “fully supported by SRE” by adding at least one more tier in between those two options. A common characteristic of a binary approach is that “fully supported by SRE” generally means that a given service or workflow is jointly owned by SRE and developers, including on-call duties, after some onboarding process. Unfortunately, an SRE team, or any other team, tends to reach a limit in terms of how many services they can fully onboard. As the architectural variety and complexity of services increase, cognitive load rises and memory recall suffers.

Here’s an example of a tiered approach to SRE:

- Tier 0: Sporadic consulting work, no dedicated SRE staffing.
- Tier 1: Project work, some dedicated SRE time.
- Tier 2: The service is onboarded (or onboarding) for on-call, and receives more dedicated SRE time.

The implementation details of the tiers vary based on the actual SRE implementation itself. For example, consulting and embedded SRE teams aren’t generally expected to onboard services (as in go on call) at Tier 2, but may offer dedicated staffing (as opposed to shared staffing) in Tier 1. We recommend defining tiers of service in a document that’s been approved by SRE and developer leadership. This signoff is related to, but not the same as, documenting your team charter (mentioned above).

Beyond adopting tiers of service, there have been instances of a single SRE team adopting characteristics of multiple implementations. For instance, a single Kitchen Sink SRE team could also have two SRE consultants playing a dual role.

Common SRE paths

Your SRE organization may follow the implementations in the order above.
Another common path is to implement what’s described in “Before you begin,” then to staff a Kitchen Sink SRE team, but swap the order of infrastructure with product/application when it is time to start a second SRE team. In this scenario, the result is two specialized product/application SRE teams. This makes sense when there is enough product/application breadth but little to no shared infrastructure between both teams, other than hosted solutions such as the ones provided by Google Cloud.

A third common path is to move from “Before you begin” to an infrastructure (or even tools) team, skipping a Kitchen Sink and product/application phase. This approach makes the most sense when the application teams are able and willing to define and maintain SLOs.

We highly recommend evaluating both “Reliability standards and practices” and “Tiers of service” as early in the SRE process as possible, but that may be feasible only after you’ve established your first SRE team.

What should I do next?

If you are just starting your SRE practice, we recommend reading “Do you have an SRE team yet? How to start and assess your journey,” and then assessing the SRE implementation that best suits your needs based on the information we shared above.

If you have been leading one or more SRE teams, we recommend describing their implementation in generic terms (similar to how we’ve discussed team implementations above), evaluating the pros and cons based on your own experience, and making sure the SRE team’s goals and scope are defined through a team charter document. This exercise may help you avoid overload and premature reorganizations.

If you’re a GCP customer and would like to request Customer Reliability Engineering (CRE) involvement, contact your account manager to apply for this program. Of course, SRE is a methodology that will work with a variety of infrastructures, and using Google Cloud is not a prerequisite for pursuing this set of engineering practices.
We wish you a happy SRE journey!

Thanks to Adrian Hilton, Betsy Beyer, Christine Cignoli, Jamie Wilkinson, and Shylaja Nukala, among others, for their contributions to this post.

Source: Google Cloud Platform