Gartner names Google Cloud a leader in its IaaS Magic Quadrant

For the second consecutive year, Gartner has named Google Cloud a Leader in the 2019 Gartner Cloud Infrastructure as a Service Magic Quadrant. Enterprises rely on research firms like Gartner to help them evaluate and compare cloud providers, and we're thrilled by the recognition.

Here at Google Cloud, our goal is to be the easiest cloud for enterprises to do business with. We've committed to dramatically expanding our sales and support teams, and we have made meaningful changes to our contracting and discounting practices. We're also working closely with key ISVs and service providers to ensure that running enterprise workloads on Google Cloud Platform (GCP) is a seamless, satisfying experience.

Customers also choose GCP for its differentiated technology. Here's a sampling.

High-performance global scale that's highly reliable

At Google Cloud, we've worked hard to build infrastructure that organizations can count on, wherever they choose to deploy their workloads. Gartner also calls out our innovative Customer Reliability Engineering team: engineers trained in Google's rigorous Site Reliability Engineering (SRE) processes who teach customers how to run applications in a way that maximizes uptime while still encouraging innovation.

Leading data analytics and machine learning

Running applications is important, but you also need to make sense of the data they generate. Google Cloud has highly differentiated offerings in data analytics and machine learning. BigQuery, for instance, is our hyper-scalable, serverless hosted data warehouse that lets you query data through a familiar SQL-like interface to meet your enterprise data analytics needs. We're also extending BigQuery with easy-to-use ML capabilities, so you can apply AI to your existing data sets without having to hire a team of data science PhDs.

Open source for the enterprise

Many Google Cloud IaaS offerings benefit from our innovation in containers, networking and automation. While some customers choose GCP to build cloud-native applications, this year we'll bring open-source automation and scalability directly to the enterprise with Anthos, which takes the best of open source (Kubernetes, Istio, Knative) to help enterprises modernize any application and run it wherever they see fit: in our cloud, on-premises, or even in third-party clouds.

A cloud for the enterprise

Enterprises choose Google Cloud for all kinds of reasons. UPS uses Google Cloud data analytics and machine learning to optimize its package routing software, helping it deliver 21 million packages in 220 countries every single day. Whirlpool uses G Suite to help its 92,000 employees around the world collaborate and innovate in real time. McKesson chose Google Cloud as its preferred cloud provider, using our Cloud Healthcare API to enhance its applications and to modernize its SAP environment.

Learn more about what sets Google Cloud apart, and how you can use it to transform your business. You can also download a complimentary copy of the 2019 Gartner Infrastructure as a Service Magic Quadrant on our website.

Gartner does not endorse any vendor, product or service depicted in its research publications, and does not advise technology users to select only those vendors with the highest ratings or other designation. Gartner research publications consist of the opinions of Gartner's research organization and should not be construed as statements of fact. Gartner disclaims all warranties, expressed or implied, with respect to this research, including any warranties of merchantability or fitness for a particular purpose.

Gartner Magic Quadrant for Cloud Infrastructure as a Service, Worldwide, Raj Bala, Bob Gill, Dennis Smith, David Wright, 16 July 2019.

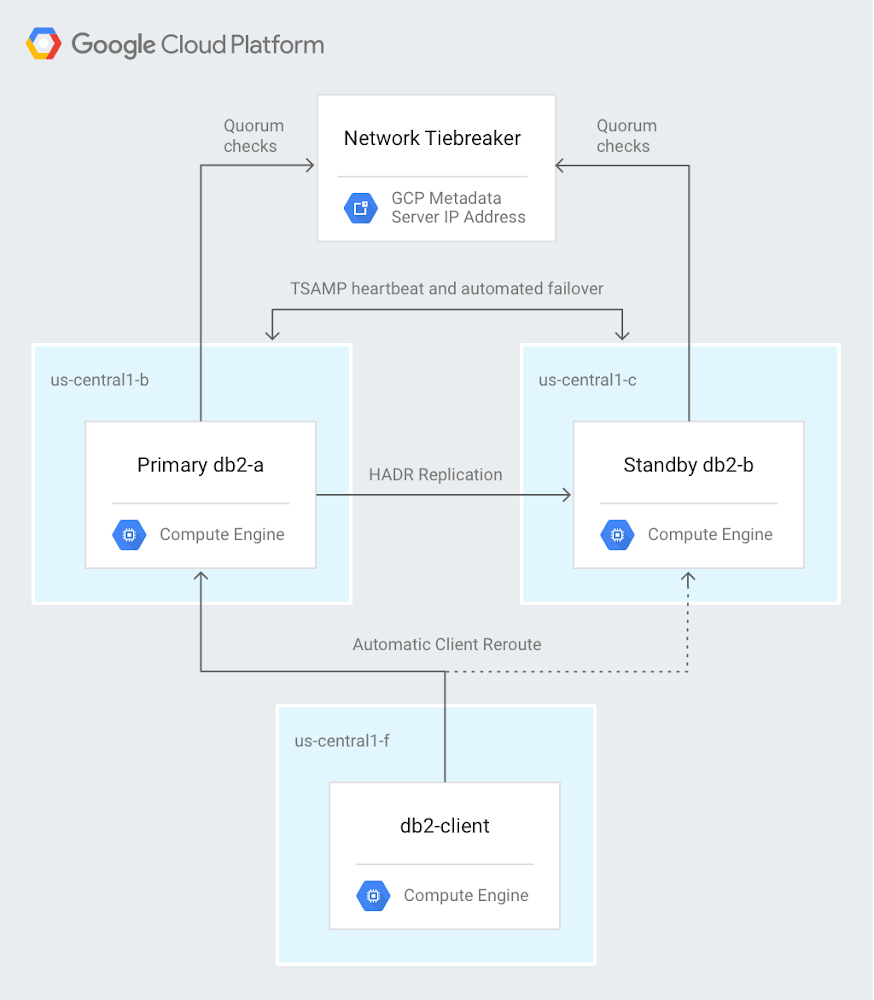

Source: Google Cloud Platform