How Loblaw turns to technology to support Canadians during COVID-19

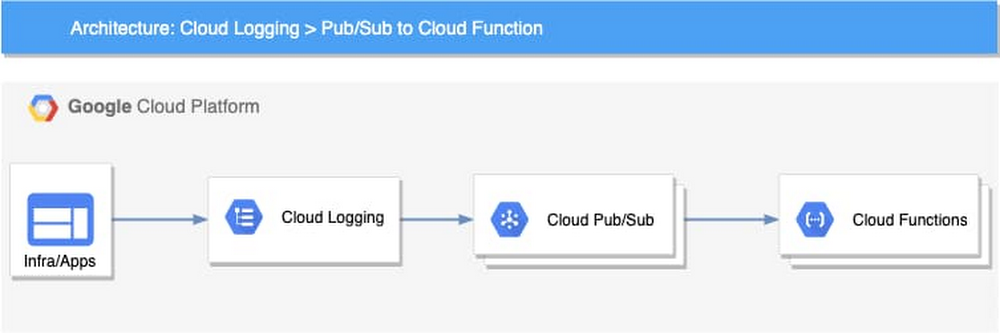

The impact of COVID-19, and the shift in how we work, live, and shop, has tested every industry, and retailers in the grocery space are no exception. While hospitals and local governments have crisis plans for scenarios like the one we are experiencing, few grocery store operators had fully contemplated the implications of a global pandemic.

Although traditional grocers had seen new, online, digital-native competitors enter the market before COVID-19, a majority of consumers still relied on physical grocery stores to purchase their household essentials. For these traditional grocers, online grocery sales were minimal compared to brick-and-mortar sales, which led many to focus their IT attention on in-store experiences. However, due to the pandemic, consumers’ online grocery spend increased from 1.5% to 9% on average in Canada alone.

The drastic and immediate shift to online has created immense strain on grocers, from employee and community safety issues, to handling surges in online traffic that can crash websites, to struggling with inventory and order fulfilment. Some have weathered this new reality better than others, thanks to a variety of technologies that improve efficiency and help keep employees and customers safe.

Meeting unprecedented online demand

Loblaw is a great example of a grocer using technology to support its community and protect its employees, while also growing its business. A 100-year-old company, Loblaw is the largest grocery and retail pharmacy chain in Canada, with approximately 200,000 employees and more than 18 million shoppers active in its loyalty program. When COVID-19 first hit North America, Loblaw was one of a few grocery chains in Canada offering the ability to order online and pick up groceries the same day. As more shoppers moved their shopping online, the company shifted quickly to meet the rising demand.
As online traffic and order volumes reached unprecedented levels, the performance of Loblaw’s online grocery websites began to strain under the load. Google Cloud then activated its BFCM (Black Friday Cyber Monday) protocols, including a dedicated war room with Loblaw Digital’s Technology team, where engineers from both companies worked side by side to quickly adjust the Loblaw platform and ensure an uninterrupted experience for shoppers.

Together, Loblaw Digital and Google Cloud quickly stabilized the platform as online traffic settled at a level that now seems to be the new normal. According to Hesham Fahmy, VP of Technology at Loblaw Digital, “Loblaw’s systems are operating at a scale comparable to other large global e-commerce retailers.”

Automating fulfilment with Takeoff

While Google was working to scale Loblaw’s e-commerce system, the grocer was also searching for new ways to improve the fulfilment process and keep up with order volume. The Loblaw Digital PC Express team took several steps to reduce the bottleneck, such as hiring thousands of new personal shoppers, adding thousands of pickup slots every week, and introducing new technology to increase capacity across the country.

Fortunately, Loblaw was in the process of rolling out its first Micro Fulfillment Center (MFC) with Google Cloud partner Takeoff Technologies. Takeoff’s MFC is essentially a small-scale automation and fulfillment solution placed within an existing storefront, in a space that could be as small as two or three grocery aisles. The MFC uses a robotic racking system and cloud and AI technology, powered by Google Cloud, to store, pack, and fulfill orders. The efficiency of automation helps to drastically reduce what’s known as “last mile” costs by keeping products as close to the customer as possible. While the MFC implementation had been in progress for nearly a year, its completion couldn’t have come at a better time.
The new technology opens up additional availability for orders and has the capacity to support order volume for multiple PC Express locations in close proximity. To get the MFC up and running ahead of schedule, Waltham, Mass.-based Takeoff dispatched employees to Canada armed with webcams and Google Meet, Google’s premium video conferencing solution, to handle the last steps of go-live. This process would normally take more than 12 employees, but Takeoff only needed to send two. After two weeks of self-quarantine in Canada, the employees collaborated with their team back at home via Google Meet to ensure an effective rollout. With the MFC in place, colleagues are able to pick and pack items faster than they could manually.

As José V. Aguerrevere and Max Pedró, co-founders of Takeoff, put it: “It’s a great example of how automation can help support employee workloads to alleviate a time-consuming and costly process. This type of hyperlocal automation will help local firms not only survive, but thrive. In the long-run it also has the potential to lower food prices, decrease the footprint of stores, and feed data back to suppliers to reduce food and packaging waste, which could eventually help our planet.”

Takeoff’s Chief Technology Officer, Zach LaValley, elaborated: “Google has allowed us to shine, particularly in our recent launch with Loblaw; from their solution architecture partnership during the implementation phase, to the stability and reliability of their cloud platform, to the ease of using Google Meet to remotely launch a new site. We have an ambitious mission to transform the grocery industry, and our services have never been more vital.
Google provides the reliability, scalability, and global perspective we need in order to provide the top-tier service our retail partners deserve and need at this time.”

The Loblaw site is Takeoff’s first Canadian facility to go live. Takeoff’s technology, built on Google Cloud’s scalable platform, is expected to be live in 53 retail chains across the United States, Australia, New Zealand, and the Middle East by the end of 2020.

Building on a Google Cloud foundation

The technology groundwork laid by Loblaw Digital has enabled the company to respond quickly to sudden shifts in shopping dynamics. For the last two years, Google Cloud and Loblaw Digital have been working hand in hand on a broader digital roadmap that started with PC Express and expanded to include a new marketplace for pet supplies, toys, baby essentials, home decor, and other items that aren’t available in brick-and-mortar stores. Along the way, Loblaw Digital has become more efficient at building new online platforms using Google Cloud as the foundation. PC Express was completed in less than six months, while Loblaw’s new marketplace was up and running in just weeks. The teams are now consolidating multiple data sources in Google Cloud, which will give them the ability to look across their data and find new ways to serve customers.

“In the matter of days, our online traffic spiked four-fold,” said Sharon Lansing, Vice President, Online Grocery. “During this time, it was critical that our teams were able to find ways to better serve our customers and ensure that we were able to deliver that service quickly.”

Loblaw’s foresight and investments in technology enabled the company to react and adapt quickly to COVID-19. To learn more, read the Loblaw case study.

Source: Google Cloud Platform