How Revionics brings advanced analytics to retailers with help from Google Cloud

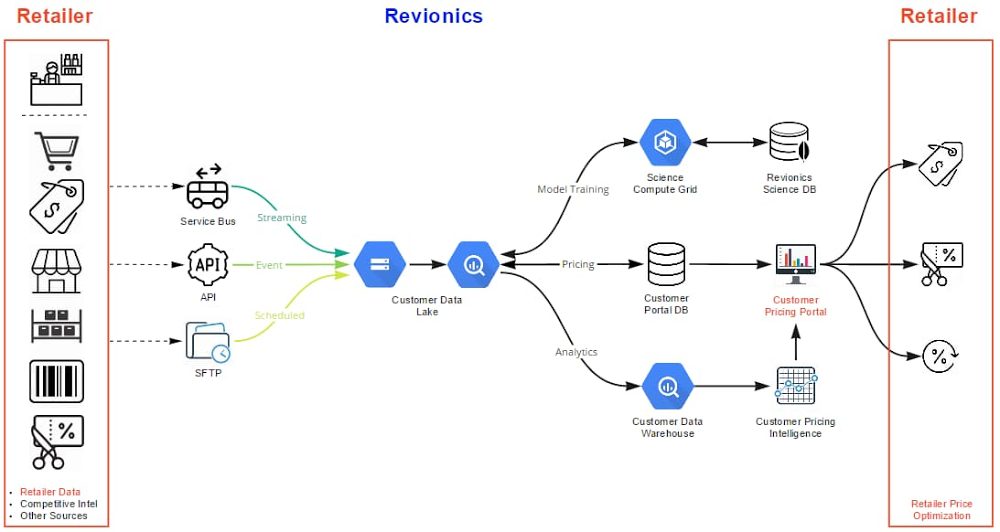

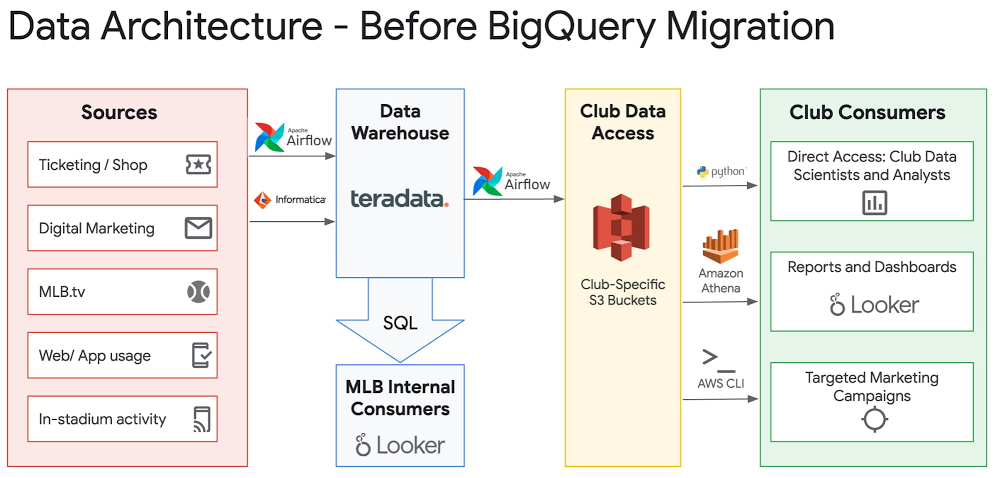

Editor’s note: Today we’re hearing from Revionics, a lifecycle pricing platform that gives retailers the confidence to ask the “what ifs” and “why nots” that result in profitable pricing strategies. In this blog, the Revionics team shares their cloud data warehouse migration journey and the lessons learned along the way.

At Revionics, we’ve been in the data analytics business for nearly two decades, serving retail customers who wanted to leverage their data to drive their pricing strategies. At the company’s founding in 2002, our advanced AI models were probably the most complex in commercial use. Out of necessity, Revionics built the advanced technology infrastructure to handle the data volume and processing for our AI models. Fast forward to today, and cloud infrastructure has finally caught up. The state of current cloud computing infrastructure has opened up new avenues of data exploration for our teams and customers.

Researching cloud options

From the beginning, we didn’t just want to solve known problems. We wanted to improve our overall infrastructure to become more nimble to support our customers’ needs, today and in the future. Just like our AI models help retailers focus on what is possible, we need infrastructure that enables our data scientists to create that vision.

We had been using on-premises Teradata appliances, and the parallelism and performance had worked well for us. But appliances have hardware and software restrictions that made it hard for us to share data and seamlessly scale. They’re not elastic, and couldn’t offer us the space we needed. We had maxed out the physical storage capacity of the appliances themselves, and we were rapidly approaching the time where we would need to renew the hardware to upgrade and expand performance and capacity. The space required for processing resulted in access constraints to the latest analytical results, impacting decision making based on current information.
Replacing the appliances with newer ones could not, on its own, solve our elasticity and data access problems, so we started exploring cloud options. In addition, many of our tech-savvy customers have in-house data analytics experts, and we wanted to offer them plug-and-play capabilities on our data warehouse infrastructure. That way, they could dig into their data and respond to market shifts accordingly. Once we learned more about current cloud options, we could visualize many ways to drive our business forward. For example, the elasticity and amount of storage available could let us really accelerate our product development and overall customer success.

Cloud migration decisions

Partnering with Google Cloud, we adopted BigQuery. For each client, we built a data lake and a complete structured data warehouse, so every client’s data is isolated and securely accessible to meet client queries. The scale that BigQuery brought became essential when the COVID-19 pandemic wrought huge, overnight changes for our retail clients, who needed to meet unexpected demand while keeping their employees and customers safe. We were able to rapidly update our AI pricing models with our customers’ most current data and give them clarity to make the right pricing decisions.

We migrated our Teradata SQL to BigQuery SQL, which kept the migration fast. Converting Teradata DDL to BigQuery DDL was straightforward. We encountered a few challenges in our view queries due to differences between the SQL dialects, but this also gave us an opportunity to learn how BigQuery works.

We’re a lean company, so we needed to move data efficiently without a lot of manual work. Our DevOps team helped us build tools so we could create script templates for different projects and customer datasets. For us, it was faster to redeploy our own tools than to learn new ones.
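The post does not show the script templates themselves. As a rough illustration of the idea only, the sketch below renders one BigQuery `CREATE SCHEMA` statement per customer dataset from a single template. Everything named here is an assumption made for the example: the `DATASET_DDL` template, the `render_customer_ddl` helper, the `example-project` and `acme` identifiers, and the `lake`/`warehouse` naming scheme are hypothetical and are not Revionics’ actual tooling.

```python
from string import Template

# Hypothetical template: the "<customer>_<layer>" dataset naming scheme is an
# assumption for illustration, not the naming Revionics actually uses.
DATASET_DDL = Template(
    "CREATE SCHEMA IF NOT EXISTS `${project}.${customer}_${layer}`;"
)


def render_customer_ddl(project, customer, layers=("lake", "warehouse")):
    """Return one CREATE SCHEMA statement per layer for a single customer."""
    return [
        DATASET_DDL.substitute(project=project, customer=customer, layer=layer)
        for layer in layers
    ]


if __name__ == "__main__":
    # Render the statements for one hypothetical customer.
    for statement in render_customer_ddl("example-project", "acme"):
        print(statement)
```

Keeping the statement text in one template means onboarding a new customer only requires new parameter values, which matches the goal of avoiding manual work per dataset.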
We had a lot of practical conversations discussing our options, and ultimately we did what was least disruptive to our customers and provided the greatest continuity for operational management, while leveraging select services available in Google Cloud.

Revionics puts customers first in all that we do. Ensuring they were not impacted during the migration was a top priority, and we worked with our customers closely throughout the process. We migrated following retail’s busiest season, and we carefully performed our due diligence to internally sync and enable our customer go-live in January 2020. Google Cloud helped support our no-downtime migration and ensure business continuity.

Migration learnings and lessons

Migrating our reports to run on BigQuery required some planning. We primarily did a lift-and-shift migration to minimize the total changes involved; all we had to do was point our existing metadata model to BigQuery. We made adjustments to some data types where necessary, but most of the reports did not need to be updated. However, some queries are generated differently for BigQuery, so we took the opportunity to improve the design and performance of selected reports. As an example, our reports sit on views in a separate dataset, and we were able to simply move logic upstream, leveraging a few of the unique capabilities of BigQuery to improve runtime performance.

For a successful migration, it helps to establish standards and adopt production tools early. We migrated chunks of the data model at a time, allowing us to test and improve throughout the project. We also took immediate advantage of several BigQuery database capabilities, such as partitioning and clustering. For us, it was the right move and enabled a fast transition. Our next step is to improve our data model.
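To make the partitioning and clustering mentioned above concrete, here is a minimal sketch that builds a BigQuery `CREATE TABLE` statement with `PARTITION BY` and `CLUSTER BY` clauses. The table name, the column names, and the `partitioned_table_ddl` helper are hypothetical and chosen only for illustration; the post does not describe the actual schema. The sketch assumes the table is partitioned directly on a `DATE` column.

```python
def partitioned_table_ddl(table, columns, partition_by, cluster_by):
    """Build a BigQuery CREATE TABLE statement with partitioning and clustering.

    `columns` is a list of (name, type) pairs. `partition_by` must name one of
    those columns (assumed here to be a DATE column), and `cluster_by` lists
    the clustering columns in priority order.
    """
    # BigQuery accepts at most four clustering columns per table.
    if len(cluster_by) > 4:
        raise ValueError("BigQuery allows at most four clustering columns")

    # Catch typos early: every referenced column must be declared.
    names = {name for name, _ in columns}
    missing = [c for c in [partition_by, *cluster_by] if c not in names]
    if missing:
        raise ValueError(f"unknown columns: {missing}")

    column_lines = ",\n".join(f"  {name} {dtype}" for name, dtype in columns)
    return (
        f"CREATE TABLE `{table}` (\n{column_lines}\n)\n"
        f"PARTITION BY {partition_by}\n"
        f"CLUSTER BY {', '.join(cluster_by)};"
    )


if __name__ == "__main__":
    # Hypothetical sales table, partitioned by day and clustered by store and product.
    print(
        partitioned_table_ddl(
            "example-project.acme_warehouse.sales",
            [
                ("sale_date", "DATE"),
                ("store_id", "STRING"),
                ("product_id", "STRING"),
                ("units", "INT64"),
            ],
            partition_by="sale_date",
            cluster_by=["store_id", "product_id"],
        )
    )
```

Partitioning on the date column lets queries that filter on a date range skip unrelated partitions, while clustering co-locates rows that share the same store and product values within each partition.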
There are changes we can make under the covers that are impactful, such as changes to views and to the interface between BigQuery and our reporting platform. Composer and Airflow both came in handy for our data load process. We built extract pipelines that move data from our SQL Servers to Cloud Storage and then load it into BigQuery, all executed through Composer. We also take full advantage of Google Cloud’s built-in monitoring and logging tools (formerly known as Stackdriver).

On the other side of migration

Today, the infrastructure we’ve created with Google Cloud has helped address the immediate needs we had, and provides the foundation for Revionics to solve new and interesting problems. We’re opening new doors, and Google Cloud has helped improve how we operate our infrastructure, forecast growth, and manage costs.

Data access: Moving data to construct new analytics had at times been a slow, unwieldy process that could require days of copying and processing. Before our cloud migration, end users who wanted to analyze any one customer’s data often ran into roadblocks moving that data off the SQL Server instance. Now, all of a client’s data is securely co-located in BigQuery, enabling immediate access for customer-specific analysis without impacting production operations in any way. Data processing happens in seconds and minutes rather than hours and days, and users don’t have to move data at all.

Security: We’d been used to focusing on security, at times sacrificing usability out of necessity. With Google Cloud, we use Google’s built-in encryption at rest and in transit without any impact on usability, and with zero configuration or management needed. We’ve improved our security footprint, lowered our management overhead, and improved performance significantly. Additionally, BigQuery makes it simple to triage issues, and we’ve gained significant efficiency in the way we find and solve them. The time spent triaging customer questions has been reduced dramatically.
Seeing the business impact

When we first started almost twenty years ago, retailers would set prices for all their products once a year. As retailers adopted our AI-based pricing models, Revionics introduced the ability to automatically model prices every week, and optimize on demand. We have now set the foundation to enable even more advanced modeling and optimization techniques, and are able to model at a deeper and more granular level than ever before, for orders of magnitude greater data volumes, all while improving our processing times. With this new functionality, we will enable retailers to update prices at the speed of their business, providing the ability to test “what ifs” and run pricing scenarios in minutes.

Our data scientists can access far more data than they could before, at speed, and perform data modeling at the transactional level. We’re now able to create the models we’ve been wanting to create for years, models that are both broader and deeper in detail. This capability is a pillar for Revionics and has helped speed up our product development and unleashed our data scientists. For our customers, this means that we can continually stay ahead of the complexity of modern retail environments, and this new scalability means we can respond to them immediately and help them adapt quickly.

Earlier tools didn’t allow many of our teams to self-serve their analytics needs, but with access to BigQuery, they’re able to do analytics work on their own. From a support angle, that’s been beneficial. Custom reporting requests that used to take hours are now available immediately and securely for the end user through BigQuery.

Combined, this has opened up exciting new roadmap possibilities. We’re looking at improving how we give our customers access to their data and exploring new and intriguing visualizations, all while leveraging the built-in global access and security, giving us a lot of capabilities and potential products.
When it comes to the cloud, there’s a lot to learn. If you’re just getting started, we recommend that you master what you can and don’t try to learn everything all at once. To help, allow your teams to explore, then identify the most critical functional and non-functional requirements and stay focused on those to prevent scope creep and help drive success in your own cloud adoption journey.

Learn more about Revionics.

Thanks for additional contributions from Clinton Pilgrim and Kausik Kannan.

Source: Google Cloud Platform