The best of Google Cloud Next ‘20: OnAir: Data Management Week for technical practitioners

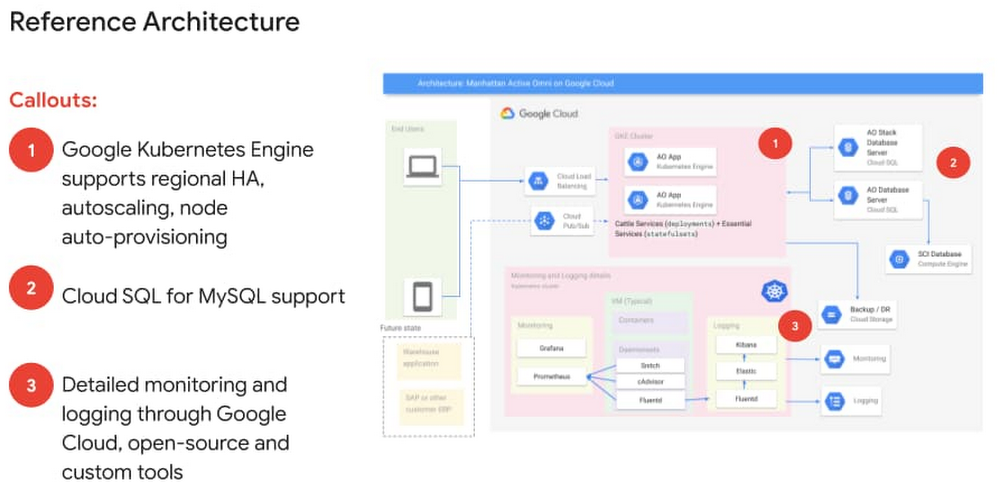

It’s Week 6 of Google Cloud Next ’20: OnAir, and this week we’re covering all things databases, from running your favorite open source database with Cloud SQL to building new enterprise solutions using Cloud Spanner. We have a lot of great content to share with you this week, so let’s dig in.

Whatever database questions you have (Should I use SQL or NoSQL? Is it horizontally scalable and ready for disaster recovery?), you can get help answering them during this week’s sessions at Next OnAir and through some cool demos showing how to choose your database and how a high-availability setup works (I am very proud of our team’s creativity!).

After checking out the must-see sessions below, if you have questions, I’ll be hosting a live developer- and operator-focused recap and Q&A session this Friday, August 21, at 9 AM PT. Or join our APAC team for a recap on Friday at 11 AM SGT. Hope to see you then.

Personally, I am looking forward to sharing all the amazing things the Cloud SQL team did in the past year in this session:

- What’s New With Cloud SQL: So much good material, including the highly anticipated point-in-time recovery (PITR) for PostgreSQL.

Another session we couldn’t miss is this one, which is especially useful if you use Kubernetes and need a little help connecting the two products:

- Connecting to Cloud SQL from Kubernetes: Your application is scaling across all those nodes, so why not put your persistent data in Cloud SQL and take advantage of its high-availability setup?

Speaking of Kubernetes, we couldn’t forget the cloud-native databases either:

- Simplify complex application development using Cloud Firestore: Get a look at how the Firestore database service makes it easy for developers to scale new and existing applications while adding real-time client data synchronization and offline mode.
- Modernizing HBase workloads with Cloud Bigtable: See how to move from HBase, your preferred NoSQL database, to Bigtable.

And if you’re still not sure which is the right tool for you, check out these sessions:

- How to Choose the Right Database For Your Workloads: See the advantages of each technology according to your needs.
- Optimally Deploy an Application Cache with Memorystore: Caching everywhere, folks!

Also, this week’s Cloud Study Jam gives you an opportunity to participate in hands-on labs on using BigQuery tables across different locations and creating data transformation pipelines, so you can get real-world data management experience. You’ll also get a chance to learn how to prepare for Google Cloud’s Professional Data Engineer certification.

One thing to remember about databases is that there’s only so much you can solve with a single database, and Google Cloud has a broad set of tools to help you solve your data problems. For example, you may keep your single source of truth on a Cloud SQL instance but want to speed up your login and product catalog by adding a caching layer with Cloud Memorystore. Using these products together leads to better management and productivity, which is essential in a modern, fast-moving world.

Next OnAir is running now until Sep. 8. You can check out the full session catalog and register at g.co/cloudnext.

Source: Google Cloud Platform