Can machine learning make you a better athlete?

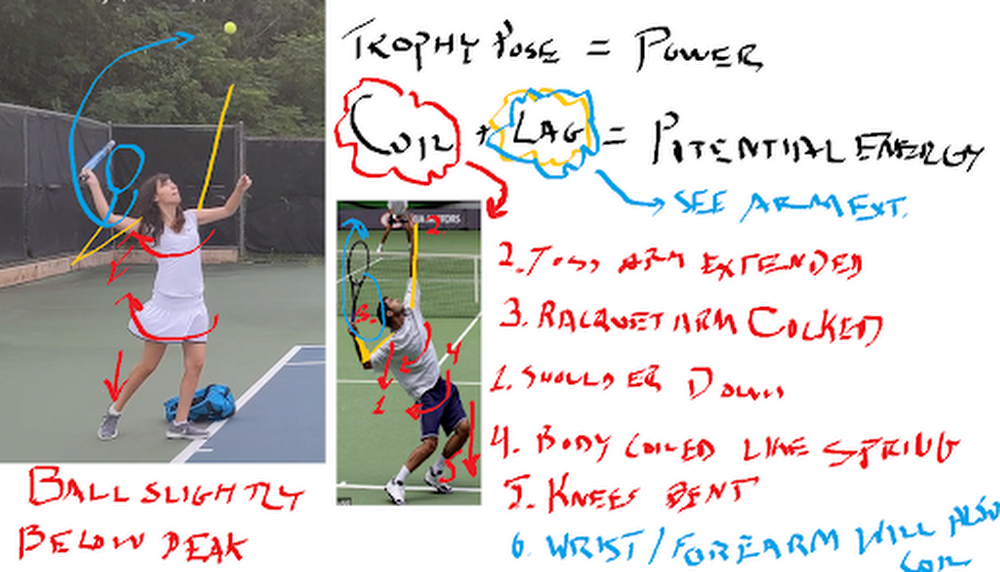

Ah, the Super Bowl. Or, as I prefer to say, the Superb Owl: that oh-so-American Sunday defined by infinite nachos, high-budget commercials, and memes that can last us half a decade. As an uncoordinated math geek, I can’t say I’ve ever had much connection to the “Football” part of the Super Bowl. That said, sports, data analytics, and machine learning make a powerful trio: most professional teams use this technology in one way or another, from tracking players’ moves to detecting injuries to reading numbers off players’ jerseys. And, for the less athletic of us, machine learning may even be able to help us improve our own skills.

Which is what we’ll attempt today. In this post, I’ll show you how to use machine learning to analyze your performance in your sport of choice (as an example, I’ll use my tennis serve, but you can easily adapt the technique to other games). We’ll use the Video Intelligence API to track posture, AutoML Vision to track tennis balls, and some math to tie everything together in Python.

Want to try this project for yourself? Follow along in the Qwiklab.

I give full credit for this idea to my fellow Googler Zack Akil, who used the same technique to analyze penalty kicks in soccer (sorry, “football”).

Using machine learning to analyze my tennis serve

To get started, I set out to capture some video data of my tennis serve. I went to a tennis court, set up a tripod, and captured some footage. Then I sent the clips to my tennis coach friend, who gave me some feedback that looked like this:

These diagrams were great because they analyzed key parts of my serve that differed from those of professional athletes. I decided to use this to home in on what my machine learning app would analyze:

- Were my knees bent as I served?
- Was my arm straight when I hit the ball?
- How fast did the ball actually travel after I hit it?
(This one was just for my personal interest.)

Analyzing posture with pose detection

To compute the angle of my knees and arms, I decided to use pose detection, a machine learning technique that analyzes photos or videos of humans and tries to locate their body parts. There are lots of tools you can use to do pose detection (like TensorFlow.js), but for this project, I wanted to try out the new Person Detection feature of the Google Cloud Video Intelligence API. (You might recognize this API from my AI-Powered Video Archive, where I used it to analyze objects, text, and speech in my family videos.) The Person Detection feature recognizes a whole bunch of body parts, facial features, and clothing. From the docs:

To start, I clipped the video of my tennis serves down to just the sections where I was serving. Since I only caught 17 serves on camera, this took me about a minute. Next, I uploaded the video to Google Cloud Storage and ran it through the Video Intelligence API. In code, that looks like:

To call the API, you pass the location in Cloud Storage where your video is stored, as well as a destination in Cloud Storage where the Video Intelligence API can write the results.

When the Video Intelligence API finished analyzing my video, I visualized the results using this neat tool built by @wbobeirne. It spits out neat visualization videos like this:

Pose detection makes a great pre-processing step for training machine learning models. For example, I could use the output of the API (the position of my joints over time) as input features to a second machine learning model that tries to predict (for example) whether or not I’m serving, or whether or not my serve will go over the net. But for now, I wanted to do something much simpler: analyze my serve with high school math!

For starters, I plotted the y position of my left and right wrists over time:

It might look messy, but that data actually shows pretty clearly the lifetime of a serve.
The blue line shows the position of my left wrist, which peaks as I throw the tennis ball a few seconds before I hit it with my racket (the peak in the right wrist, or orange line).

Using this data, I can tell pretty accurately at what points in time I’m throwing the ball and hitting it. I’d like to align that with the angle my elbow is making as I hit the ball. To do that, I’ll have to convert the output of the Video Intelligence API (raw pixel locations) to angles. How do you do that? Obviously using the Law of Cosines, duh! (Just kidding, I definitely forgot this and had to look it up. Here’s a great explanation of the Law of Cosines and some Python code.)

The Law of Cosines is the key to converting points in space to angles: for a triangle with sides a, b, and c, the angle C opposite side c satisfies c² = a² + b² − 2ab·cos(C). So if you know the positions of the shoulder, elbow, and wrist, you know all three side lengths and can solve for the angle at the elbow. In code, that looks something like:

Using these formulae, I plotted the angle of my elbow over time:

By aligning the height of my wrist and the angle of my elbow, I was able to determine the angle was around 120 degrees (not straight!). If my friend hadn’t told me what to look for, it would have been nice for an app to catch that my arm angle was different from professionals and let me know.

I used the same formula to calculate the angles of my knees and shoulders. (You can find all the details in the code.)

Computing the speed of my serve

Pose detection let me compute the angles of my body, but I also wanted to compute the speed of the ball after I hit it with my racket. To do that, I had to be able to track the tiny, speedy little tennis ball over time.

As you can see here, the tennis ball was sort of hard to identify because it was blurry and far away.

I handled this the same way Zack did in his Football Pier project: I trained a custom AutoML Vision model.

If you’re not familiar with AutoML Vision, it’s a no-code way to build computer vision models using deep neural networks. The best part is, you don’t have to know anything about ML to use it.

AutoML Vision lets you upload your own labeled data (i.e.
with labeled tennis balls) and trains a model for you.

Training an object detection model with AutoML Vision

To get started, I took a thirty-second clip of me serving and split it into individual pictures I could use as training data for a vision model:

    ffmpeg -i filename.mp4 -vf fps=10 -ss 00:00:01 -t 00:00:30 tmp/snapshots/%03d.jpg

You can run that command from within the notebook I provided, or from the command line if you have ffmpeg installed. It takes an mp4 and creates a bunch of snapshots (here at fps=10, i.e. 10 frames per second) as jpgs. The -ss flag controls how far into the video the snapshots should start (i.e. start “seeking” at 1 second) and the -t flag controls how many seconds should be included (30 in this case).

Once you’ve got all your snapshots created, you can upload them to Google Cloud Storage with the commands:

    gsutil mb gs://my_neat_bucket  # create a new bucket
    gsutil cp tmp/snapshots/* gs://my_neat_bucket/snapshots

Next, navigate to the Google Cloud console and select Vision from the left hand menu:

Create a new AutoML Vision Model and import your photos.

Quick recap: what’s a machine learning classifier? It’s a type of model that learns how to label things from example. So to train our own AutoML Vision model, we’ll need to provide some labeled training data for the model to learn from.

Once your data has been uploaded, you should see it in the AutoML Vision “IMAGES” tab:

Here, you can start applying labels. Click into an image. In the editing view (below), you’ll be able to click and drag a little bounding box:

For my model, I hand-labeled about 300 images, which took me ~30 minutes.
Once you’re done labeling data, it’s just one click to train a model with AutoML: just click the “Train New Model” button and wait.

When your model is done training, you’ll be able to evaluate its quality in the “Evaluate” tab below.

As you can see, my model was pretty darn accurate, with about 96% precision and recall.

This was more than enough to be able to track the position of the ball in my pictures, and therefore calculate its speed:

Once you’ve trained your model, you can use the code in this Jupyter notebook to make a cute little video like the one I plotted above.

You can then use this to plot the position of the ball over time, to calculate speed:

Unfortunately, I realized too late I’d made a grave mistake here. What is speed? Change in distance over time, right? But because I didn’t actually know the distance between me, the player, and the camera, I couldn’t compute distance in miles or meters, only pixels! So I learned I serve the ball at approximately 200 pixels per second. Nice.

So there you have it: some techniques you can use to build your own sports machine learning trainer app. And if you do build your own sports analyzer, let me know!
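
For anyone who wants to play with the math from this post outside the notebook, here is a minimal sketch of the two calculations described above: a joint angle via the Law of Cosines, and the ball's speed in pixels per second. This is not the notebook's code. The function names `joint_angle` and `pixel_speed`, the `(x, y)` point tuples, and the `(t, x, y)` track format are all assumptions made for illustration, and it assumes Python 3.8+ for `math.dist`.

```python
import math


def joint_angle(a, b, c):
    """Angle in degrees at point b, between the segments b->a and b->c.

    Each point is an (x, y) tuple. For an elbow, pass
    (shoulder, elbow, wrist). Assumes x and y use the same units
    (e.g. both in pixels); this layout is hypothetical.
    """
    ab = math.dist(a, b)  # e.g. upper arm length
    bc = math.dist(b, c)  # e.g. forearm length
    ac = math.dist(a, c)  # side opposite the joint
    if ab == 0 or bc == 0:
        raise ValueError("joint coincides with a neighboring point")
    # Law of Cosines: ac^2 = ab^2 + bc^2 - 2*ab*bc*cos(B)
    cos_b = (ab**2 + bc**2 - ac**2) / (2 * ab * bc)
    # Clamp to [-1, 1] to guard against floating-point drift.
    cos_b = max(-1.0, min(1.0, cos_b))
    return math.degrees(math.acos(cos_b))


def pixel_speed(track):
    """Average speed in pixels per second along a tracked path.

    `track` is a list of (t, x, y) samples sorted by time, with t in
    seconds and x, y in pixels (a hypothetical format, e.g. the center
    of each detected bounding box).
    """
    if len(track) < 2:
        raise ValueError("need at least two samples")
    # Sum the straight-line distance between consecutive samples.
    path = sum(math.dist(p[1:], q[1:]) for p, q in zip(track, track[1:]))
    elapsed = track[-1][0] - track[0][0]
    if elapsed <= 0:
        raise ValueError("samples must span a positive time interval")
    return path / elapsed
```

With this sketch, `joint_angle(shoulder, elbow, wrist)` returns 180 for a perfectly straight arm and smaller values as the elbow bends. If your landmark coordinates are normalized to the frame rather than given in pixels, you would likely want to scale x by the frame width and y by the frame height first, so the angle isn't distorted by the aspect ratio.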

Source: Google Cloud Platform