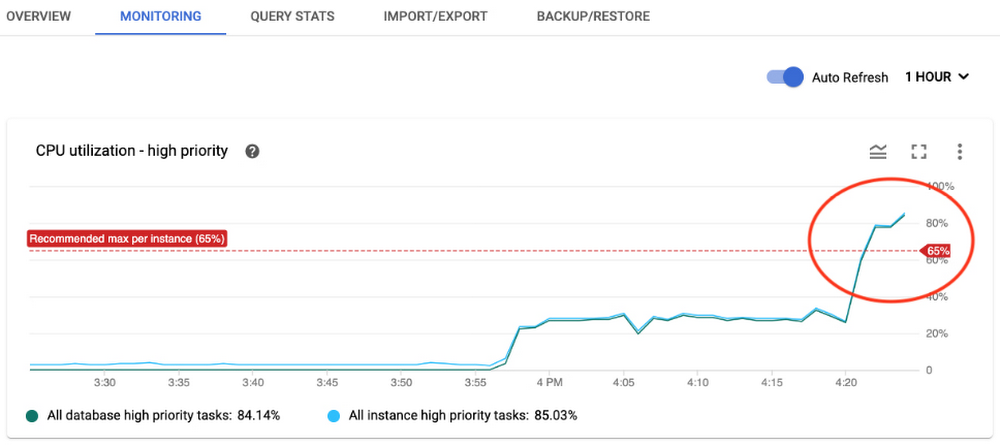

To serve the various workloads that you might have, Google Cloud offers a selection of managed databases. In addition to partner-managed services, including MongoDB, Cassandra by DataStax, Redis Labs, and Neo4j, Google Cloud provides a series of managed database options: Cloud SQL and Cloud Spanner for relational use cases, Firestore and Firebase for document data, Memorystore for in-memory data management, and Cloud Bigtable, a wide-column, key-value database that can scale horizontally to support millions of requests per second with low latency.

Fully managed cloud databases such as Cloud Bigtable enable organizations to store, analyze, and manage petabytes of data without the operational overhead of traditional self-managed databases. Even with all the cost efficiencies that cloud databases offer, as these systems continue to grow and support your applications, there are additional opportunities to optimize costs.

This blog post reviews the billable components of Cloud Bigtable, discusses the impact various resource changes can have on cost, and introduces several high-level best practices that may help manage resource consumption for your most demanding workloads. (In later posts, we’ll discuss optimizing costs while balancing performance trade-offs using methods and best practices that apply to organizations of all sizes.)

Understand the resources that contribute to Cloud Bigtable costs

The cost of your Bigtable instance is directly correlated to the quantity of consumed resources. Compute resources are charged according to the amount of time the resources are provisioned, whereas for network traffic and storage, you are charged by the quantity consumed.

More specifically, when you use Cloud Bigtable, you are charged according to the following:

Nodes

In Cloud Bigtable, a node is a compute resource unit.
As the node count increases, the instance is able to respond to a progressively higher request (write and read) load, as well as serve an increasingly larger quantity of data. Node charges are the same regardless of whether an instance's clusters store data on solid-state drives (SSD) or hard disk drives (HDD). Bigtable keeps track of how many nodes exist in your instance clusters during each hour. You are charged for the maximum number of nodes during that hour, according to the regional rates for each cluster. Nodes are priced per node-hour; the unit price is determined by the cluster region.

Data storage

When you create a Cloud Bigtable instance, you choose the storage type, SSD or HDD; this cannot be changed afterward. The monthly charge is calculated from the average storage used over a one-month period. Since data storage costs are region-dependent, there will be a separate line item on your bill for each region where an instance cluster has been provisioned.

The underlying storage format of Cloud Bigtable is the SSTable, and you are billed only for the compressed disk storage consumed by this internal representation. This means that you are charged for the data as it is compressed on disk by the Bigtable service. Further, all data in Google Cloud is persisted in the Colossus file storage system for improved durability. Data storage is priced in binary gigabytes (GiB) per month; the storage unit price is determined by the deployment region and the storage type, either SSD or HDD.

Network traffic

Ingress traffic, or the quantity of bytes sent to Bigtable, is free. Egress traffic, or the quantity of bytes sent from Bigtable, is priced according to the destination. Egress to the same zone and egress between zones in the same region are free, whereas cross-region egress and inter-continent egress incur progressively increasing costs based on the total quantity of bytes transferred during the billing period.
Egress traffic is priced in GiB sent.

Backup storage

Cloud Bigtable users can readily initiate, within the bounds of project quota, managed table backups to protect against data corruption or operator error. Backups are stored in the zone of the cluster from which they are taken, and will never be larger than the size of the archived table. You are billed according to the storage used and the duration of the backup between backup creation and removal, via either manual deletion or an assigned time-to-live (TTL). Backup storage is priced in GiB per month; the storage unit price depends on the deployment region but is the same regardless of the instance storage type.

Understand what you can adjust to affect Bigtable cost

As discussed, the billable costs of Cloud Bigtable are directly correlated to the compute nodes provisioned as well as the storage and network resources consumed over the billing period. Thus, it is intuitive that consuming fewer resources will result in reduced operational costs.

At the same time, reducing resource consumption has performance and functional implications that require consideration. Any effort to reduce the operational cost of a running, database-dependent production system is best undertaken alongside an assessment of the necessary development or administrative effort and an evaluation of potential performance tradeoffs. Some resource consumption rates can be changed easily, others require application or policy changes, and the remainder can only be changed by completing a data migration.

Node count

Depending on your application or workload, any of the resources consumed by your instance might represent the most significant portion of your bill, but it is quite possible that the provisioned node count constitutes the largest single line item (we know, for example, that Cloud Bigtable nodes generally represent 50-80% of costs depending on the workload).
Thus, a reduction in the number of nodes is likely to offer the best opportunity for quick, high-impact cost reduction.

As one would expect, cluster CPU load is the direct result of the database operations served by the cluster nodes. At a high level, this load is generated by a combination of the database operation complexity, the rate of read or write operations per second, and the rate of data throughput required by your workload. The operation composition of your workload may be cyclical and change over time, providing you the opportunity to shape your node count to the needs of the workload.

When running a Cloud Bigtable cluster, there are two hard upper bounds: the maximum available CPU (i.e., 100% average CPU utilization) and the maximum average quantity of stored data that can be managed by a node. At the time of writing, nodes of SSD and HDD clusters can manage no more than 2.5 TiB and 8 TiB of data per node, respectively.

If your workload attempts to exceed these limits, your cluster performance may be severely degraded. If available CPU is exhausted, your database operations will increasingly experience undesirable results: high request latency and an elevated service error rate. If the amount of storage per node exceeds the hard limit in any instance cluster, writes to all clusters in that instance will fail until you add nodes to each cluster that is over the limit.

As a result, we recommend that you choose a node count for your cluster such that some headroom is maintained below each of these upper bounds. In the event of an increase in database operations, the database can continue to serve requests with optimal latency, and the database will have room to support spikes in load before hitting the hard serving limits.
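To make the two bounds concrete, here is a minimal Python sketch of how a node-count floor could be estimated. It uses the per-node storage limits quoted above; the headroom fractions (`target_cpu`, `storage_fraction`) and the function name `min_nodes` are illustrative assumptions rather than official recommendations, and the CPU estimate assumes that load spreads roughly linearly across nodes, which may not hold for every workload.

```python
import math

# Per-node storage limits quoted in the text, in TiB (valid at the time of writing).
STORAGE_LIMIT_TIB = {"SSD": 2.5, "HDD": 8.0}


def min_nodes(stored_tib, storage_type, current_nodes, avg_cpu,
              target_cpu=0.7, storage_fraction=0.7):
    """Estimate the smallest node count that keeps headroom on both bounds.

    target_cpu and storage_fraction are hypothetical headroom settings:
    the fraction of CPU and of the per-node storage limit you are
    willing to use. They are assumptions, not values from the post.
    """
    usable_tib_per_node = STORAGE_LIMIT_TIB[storage_type] * storage_fraction
    storage_bound = math.ceil(stored_tib / usable_tib_per_node)

    # Assumes total CPU work stays constant and is shared evenly by the nodes.
    cpu_bound = math.ceil(current_nodes * avg_cpu / target_cpu)

    return max(1, storage_bound, cpu_bound)


# 20 TiB on a 10-node cluster running at 30% average CPU.
ssd_floor = min_nodes(20, "SSD", current_nodes=10, avg_cpu=0.30)
hdd_floor = min_nodes(20, "HDD", current_nodes=10, avg_cpu=0.30)
```

In this example the SSD cluster is bound by storage while the HDD cluster is bound by CPU, which shows why the floor is the larger of the two estimates.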
Alternatively, if your workload is more data-intensive than compute-intensive, it might be possible to reduce the amount of data stored in your cluster such that the minimum required node count is lowered.

Data storage volume

Some applications, or workloads, generate and store a significant amount of data. If this describes the behavior of your workload, there might be an opportunity to reduce costs by storing, or retaining, less data in Cloud Bigtable.

As discussed, data storage costs are correlated to the amount of data stored over time: if less data is stored in an instance, the incurred storage costs will be lower. Depending on the storage volume, the structure of your data, and the retention policies, an opportunity for cost savings could exist for instances of either the SSD or HDD storage type.

As noted above, since there is a minimum node requirement based on the total data stored, reducing the data stored might reduce data storage costs and also provide an opportunity for reduced node costs.

Backup storage volume

Each table backup incurs additional cost for the duration of the backup storage retention. If you can determine an acceptable backup strategy that retains fewer copies of your data for less time, you will be able to reduce this portion of your bill.

Storage type

Depending on the performance needs of your application, or workload, both node and data storage costs might be reduced if your database is migrated from SSD to HDD. This is because HDD nodes can manage more data than SSD nodes, and storage costs for HDD are an order of magnitude lower than for SSD.

However, the performance characteristics are different for HDD: read and write latencies are higher, supported reads per second are lower, and throughput is lower.
Therefore, it is essential that you assess the suitability of HDD for the needs of your particular workload before choosing this storage type.

Instance topology

At the time of writing, a Cloud Bigtable instance can contain up to four clusters provisioned in the available Google Cloud zones of your choice. If your instance topology encompasses more than one cluster, there are several potential opportunities for reducing your resource consumption costs.

Take a moment to assess the number and the locations of clusters in your instance. Each additional cluster naturally results in additional node and data storage costs, but there is also a network cost implication. When there is more than one cluster in your instance, data is automatically replicated between all of the clusters in your instance topology.

If instance clusters are located in different regions, the instance will accrue network egress costs for inter-region data replication. If an application workload issues database operations to a cluster in a different region, there will be network egress costs for both the calls originating from the application and the responses from Cloud Bigtable.

There are strong business rationales, such as system availability requirements, for creating more than one cluster in your instance. For example, a single cluster provides three nines, or 99.9%, availability, and a replicated instance with two or more clusters provides four nines, or 99.99%, availability when a multi-cluster routing policy is used. These options should be taken into account when evaluating the needs for your instance topology.

When choosing the locations for additional clusters in a Cloud Bigtable instance, you can choose to place replicas in geographically dispersed locations so that data serving and persistence capacity are close to your distributed application endpoints.
While this can provide various benefits to your application, it is also useful to weigh the cost implications of the additional nodes, the location of the clusters, and the data replication costs that can result from instances that span the globe.

Finally, while limited to a minimum node count by the amount of data managed, clusters are not required to have a symmetric node count. As a result, you could size your clusters asymmetrically according to the application traffic expected for each cluster.

High-level best practices for cost optimization

Now that you have had a chance to review how costs are apportioned for Cloud Bigtable instance resources, and you have been introduced to the resource consumption adjustments that affect billing cost, check out some strategies for realizing cost savings that balance the tradeoffs relative to your performance goals. (We’ll discuss techniques and recommendations to follow these best practices in the next post.)

Options to reduce node costs

If your database is overprovisioned, meaning that your database has more nodes than needed to serve database operations from your workloads, there is an opportunity to save costs by reducing the number of nodes.
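Because nodes are billed by the maximum node count observed in each hour, as described earlier, the timing of a scale-down affects the savings. The following Python sketch illustrates that rule under stated assumptions: the sample format (`(hour_index, node_count)` pairs), the function names, and the rate passed to `node_cost` are all hypothetical, and real regional rates should be taken from the Cloud Bigtable pricing page.

```python
from collections import defaultdict


def billed_node_hours(samples):
    """Sum the per-hour peak node count over all observed hours.

    samples: iterable of (hour_index, node_count) observations.
    Assumes every hour in the period has at least one observation;
    hours with no samples are not counted.
    """
    peak_by_hour = defaultdict(int)
    for hour, nodes in samples:
        peak_by_hour[hour] = max(peak_by_hour[hour], nodes)
    return sum(peak_by_hour.values())


def node_cost(samples, rate_per_node_hour):
    """Node charge for one cluster; the rate is a placeholder, not a real price."""
    return billed_node_hours(samples) * rate_per_node_hour


# Scaling from 6 to 4 nodes partway through hour 0 still bills 6 nodes for hour 0.
observations = [(0, 6), (0, 4), (1, 4), (2, 4), (2, 3)]
total_node_hours = billed_node_hours(observations)
```

A practical consequence is that reducing nodes shortly before an hour boundary yields the same charge for that hour as reducing them shortly after it began.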
Manually optimize node count

If the load generated by your workload is reasonably uniform, and your node count is not constrained by the quantity of managed data, it may be possible to gradually decrease the number of nodes using a manual process to find your minimum required count.

Deploy autoscaler

If the database demand of your application workload is cyclical in nature, or undergoes short-term periods of elevated load bookended by significantly lower load, your infrastructure may benefit from an autoscaler that can automatically increase and decrease the number of nodes according to a schedule or metric thresholds.

Optimize database performance

As discussed earlier, your Cloud Bigtable cluster should be sized to accommodate the load generated by database operations originating from your application workloads, with a sufficient amount of headroom to absorb any spikes in load. Since there is a direct correlation between the minimum required node count and the amount of work performed by the database, an opportunity may exist to improve the performance of your cluster so that the minimum number of required nodes is reduced.

Possible changes in your database schema or application logic include rowkey design modifications, filtering logic adjustments, column naming standards, and column value design. In each of these cases, the goal is to reduce the amount of computation needed to respond to your application requests.

Store many columns in a serialized data structure

Cloud Bigtable organizes data in a wide-column format. This structure significantly reduces the amount of computational effort required to serve sparse data. On the other hand, if your data is relatively dense, meaning that most columns are populated for most rows, and your application retrieves most columns for each request, you might benefit from combining the columnar values into fields in a single data structure.
A protocol buffer is one such serialization structure.

Assess architectural alternatives

Cloud Bigtable provides the highest level of performance when reads are uniformly distributed across the rowkey space. While such an access pattern is ideal, as serving load will be shared evenly across the compute resources, it is likely that some applications will interact with data in a less uniformly distributed manner.

For example, for certain workload patterns, there may be an opportunity to use Cloud Memorystore to provide a read-through, or capacity, cache. The additional infrastructure would add cost; however, for certain access patterns it may produce a larger decrease in Bigtable node cost.

This option would most likely benefit cases where your workload queries data according to a power law distribution, such as the Zipf distribution, where a small percentage of keys accounts for a large percentage of the requests, and your application requires extremely low P99 latency. The tradeoff is that the cache will be eventually consistent, so your application must be able to tolerate some data latency.

Such an architectural change could allow you to serve requests with greater efficiency, while also allowing you to decrease the number of nodes in your cluster.

Options to reduce data storage costs

Depending on the data volume of your workload, your data storage costs might account for a large portion of your Cloud Bigtable cost. Data storage costs can be reduced in one of two ways: store less data in Cloud Bigtable, or choose a lower-cost storage type.

Developing a strategy for offloading longer-term data to either Cloud Storage or BigQuery may provide a viable alternative to keeping infrequently accessed data in Cloud Bigtable, without giving up the opportunity for comprehensive analytics use cases.
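As a rough way to compare these two paths, the sketch below estimates monthly storage charges for three scenarios: keeping everything on SSD, offloading a cold fraction of the data to a cheaper store, and using HDD. Every rate in the example call is a made-up placeholder chosen only to reflect the order-of-magnitude gap described in this post; the function name and parameters are likewise assumptions, and real prices vary by region and service.

```python
def compare_storage_options(avg_gib, cold_fraction, ssd_rate, hdd_rate, archive_rate):
    """Return estimated monthly storage cost for three scenarios.

    avg_gib:       average compressed data stored over the month, in GiB
    cold_fraction: share of data (0..1) that could be offloaded
    *_rate:        price per GiB per month; placeholders supplied by the
                   caller, not actual Google Cloud prices
    """
    hot_gib = avg_gib * (1 - cold_fraction)
    cold_gib = avg_gib * cold_fraction
    return {
        "all_ssd": avg_gib * ssd_rate,
        "ssd_with_offload": hot_gib * ssd_rate + cold_gib * archive_rate,
        "all_hdd": avg_gib * hdd_rate,
    }


# Placeholder rates only: HDD and the archive store are assumed to be 10x cheaper than SSD.
estimate = compare_storage_options(
    avg_gib=1000, cold_fraction=0.5,
    ssd_rate=0.20, hdd_rate=0.02, archive_rate=0.02,
)
```

The sketch covers storage charges only. It ignores node costs, the performance differences between SSD and HDD, and any migration or access charges, all of which belong in a real assessment.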
Assess data retention policies

One straightforward method to reduce the volume of data stored is to amend your data retention policies so that older data can be removed from the database after a certain age threshold. While writing an automated process to periodically remove data outside the retention policy limits would accomplish this goal, Cloud Bigtable has a built-in feature that allows garbage collection to be applied to columns according to policies assigned to their column family. You can set policies that limit the number of cell versions, or that define a maximum age, or time-to-live (TTL), for each cell based on its version timestamp.

With garbage collection policies in place, you have the tools to safeguard against unbounded Cloud Bigtable data volume growth for applications that have established data retention requirements.

Offload larger data structures

Cloud Bigtable performs well with rows up to 100 binary megabytes (MiB) in total size, and can support rows up to 256 MiB, which gives you quite a bit of flexibility in what your application can store in each row. Yet, if you are using all of that available space in every row, your database might grow to be quite large.

For some datasets, it might be possible to split the data structures into multiple parts: one, optimally smaller, part in Cloud Bigtable and another, optimally larger, part in Google Cloud Storage. While this would require your application to manage the two data stores, it could decrease the size of the data stored in Cloud Bigtable, which could in turn lower storage costs.

Migrate instance storage from SSD to HDD

A final option that may be considered to reduce storage cost for certain applications is a migration of your storage type from SSD to HDD. Per-gigabyte costs for HDD storage are an order of magnitude lower than for SSD.
Thus, if you need to have a large volume of data online, you might assess this type of migration.

That said, this path should not be embarked upon without serious consideration. Choose it only once you have comprehensively evaluated the performance tradeoffs and allotted the operational capacity to conduct a data migration.

Options to reduce backup storage costs

At the time of writing, you can create up to 50 backups of each table and retain each for up to 30 days. If left unchecked, this can add up quickly.

Take a moment to assess the frequency of your backups and the retention policies you have in place. If there are no established business or technical requirements for the quantity of archives that you currently retain, there might be an opportunity for cost reduction.

What’s next

Cloud Bigtable is an incredibly powerful database that provides low-latency database operations and linear scalability for both data storage and data processing. As with any provisioned component in your infrastructure stack, the cost of operating Cloud Bigtable is directly proportional to the resources consumed by its operation. Understanding the resource costs, the adjustments available, and some of the cost optimization best practices is your first step toward finding a balance between your application performance requirements and your monthly spend.

In the next post in this series, you will learn about some of the observations you can make of your application to better understand the options available for cost optimization. Until then, you can:

- Learn more about Cloud Bigtable pricing
- Review the recommendations about choosing between SSD and HDD storage
- Understand more about the various aspects of Cloud Bigtable performance

Source: Google Cloud Platform