PedidosYa: BigQuery reduced our total cost per query by 5x

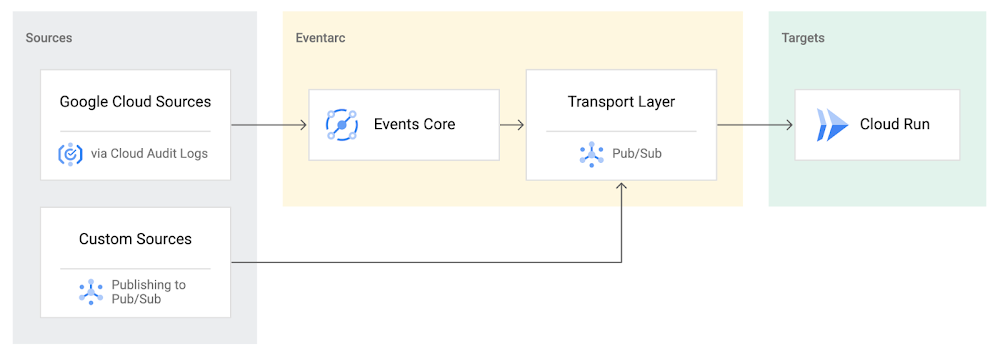

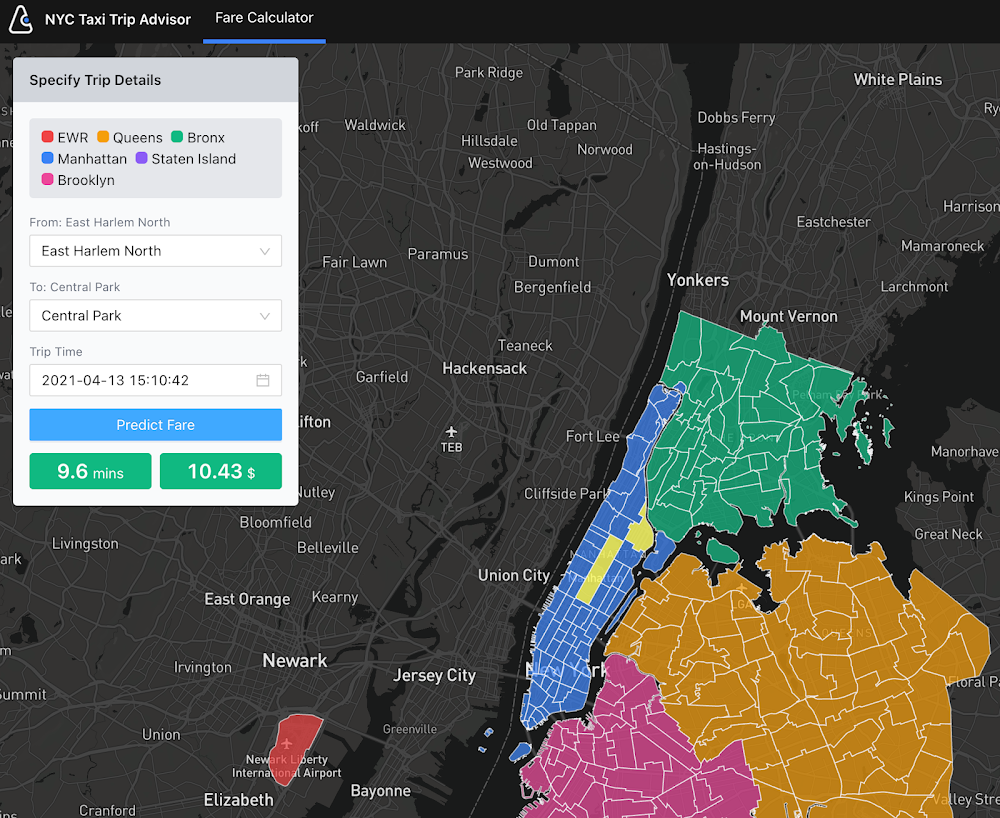

Editor’s note: PedidosYa is the market leader for online food ordering in Latin America, serving 15 markets and over 400 cities. It’s also one of the largest brands within the German multinational company Delivery Hero SE. With over 20 million app downloads, PedidosYa provides the best online delivery experience through 71,000+ online partners, including restaurants, shops, drugstores, and specialized markets.

Having constant access to fresh customer data is a key requirement for PedidosYa to improve and innovate our customers’ experience. Our internal stakeholders also require faster insights to drive agile business decisions. Back in early 2020, PedidosYa’s leadership tasked the data team with making the impossible possible. Our team’s mission was to democratize data by providing universal and secure access while creating a comprehensive information ecosystem across PedidosYa. We also had to achieve this goal while keeping costs under control, even during the migration stage, and removing operational bottlenecks.

Challenges with legacy cloud infrastructure

PedidosYa first built its data platform on top of AWS. Our data warehouse ran on Redshift, and our data lake was in S3. We used Presto and Hue as the user interfaces for our data analysts. However, maintaining this infrastructure was a daunting task.

Our legacy platform couldn’t keep up with the increasing analytics demands. For example, the data stored on S3, complemented by Presto/Hue, required high operational overhead. This was because Presto and our IAM (identity and access management) didn’t integrate well in our legacy ecosystem. Managing individual users and mapping IAM roles with groups and Kerberos was operationally time-consuming and costly. Further, sharding access on the S3 files was far too complicated to enable seamless ACLs (access control lists).

There were also challenges with workload management. Our data warehouse had batch data loaded overnight.
If one analyst scheduled a query to run during the overnight ETL (extract, transform, load) workload, it would disrupt the current ETL task. This could stop the entire data pipeline. We’d have to wait until data engineers intervened with a manual fix.

It was also difficult to understand whether a query error was due to performance issues or platform resource exhaustion. This lack of clarity affected our data analysts’ ability to autonomously improve querying efficiency. Data team members needed to manually inspect individual queries, looking for performance issues. Also, the architecture was prone to a ‘tragedy of the commons’ situation: it was seen as an unlimited and free resource. As a result, it was impossible to disentangle the infrastructure from different stakeholder teams, as all had very different needs.

The decision to modernize our data warehouse

Given the growing challenges from our legacy platform, our tech team decided to transform our analytics environment with a modern data warehouse. They required the following key criteria from their next data platform:

- Scalability: The ability to grow with elastic infrastructure.
- Cost control: Cost management and transparency. These factors promote efficiency and ownership, both key aspects of data democratization.
- Metadata management: An intuitive data platform that builds on users’ existing SQL knowledge, plus the ability to enrich the information ecosystem with metadata to reduce reliance on data gatekeepers.
- Ease of management: The team needed to reduce operational costs with a serverless solution. Data engineers wanted to focus on their key roles rather than acting as database administrators and infrastructure engineers. The team also wanted much higher availability, and to reduce the impact of maintenance windows and vacuum/analyze operations.
- Data governance and access rights: With a growing employee base with varying data access requirements, the team needed a simple yet comprehensive solution to understand and track user access to data.

Migrating to Google Cloud

After exploring other alternatives, we concluded Google Cloud had an answer to each of our decision drivers. Google Cloud’s serverless, managed, and integrated data platform, coupled with its seamless integration across open-source solutions, was the perfect answer for our organization. In particular, the natural integration with Airflow as a job orchestrator and Kubernetes for flexible on-demand infrastructure was key.

We used Dataflow together with Pub/Sub and Cloud Functions for our data ingestion requirements, which has made our deployment process with Terraform seamless. Because we set up everything in our environment programmatically, operation time has diminished. Google Cloud reduced the deployment process from about 16 hours in our legacy platform to 4 hours. This is partly due to how easy it is to automate the deployment process (such as schema check, load test, table creation, and build) with Terraform, Cloud Functions, Pub/Sub, Dataflow, and BigQuery on GCP.

Input messages processed with Dataflow allow us to abstract and plan the schema changes according to the needs of the functional team. For example, schema changes raise an alarm, and then we can modify the raw layer table schema. By doing this, we ensure that backend modifications that we don’t control do not affect upper layers.

A key reason why we picked Google Cloud was its advanced cost and workload management, coupled with its transparent log analytics. This information gives us a complete view into any query performance issues so we can make improvements on the fly.
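To make the cost-transparency idea concrete, here is a minimal sketch of how per-user query cost could be derived from job log records. It is an illustration under stated assumptions, not PedidosYa’s actual implementation: the record shape (`user`, `bytes_billed`) and the function name `cost_per_user` are hypothetical, and the price per TiB is an assumed parameter that should be checked against current on-demand pricing. In BigQuery, the billed bytes for a job are exposed as `total_bytes_billed` in the `INFORMATION_SCHEMA` jobs views; here they are simply hard-coded.

```python
from collections import defaultdict

# Assumption: on-demand price in USD per TiB billed. Verify against current pricing.
ASSUMED_USD_PER_TIB = 5.0
BYTES_PER_TIB = 2 ** 40


def cost_per_user(jobs, usd_per_tib=ASSUMED_USD_PER_TIB):
    """Sum the estimated cost of each user's queries from job log records.

    Each record is a dict with hypothetical keys "user" and "bytes_billed".
    """
    totals = defaultdict(float)
    for job in jobs:
        tib_billed = job["bytes_billed"] / BYTES_PER_TIB
        totals[job["user"]] += tib_billed * usd_per_tib
    return dict(totals)


# Hypothetical log records standing in for exported job metadata.
jobs = [
    {"user": "analyst_a", "bytes_billed": 2 * BYTES_PER_TIB},
    {"user": "analyst_b", "bytes_billed": BYTES_PER_TIB // 2},
    {"user": "analyst_a", "bytes_billed": BYTES_PER_TIB},
]

report = cost_per_user(jobs)
for user, usd in sorted(report.items()):
    print(f"{user}: ${usd:.2f}")
```

A per-user (or per-project) breakdown like this is the kind of figure a cost dashboard could surface so that each analyst sees what their own queries cost.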
Further, we achieved significant cost savings by consolidating multiple tools into BigQuery.

With BigQuery, we’ve been able to reduce our total cost per query by 5x. This was due to a number of reasons:

- Automating pipeline deployment made it much simpler to maintain the data processing pipelines.
- Analysts are conscious of what queries they’re running, which results in better, more optimized queries.
- Analysts use a Data Studio dashboard to see their queries and all the associated costs. As a result, there’s a lot more transparency for each persona.

With these changes, we can easily manage and assign the costs associated with each workload to its own cost center using specific Google Cloud projects.

Change management is always challenging. However, BigQuery is intuitive and doesn’t have a steep learning curve from Hue/Hive on SQL basics. BigQuery also allowed the team to expand its capabilities and enabled them to properly work with nested structures, avoiding unnecessary joins and improving query efficiency.

Additionally, we now use Data Catalog as our single point of truth for metadata management. This allows our team to break the data access barriers and enable federation of data across the organization. By using Airflow to orchestrate everything, we keep track of every data stream. With this information, each end user can see the status of their regularly used data entities via the dashboard. This also adds transparency to our everyday data processes.

Finally, with Google Cloud’s IAM rules applied across the different products, data sharing and access is close to a noOps experience. We have programmatically implemented access according to roles and access levels within the company. This allows certain pre-validated roles to view more sensitive information. These solutions help drive a more automated data governance experience.

Up next: Google Cloud AI/ML

The new stack based on BigQuery has created significant productivity gains.
Freed from the burden of operational management, PedidosYa’s data team can now focus on adding value through data tools and products.

- Our data engineers are better equipped to integrate constantly changing transactional and operational data.
- The dataOps team can automate the infrastructure and provide autonomy to the end user.
- Our data quality team can focus on bringing added value to data stakeholders.
- Data scientists and data analysts can spend more time analyzing data and less time asking data gatekeepers for data access.

PedidosYa can now democratize data access with a well-governed architecture. We are still at the beginning of our journey, but we are closer to achieving our vision of building a data-driven organization. Up next: expanding our artificial intelligence and machine learning capabilities.

Tune in to Google Cloud’s Applied ML Summit on June 10th, 2021, or listen on-demand later, to learn how to apply groundbreaking machine learning technology in your projects.

Source: Google Cloud Platform