How Zebra Technologies manages security & risk using Security Command Center

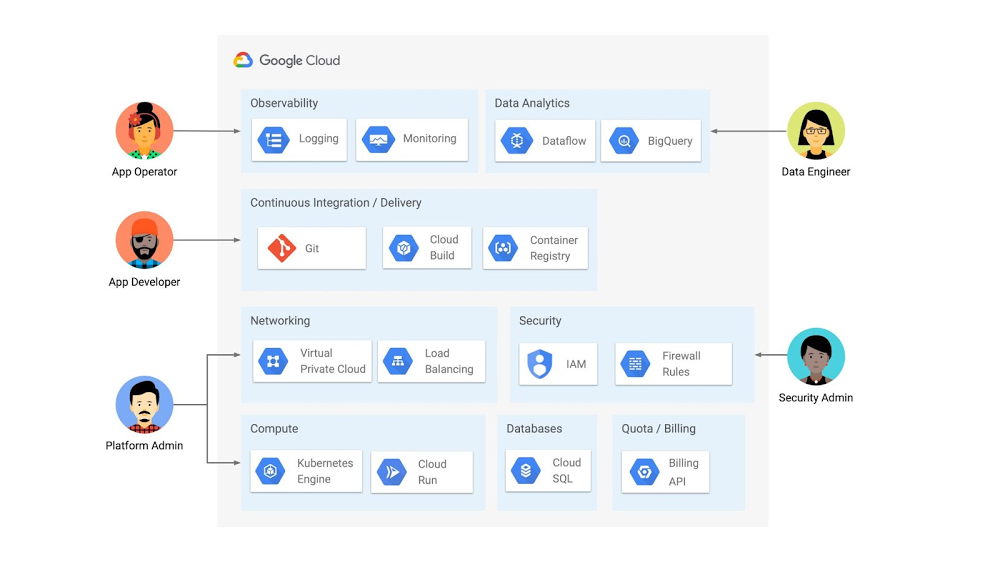

Zebra Technologies enables businesses around the world to gain a performance edge. Our products, software, services, analytics, and solutions are used principally in the manufacturing, retail, healthcare, transportation and logistics, and public sectors. With more than 10,000 partners across 100 countries, our businesses and workflows operate across the interconnected world. Many of these workflows run through Google Cloud, where we host environments for both our own enterprise use and our customer solutions. As CISO for Zebra, I lead the team responsible for keeping our organization’s network and data secure. We must ensure the confidentiality, integrity, and availability of all our assets and data. Google Cloud’s Security Command Center (SCC) supports our approach to protecting our technology environment.

Adopting a cloud-native security approach

Securing our environment at all times is of utmost importance. As a security-conscious organization, we run best-of-breed security technologies in our environment. During our cloud transformation, we found gaps in security visibility for our cloud assets and infrastructure that our existing tools did not address. We needed to augment our cloud-agnostic security stack with cloud-native security tools that could provide a holistic view of our Google Cloud assets. That’s when we found Security Command Center. SCC’s cloud-native, platform-level integration with Google Cloud provides real-time visibility into our assets. It lets us see which resources are currently deployed, their attributes, and changes to those resources in real time.
For instance, SCC shows us how many projects are new, which resources (such as Compute Engine instances and Cloud Storage buckets) are deployed, which images are running in our containers, and which security findings affect our firewall configurations.

At Zebra, the infosec team partners with product and security solution teams to manage risk and to provide technology platforms that detect and respond to threats in our environment. We use SCC across our teams to monitor our Google Cloud environment, quickly discover misconfigurations, and detect and respond to threats. We were also looking for new ways to get the additional vulnerability information produced by vulnerability scanners into the hands of the development and support teams. Security Command Center emerged as a means to communicate that information through a common user interface. SCC’s third-party integration capabilities enabled us to bring findings from our vulnerability scanner into the same Security Command Center interface, where our development and support teams can assess risk and drive resolution. The compliance benchmark views that Security Command Center provides out of the box revealed how we stacked up against key industry standards and showed best practices for taking corrective action.

Operationalizing with Security Command Center Premium

We run a 24/7 infosec operation that monitors and responds to threats across our environment. We use SCC for critical detection and response both in our Security Operations Center (SOC) and in our Vulnerability Management functions. SCC helps us identify threats such as potential malware infections, data exfiltration, cryptomining activity, brute force SSH attacks, and more. We particularly like SCC’s container security capabilities, which enable us to detect top attack techniques against containers and close off adversarial pathways.
We’ve also integrated Security Command Center into our Security Information and Event Management (SIEM) tool to ensure that threat detections surfaced by Security Command Center get an immediate response. The integration capabilities provided by SCC allow us to embed it into our SOC design, where we triage and respond to events. Being able to act on SCC detections in the same manner and in the same timeframe as detections from our other tools allows us to respond effectively using the same standard processes. Our SOC team has seen great value in how SCC allows us to pivot directly from a finding to detailed logs, which has helped us significantly reduce triage time.

We have a dedicated Vulnerability Management function that addresses misconfiguration risks in our resources and vulnerabilities in our applications. Our Vulnerability Management team uses information presented in SCC’s dashboards to assess potential configuration risks and work with the asset owners to drive resolution. SCC helped us identify what needed to be fixed. In particular, as new resources came into our environment, we were able to detect whether those assets had misconfigurations or violated any compliance controls. This team uses third-party tools to scan for known Common Vulnerabilities and Exposures (CVEs). We liked how SCC integrates with third-party vulnerability tools, so we can use SCC as a single pane of glass for our vulnerability information. For instance, we can use SCC to identify critical assets that have misconfigurations or vulnerabilities and assess their severity in one view, so that we can immediately act to fix the issue. Before deploying SCC, we relied on spreadsheets or other mechanisms to share this information. Now, all security vulnerability findings exist in a unified view. All relevant information is available for our teams to digest and address from the same place.
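
To make the "single pane of glass" idea concrete, here is a minimal Python sketch of merging findings from two sources into one view ordered by severity. It is an illustration only, not Zebra's implementation and not the Security Command Center API: the `Finding` record, the severity ranking, the category labels, and the sample data are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical severity ranking for this sketch; a lower rank is more urgent.
SEVERITY_RANK = {"CRITICAL": 0, "HIGH": 1, "MEDIUM": 2, "LOW": 3}
UNKNOWN_RANK = len(SEVERITY_RANK)  # unrecognized severities sort last


@dataclass(frozen=True)
class Finding:
    asset: str      # resource the finding applies to
    category: str   # illustrative label, not a real detector name
    severity: str   # expected to be one of the SEVERITY_RANK keys
    source: str     # which tool reported the finding


def rank(finding):
    """Return the sort rank of a finding's severity."""
    return SEVERITY_RANK.get(finding.severity, UNKNOWN_RANK)


def unified_view(*sources):
    """Merge findings from several sources, most severe first."""
    merged = [finding for source in sources for finding in source]
    return sorted(merged, key=lambda f: (rank(f), f.asset))


def assets_needing_action(findings, threshold="HIGH"):
    """Return the assets with at least one finding at or above the threshold."""
    limit = SEVERITY_RANK[threshold]
    return sorted({f.asset for f in findings if rank(f) <= limit})


# Made-up sample data standing in for native and third-party findings.
native_findings = [
    Finding("bucket-a", "public-bucket", "HIGH", "native"),
    Finding("vm-1", "open-ssh-port", "MEDIUM", "native"),
]
scanner_findings = [
    Finding("vm-1", "example-cve", "CRITICAL", "third-party-scanner"),
    Finding("vm-2", "outdated-package", "LOW", "third-party-scanner"),
]

view = unified_view(native_findings, scanner_findings)
for finding in view:
    print(f"{finding.severity:<8} {finding.asset:<8} {finding.category} ({finding.source})")
print(assets_needing_action(view))
```

Keeping the source on each record means a team can still tell which tool reported a finding after the lists are merged, which is the main advantage over sharing separate spreadsheets per tool.
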

We also engage our engineering development teams to take ownership of addressing the security findings for the assets in their line of business. This is what the industry refers to as “shift left” security. We have multiple development teams at Zebra, and we believe they should have the power to address security findings within their teams. SCC’s granular access control (scoped view) capability enables us to provide asset visibility and security findings in real time based on roles and responsibilities. This ensures individual teams can see only the assets and findings for which they are responsible. It limits sensitive information to those who have a need to know, and it helps individual teams take action quickly because they are not overwhelmed or distracted by security findings outside their ownership. It also helps us reduce security risk and achieve compliance goals by limiting access as needed within our organization. In addition, the scoped view capability has created operational efficiencies in how we address our asset misconfigurations and vulnerability findings.

Securing the future together with Google Cloud

Security Command Center has become integral to our security fabric thanks to its native platform-level integration with Google Cloud, as well as its ease of use. Overall, Security Command Center helps us continuously monitor our Google Cloud environment, giving us real-time visibility and a prioritized view of security findings so that we can quickly respond and take action. Zebra and Google share the goal of keeping cloud environments secure. With the help of Google Cloud and Security Command Center, we have improved our overall security posture and workload protection. It has also helped us enhance collaboration between our development and security teams, and manage and lower the company’s security risk.

Google Cloud blog note: Security Command Center is a native security and risk management platform for Google Cloud. Security Command Center Premium tier provides built-in services that enable you to gain visibility into your cloud assets, discover misconfigurations and vulnerabilities in your resources, detect threats targeting your assets, and help maintain compliance based on industry standards and benchmarks. To enable a Premium subscription, contact your Google Cloud Platform sales team. You can learn more about Security Command Center and how it can help with your security operations using our product documentation.

Source: Google Cloud Platform