Docker Desktop 4.15 is here, packed with usability upgrades to make it simpler to find the images you want, manage your containers, discover vulnerabilities, and work with dev environments. Not enough for you? Well, it’s also easier to build and share extensions to add functionality to Docker Desktop. And Docker+Wasm has moved from technical preview to beta!

Let’s dig into what’s new and great in Docker Desktop 4.15.

Improvements for macOS

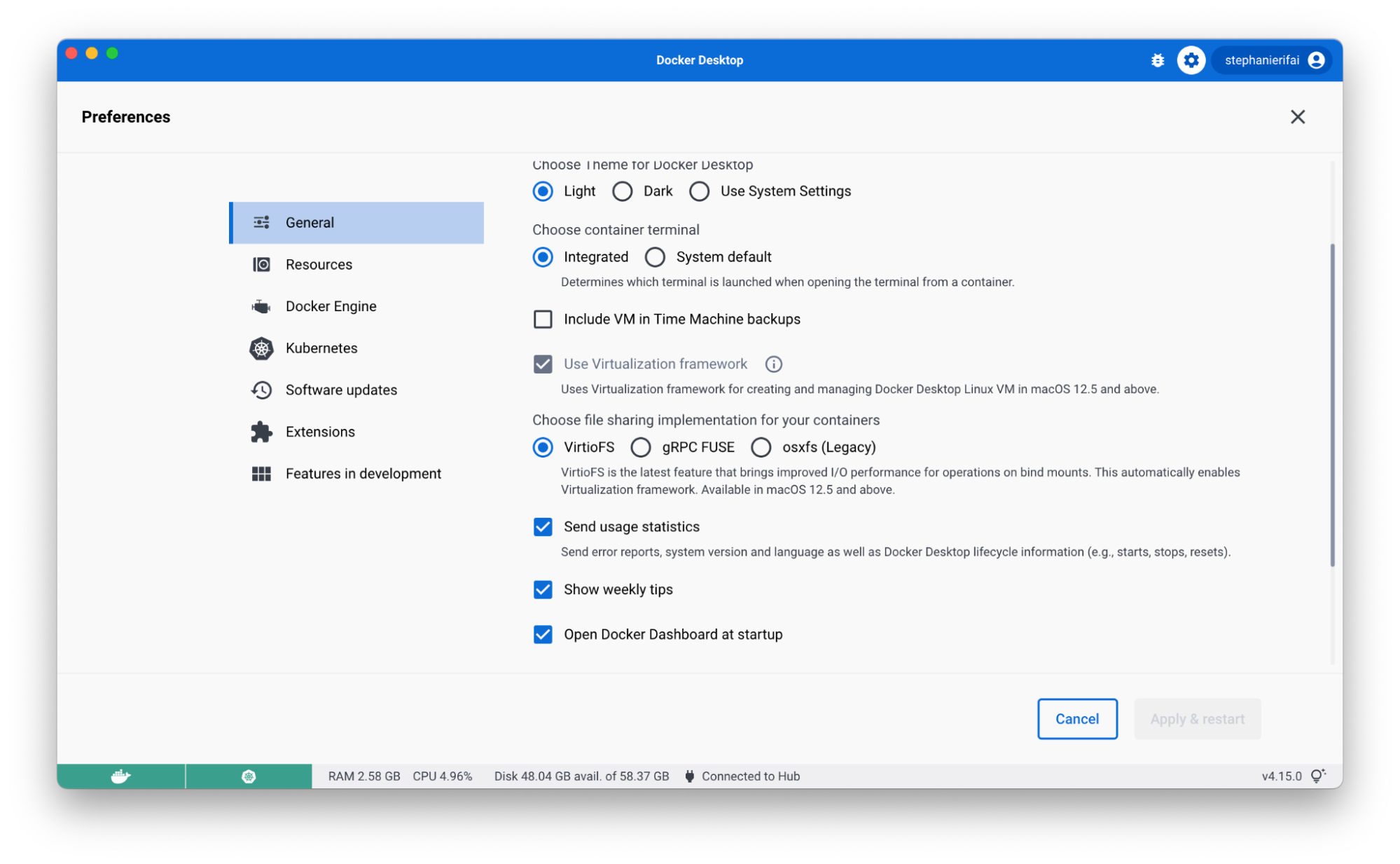

Move Faster with VirtioFS — now GA

Back in March, we introduced VirtioFS to improve file sharing performance for macOS users. With Docker Desktop 4.15, it’s now generally available and you can enable it on the Preferences page.

Using VirtioFS and Apple’s Virtualization Framework can significantly reduce the time it takes to complete common tasks like package installs, database imports, and running unit tests. For developers, these gains in speed mean less time waiting for common operations to complete and more time focusing on innovation.

This option is available for macOS 12.5 and above. If you encounter any issues, you can turn it off again in your settings.

Adminless install during first run on macOS

Now you don’t need to grant admin privileges to install and run Docker Desktop. Previously, Docker Desktop for Mac had to install the privileged helper process com.docker.vmnetd on install or on the first run.

Some actions may still require admin privileges (like binding to a privileged port), but when they’re needed, Docker Desktop will proactively tell you that you need to grant permission.

For more information, see permission requirements for Mac.

Jump in faster with quick search

When you work with Docker Desktop, you probably know exactly which container you want to start with or image you want to run. But there might be times when you don’t remember if it’s already running — or if you pulled it locally at all.

So you might check a few of the current tabs in the Docker Dashboard, or maybe do a docker ps in the CLI. By the time you find what you need, you’ve checked a few different places, spent some time searching Docker Hub to find the right image, and probably got a little annoyed.
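
For reference, that manual hunt might look something like the sketch below. The three helper names are made up for illustration; the docker subcommands and flags they wrap are standard CLI ones, and the output format string is just one possible choice.

```shell
# Hypothetical helpers wrapping the manual lookups described above.

# List containers (running or stopped) whose name matches the given string.
find_container() {
  docker ps --all --filter "name=$1" --format '{{.Names}}: {{.Status}}'
}

# List local images for a repository; no rows means nothing has been pulled yet.
find_local_image() {
  docker image ls "$1"
}

# Search Docker Hub for public images matching a term (first five results).
find_hub_image() {
  docker search --limit 5 "$1"
}
```

You would call these one after another, for example find_container redis, then find_local_image redis, then find_hub_image redis, where redis is only a placeholder name.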

With quick search, you get to skip all of this (especially the annoyance!) and find exactly what you’re looking for in one simple search — along with relevant actions like the option to start/stop a container or run a new image. It even searches the Docker Hub API to help you run any public and private images you’ve hosted there!

To get started, click the search bar in the header (or use the shortcut: command+K on Mac / ctrl+K on Windows) and start typing.

The first tab shows results for any local containers or compose apps. You can perform quick actions like start, stop, delete, view logs, or start an interactive terminal session with a running container. You can also get a quick overview of the environment variables.

If you flip over to the Images tab, you’ll see results for Docker Hub images, local images, and images from remote repositories. (To see remote repository images, make sure you’re signed into your Docker Hub account.) Use the filter to easily narrow down the result types you want to see.

When you filter for local images, you’ll see some quick actions like run and an overview of which containers are using the image.

With Docker Hub images, you can pull the image by tag, run it (running will also pull the image as the first step), view documentation, or go to Docker Hub for more details.

Finally, with images in remote repositories, you can pull by tag or get quick info, like last updated date, size, or vulnerabilities.

Be sure to check out the tips in the footer of the search modal for more shortcuts and ways to use it. We’d love to hear your feedback on the experience and if there’s anything else you’d like to see added!

Flexible image management

Based on user feedback, Docker Desktop 4.15 includes user experience enhancements for the Images tab. Cleaning up multiple images is now easier with multi-select checkboxes (this functionality used to be behind the “bulk clean up” button).

You can also manage your columns to only show exactly what you want. Want to view your complete container and image names? Drag the dividers in the table header to resize columns. You can also sort columns by header attributes or hide columns to create more space and reduce clutter.

And if you navigate away from your tab, don’t worry! State persistence will keep everything in place so your sorting and search results will be right where you left them.

Know your image vulnerabilities automatically

Docker Desktop now automatically analyzes images for vulnerabilities. When you explore an image, Docker Desktop will automatically provide you with vulnerability information at the base image and image layer levels. The base image overview provides a high-level view of any dependencies in packages that introduce Common Vulnerabilities and Exposures (CVEs). And it’ll let you know if there’s a newer base image version available.

If you’d prefer that images be analyzed only when you view them, you can turn off auto-analysis in Settings > Features in development > Experimental features > Enable background SBOM indexing.

Thanks to everyone who provided feedback in our 4.14 release. And let us know what you think of the new image overview!

Use Dev Environments with any IDE

When you create a new Dev Environment via Docker Desktop, you can now use any editor or IDE you’ve installed locally. Docker Desktop bind mounts your source code directory to the container. You can interact with the files locally as you normally do, and all your changes will be reflected in the Dev Environment.
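
If bind mounts are new to you, here is a minimal sketch of the general idea using plain docker run. This is not how Dev Environments sets things up internally; the helper name, the /workspace target path, and the choice of sh as the command are all assumptions made for illustration.

```shell
# Hypothetical helper: start a throwaway container with a local source
# directory bind-mounted at /workspace (an arbitrary path chosen for this sketch).
# Arguments: <absolute-source-dir> <image>
run_with_source() {
  docker run --rm --interactive --tty \
    --mount "type=bind,source=$1,target=/workspace" \
    --workdir /workspace \
    "$2" sh
}
```

Calling run_with_source "$PWD" alpine (alpine is just a placeholder image) drops you into a shell where edits made on the host show up under /workspace right away, and vice versa.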

Dev Environments help you manage and run your apps in Docker, isolated from your local environment. For example, if you’re in the middle of a complicated refactor, Dev Environments make it easier to review a PR without having to stash your work in progress. (Pro tip: you can install our extension for Chrome or Firefox to quickly open any PR as a Dev Environment!)

We’ve been making lots of little fixes to make Dev Environments better, including:

- Custom names for projects
- Better private repo support
- Better port handling
- CLI fixes (like interactive docker dev open)

Let us know what other improvements you’d like to see!

Building and sharing Docker Extensions just got easier

Did you know that you can build your own Docker Extension? Whether you’re just sharing it with your team or adding it to the Extensions Marketplace, Docker Desktop 4.15 makes the process easier and faster.

Meet the Build tab

In the Extensions Marketplace, you’ve got your Browse tab, your Manage tab, and, now, your Build tab. The Build tab brings all the resources you need to get started into one centralized view. You’ll find links to videos, documentation, community resources, and more! To start building, click “+ Add Extensions” in Docker Desktop and navigate to the new Build tab.

Share a link to your extension with others

So now you’ve made an extension to share with your teammates or the community. You could submit it to the Extensions Marketplace, but what if you aren’t quite ready to? (Or what if it’s just for your team?)

Prior to Docker Desktop 4.15, the extension developer had to share a CLI command that looked something like this: docker extension install IMAGE[:TAG]. Then anyone who wanted to install the extension had to paste that command into their CLI.
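
As a rough sketch, the command-based flow could be wrapped like this. The helper name is made up for illustration, and any image name or tag you pass in is your own; only the docker extension install IMAGE[:TAG] form comes from the command above.

```shell
# Hypothetical helper: install an extension from IMAGE or IMAGE:TAG.
# Arguments: <image> [tag]; the tag is optional, mirroring IMAGE[:TAG].
install_extension() {
  if [ -n "${2:-}" ]; then
    docker extension install "$1:$2"
  else
    docker extension install "$1"
  fi
}
```

A teammate would then run something like install_extension my-org/my-extension 1.0.0, where my-org/my-extension is a placeholder, not a real extension.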

In Docker Desktop 4.15, we’ve simplified the process for both you and the people you share your extension with. When your extension’s ready to share, use the share button in the Manage tab to create a link. When the person you share it with opens the link, they’ll be able to select the “Install” button from Docker Desktop.

Have an idea for a new Docker Extension?

If you have an idea for a new Docker Extension, we’ve got a new way for you to share it with Docker and the community. Inside the Extensions Marketplace, there’s a link to request an extension. This link will take you to our new GitHub repo, where you can add your idea to our discussions and upvote existing ones. If you’re an extension developer but aren’t sure what to build, be sure to check out the repo for some inspiration!

Docker+Wasm is now beta

We’ve integrated WasmEdge’s runwasi containerd shim into Docker Desktop (previously provided in a Technical Preview build).

This allows developers to run Wasm applications and compose Wasm applications with other containerized workloads, such as a database, in a single application stack. Learn more about it in the documentation and be on the lookout for more soon!
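
To give a feel for it, here is a hedged sketch of running a Wasm workload from the CLI. The helper name is hypothetical, and the default runtime name and platform string are assumptions recalled from the technical preview materials, so check them against the current documentation before relying on them. Both can be overridden through the WASM_RUNTIME and WASM_PLATFORM variables, which are also inventions of this sketch.

```shell
# Hypothetical helper: run an image that packages a Wasm module.
# Argument: <image>
# Assumed defaults (verify against the docs): runtime and platform values.
run_wasm() {
  docker run --rm \
    --runtime="${WASM_RUNTIME:-io.containerd.wasmedge.v1}" \
    --platform="${WASM_PLATFORM:-wasi/wasm32}" \
    "$1"
}
```

You would call it as run_wasm your-org/your-wasm-app, with your own image name in place of the placeholder.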

What else would make your life easier?

Take a test drive of the new usability upgrades and let us know what you think! Is there something you think we missed? Be sure to check out our public roadmap to see upcoming features — and to suggest any other ones you’d like to see.

And don’t forget to check out the release notes for a full breakdown of what’s new in Docker Desktop 4.15!

Source: https://blog.docker.com/feed/