Oracle Database@Azure offers new features, regions, and programs to unlock data and AI innovation

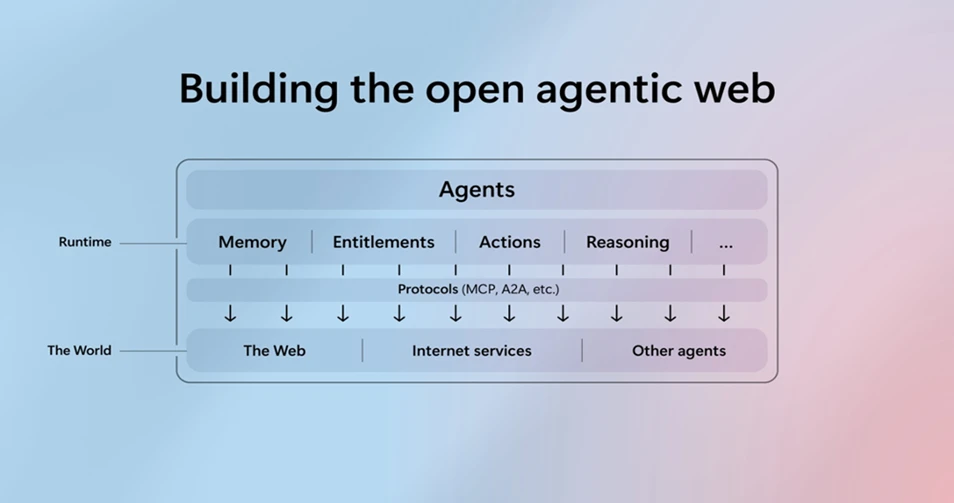

Together, Microsoft and Oracle are delivering the most comprehensive, enterprise‑ready platform for organizations migrating their Oracle solutions to the public cloud—especially those aiming to empower IT professionals and developers to streamline AI adoption and enhance employee productivity.

Oracle Database@Azure was the first offering of its kind in the market. Today it has the broadest regional availability, offers new ways to unify your data in Microsoft Fabric, and integrates more deeply with Microsoft Defender for security. It can run most Oracle Database services on Azure (Base Database Service, Exadata Database Service on Dedicated Infrastructure, Exadata Database Service on Exascale Infrastructure, and Autonomous Database), with a choice of Oracle Database 19c or 23ai.

Get started with Oracle Database@Azure

The result? A truly enterprise‑ready platform that offers more choice, increased control, and expanded opportunity to innovate with confidence. Customers are excited about the impact it is delivering in practice.

Oracle Database@Azure delivered Exadata-grade performance natively within Azure, enabling us to host our Oracle EBS in the cloud without compromise. We gained native, real-time access to EBS data from Azure and seamless integration with both Oracle and non-Oracle data sources. Paired now with Microsoft Fabric, Power BI, and Copilot Studio, our team will be able to accelerate insight delivery to business stakeholders and build agentic workflows faster. It’s a practical path to iterate on new features while keeping governance and security at the forefront.

Mahesh Tyagi, Vice President Finance Engineering, Activision Blizzard

New Oracle Database@Azure features strengthen its enterprise leadership

Enterprise-grade capabilities are essential for organizations that depend on Oracle databases for mission-critical workloads. That’s why Microsoft continues to advance Oracle Database@Azure, bringing together the scale and resilience of Azure with industry-leading security and AI innovation.

Take a look at our latest announcements. For more details on each of these capabilities, check out our technical blog.

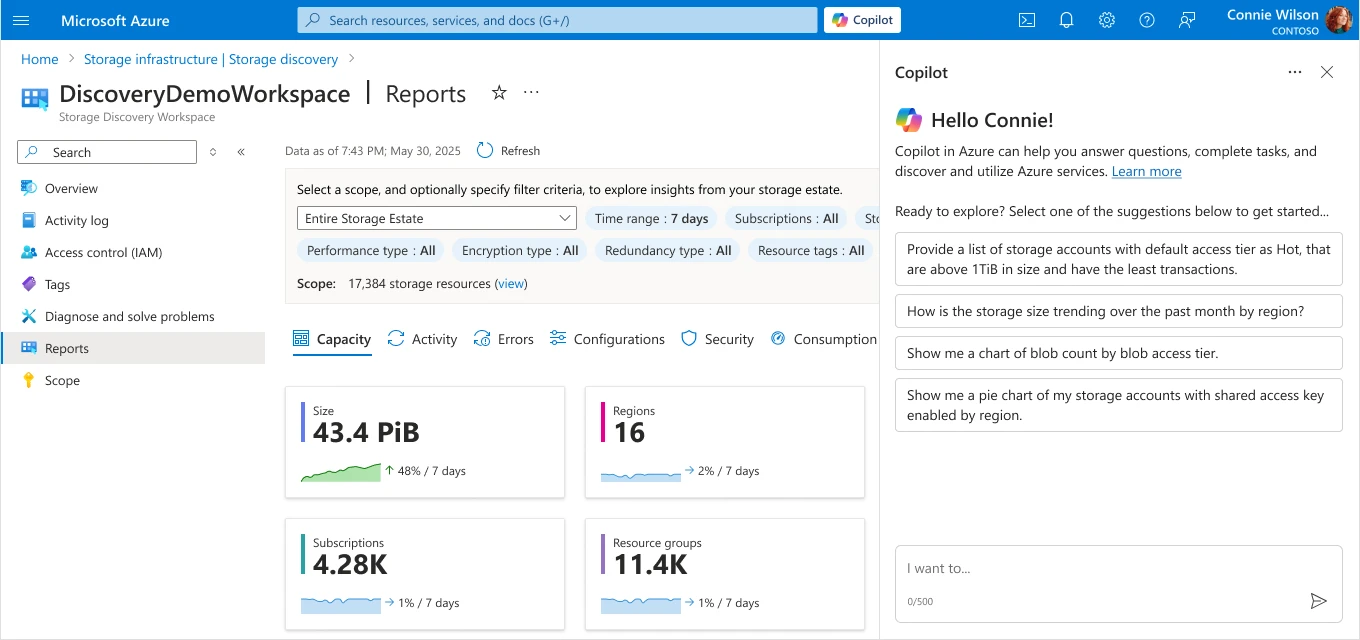

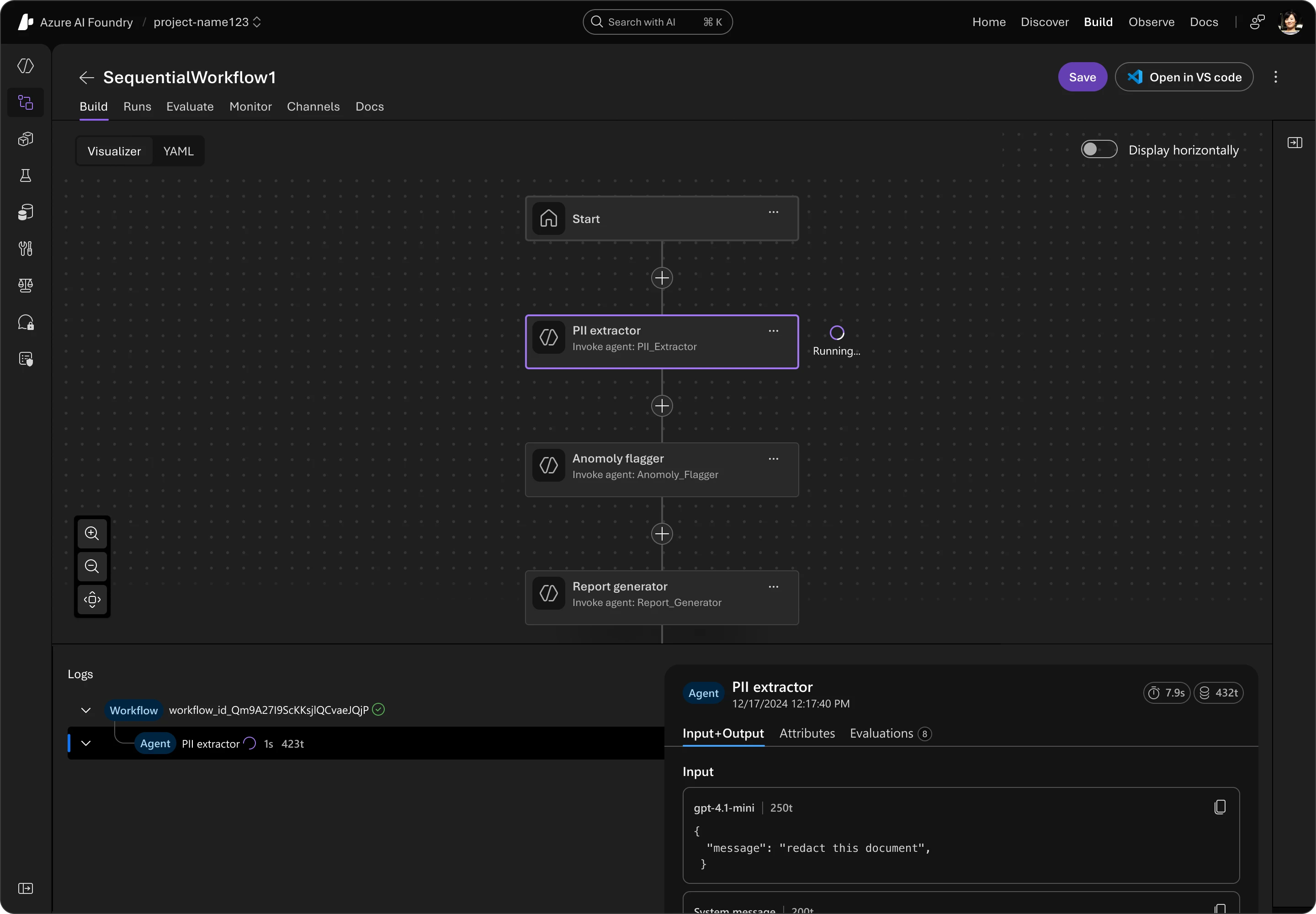

Announcing two new capabilities for real-time data integration and replication with Microsoft Fabric for an AI-ready data estate

Oracle Database mirroring in OneLake, now in public preview, continuously synchronizes Oracle data into OneLake with zero ETL, creating a unified real-time data estate in Microsoft Fabric. Also available today, native Oracle GoldenGate integration offers managed, high-performance, low-latency replication and can be purchased using Microsoft Azure Consumption Commitment (MACC). Once your Oracle data is connected through Oracle Database@Azure, you can use powerful AI innovation tools like Microsoft Copilot Studio, Azure AI Foundry, and Power BI.

Oracle Base Database is generally available

Oracle Database@Azure offers customers the flexibility to run any Oracle Database service on Azure. Oracle Database@Azure now supports all popular Oracle database services—Base Database Service, Exadata Database Service on Dedicated Infrastructure, Exadata Database Service on Exascale Infrastructure and Autonomous Database—and also the choice of using either Oracle Database 19c or 23ai. This provides customers with a comprehensive set of flexible, simple, and cost-effective migration options when moving their Oracle databases to Azure.

Support for Oracle workloads goes beyond Oracle Database services. We’re excited to share that Oracle has introduced support policies for running Oracle E-Business Suite, PeopleSoft, JD Edwards EnterpriseOne, Enterprise Performance Management, and Oracle Retail Applications in Microsoft Azure using Oracle Database@Azure. This enables businesses to harness the power of Microsoft Azure while leveraging Oracle’s industry-leading database technology to achieve greater scalability, performance, and security. We continue to offer full Oracle Maximum Availability Architecture (MAA) support, up to the Platinum tier, available exclusively on Azure, giving customers the highest levels of availability, disaster recovery, and zero-data-loss protection for mission-critical workloads.

Microsoft Defender now brings industry-leading threat detection and response to Oracle Database@Azure

Microsoft Defender is a cloud-native security platform that provides unified threat protection, vulnerability management, and automated compliance to safeguard Oracle Database@Azure workloads. Complemented by Microsoft Sentinel’s AI-powered security information and event management (SIEM) for real-time monitoring, and Microsoft Entra ID’s unified identity and access controls, customers get comprehensive enterprise-grade protection designed for today’s complex threat landscape.

Azure Arc for Oracle Database@Azure

Extend Azure’s management, governance, and security capabilities across environments—whether on-premises, multicloud or edge. From a single control plane, Azure Arc enables you to enforce policies, manage identities, and automate lifecycle operations for all your Azure resources—and now, for your Oracle databases running natively on Azure.

Azure IoT Operations and Microsoft Fabric now power an integration blueprint with Oracle Fusion Cloud Supply Chain and Manufacturing (SCM)

This integration enables manufacturers to capture live insights from factory equipment and sensors, automate key processes, and drive data‑driven decisions for greater efficiency and responsiveness.

Available in over 28 regions globally

With plans to reach 33 live regions by the end of the year, Oracle Database@Azure empowers organizations to deploy closer to their applications and users across North America, EMEA, and APAC. Stay up to date on the latest regions to go live here.

Introducing Azure Accelerate for Oracle

To help every organization start quickly and confidently—regardless of their size—Microsoft is excited to offer Azure Accelerate benefits to Oracle customers. Azure Accelerate is a program designed to support customers across their cloud and AI journey with expert guidance and investments. Customers can cut through the complexity of their Oracle migrations—and related application migration, modernization, and AI innovation projects—while also minimizing project costs. Azure Accelerate makes it easier than ever to bring your Oracle workloads to Azure by offering:

Access to trusted experts: Tap into the deep expertise of Azure’s specialized partner ecosystem. Additionally, you can take advantage of the Cloud Accelerate Factory benefit, which provides Microsoft experts at no additional cost.

Microsoft investments: Access partner funding and Azure credits designed to make your migration to Azure more cost-effective and minimize project risk.

Comprehensive coverage: Get help at every stage of the project, starting with an initial assessment through pilots or proof-of-value to full-scale implementation.

With Azure Accelerate, Oracle customers can now migrate more efficiently while integrating AI into their strategy, alongside Azure experts from day one.

Channel partners can now resell Oracle Database@Azure

Microsoft AI Cloud Partners and Oracle Partner Network (OPN) members can now purchase and resell Oracle Database@Azure—right from the Microsoft Marketplace. This new model underscores Microsoft and Oracle’s joint commitment to the partner community while streamlining migration and modernization for customers who prefer to purchase through their trusted partners.

Microsoft’s partner reseller programme helped CGI select Oracle Database@Azure to consolidate cloud services under a single cloud provider, ensuring the cost efficiency, elasticity and redundancy required to meet the key requirements of CGI’s clients. For Smart DCC, CGI is working with Oracle and Microsoft to implement the solution through the Microsoft Marketplace reseller model, providing a streamlined procurement route on a secure, enterprise-ready platform for mission-critical workloads.

Ro Crawford, VP Consulting Services, CGI

We are also excited to share that Oracle Database@Azure is now included in the Microsoft Most Valuable Professionals (MVP) program under the new technology area, Azure Solutions and Ecosystem. This new technology area spans mission-critical workloads and modernization efforts, including Oracle Database@Azure, Azure VMware Solution (AVS), Nutanix on Azure, and mainframe modernization strategies. The Microsoft Most Valuable Professionals program recognizes exceptional community leaders for their technical expertise, leadership, speaking experience, online influence, and commitment to solving real-world problems. To learn more about the program, visit this FAQ.

Oracle Database@Azure customer momentum

Customers like Conduent, BV Liantis, SEFE, Astellas Pharmacy, Craneware and Medline have moved their Oracle databases to Oracle Database@Azure to optimize performance and reduce latency while unlocking a future-ready foundation for AI.

We’re excited to spotlight our customer innovation in our sessions at Oracle AI World. Don’t miss Activision Blizzard on stage for Microsoft’s Spotlight Session on Wednesday, October 15 at 4:45 PM PT. You can find our full session list and featured customers here.

Get started

Oracle Database@Azure is an Oracle database service running on Oracle Cloud Infrastructure (OCI), colocated in Microsoft data centers.

Learn more here

Looking ahead

We’re excited to continue this journey—bringing together the best of Oracle and Microsoft to help customers innovate faster, operate smarter, and lead in the era of intelligent applications.

If you’re attending Oracle AI World 2025, come talk to our experts at the Microsoft booth (#3005) and be sure to check out our sessions.

Learn more about Oracle on Azure.

To get started, contact our sales team.

The post Oracle Database@Azure offers new features, regions, and programs to unlock data and AI innovation appeared first on Microsoft Azure Blog.

Source: Azure