The data behind the design: How Pantone built agentic AI with an AI-ready database

When we talk about agentic AI, it’s easy to default to abstract conversations about models, prompts, and orchestration. But the most compelling stories I see are the ones where AI unlocks something deeply human—creativity, intuition, and expertise—at entirely new speed and scale.

That’s why I was excited to host Color Meets Code: Pantone’s Agentic AI Journey on Azure, a webinar featuring two Pantone leaders: Kristijan Risteski, solutions architect, and Rohani Jotshi, senior director of engineering. During the session, Kris and Rohani shared how they’re applying agentic AI to one of the most foundational elements of creative work, color, and how an AI-ready database, Azure Cosmos DB, plays a central role in making that possible.

Watch the Color Meets Code webinar

The challenge: Scaling color expertise in a real-time, interactive world

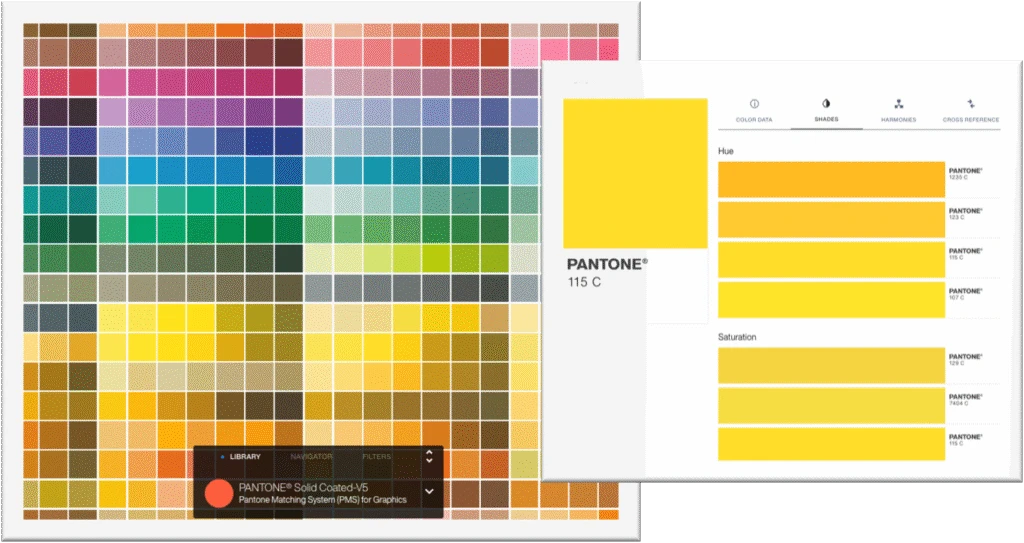

Pantone is widely recognized as a global authority on color. For decades, their teams have combined human expertise, color science, and trend forecasting to help designers and brands define, communicate, and control color across industries—from fashion and product design to packaging and digital experiences.

But as Pantone explained in the webinar, translating that depth of expertise into a modern, conversational AI experience came with real challenges. Creating color palettes is both time-consuming and critical to the design process. Designers often gather inspiration by navigating between tools, color pickers, and trend reports before they ever land on a usable palette.

Pantone saw an opportunity to rethink that workflow entirely: What if designers could interact with decades of Pantone research, trend data, and color psychology through a chat-based interface—and generate curated palettes instantly?

Introducing the Palette Generator: An agentic AI experience

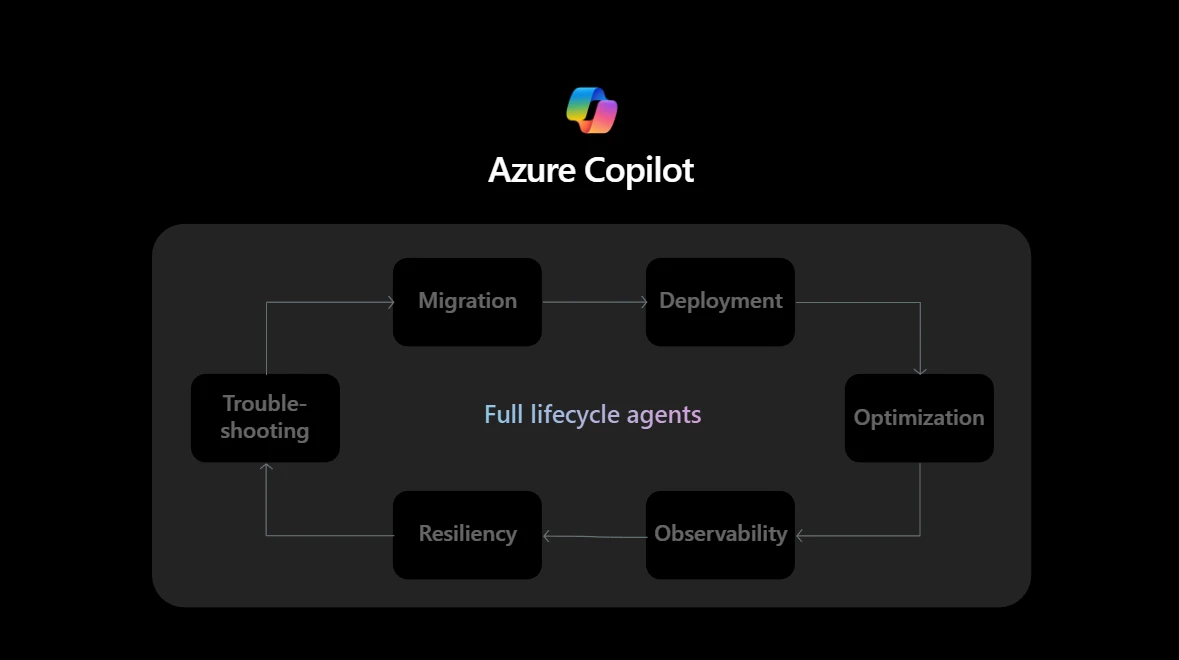

The result is Pantone’s Palette Generator, an AI-powered experience launched as a minimum viable product to gather real user feedback and iterate rapidly. Rather than offering static recommendations, the Palette Generator uses a multiagent architecture to respond dynamically to user intent, conversational context, and historical interactions.

In the webinar, the Pantone team described how they designed the system to include specialized agents—such as a “chief color scientist” agent and a palette generation agent—each responsible for different aspects of reasoning, context retrieval, and response generation. These agents work together to deliver curated color palettes that reflect Pantone’s proprietary data and expertise.
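To make the division of labor concrete, here is a minimal sketch of two agents passing shared state through a fixed pipeline. Everything in it is an assumption for illustration: the `Conversation` structure, the keyword lookup standing in for retrieval, and the placeholder notes and hex values are invented and are not Pantone’s data or implementation. A real system would call a language model inside each agent.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Conversation:
    """Shared state passed between agents (hypothetical structure)."""
    user_prompt: str
    history: list = field(default_factory=list)
    context_notes: list = field(default_factory=list)


# Placeholder knowledge base: invented entries, not Pantone data.
TREND_NOTES = {
    "calm": "soft, low-saturation hues",
    "bold": "high-contrast, saturated hues",
}

# Placeholder palettes keyed by note; the hex values are arbitrary.
PALETTES = {
    "soft, low-saturation hues": ["#A8C3BC", "#E6DFD3", "#7E9AA6"],
    "high-contrast, saturated hues": ["#D7263D", "#1B998B", "#F4D35E"],
}


def chief_color_scientist(conv: Conversation) -> Conversation:
    """Retrieval step: attach notes whose keyword appears in the prompt or history."""
    text = " ".join([conv.user_prompt, *conv.history]).lower()
    conv.context_notes = [note for key, note in TREND_NOTES.items() if key in text]
    return conv


def palette_generator(conv: Conversation) -> list:
    """Generation step: turn the retrieved notes into a list of hex colors."""
    colors = []
    for note in conv.context_notes:
        colors.extend(PALETTES[note])
    return colors


def run_pipeline(prompt: str, history: Optional[list] = None) -> list:
    """Orchestrator: run the two agents in a fixed order."""
    conv = Conversation(user_prompt=prompt, history=history or [])
    return palette_generator(chief_color_scientist(conv))
```

Calling `run_pipeline("a calm palette for a spa")` returns the three placeholder colors attached to the "calm" note. The point of the sketch is the separation of concerns: one agent gathers context, another generates from it, and both read the same conversation state.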

What stood out to me was not just the sophistication of the AI, but the architectural discipline behind it. Agentic AI isn’t just about models—it’s also about data.

Why Azure Cosmos DB was foundational

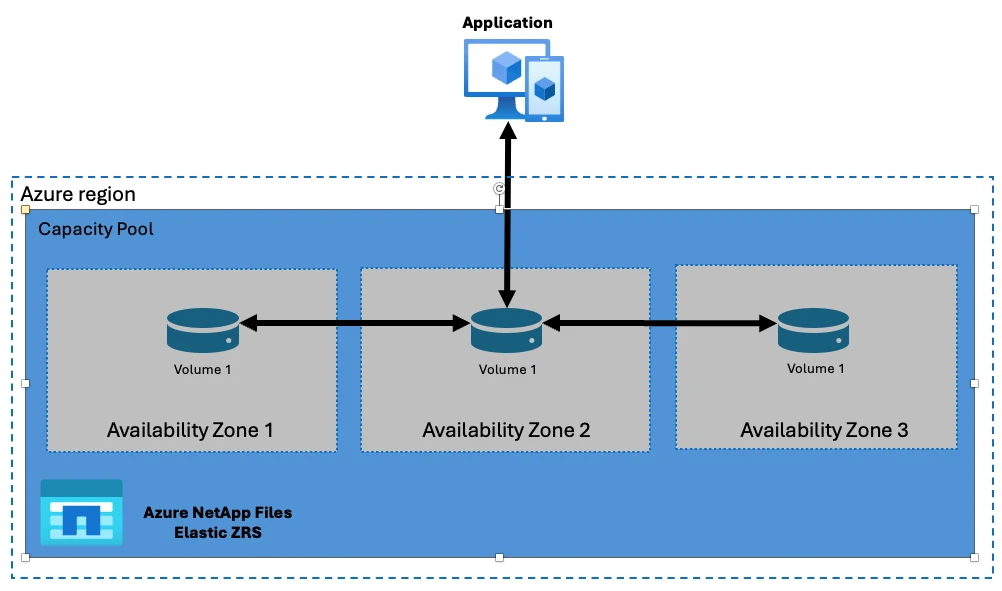

At the heart of Pantone’s Palette Generator is Azure Cosmos DB, serving as the system’s real-time data layer. Azure Cosmos DB is used to store and manage chat history, prompt data, message collections, and user interaction insights, all of which are essential for fast, context-aware agents.
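As a rough illustration of what conversational memory can look like at the data layer, the sketch below models one chat message as a JSON-style document and groups messages by session. The field names and the choice of `sessionId` as the partition key are assumptions, not Pantone’s schema, and `InMemoryChatStore` is a hypothetical stand-in for a database container so the example runs without any service or SDK.

```python
import uuid
from collections import defaultdict
from datetime import datetime, timezone


def make_message(session_id: str, role: str, text: str) -> dict:
    """Build one chat-message document (field names are illustrative)."""
    return {
        "id": str(uuid.uuid4()),      # unique item id
        "sessionId": session_id,      # assumed partition key
        "role": role,                 # "user" or "assistant"
        "text": text,
        "createdAt": datetime.now(timezone.utc).isoformat(),
    }


class InMemoryChatStore:
    """Hypothetical stand-in for a database container, grouped by partition key."""

    def __init__(self) -> None:
        self._partitions = defaultdict(list)

    def upsert(self, doc: dict) -> None:
        """Insert the document, or replace an existing one with the same id."""
        items = self._partitions[doc["sessionId"]]
        for i, existing in enumerate(items):
            if existing["id"] == doc["id"]:
                items[i] = doc
                return
        items.append(doc)

    def history(self, session_id: str, limit: int = 10) -> list:
        """Return up to `limit` of the latest messages for a session, in insertion order."""
        return self._partitions.get(session_id, [])[-limit:]
```

Keeping every message for a session under one key means an agent can load recent context with a single lookup scoped to that session, which is the access pattern a chat experience needs on every turn.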

“As we did our research to find the best persistence storage, we explored different databases. What we found for Azure Cosmos DB was how easy it was to integrate it into our systems. We were able to make our initial proof of concept with a few lines of code and retrieve all the data very, very fast, like in a few milliseconds.”

Kristijan Risteski, solutions architect, Pantone

Pantone also chose Azure Cosmos DB for its scalability, which lets the team serve users all over the world with fast data retrieval.

This is a critical point. As applications shift from “doing” to “understanding,” databases must support far more than simple transactions. They need to handle massive volumes of operational data, adapt as AI workflows evolve, and support advanced scenarios like conversational memory, analytics, and vector-based search.

Pantone’s architecture demonstrates what it means to be “AI-ready.” Azure Cosmos DB provides the scalability and flexibility needed to track user prompts and conversations across sessions, along with analytics that help Pantone understand how customers interact with the Palette Generator over time.

From text to vectors—and what comes next

Another insight Pantone shared during the webinar was how their architecture is evolving to improve relevance, accuracy, and contextual understanding. While the current system already supports rich conversational experiences, the team outlined next steps that involve moving from traditional text storage to vector-based workflows. This includes embedding user prompts and contextual data, allowing for vector search, and enriching responses with deeper semantic understanding.
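The idea behind vector search can be sketched in a few lines: each stored prompt carries an embedding, and a query is matched to the stored items whose embeddings point in the most similar direction. The three-dimensional vectors and prompt texts below are made up for illustration. In practice the embeddings would come from an embedding model and the ranking would typically run inside the database rather than in application code.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list, items: list, k: int = 2) -> list:
    """Return the texts of the k items most similar to the query embedding."""
    scored = [(cosine_similarity(query, item["embedding"]), item["text"]) for item in items]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]


# Toy data: invented prompts with hand-written 3-dimensional "embeddings".
STORED_PROMPTS = [
    {"text": "warm autumn palette", "embedding": [0.9, 0.1, 0.0]},
    {"text": "cozy fall colors", "embedding": [0.8, 0.2, 0.1]},
    {"text": "icy winter blues", "embedding": [0.0, 0.2, 0.9]},
]

nearest = top_k([1.0, 0.0, 0.0], STORED_PROMPTS)
```

Here `nearest` contains the two autumn-themed prompts and excludes the winter one, even though none of the prompts share exact wording. That is the gain over plain text matching: similar meaning, not identical strings, drives retrieval.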

Azure Cosmos DB plays a role here as well: it supports vectorized data and integrates with agent orchestration and with embedding models deployed through Microsoft Foundry. This allows Pantone to iterate without rearchitecting the entire system, an essential capability when working in a fast-moving AI landscape.

Real-world results from agentic architecture

Pantone didn’t just talk about vision; they shared concrete results from real usage of the Palette Generator. According to data shared in the webinar, users across more than 140 countries engaged with the tool, generating thousands of unique chats within the first month of release and interacting in dozens of languages. The system observed multiple queries per user session, indicating that designers were actively experimenting, refining prompts, and exploring ideas conversationally.
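A metric like queries per session falls out directly from stored chat messages. The sketch below assumes each message record has hypothetical `sessionId` and `role` fields and counts only user turns. The sample records are invented and do not reflect Pantone’s usage data.

```python
from collections import Counter


def queries_per_session(messages: list) -> dict:
    """Count user-authored messages for each session id."""
    return dict(Counter(m["sessionId"] for m in messages if m["role"] == "user"))


def average_queries(messages: list) -> float:
    """Mean number of user queries per session; 0.0 when there are no sessions."""
    counts = queries_per_session(messages)
    return sum(counts.values()) / len(counts) if counts else 0.0


# Invented sample records for illustration only.
SAMPLE_MESSAGES = [
    {"sessionId": "s1", "role": "user"},
    {"sessionId": "s1", "role": "assistant"},
    {"sessionId": "s1", "role": "user"},
    {"sessionId": "s1", "role": "user"},
    {"sessionId": "s2", "role": "user"},
    {"sessionId": "s2", "role": "assistant"},
]
```

With this sample, session `s1` has three user queries and `s2` has one, so `average_queries(SAMPLE_MESSAGES)` is 2.0. An average well above one is the kind of signal that suggests users are refining prompts rather than asking once and leaving.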

Just as importantly, Pantone emphasized how rapidly they’ve been able to learn and adapt. Prompt sensitivity, user behavior, and architectural tradeoffs around speed, cost, and reliability all informed ongoing refinements. Azure Cosmos DB’s flexibility made it possible to capture these insights and evolve the experience without slowing innovation.

Lessons for anyone building agentic AI

Pantone’s journey reinforces several lessons I see repeated across customers building AI agents on Azure:

Agentic AI is inherently data driven. Without a real-time, scalable database layer, even the most advanced models struggle to deliver consistent, context-aware experiences.

Feedback loops matter. By capturing prompts, responses, and user interactions in Azure Cosmos DB, Pantone can continuously improve both the AI and the product experience itself.

Flexibility is nonnegotiable. AI architectures evolve quickly—from orchestration patterns to embedding strategies—and databases must evolve with them.

What Pantone has built with the Palette Generator is more than a feature; it’s a blueprint for how organizations can translate deep domain expertise into intelligent, agent-driven applications. By combining Microsoft Foundry, Azure AI services, and an AI-optimized database like Azure Cosmos DB, Pantone is showing how creativity and technology can move forward together.

As more organizations embrace agentic AI, the question won’t be whether they can deploy models—but whether their data foundations are ready to support real-time understanding, memory, and scale. Pantone’s journey makes that answer clear: AI-ready applications start with AI-ready data.

Explore Azure Cosmos DB

The post The data behind the design: How Pantone built agentic AI with an AI-ready database appeared first on Microsoft Azure Blog.

Source: Azure