Your pager is going off. Your service is down and your automated recovery processes have failed. You need to get people involved in order to get things fixed. But people are slow to react, have limited expertise, and tend to panic. However, they are your last line of defense, so you’re glad you prepared them for handling this situation.

At Google, we follow SRE practices to ensure the reliability of our services, and here on the Customer Reliability Engineering (CRE) team, we share tips and tricks we’ve learned from our experiences helping customers get up and running. If you read our previous post on shrinking the impact of production incidents, you might remember that the time to mitigate an issue (TTM) is the time from when a first responder acknowledges a page to the time users stop feeling pain from the incident. Today’s post dives deeper into the mitigation phase, focusing on how to train your first responders so they can react efficiently under pressure. You’ll also find templates so you can get started testing these methods in your own organization.

Understanding unmanaged vs. untrained responses

Effective incident response and mitigation requires both effective technical people and proper incident management. Without proper incident management, teams can end up fixing technical problems in parallel instead of working together to mitigate the outage. Under these circumstances, actions performed by engineers can worsen the state of the outage, since different groups of people may be undoing each other’s progress.
This total lack of incident response management is what we refer to as “unmanaged.”

Check out the Site Reliability Workbook for a real example of the consequences of the lack of proper incident management, along with a structure to introduce that incident management to your organization.

Solving the problem of the untrained response

What we’ll focus on here is the problem that arises when the personnel responding to the outage are managed under a properly established incident response structure, but lack the training to effectively work through the response. In this “untrained” response, the response is coordinated and those responding know and understand their roles, but they lack the technical preparedness to troubleshoot the problem and identify the mitigation path to restore the service. Even if the engineers were once prepared, they can lose their edge if the service has a very low number of pages or if the on-call shifts for an individual are widely spaced in time.

Other causes could be fast-paced software development or new service dependencies. Those can leave the on-call engineers unfamiliar with the tools and procedures needed to work through an outage. They know what they are supposed to be doing, but they just don’t know how to do it.

How can we fix the untrained response to minimize the mean time to mitigation (MTTM)?

Teaching response teams with hands-on activities

Humans cope with sudden changes in the environment, such as those introduced by an emergency, and respond in a measured way by establishing mental models that help with pattern recognition. Psychologists call this “expert intuition,” and it helps when identifying underlying commonalities in situations that we have never faced before: “Hmm, I don’t recognize this specifically, but the symptoms we’re seeing make me think of X.”

The best way to gain knowledge and, in turn, establish long-term memory and expert intuition isn’t through one-time viewings of documents or videos.
Instead, it’s through a series of exercises that include (but are not limited to) low-stakes struggles. These are situations with never-before-seen (or at least rarely seen) problems, in which failure to solve them will not have a severe impact on your service. These brain challenges help the learning process by practicing memory retrieval and strengthening the neural pathways that access memory, thus improving analytical capacity.

At Google, we use two types of exercises to help our learning process: Disaster Recovery Testing (DiRT) and Wheel of Misfortune.

DiRT, or how to get dirty

The disaster recovery testing we perform internally at Google is a coordinated set of events organized across the company, in which a group of engineers plan and execute real and fictitious outages for a defined period of time to test the effective response of the involved teams. These complex, non-routine outages are performed in a controlled manner, so that they can be rolled back as quickly as possible by the proctors should the tests get out of hand.

To ensure consistent behavior across the company, the coordinating team publishes rules of engagement that every participating team has to adhere to. These rules include:

- Prioritization: real emergencies take precedence over DiRT exercises
- Communication protocols for the different announcements and global coordination
- Impact expectations: “Are services in production expected to be affected?”
- Test design requirements: all tests must include a revert/rollback plan in case something goes wrong

All tests are reviewed and approved by a cross-functional technical team, different from the coordinating team. One dimension of special interest during this review process is the overall impact of the test. It not only has to be clearly defined, but if there’s a high risk of affecting production services, the test has to be approved by a group of VP-level representatives.
It is paramount to understand whether a service outage is happening as a direct result of the test being run, or whether something is out of control and the test needs to be stopped to fix the unrelated problem.

Some examples of practical exercises include disconnecting complete data centers, disruptively diverting the traffic headed to a specific application to a different target, modifying live service configurations, or bringing up services with known bugs. The resilience of the services is also tested by “disabling” people who might have knowledge or experience that isn’t documented, or removing documentation, process elements, or communication channels.

Back in the day, Google performed DiRT exercises in a different way, which may be more practical for companies without a dedicated disaster testing team. Initially, DiRT comprised a small set of theoretical tests done by engineers working on user-facing services, and the tests were isolated and very narrow in scope: “What would happen if access to a specific DNS server is down?” or “Is this engineer a single point of failure when trying to bring this service up?”

How to start: the basics

Once you embrace the idea that testing your infrastructure and procedures is a way to learn what works and what does not, and use the failures as a way of growing, it is very tempting to go nuts with your tests. But doing so can easily create too many complications in an already complex system.

To avoid the initial unnecessary overhead of interdependencies, start small with service-specific tests, and evolve your exercises, analyzing which ones provide value and which ones don’t.
Clearly defining your tests is also important, as it helps to verify whether there are hidden dependencies: “Bring down DNS” is not the same as “Shut down all primary DNS servers running in North America data centers, but not the forwarding servers.” Forwarding rules may mask the fact that all the DNS servers are down while clients send their DNS queries to external providers.

Over the years, your DiRT tests will naturally evolve and increase in size and scope, with the goal of identifying weaknesses in the interfaces between services and teams. This can be achieved, for instance, by failing services in parallel, or by bringing down entire clusters, buildings, geographical domains, cloud zones, network layers, or similar physical or logical groupings.

What to test: human learning

As we described earlier, technical knowledge is not everything. Processes and communications are also fundamental in reducing the MTTM. Therefore, DiRT exercises should also test how people organize themselves and interact with each other, and how they use the processes that have previously been established for the resolution of emergencies. It’s not helpful to have a process to purchase fuel for a generator that must keep running through an extended power outage if nobody knows the process exists, or where it is documented.

Once you identify failures in your processes, you can put in place a remediation plan. Once the remediation plan has been implemented and a fix is in place, you should make sure the fix is effective by testing it. After that, expand your tests and restart the cycle. If you plan to introduce a DiRT-style exercise in your company, you can use this Test Plan Scenario template to define your tests.

Of course, you should note that these exercises can produce accidental user-facing outages, or even revenue loss.
During a DiRT exercise, as we are operating on production services, an unknown bug can potentially bring an entire service to a point in which recovery is not automatic, easy, or even documented.

We think the learning value of DiRT exercises justifies the cost in the long term, but it’s important to consider whether these exercises might be too disruptive. There are, fortunately, other practices that can be used without creating a major business disruption. Let’s describe the other exercise we use at Google, and how you can try it.

Spinning the Wheel of Misfortune

A Wheel of Misfortune is a role-playing scenario to test techniques for responding to an emergency. The purpose of this exercise is to learn through a purely simulated emergency, using a traditional role-playing setup, where engineers walk through the steps of debugging and troubleshooting. It provides a risk-free environment, where the actions of the engineers will have no effects in production, so that the learning process can be reinforced through low-stakes struggles.

The use of scenarios portraying both real and fictitious events also allows the creation of complete operational environments. These scenarios require the use of skills and bits of knowledge that might not be used otherwise, helping the learning process by exposing the engineers to real (but rarely occurring) patterns to help build a complete mental model.

If you have played any role-playing game, you probably already know how it works: a leader such as the Dungeon Master, or DM, runs a scenario where some non-player characters get into a situation (in our case, a production emergency) and interact with the players, who are the people playing the role of the emergency responders.

Running the scenario

The DM is generally an experienced engineer who knows how the services work and interact in order to respond to the operations requested by the player(s).
It is important that the DM knows what the underlying problem is, and the main path to mitigate its effects. Understanding the information the consoles and dashboards would present, the way the debugging tools work, and the details of their outputs all add realism to the scenario, and help avoid derailing the exercise with information and details that are not relevant to the resolution.

The exercise usually starts with the DM describing how the player(s) becomes aware of the service breakage: an alert received through a paging device, a call from a call-center support person, an IM from a manager, etc. The information should be complete, and the DM should avoid holding back information that would otherwise be known during the real scenario. Information should also be relayed as is, without any commentary on what it might mean.

From there, the player should be driving the scenario: They should give clear explanations of what they want to do, the dashboards they want to visualize, the diagnostic commands they want to run, the config files they want to inspect, and more. The DM in turn should provide answers to those operations, such as the shape of the graphs, the outputs of the different commands, or the content of the files. Screenshots of the different elements (graphs, command outputs, etc.) projected on a screen for everybody to see should be favored over verbal descriptions.

It is important for the DM to ask questions like “How would you do that?” or “Where would you expect to find that information?” Exact file system paths or URLs are not required, but it should be evident that the player could find the relevant resource in a real emergency.
One option is for the player to do the investigation for real by projecting their laptop screen to the room and looking at the real graphs and logs for the service.

In these exercises, it’s important to test not only the players’ knowledge of the systems and their troubleshooting capacity, but also their understanding of incident command procedures. In the case of a large disaster, declaring a major outage and proceeding to identify the incident commander and the rest of the required roles is as important as getting to the root cause.

The rest of the team should be spectators, unless specifically called in by the DM or the player. However, the DM should exercise veto power for the sake of the learning process. For example, if the player declares the operations lead is another very experienced engineer and calls them in, with the goal of offloading all the troubleshooting operations, the DM could indicate that the experienced engineer is trapped inside a subway car without cell phone reception, and is unable to respond to the call.

The DM should be literal in the details: If a page has a three-minute escalation timeout and has not been acknowledged after the timeout, escalate to the secondary. The secondary can be a non-player who then calls the player on the phone to inform them about the page. The DM should also be flexible in the structure. If the scenario is taking too long, or the player is stuck on one part, allow suggestions from the audience, or provide hints through non-player observations.

Finally, once the scenario has concluded, the DM should state clearly and affirmatively that the situation is fixed, if that is the case. Allow some time at the end for debriefing and discussion, explaining the background story that led to the emergency and indicating the contributors to the situation.
If the scenario was based on a real outage, the DM can provide some factual details of the context, as they usually help in understanding the different steps that led to the outage.

To make the process of bootstrapping the exercises easier, check out the Wheel of Misfortune template we’ve created to help you with your preparation.

Putting it all together

The people involved in incident response directly affect the time needed to recover from an outage, so it’s important to prepare teams as well as systems. Now that you’ve seen how some testing and learning methods work, try them out for yourself. In the next few weeks, try running a simple Wheel of Misfortune with your team. Choose (or write!) a playbook for an important alert, and walk through it as if you were solving a real incident. You might be amazed at how many seemingly obvious steps need documenting.

Check out these resources to learn more:

- SRE workbook
- Disaster Recovery Testing Template
- Wheel Of Misfortune Template
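
If you want to see whether these exercises actually move the numbers, you need to track TTM and MTTM as defined earlier: the time from a first responder acknowledging a page to users no longer feeling pain, and the mean of that value across incidents. Here is a minimal Python sketch of that arithmetic. The function names and the (acknowledged, mitigated) timestamp pairs are assumptions made for illustration, not part of any Google tooling.

```python
from datetime import datetime, timedelta
from statistics import mean


def time_to_mitigate(acknowledged_at: datetime, mitigated_at: datetime) -> timedelta:
    """TTM for one incident: page acknowledged -> users stop feeling pain."""
    if mitigated_at < acknowledged_at:
        raise ValueError("mitigation cannot precede acknowledgement")
    return mitigated_at - acknowledged_at


def mean_time_to_mitigate(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """MTTM: the arithmetic mean of TTM over a set of incidents."""
    if not incidents:
        raise ValueError("need at least one incident")
    seconds = [time_to_mitigate(ack, fix).total_seconds() for ack, fix in incidents]
    return timedelta(seconds=mean(seconds))


# Hypothetical incident log: (acknowledged, mitigated) timestamp pairs.
incident_log = [
    (datetime(2019, 3, 1, 10, 0), datetime(2019, 3, 1, 10, 30)),
    (datetime(2019, 3, 9, 2, 15), datetime(2019, 3, 9, 3, 15)),
]
mttm = mean_time_to_mitigate(incident_log)
```

Comparing this value for the periods before and after you introduce regular exercises gives a rough signal, though with few incidents a single long outage will dominate the mean.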

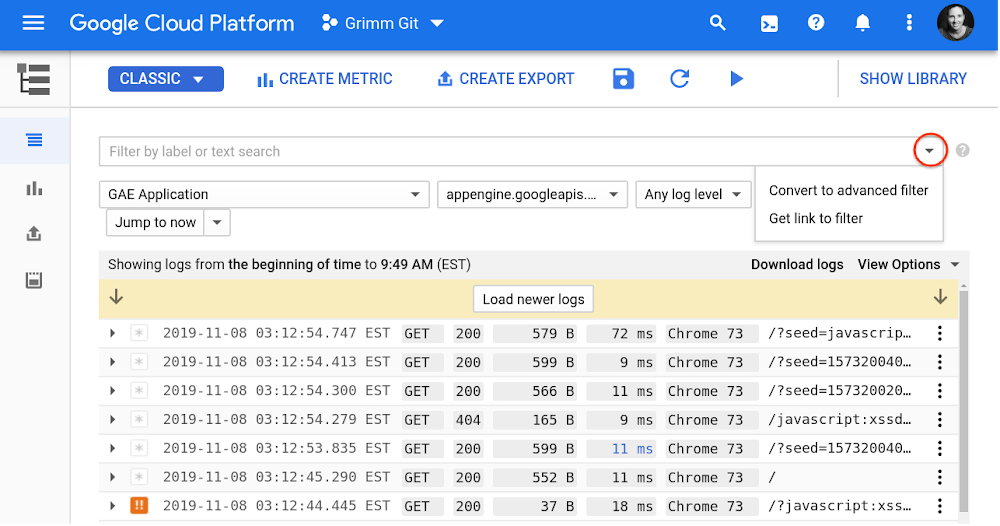

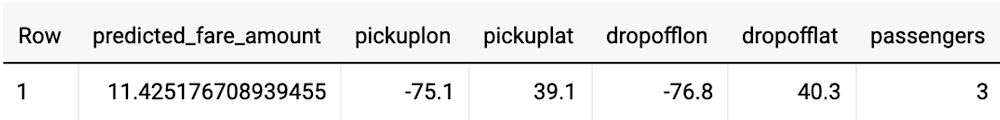

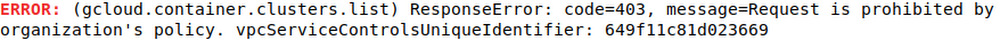

Source: Google Cloud Platform