Google Cloud Next ’20: OnAir, delivering infrastructure for all your apps

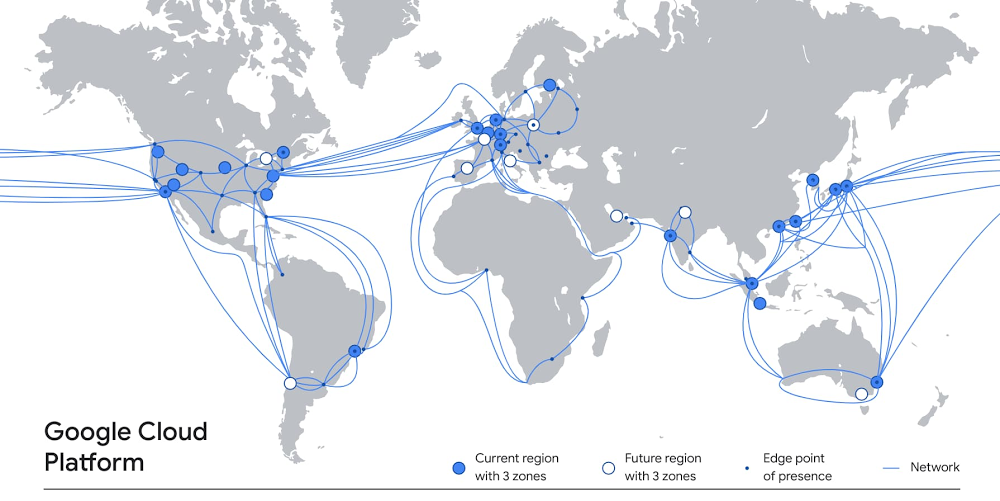

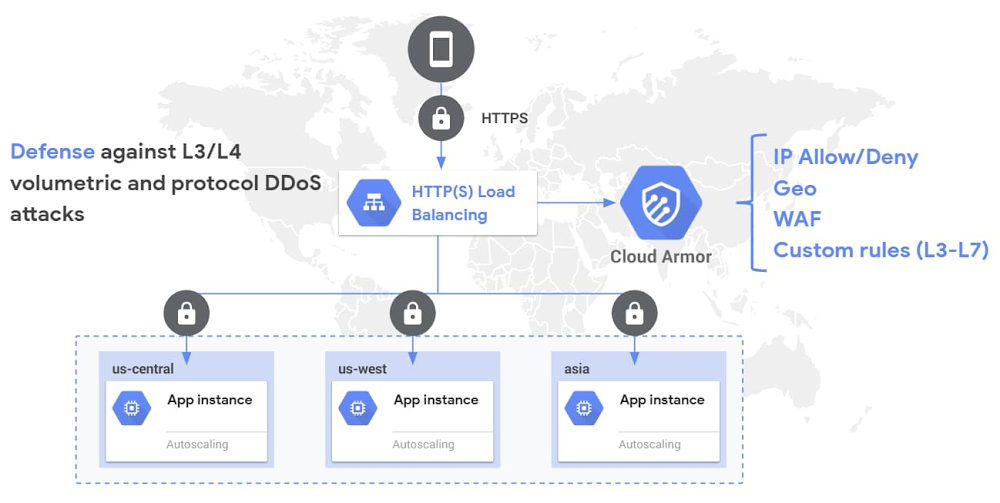

The applications you run on Google Cloud rely on an extensive infrastructure deployed around the world: dozens of data centers and hundreds of points of presence, connected by a system of high-capacity fiber optic cables that encircles the globe. Inside our data centers, you’ll find the latest compute, storage and network systems and services on which to run a wide variety of workloads, from lightweight microservices to high performance computing to demanding enterprise applications. And that infrastructure is growing all the time, delivering more capacity and resilience, and better performance for your end users.

At the same time, we’re always working to hide that complexity from you, so that setting up and using Google Cloud infrastructure is easy and seamless. Today, we want to tell you about recent enhancements to Google Cloud’s global infrastructure, as well as new deployment options and functionality that you can take advantage of.

A global presence

Let’s start with our worldwide footprint. At Next ’19 in San Francisco, Google Cloud counted 19 regions around the world. Since then, we’ve opened five new regions in Jakarta, Las Vegas, Osaka, Salt Lake City and Seoul. We’ve also announced forthcoming regions, including Toronto, Warsaw, Delhi, Doha, and Melbourne. Combined with 144 network edge locations and counting, these regions deliver the services, capacity and performance you need to ensure a terrific experience for your users.

Those regions rely on robust networks to transport data between them, including private subsea cables. Today, we announced the new Grace Hopper cable that will run between the United States, the United Kingdom, and Spain.
When Grace Hopper is commissioned in 2022, it will be one of the first new transatlantic cables to go live since 2003. It will deliver 16 fiber pairs of capacity and power a variety of Google services such as Gmail, Meet and, of course, Google Cloud.

A flexible, secure network

Enterprises are increasingly adopting hybrid and multi-cloud architectures to deliver the best experiences for their customers. The network is the foundation of this transformation, but it is becoming far more complex to manage, secure, and scale. To help enterprise customers with these challenges, we recently expanded our partnership with Cisco to bring the best of Cisco and Google Cloud technologies together in a turnkey networking solution: Cisco SD-WAN Cloud Hub with Google Cloud. This joint solution will help our customers simplify enterprise networking and advance security capabilities, while helping IT teams minimize operational costs and meet application service-level objectives.

And today, we’re announcing a new secure, easy way to connect to Google Cloud: Private Service Connect. By taking a service-centric approach to networking and abstracting the underlying infrastructure, Private Service Connect creates service endpoints in consumer VPCs that provide private connectivity and policy enforcement, so you can easily connect services across different networks and organizations. Further, with Private Service Connect, traffic is not exposed to the public internet; customers can access services directly and securely over Google’s global network. Read this blog to learn more.

Private Service Connect complements Service Directory, which we launched in March to help customers simplify service management and operations. Together, Private Service Connect and Service Directory let you easily and securely connect to and manage services at scale.
As enterprises use Private Service Connect to access more first- and third-party services, Service Directory helps engineering teams publish and discover them.

In addition, you can further manage your network with Network Intelligence Center, Google Cloud’s comprehensive network monitoring, verification and optimization platform. Centralized monitoring reduces troubleshooting time and effort, increases network security and improves overall user experience. We are excited to announce updates to two of the modules in the platform: Firewall Insights is now in beta and Performance Dashboard is generally available. Firewall Insights brings intelligence and proactive management to network security, while Performance Dashboard offers real-time visibility into packet loss and latency at a per-project level.

Finally, for customers with hybrid or multi-cloud deployments, Cloud CDN now supports serving content from on-premises data centers, or even other clouds. See this infographic to learn more about Cloud CDN.

Industry-leading compute

Of course, one of the many reasons people choose Google Cloud is access to the latest high-performance compute and storage services. On the compute side, Google Compute Engine can be configured with some of the most powerful, cost-effective hardware, like efficient VMs (E2), one of our newest families of general-purpose virtual machines. E2 features dynamic resource management that delivers the lowest total cost of ownership (TCO) on Google Cloud, and it is our fastest growing new virtual machine family on Compute Engine. It now offers machine types with up to 32 vCPUs and is available in all Google Cloud regions.

We also recently announced the Accelerator-Optimized VM family (A2), the first public cloud offering to feature the NVIDIA Ampere A100 GPUs.
The A2 was designed for demanding workloads such as machine learning and high performance computing, providing up to 16 A100 GPUs in a single instance.

For customers running large VM fleets, we announced the general availability of OS patch management service, to keep your operating systems up to date and reduce the risk of security vulnerabilities. The service works on Compute Engine and enables you to apply OS patches across a set of VMs, receive patch compliance data across your Windows or Linux environments, and automate installation of OS patches, all from one centralized location. The current release of OS patch management is available at no cost through December 31, 2020. To learn more about the service, check out our Next session: Managing Large Fleets of Compute Engine VM Fleets.

The right storage for all your workloads

For many applications, performance is only as good as the underlying storage. If you need to support workloads such as Electronic Design Automation (EDA), video processing, genomics, manufacturing and financial modeling, we recently launched Filestore High Scale, a high-performance, scale-out file system. Currently in beta, the new Filestore High Scale tier is a fully managed service and makes it easy to mount file shares on Compute Engine VMs. With High Scale, it’s simple to deploy a file system that can scale to hundreds of thousands of IOPS, tens of GB/s of throughput, and hundreds of TBs.

And if you’re looking for reliable, high-performance block storage, there’s Persistent Disk, which delivers industry-leading price performance for both HDD and SSD. Today we’re excited to announce an expanded approach to our Persistent Disk product portfolio, giving you the ability to pick the performance that best fits your workload. For most enterprise applications, we will have Balanced PD, giving you the best price per GB.
For customers seeking the best price per IOPS for performance-sensitive workloads such as databases or persistent caches, we will have Performance PD. We will also introduce our Extreme PD SKU, well-suited for the highest performance workloads such as SAP HANA or large in-memory databases. This strategy is all about tailoring your storage to your workload, so we can deliver on your price and performance needs.

Support for all your workloads

All this infrastructure is in service of running your workloads, however you see fit. In the early days of Google Cloud, we started with a cloud-native platform as a service (App Engine), but today, our infrastructure supports a broad range of your most demanding enterprise workloads.

For example, you can now run your VMware workloads on Google Cloud, using our Google Cloud VMware Engine service, which recently became generally available. This first-party offering lets you run a fully managed VMware environment so you can easily lift and shift your existing on-premises VMware-based workloads into Google Cloud with no changes to your apps, tools or processes.

Or perhaps you need to run Microsoft and Windows workloads. Google Cloud offers a first-class experience for these, too. Customers cite reliability and performance advantages as reasons they initially chose Google Cloud for migrating these workloads. They can also leverage the platform’s unique features (sole-tenant nodes, CPU overcommit, containerization, and managed services) to reduce their overall license spend. Google Cloud also provides an opinionated path to modernization to further reduce licensing costs and move to open-source alternatives.

As SAP customers continue to adopt Google Cloud, we are continuously innovating to improve ease of migration, performance and scalability, as well as to lower barriers to entry for analytics and machine learning.
Recently, we updated our SAP HANA certifications to include Google Compute Engine’s N2 family of VM instances, based on 2nd Generation Intel Xeon Scalable Processors. These N2 VMs improve performance and reduce waste by aligning better with SAP licensing increments. We also added SAP NetWeaver certifications for AMD-based N2D VM instances, which, based on our SAP Application Performance Standard (SAPS) benchmark testing, deliver better performance than prior Google Cloud offerings at a lower cost.

Finally, in addition to running workloads on Google Cloud Platform, our Bare Metal Solution lets you run specialized workloads such as Oracle databases on dedicated hardware, close to Google Cloud. This can simplify your path from on-premises to cloud, while reducing migration risks and helping you lower overall costs faster. We recently brought Bare Metal Solution to five additional regions, with four more regions on tap by the end of the year.

Migrate and manage with ease

To make it easier to migrate rapidly to Google Cloud, today we’re announcing the public availability of our Rapid Assessment and Migration Program (RAMP). Built on feedback from customers and partners, RAMP offers end-to-end migration guidance and training, as well as incentives to help you offset a significant portion of your migration cost. RAMP also brings together a full suite of tools for every phase of the migration journey to accelerate the process.

And once your workloads are on Google Cloud, you don’t want to have to choose between performance and cost, or functionality and ease of use. Our goal is to create a platform that delivers terrific performance, that is easy to use, for a great price. That’s why we built Active Assist, a portfolio of intelligent tools and capabilities to help you manage complexity in your cloud operations.
Active Assist leverages data, machine learning, automation, and intelligence to help you focus on three key areas: making proactive improvements to your cloud with smart recommendations, preventing mistakes from happening in the first place with better analysis, and figuring out why something went wrong with intuitive troubleshooting tools. To learn more about Active Assist, be sure to check out our Next OnAir session, CMP100: Cloud is Complex. Managing it Shouldn’t Be.

New security controls

We want you to be able to operate your mission-critical workloads securely, efficiently, and effectively, and we strive to simplify and reduce toil along the way. Today we’re simplifying the way you can use Google Cloud Armor to help protect your websites and applications from exploit attempts, as well as Distributed Denial of Service (DDoS) attacks.

We’re announcing the beta release of Cloud Armor Managed Protection Plus, a bundle of products and services that helps protect your internet-facing applications for a monthly subscription fee. We’re making curated Named IP Lists available in beta. We’re expanding our set of pre-configured WAF rules with beta rules for Remote File Inclusion (RFI), Local File Inclusion (LFI), and Remote Code Execution (RCE).

You can learn more about our security announcements here.

Infrastructure is hard; Google Cloud makes it easy

Building and managing the right infrastructure to power your workloads can be hard. We know: we do it day in, day out, at a scale that few other providers can lay claim to. Thankfully, building and managing your cloud infrastructure doesn’t have to be difficult. Simply build your environment on top of Google Cloud, and automatically benefit from our global presence, robust network, industry-leading compute and storage hardware, and intelligent, automated management capabilities.
To learn more about Google Cloud infrastructure, register for Google Cloud Next ’20: OnAir, and check out over 50 infrastructure keynote, breakout and spotlight sessions that go live this week.

Source: Google Cloud Platform