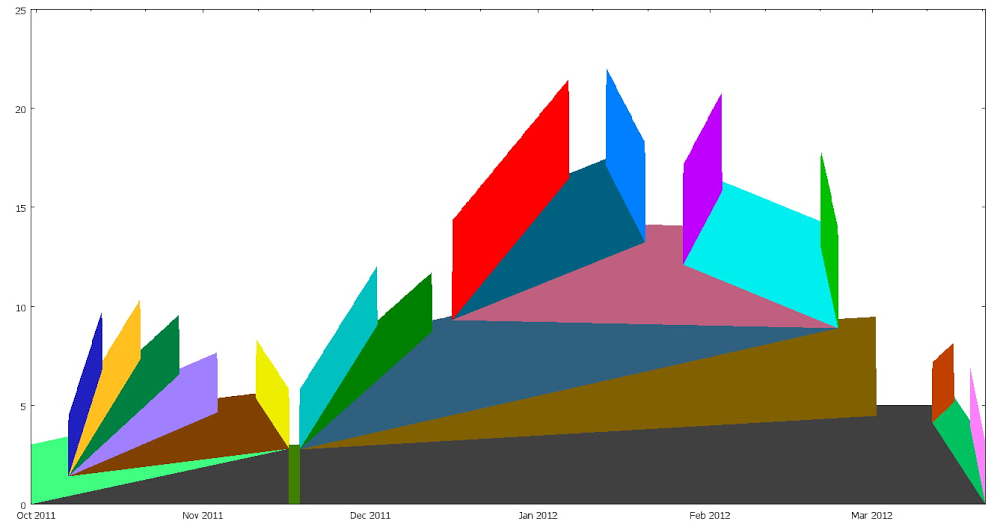

Have you ever wondered what, fundamentally, scientifically, makes a piece of cake different from a slice of bread or a cookie? Me neither. But now this important, controversial question finally has an answer, thanks to explainable machine learning. (Sort of.)

In machine learning, explainability is the study of how we can make models more interpretable, so that we can understand, at least to some extent, why they make the predictions they do. That is an improvement over taking the predictions of a deep neural net at face value without understanding what contributed to the model output. In this post, we’ll show you how to build an explainable machine learning model that analyzes baking recipes, and we’ll even use it to come up with our own new recipes, no data science expertise required.

This project idea comes from Sara Robinson, who works on AI for Google Cloud. In April, she started a storm of pandemic baking and, like any good machine-learning-practitioner-baker, soon turned her modeling skills to baking. She collected a dataset of recipes and then built a TensorFlow model that took in lists of ingredients and spit out predictions, like:

“97% bread, 2% cake, 1% cookie”

Sara’s model could accurately classify recipes by type, but she also used it to come up with a completely new recipe: something her model deemed to be about 50% cookie and 50% cake, a “cakie.”

Sara Robinson’s original cake-cookie hybrid, the “cakie.”

Results were promising:

“It is yummy. And it strangely tastes like what I’d imagine would happen if I told a machine to make a cake cookie hybrid.”

You can find her cakie recipe on her original blog.

This December, Dale and Sara teamed up to build a baking 2.0 model, using a bigger dataset, new tools, and an explainable model, one which would give insight into what makes cakes cakes and cookies cookies and breads breads.
Plus, we came up with a new hybrid recipe: the “breakie,” a bread-cookie hybrid (we wanted to call it a brookie, but that name was already taken).

Dale’s first bite of a “cakie”

Keep reading to learn how we did it, or scroll to the end to see our breakie recipe.

Build an Explainable No-Code Model with ML

For this project, we decided to use a Google Cloud tool called AutoML Tables. It’s a no-code way to build machine learning models on tabular data, like the kind you’d find in a spreadsheet or database. We chose AutoML Tables because it’s easy to use and had just been upgraded with new, built-in explainability tools like Feature Attribution (more on that in a bit).

Collecting and Preparing Data

To start, we collected a dataset of about 600 baking recipes for cookies, cakes, and breads from the web. (We can’t share the dataset here because we don’t own it, but you can definitely find your own recipe datasets online.) Next, we whittled down each of those 600 recipes to 16 core ingredients:

- Yeast
- Flour
- Sugar
- Egg
- Fat (sum of any type of oil)
- Milk
- Baking Soda
- Baking Powder
- Apple Cider Vinegar
- Buttermilk
- Banana
- Pumpkin Puree
- Avocado
- Water
- Butter
- Salt

We didn’t include anything else, like cinnamon, chocolate chips, or nutmeg, in our model.
The choice of these 16 ingredients was slightly arbitrary, but mainly we were trying to include ingredients that affect texture and consistency and exclude ingredients that don’t affect texture and that might even let the model “cheat.” For example, you could theoretically add chocolate chips to any recipe, but they’re almost never found in bread, a hint we didn’t want our model to learn from.

Oh, speaking of bread: we also made the executive decision to move sweet breads (like pumpkin bread, banana bread, zucchini bread, etc.) from the “bread” category to the “cake” category, based mostly on the wisdom of Great British Bake Off judge Paul Hollywood, who said on Instagram that banana bread is most definitely not a bread.

Because recipes give ingredients in all different measurement units (butter could be written in sticks or tablespoons or ounces), we converted all measurement units to ounces (using a very long and unsophisticated if statement).

And finally, for our last step of preprocessing, we used a little data augmentation trick. Data augmentation is a method for creating new training examples (in this case, rows) from data you already have. We wanted our model to be insensitive to the serving size of a recipe, so we decided to randomly double and triple ingredient amounts. Since a recipe for 2x or 3x a cake should be more or less identical to the original cake recipe, we were able to generate new recipe examples for free (woohoo!).

Building a Model

Next we built a classification model using AutoML Tables, which was the easiest part of this project.
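
Before moving on to the console, here is a minimal Python sketch of the two preprocessing steps described above: converting amounts to ounces and augmenting the data by scaling recipes. It is an illustration rather than the code we used. It swaps the long if statement for a lookup table, and the conversion table, the function names `to_ounces` and `augment`, and the row layout (a dict with a `type` label plus ingredient amounts) are all assumptions made for this example. Volume units are treated as fluid ounces, which ignores ingredient density.

```python
import random

# Hypothetical conversion factors to ounces (illustrative, not the original table).
# Volume units are treated as US fluid ounces, ignoring ingredient density.
OUNCES_PER_UNIT = {
    "oz": 1.0,
    "cup": 8.0,
    "tbsp": 0.5,
    "tsp": 1.0 / 6.0,
    "stick": 4.0,  # one US stick of butter
}


def to_ounces(amount, unit):
    """Convert an ingredient amount to ounces using the lookup table above."""
    return amount * OUNCES_PER_UNIT[unit]


def augment(rows, factors=(2, 3), seed=0):
    """Return the original rows plus one randomly scaled copy of each row.

    Each row is a dict with a "type" label and ingredient amounts in ounces.
    The label is copied unchanged; every amount is multiplied by the same
    randomly chosen factor (double or triple by default).
    """
    rng = random.Random(seed)
    augmented = list(rows)
    for row in rows:
        factor = rng.choice(factors)
        scaled = {
            key: value if key == "type" else value * factor
            for key, value in row.items()
        }
        augmented.append(scaled)
    return augmented
```

With this sketch, `to_ounces(1.25, "stick")` gives 5.0 ounces of butter, and calling `augment` on a list of recipe rows returns a list twice as long, where each added row keeps its label and has all of its amounts doubled or tripled.
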
You can find Tables under the “Artificial Intelligence” section of the GCP console.

Once you create a new Tables model, you can import data directly from a CSV file, Google Sheets, or a BigQuery database.

Once your data is imported, you’ll be able to see it in the “Train” tab.

AutoML Tables automatically computes some useful metrics about your data for you, like what percent of each column has missing values or how many distinct values it contains. It also computes the handy metric “Correlation with Target.” “Target” in this case is what we’re trying to predict: cookie, cake, or bread. You can set it in the drop-down up top, which in our case is the column labeled “type.”

Once “target” is set, AutoML will calculate, for each ingredient in isolation, how correlated it is with the target. In our data, baking soda has the highest correlation with recipe type (0.615), meaning that if you had to pick only one ingredient to base your decision on, baking soda would be a good bet.

But in reality, baked goods are defined by complex interactions between ingredients, and looking at baking soda alone is not accurate enough for us. So, we’ll build a machine learning model to predict recipe type by clicking on that “Train Model” button in the top right of the UI. From there, you’ll be given a dialog that lets you name your model, specify how long you want your model to train, and indicate which columns you want to use for training (these are called “input features”).

Since we only want our model to look at ingredients, I’ll select only ingredient columns from the “Input feature selection” drop-down.

Next, hit “Train model” and wait. In the background, AutoML will train and compare a slew of machine learning models to find one that’s accurate. This could take a few hours.

When your model is done training, you can view it in the “Evaluate” tab, which gives you lots of useful stats on model quality.
As you can see, our model was pretty accurate.

Model Explainability with Feature Importance

If you scroll down on the “Evaluate” tab, you can start to gain more insight into the model through “Feature Importance” scores.

These scores highlight how heavily our model depends on each ingredient when making a prediction. In our case, it seems like butter, sugar, yeast, and egg are important predictors of whether a recipe is a cookie, cake, or bread.

The feature importance scores above show the overall importance of each ingredient to the model, which AutoML calculates by looking at aggregate feature importance across our test set. But we can also look at feature importance through the lens of a single prediction, which might be different.

For example, maybe milk isn’t in general an important model feature, but when sugar and butter values are low, milk becomes more important. In other words, we’d like to know: what features did the model depend on when it made one particular prediction?

In the “Test and Use” tab, we can make predictions about individual recipes and see those local feature importance scores. For example, when I feed my model a recipe for cake, it correctly predicts the category is “cake” (0.968 confidence). Meanwhile, the local feature importance scores tell me that egg, fat, and baking soda were the ingredients that most contributed to that prediction.

Coming Up with a Breakie Recipe

Thanks to those feature importance scores, we were able to figure out what made the model think a recipe was for a cookie, cake, or bread, and we used that knowledge to come up with a breakie: something our model thought was roughly 50% cookie, 50% bread.

Of course, once we found our breakie recipe, we had to experimentally verify it in the lab:

Sara’s breakies, fresh out of the oven.

Success! We wound up with something that tasted like a cookie but was airier, like a bread.
Machine learning: it works!

We should caveat that while our model gave us ingredients, it didn’t spit out any baking directions, so we had to improvise those ourselves. And we added chocolate chips and cinnamon for good measure.

If you want to verify our results (for science), try our breakie recipe for yourself. And if you still have an appetite for ML, find more project ideas from Making with ML on YouTube.

Breakie

Makes ~16 bread-inspired cookies.

Ingredients

- 2 teaspoons active dry yeast
- ¼ cup warm milk
- 2 cups flour
- 1 egg, lightly beaten
- 1 teaspoon baking soda
- ½ teaspoon salt
- ¼ teaspoon cinnamon
- ½ cup white sugar
- ¼ cup brown sugar
- 1¼ sticks unsalted butter, room temperature
- ⅓ cup chocolate chips

Instructions

Preheat oven to 350 degrees Fahrenheit. Line a baking sheet with parchment paper and lightly grease it with cooking spray.

Make the bread part: Heat milk in the microwave until it is warm to the touch, but not hot. Dissolve yeast in warm milk and set aside. In a large bowl, combine flour, baking soda, salt, and cinnamon. Add the milk and yeast mixture to the flour mixture and stir until combined. Add the lightly beaten egg to the flour mixture and stir until combined. When you’re done mixing, it may seem like there is too much flour. That’s OK; set this mixture aside for now.

Make the cookie part: In a stand mixer fitted with a paddle attachment, combine room temperature butter with both sugars on medium speed until smooth. Slowly incorporate the flour mixture into the butter mixture, about a cup at a time. Stir in chocolate chips.

Form dough into balls (in our recipe test the cookie dough balls were 2.5 tablespoons, or 50 grams if you have a kitchen scale) and place a few inches apart on your baking sheet. Bake 13 to 15 minutes, until breakies are golden brown on the outside and start to crack slightly on top. Let cool on a wire rack.
Enjoy, and let us know what you think the bread-to-cookie ratio of these is!

Source: Google Cloud Platform