Think big: Why Ricardo chose Bigtable to complement BigQuery

With over 3.7 million members, Ricardo is the most trusted, convenient, and largest online marketplace in Switzerland. We successfully migrated from on-prem to Google Cloud in 2019, a move that also raised some new use cases that we were keen to solve. With our on-premises data center closing, we were under deadline to find a solution for these use cases, first looking at our data stream process. We found a solution using both Cloud Bigtable and Dataflow from Google Cloud. Here, we take a look at how we decided upon and implemented that solution, as well as at future use cases on our roadmap.

Exploring our data use cases

For analytics, we had originally used the Microsoft SQL data warehouse, and had decided to switch to BigQuery, Google Cloud’s enterprise data warehouse. That meant that all of our workloads had to be pushed there as well, so we chose to run the imports and the batch loads from Kafka into BigQuery through Apache Beam.

We also wanted to give internal teams the ability to perform fraud detection work through our customer information portal, to help protect our customers from the sale of fraudulent goods or from actors using stolen identities.

Also, our engineers had to work quickly to address how to move our two main streams of data, which had been stored in separate systems. One is for articles: essentially, the items for sale posted to our platform. The other is for assets, which contain the various descriptions of the articles. Before, we’d insert the streams into BigQuery, and then do a JOIN. One of the challenges is that Ricardo has been around for quite some time, so we sometimes have an article that hasn’t been stored since 2006, or gets re-listed, so it may miss some information in the asset stream.
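To make the article/asset join and the missing-asset case concrete, here is a minimal sketch in plain Python. The field names and sample records are hypothetical and chosen only for illustration; they are not Ricardo's actual schema, and the real join ran in BigQuery rather than in application code.

```python
# Hypothetical sample data: field names and values are assumptions for illustration.
articles = [
    {"article_id": "1001", "title": "Vintage watch"},
    {"article_id": "1002", "title": "Mountain bike"},  # e.g. a re-listed article
]

# Assets keyed by article ID; article 1002 has no asset on record.
assets = {
    "1001": {"description": "Swiss-made, 1970s"},
}


def enrich(article, asset_lookup):
    """Attach the matching asset to an article.

    Behaves like a LEFT JOIN: the article is kept even when no asset
    is found, in which case the "asset" field is None.
    """
    asset = asset_lookup.get(article["article_id"])
    return {**article, "asset": asset}


enriched = [enrich(article, assets) for article in articles]

for row in enriched:
    print(row["article_id"], row["asset"])
```

Keeping the article when the lookup misses matters here because old or re-listed articles may have no matching asset, and an inner join would silently drop them.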
One problem, which solution?

Doing research into solving our data stream problem, I came across a Google Cloud blog that provided a guide to common use patterns for Dataflow (Google Cloud’s unified stream and batch processing service), with a section on Streaming Mode Large Lookup Tables. We have a large lookup table of about 400 GB with our assets, in addition to our article stream. But we needed to be able to look up the asset for an article. The guide suggested that a column-oriented system could answer this kind of query in milliseconds, and could be used in a Dataflow pipeline to both perform the lookup and update the table.

So we explored two options to solve the use case. We tried out a prototype with Apache Cassandra, the open-source, wide column store, NoSQL database management system, which we can stream into from BigQuery using Apache Beam to preload it with the historical data. We built a new Cassandra cluster on Google Kubernetes Engine (GKE), using the Cass Operator, released by DataStax as open source. We created an index structure, optimized the whole thing, did some benchmarks, and happily found that everything worked. So we had the new Cassandra cluster, the pipeline was consuming assets and articles, and the assets were looked up from the Cassandra store where they were also stored.

But what about day-to-day tasks and hassles of operations? Our Data Intelligence (DI) team needs to be completely self-sufficient. We’re a small company, so we need to move fast, and we don’t want to build a system that quickly becomes legacy. We were already using and liking the managed services of BigQuery. So using Bigtable, which is a fully managed, low-latency, wide column NoSQL database service, seemed like a great option.

A 13 percent net cost savings with Bigtable

In comparison to Bigtable, Cassandra had a strike against it in the area of budgeting. We found that Cassandra needed three nodes to secure the availability guarantees.
With Bigtable, we could have a fault-tolerant data pipeline/Apache Beam pipeline running on Apache Flink. We could also have fault tolerance in the case of low availability, so we didn’t need to run the three nodes. We were able to schedule 18 nodes when we ingested the history from BigQuery into Bigtable for the lookup table, but as soon as the lookup table was in, we could scale down to two nodes or even one, because it can handle 10,000 requests per second guaranteed. Bigtable takes care of availability and durability behind the scenes, so it supplies guarantees even with one node.

With this realization, it became quite clear that the Bigtable solution was easier to manage than Cassandra, and it was also more cost-effective. As a small team, when we factored in the ops learning costs, the downtime, and the tech support needed for the Cassandra-on-GKE solution, it was already more affordable to use one TB in a Bigtable instance to start out with, versus the Cassandra-on-GKE solution with a three-node E2 cluster of 8-CPU VMs, which is pretty small. Bigtable was the easier, faster, and less expensive answer. By moving such lookup queries to Bigtable, we ultimately saved 13 percent in BigQuery costs. (Keep in mind that these are net savings, so the additional cost for running Bigtable is already factored in.)

As soon as this new solution lifted off, we moved another workload to Bigtable, where we integrated data from Zendesk tickets for our customer care team. We worked on integrating the customer information and making it available in Bigtable, with the product key lookup linked to the Zendesk data, so that this information could be presented to our customer care agents instantly.

Benefiting from the tight integration of Google Cloud tools

If you’re a small company like ours, building out a data infrastructure where the data is highly accessible is a high priority. For us, Bigtable is our store where we have processed data available to be used by services.
The integration of the services between Bigtable, BigQuery, and Dataflow makes it so easy for us to make this data available. One of the other reasons we found the platform on Google Cloud to be superior is that with Dataflow and BigQuery, we can make quick adjustments. For example, one morning, thinking about an ongoing project, I realized we should have reversed the article ID: the row key should be a reversed string instead of a normal string to prevent hotspotting. To do that, we could quickly scale up to 20 Bigtable nodes and 50 Dataflow workers. Then the batch jobs read from BigQuery and wrote to the newly created schema in Bigtable, and it was all done in 25 minutes. Before Bigtable, this kind of adjustment would have taken days to complete.

Bigtable’s Key Visualizer opens up opportunities

The idea to reverse the article ID came to me as I thought about the Key Visualizer from Bigtable, which is so nicely done and easy to use compared to our previous setup. It’s tightly integrated, but easy to explain to others. We use SSD nodes, and the only configuration we need to worry about is the number of nodes and whether we want replication or not. It’s like the volume on a stereo, and that was really mind-blowing, too. Scaling up and down is really fast, and with Dataflow, it doesn’t drop anything, you don’t have to pre-warm anything, you can just schedule it and share it while it’s running. We haven’t seen ease of scaling like this before.

Considering future use cases for Bigtable

For future cases, we’re working on improvements to our fraud detection project involving machine learning (ML) that we hope to move to Bigtable. Currently we have a process, triggered every hour by Airflow in Cloud Composer, that takes the data from BigQuery for the last hour and runs over it in a Python container, which loads the model and takes the data as an input.
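As a brief aside on the reversed article ID from the Key Visualizer discussion above: Bigtable stores rows sorted lexicographically by row key, so sequentially assigned IDs place the newest rows next to each other and can concentrate traffic on one part of the key space. A minimal sketch of the idea follows, assuming article IDs are sequential numeric strings; the function name and sample IDs are hypothetical, and this is not the pipeline code itself.

```python
def reversed_row_key(article_id: str) -> str:
    """Return the article ID with its characters reversed.

    Assumption: IDs are assigned sequentially, so their last digits
    change fastest. After reversing, those digits lead the key and
    neighbouring IDs no longer sort next to each other.
    """
    return article_id[::-1]


# Hypothetical sequential IDs that share the long prefix "123456".
sequential_ids = ["1234567", "1234568", "1234569"]
row_keys = [reversed_row_key(article_id) for article_id in sequential_ids]

for article_id, row_key in zip(sequential_ids, row_keys):
    print(article_id, "->", row_key)
```

Reversal is lossless, so applying the same function to a row key recovers the original article ID when it is needed downstream.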
If the algorithm is 100 percent sure the article is fraudulent, it blocks the product, and unblocking then requires a manual request from customer care. If the algorithm is less certain, the article is flagged and goes into a customer care inbox, where the agents check it.

What’s currently missing in the process is an automated feedback loop: a learning adjustment if the customer care agent replies, “This is not fraud.” We could actually write some code to perform the action, but we need a faster solution. It would make more sense to source this in the pipeline directly from Bigtable for the learning models.

In the future, we’d also like to have the Dataflow pipeline writing to BigQuery and Bigtable at the same time for all of the important topics. Then, we could source these kinds of use cases and serve them directly from Bigtable instead of BigQuery, making them soft “real time.”

With the 13 percent savings in BigQuery costs, and the tight integration of all the Google Cloud managed services like Bigtable, our small (but tenacious) DI team is free from the hassles of operations work on our data platform. We can devote that time to developing solutions for these future use cases and more.

See what’s selling on Ricardo.ch. Then, check out our site for more information about the cloud-native key-value store Bigtable.

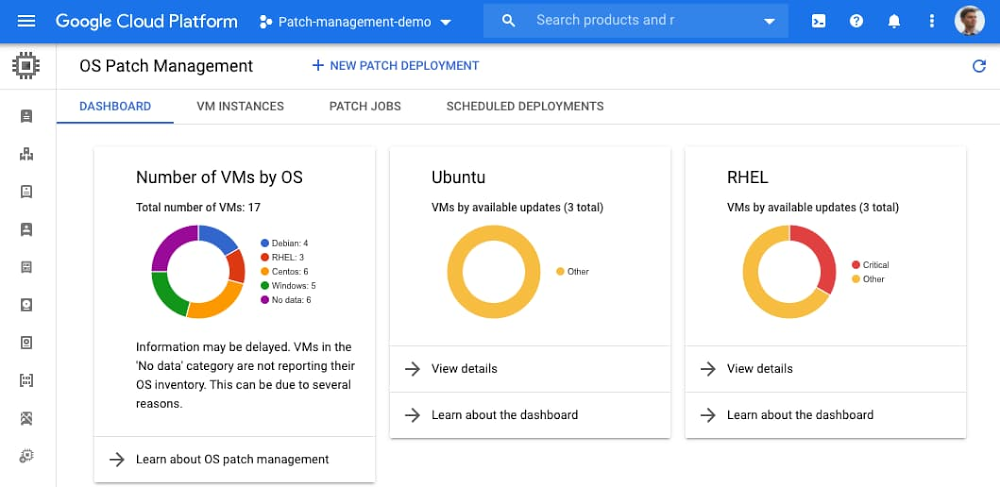

Source: Google Cloud Platform