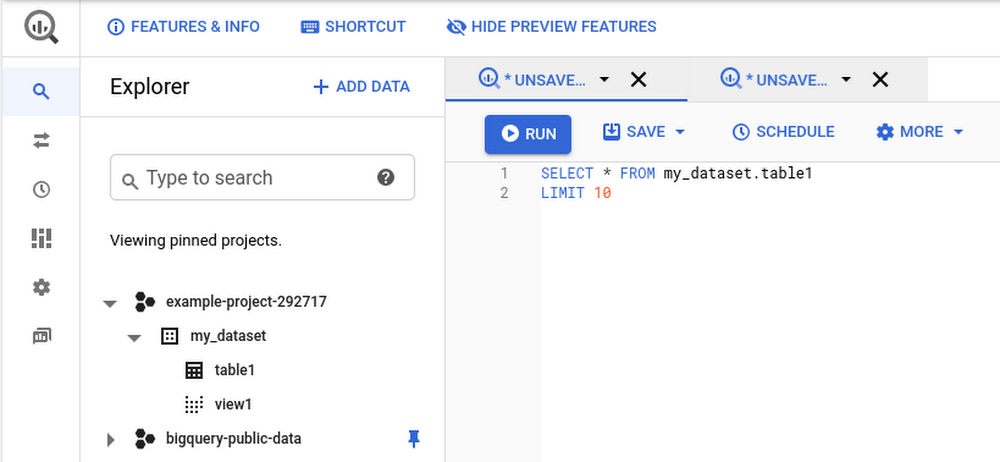

In our first blog post in this series, we talked broadly about the democratization of data and insights. Our second blog took a deeper look at insights derived specifically from machine learning, and how Google Cloud has worked to push those capabilities to more users across the data landscape. In our third and final blog in this series, we’ll examine data access, data insights, and machine learning in the context of real-time decision making, and how we’re working to help all users – business and technical – get access to real-time insights.

Getting real about real-time data analysis

Let’s start by taking a look at real-time data analysis (also referred to as stream analytics) and the blend of factors that increasingly make it critical to business success.

First, data is increasingly real-time in nature. IDC predicts that by 2025, more than 25% of all data created will be real-time in nature. We predict the number of business decisions being made at Google Cloud based on real-time data will be even higher than that. What’s driving that growth? There are a number of factors that represent an overall trend towards digitization in not just business, but society in general. These factors include, but aren’t limited to, digital devices, IoT-enabled manufacturing and logistics, digital commerce, digital communications, and digital media consumption. Harnessing the real-time data created by these activities gives companies the opportunity to better analyze their market, competition, and importantly, customers.

Next, customers expect more than ever in terms of personalization; they expect to be a “segment of one” across recommendations, offers, experience, and more. Companies know this and compete with each other to deliver the best user and customer experience possible.
Google Cloud customers such as AB Tasty are processing billions of real-time events for millions of users each day to deliver just that for their clients: an experience that’s optimized for smaller and smaller segments of users.

“With our new data pipeline and warehouse, we are able to personalize access to large volumes of data that were not previously there. That means new insights and correlations and, therefore, better decisions and increased revenue for customers.” – Jean-Yves Simon, VP Product, AB Tasty

Finally, real-time analysis is most useful when there’s an opportunity to take quick actions based on the insights. The same digitization driving real-time data generation provides an opportunity to drive immediate action in an instant feedback loop. Whether the action involves on-the-spot recommendations for digital retail, rerouting delivery vehicles based on real-time traffic information, changing the difficulty of an online gaming session, digitally recalibrating a manufacturing process, stopping fraud before a transaction is completed, or countless other examples, today’s technology opens up the opportunity to drive a more responsive and efficient business.

Democratizing real-time data analysis

We think of democratization in this space in two different frames. One is the standard frame we’ve taken in this blog series of expanding the capabilities of various data practitioners: “how do we give more users the ability to generate real-time insights?” The other frame, specifically for stream analytics, is democratization at the company level. Let’s start with how we’re helping more businesses move to real-time, and then we’ll dive into how we’re helping across different users.

Democratizing stream analytics for all businesses

Historically, collecting, processing, and acting upon real-time data was particularly challenging.
The nature of real-time data is that its volume and velocity can vary wildly in many use cases, creating multiple layers of complexity for data engineers trying to keep the data flowing through their pipelines. The tradeoffs involved in running a real-time data pipeline led many engineers to implement a lambda architecture, in which they would have both a real-time copy of (sometimes partial) results as well as a “correct” copy of results that took a traditional batch route. In addition to presenting challenges in reconciling data at the end of these pipelines, this architecture multiplied the number of systems to manage, and typically increased the number of ecosystems these same engineers had to manage. Setting this up, and keeping it all working, took large teams of expert data engineers. It kept the bar for use cases high.

Google and Google Cloud knew there had to be a better way to analyze real-time data… so we built it! Dataflow, together with Pub/Sub, answers the challenges posed by traditional streaming systems by providing a completely serverless experience that handles the variation in event streams with ease. Pub/Sub and Dataflow scale to exactly what resources are needed for the job at hand, handling performance, scaling, availability, security, and more, all automatically. Dataflow ensures that data is reliably and consistently processed exactly once, so engineers can trust the results their systems produce. Dataflow jobs are written using the Apache Beam SDK, which provides programming language choice for Dataflow (in addition to portability). Finally, Dataflow also allows data engineers to easily switch back and forth between batch and streaming modes, meaning users can experiment between real-time results and cost-effective batch processing with no changes to the code.

“Google unifies streaming analytics and batch processing the way it should be. No compromises. That must be the goal when software architects create a unified streaming and batch solution that must scale elastically, perform complex operations, and have the resiliency of Rocky Balboa.” – The Forrester Wave™, Streaming Analytics, Q3 2019, by Mike Gualtieri, Forrester Research, Inc.

All together, Dataflow and Pub/Sub deliver an integrated, easy-to-operate experience that opens real-time analysis up to companies that don’t have large teams of expert data engineers. We’ve seen small teams of as few as six engineers processing billions of events per day. They can author their pipelines, and leave the rest to us.

Democratizing stream analytics for all personas

Having developed a streaming platform that made streaming available to data engineering teams of all sizes and skills, we set about making it easier for more people to access real-time analysis and drive better decisions as a result. Let’s dive into how we’ve expanded access to real-time analytics.

Business and data analysts

Providing access to real-time data for data analysts and business analysts starts with enabling data to be rapidly ingested into the data warehouse. BigQuery is designed to be “always fast, always fresh,” and it enables streaming inserts into the data warehouse at millions of events per second. This gives data warehouse users the ability to work on the very freshest data, making their analysis more timely and accurate.

In addition to the insights that data analysts typically drive out of the data warehouse, analysts can also apply machine learning capabilities delivered by BigQuery ML against real-time data being streamed in. If data analysts know there’s a source of data that they need to access but that isn’t currently in the warehouse, Dataflow SQL enables them to connect new streaming sources of data with a few simple lines of SQL.

The real-time capabilities we describe for data analysts have cascading effects for the business analysts who rely on dashboards sourced from the data warehouse.
BigQuery’s BI Engine enables sub-second query response and high concurrency for BI use cases, but including real-time data in the data warehouse gives business analysts (and those who rely on them) a fuller picture of what’s happening in the business right now. In addition to BI, Looker’s data-driven workflows and data application capabilities benefit from fast-updating data in BigQuery.

ETL developers

Data Fusion, Google Cloud’s code-free ETL tool, delivers real-time processing capabilities to ETL developers with the simplicity of flipping a switch. Data Fusion users can easily set their pipelines to process data in real-time and land it into any number of storage or database services at Google Cloud. Further, Data Fusion’s ability to call upon a number of predefined connectors, transformations, sinks, and more – including machine learning APIs – and to do so in real-time gives businesses an impressive level of flexibility without the need to write any code at all.

Wrapping up

Each blog in this series (catch up on Part 1 and Part 2 if you missed them) has shown how Google Cloud can democratize data and insights. It’s not enough to deliver data access, then simply hope for good things to happen within your business. We’ve observed a clear formula for successfully democratizing the generation of ideas and insights throughout your business:

Start by ensuring you can deliver broad access to data that’s relevant to your business. That means moving towards systems that have elastic storage and compute with the ability to automatically scale both. This will enable you to bring in new data sources and new data workers without the need for labor-intensive operations, increasing the agility of your business.

Ensure that users can generate insights from within the tools they know and are comfortable with. By delivering new capabilities to existing users within their tools, you can help your business put data to work across the organization.
Further, this will keep your workforce excited and engaged as they get to explore new areas of analysis like machine learning.

Once you’ve given your employees the ability to access data and the ability to drive insights from the data, give them the ability to analyze real-time data and automate the outcomes of that analysis. This will drive better customer experiences, and help your organization take faster advantage of opportunities in the market.

We hope you’ve enjoyed this series, and we hope you’ll consider working with us to help democratize data and insights within your business. A great way to get started is by starting a free trial or jumping into the BigQuery sandbox, but don’t hesitate to reach out if you want to have a conversation with us.

The Forrester Wave™, Streaming Analytics, Q3 2019

Source: Google Cloud Platform