The new Google Cloud region in Warsaw is open

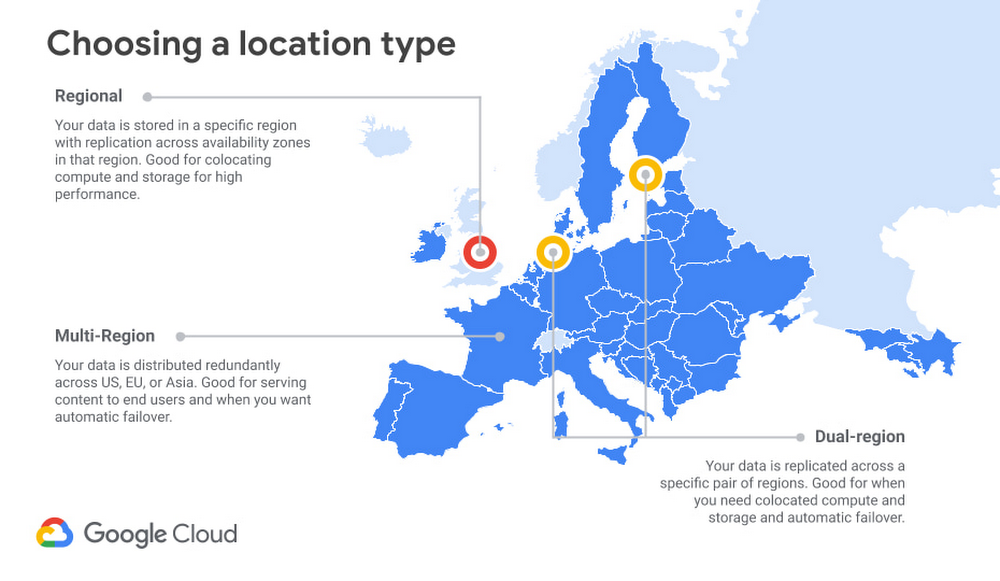

Since Google opened its first office in Poland over 15 years ago, we have been supporting the country’s growing digital economy, providing our partners and customers with cutting-edge technology, knowledge and global insights. With our announcement of a strategic partnership with Poland’s Domestic Cloud Provider in September 2019, we further committed to bringing the power of Google Cloud to support the rapid growth, entrepreneurial spirit and passion for innovation of Polish businesses.

Now, as Poland looks towards economic recovery, enterprises and public organizations of all sizes are taking advantage of new cloud technologies, and we are delivering on our commitment. To support customers in Poland and Central and Eastern Europe (CEE), we’re excited to announce that our new Google Cloud region in Warsaw is now open.

Designed to help Polish and CEE companies build highly available applications for their customers, the Warsaw region is our first Google Cloud region in Poland and the seventh to open in Europe.

What customers and partners are saying

Navigating this past year has been a challenge for companies as they grapple with changing customer demands and greater economic uncertainty. We’ve been fortunate to partner with and serve people, companies, and government institutions around the world to help them adapt. The Google Cloud region in Warsaw will help our customers in CEE adapt to new requirements, new opportunities and new ways of working.

“We want to build the bank of the future, and to do that, we need the most innovative technology. By choosing Google Cloud, we believe we will have access to the tools we need, now and in the years to come.” - Adam Marciniak, CIO, PKO Bank Polski

“Google Cloud really helped us to make better use of their products and save money. As a result, we were able to dramatically increase our CPU and memory usage while keeping costs flat. We delivered a stable service to more customers without having to pass the cost on to them.” - Lenka Gondová, Chief Information Security Officer, Exponea

“We have ambitious plans for the next few years, so we have decided to engage with Google Cloud as an experienced partner who provides us with both the know-how and the infrastructure and tools we need to build and maintain our ecommerce sites.” - Arkadiusz Ruciński, Omnichannel Director, LPP

“We double in size every year, and our previous infrastructure providers couldn’t keep up. It became really hard to maintain hundreds of dedicated servers. As our stack grew, we decided to deploy our service to Google Cloud as the most effective and efficient way to support our business model.” - Paweł Sobkowiak, CTO, Booksy

“We are seeing even more benefits from Anthos as our use evolves. The biggest advantage so far has been increased engagement among our staff. Teams are working passionately to achieve as much as possible because they can now focus on their core responsibilities rather than infrastructure management. That’s a testament to the power of Anthos and the value of the Accenture partnership.” - Monika Nowak-Toporowicz, Chief Technology and Innovation Officer, UPC Polska

A global network of regions

Warsaw joins the existing 24 Google Cloud regions connected via our high-performance network, helping customers better serve their users and customers throughout the globe. Learn more about Google Cloud locations.

With this new region, Google Cloud customers operating in Poland and the wider CEE region will benefit from low latency and high performance for their cloud-based workloads and data. Designed for high availability, the region opens with three availability zones to protect against service disruptions, and offers a portfolio of key products, including Compute Engine, App Engine, Google Kubernetes Engine, Cloud Bigtable, Cloud Spanner, and BigQuery.

Helping customers build their transformation clouds

Google Cloud is here to support Polish businesses, helping them get smarter with data, deploy faster, connect more easily with people and customers throughout the globe, and protect everything that matters to their businesses. The cloud region in Warsaw offers new technology and tools that can be a catalyst for this change.

Google Cloud will also support our customers with people and education programs. We have already trained thousands of IT specialists in Poland, helping both large enterprises and medium-sized companies get access to experts in cloud technologies. And since last year, as part of our local Grow with Google programme, we have offered all SMBs in Poland free support in starting their cloud journey.

Our ongoing commitment to Poland goes beyond our newest Cloud region. Google Cloud’s engineering center in Warsaw is a leading cloud technology hub in Europe and continues to grow each year, employing highly skilled specialists who work on our global cloud computing solutions. We are also expanding our office in Wrocław, hiring experts to help companies migrate to the cloud.

In Poland and across Central and Eastern Europe, we’re proud to support businesses in every industry, from retail and banking to manufacturing and the public sector, helping them recover, grow and thrive. And we are very excited to see how our partners and customers will use the power of the new Google Cloud region in Warsaw to accelerate their digital transformation.

Source: Google Cloud Platform