Enhance DDoS protection & get predictable pricing with new Cloud Armor service

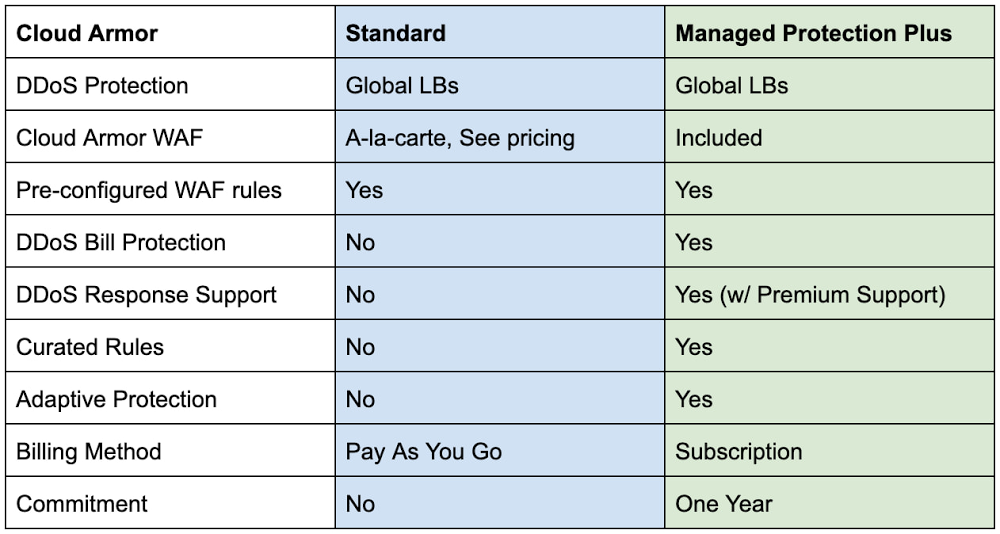

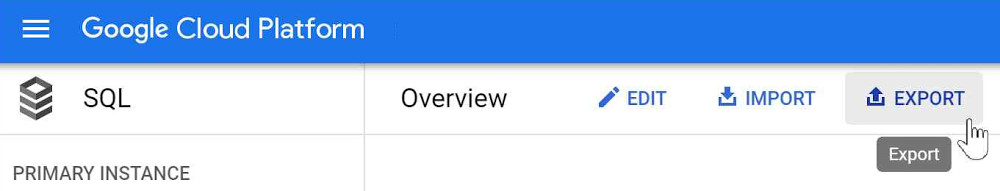

Securing websites and applications is a constant challenge for most organizations. To make it easier, we have introduced new capabilities within Cloud Armor over the past year that can help protect your applications. Today, we are announcing the general availability of Google Cloud Armor Managed Protection Plus.

Cloud Armor, our Distributed Denial of Service (DDoS) protection and Web-Application Firewall (WAF) service on Google Cloud, leverages the same infrastructure, network, and technology that has protected Google’s internet-facing properties from some of the largest attacks ever reported. These same tools protect customers’ infrastructure from DDoS attacks, which are increasing in both magnitude and complexity every year. Deployed at the very edge of our network, Cloud Armor absorbs malicious network- and protocol-based volumetric attacks, while mitigating the OWASP Top 10 risks and maintaining the availability of protected services.

Managed Protection Plus Overview

Cloud Armor Managed Protection Plus is a managed application protection service that bundles advanced DDoS protection capabilities, WAF capabilities, ML-based Adaptive Protection, efficient pricing, bill protection, and access to Google’s DDoS response support service into an enterprise-friendly subscription.

Cloud Armor L3/L4 DDoS Protection

All Cloud Armor customers (Standard & Managed Protection Plus) receive the same in-line, always-on DDoS protection. This protection is deeply integrated into the Global Load Balancers sitting at Google Cloud’s edge. These capabilities defend target workloads from network- and protocol-based volumetric attacks (L3/L4 DDoS).
This is the same protection and mitigation infrastructure that was used to protect against the 2.54 Tbps DDoS attack we shared last year. Malformed traffic targeting your globally load-balanced endpoints (HTTP/S LB, TCP Proxy, SSL Proxy) is automatically absorbed or dropped without impacting any well-formed requests heading to a protected service. Cloud Armor stops common attacks such as UDP-based amplification or reflection attacks, as well as TCP floods such as SYN floods.

Cloud Armor Web-Application Firewall (WAF)

Cloud Armor WAF lets users protect their internet-facing applications from common attack types and enforce IP, Geo, and layer 7 filtering policies at the edge of Google’s network. Users can easily deploy pre-configured WAF rules to mitigate the OWASP Top 10 web vulnerability risks and can use the extensive custom rules language to configure security policies.

Managed Protection Plus Capabilities

Adaptive Protection

Cloud Armor Adaptive Protection (currently in Preview) is a machine learning-powered service that detects layer 7 attacks and protects your applications and services from them. Adaptive Protection automatically learns what normal traffic patterns look like on a per-application/service basis. Because it is always monitoring, Adaptive Protection quickly identifies and analyzes suspicious traffic and provides customized, narrowly tailored rules that mitigate ongoing attacks in near real-time.

Curated Rules

Cloud Armor’s curated rules simplify the deployment of effective access controls in front of your applications. A range of named rules lets you filter traffic based on threat intelligence data that Google maintains and regularly updates on behalf of Managed Protection Plus subscribers. For example, today’s curated rules include Named IP Lists containing IP ranges of third-party proxies from Cloudflare, Imperva, or Fastly that users may deploy upstream of their Google Cloud endpoints.
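To make the idea of a named IP list concrete, here is a minimal Python sketch of the underlying check: allow a request only if its source address falls inside one of the ranges on a named list. This is an illustration only, not Cloud Armor's implementation or its rules language. The list names, the CIDR blocks (reserved documentation ranges, not any provider's real ranges), and the function names are all assumptions made for the example.

```python
import ipaddress

# Hypothetical named lists. The CIDR blocks below are reserved documentation
# ranges (RFC 5737), NOT the real ranges of any proxy provider.
NAMED_IP_LISTS = {
    "example-proxy-a": ["192.0.2.0/24"],
    "example-proxy-b": ["198.51.100.0/24", "203.0.113.0/25"],
}


def build_networks(named_lists):
    """Parse the CIDR strings of each named list into network objects once."""
    return {
        name: [ipaddress.ip_network(cidr) for cidr in cidrs]
        for name, cidrs in named_lists.items()
    }


def matching_list(source_ip, networks):
    """Return the name of the first list containing source_ip, else None."""
    addr = ipaddress.ip_address(source_ip)
    for name, nets in networks.items():
        if any(addr in net for net in nets):
            return name
    return None


def decide(source_ip, networks):
    """Allow traffic that arrives via a listed proxy range; deny the rest."""
    return "allow" if matching_list(source_ip, networks) else "deny"


NETWORKS = build_networks(NAMED_IP_LISTS)
```

For example, `decide("192.0.2.10", NETWORKS)` allows the request because the address sits inside the first placeholder range, while an address outside every listed range is denied. In the managed service, the value of a curated list is that Google keeps the ranges current, so a policy like this does not have to be maintained by hand.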
Future Capabilities

Managed Protection Plus will continue to expand the breadth and depth of protection over time. This will include additional protection capabilities for subscribers, visibility into DDoS attacks and ongoing mitigations, as well as access to Google’s threat intelligence.

Managed Protection Plus Services

DDoS Response Support

Customers that come under attack can engage support to get rapid help from Google’s DDoS response team. Our team can help assess, advise, and assist in mitigating the attack. DDoS response support is available 24/7 and is staffed by a global team of DDoS and networking experts who protect Google’s own services as well as those of other Google Cloud customers. Response team members have a wide range of tactics and tools at their fingertips, including custom mitigations deployed across Google Cloud’s networking infrastructure.

DDoS Bill Protection

Bill protection offers peace of mind and predictability by alleviating much of the financial impact of a DDoS attack. Subscribers that see their Google Cloud networking bill spike as a result of a DDoS attack will be able to open a claim to receive a credit in the amount of the bill spike. Not only does this service ensure that costs remain predictable in the face of DDoS attacks, it also decreases the incentive for attackers to target their victim’s infrastructure bill in the hopes of making it too expensive to operate.

Managed Protection Plus brings to bear the full scale of Google’s global network, machine learning capabilities, and unique experience and expertise. Subscribers can operate internet-facing services and workloads safely, and respond quickly and effectively to targeted or distributed attacks. Subscribe today by navigating to the Managed Protection tab in the console and clicking the subscribe button.

Source: Google Cloud Platform