First steps: Run your first secure, production-ready image

Container base images form the foundation of your application security. When those foundations contain vulnerabilities, every service built on top inherits the same risk.

Docker Hardened Images addresses this at the source. These are continuously-maintained, minimal base images designed for security: stripped of unnecessary packages, patched proactively, and built with supply chain attestation. Instead of maintaining your own hardened bases or accepting whatever vulnerabilities ship with official images, you get production-ready foundations with near-zero CVEs and compliance metadata baked in.

What to Expect from Your 30-days Trial?

You’ve got 30 days to evaluate whether Docker Hardened Images fits your environment. That’s enough time to answer the crucial question: Would this reduce our security debt without adding operational burden?

It’s important to note that while DHI provides production‑grade images, this trial isn’t about rushing into production. Its primary purpose is educational: to let you experience the benefits of a hardened base image for supply‑chain security by testing it with the actual services in your stack and measuring the results.

By the end of the trial, you should have concrete results:

CVE counts before and after,

engineering effort required per image migration, and

whether your team would actually use this.

Testing with real projects always outshines promises.

The DHI quickstart guide walks through the actions. This post covers what the docs don’t: the confusion points you may hit, what metrics actually matter, and how to evaluate results easily.

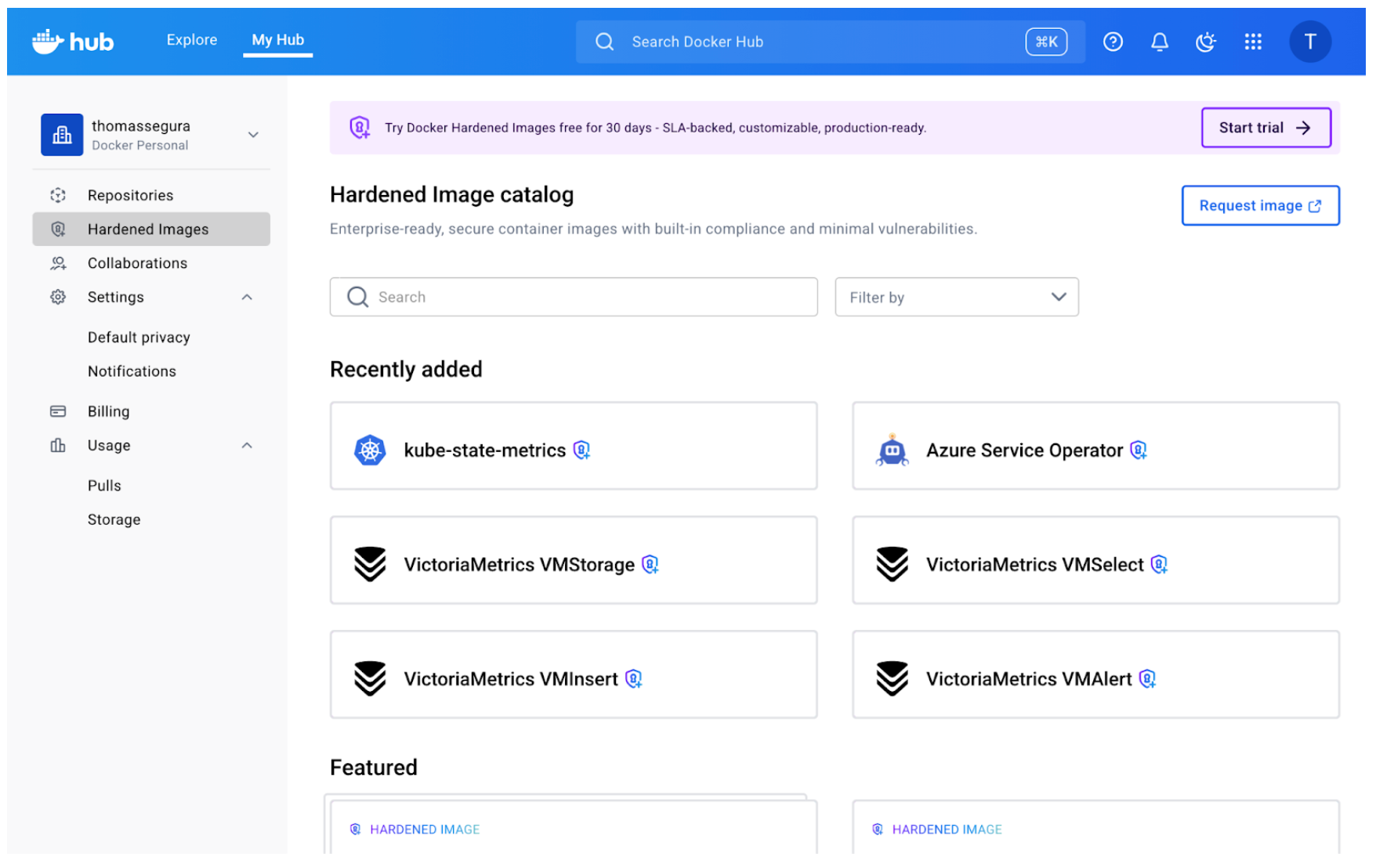

Step 1: Understanding the DHI Catalog

To get started with your Free trial, you must be an organization owner or editor. This means you will get your own Repository where you can mirror images, but we’ll get back to this later.

If you are familiar with Docker Hub, the DHI catalog should already look familiar:

The most obvious difference are the little lock icons indicating a Hardened Image. But what exactly does it mean?The core concept behind hardened images is that they present a minimal attack surface, which in practical terms means that only the strict minimum is included (as opposed to “battery-included” distributions like Ubuntu or Debian). Think of it like this: The hardened images maintain compatibility with the distro’s core characteristics (libc, filesystem hierarchy, package names) while removing the convenience layers that increase attack surface (package managers, extra utilities, debugging tools).So the “OS” designation you can see below every DHI means this image is built on top of those distributions (uses the same base operating system), but with security hardening and package minimization applied.

Sometimes, you need these convenient Linux utilities, for development or testing purposes. This is where variants come into play.

The catalog shows multiple variants for each base image: standard versions, (dev) versions, (fips) versions. The variant choice matters for security posture. If you can run your application without a package manager in the final image (using multi-stage builds, for example), always choose the standard variant. Fewer tools in the container means fewer potential vulnerabilities.Here’s what they mean: Standard variants (e.g., node-base:24-debian13):

Minimal runtime images

No package managers (apk, apt, yum removed)

Production-ready

Smallest attack surface

Fips variants (e.g., node-base:24-debian13-fips):FIPS variants come in both runtime and build-time variants. These variants use cryptographic modules that have been validated under FIPS 140, a U.S. government standard for secure cryptographic operations. They are required for highly-regulated environments

Dev variants (e.g., node-base:24-debian13-dev):

Include package managers for installing additional dependencies

Useful during development or when you need to add packages at build time

Larger attack surface (but still hardened)

Not recommended for production

The catalog includes dozens of base images: language runtimes (Python, Node, Go), distros (Alpine, Ubuntu, Debian), specialized tools (nginx, Redis). Instead of trying to evaluate everything from the start, start narrow by picking one image (that you use frequently (Alpine, Python, Node are common starting points) for the first test.What “Entitlements” and “Mirroring” Actually MeanYou can’t just ‘docker pull’ directly from Docker’s DHI catalog. Instead, you mirror images to your organization’s namespace first. Here’s the workflow:

Your trial grants your organization access to a certain number of DHIs through mirroring: these are called entitlements.

As an organization owner, you first create a copy of the DHI image in your namespace (e.g., yourorg/dhi-node), which means you are mirroring the image and will automatically receive new updates in your repository.

Your team pulls from your org’s namespace, not Docker’s.

Mirroring takes a few minutes and copies all available tags. Once complete, the image appears in your organization’s repositories like any other image.Why this model? Two reasons:

Access control: Your org admins control which hardened images your team can use

Availability: Mirrored images remain available even if your subscription changes

The first time you encounter “mirror this image to your repository,” it feels like unnecessary friction. But once you realize it’s a one-time setup per base image (not per tag), it makes sense. You mirror node-base once and get access to all current and future Node versionsNow that you’ve mirrored a hardened image, it’s time to test it with an actual project. The goal is to discover friction points early, when stakes are low.

Step 2: Your First Real Migration Test

Choose a project that is:

Simple enough to debug quickly if something breaks (fewer moving parts)

Real enough to represent actual workloads

Representative of your stack

Drop-In Replacement

Open your Dockerfile and locate the FROM instruction. The migration is straightforward:

# Before

FROM node:22-bookworm-slim

# After

FROM <your-org-namespace>/dhi-node:22-debian13-fips

Replace your organization’s namespace and choose the appropriate tag. If you were using a generic tag like node:22, switch to a specific version tag from the hardened catalog (like 22-debian13-fips). Pinning to specific versions is a best practice anyway – hardened images just make it more explicit.

For other language runtimes, the pattern is similar:

# Python example

FROM python:3.12-slim

# becomes

FROM <your-org-namespace>/dhi-python-base:3.12-bookworm

# Node example

FROM node:20-alpine

# becomes

FROM <your-org-namespace>/dhi-node-base:20.18-alpine3.20

Build the image with your new base:

docker build . -t my-service-hardened

Watch the build output: if your Dockerfile assumes certain utilities exist (like wget, curl, or package managers), the build may fail. This is expected. Hardened bases strip unnecessary tools to reduce attack surface. Here are some common build failures and fixes:

Missing package manager (apt, yum):

If you’re installing packages in your Dockerfile, you’ll need to use the (dev) variant, and probably switch to a multi-stage build (install dependencies in a builder stage using a dev variant, then copy artifacts to the minimal runtime stage use a fips hardened base image variant)

Missing utilities (wget, curl, bash):

Network tools are removed unless you’re using a debug variant

Solution: same as above, install what you need explicitly in a builder stage, or verify you actually need those tools at runtime

Different default user:

Some hardened images run as non-root by default

If your application expects to write to certain directories, you may need to adjust permissions or use USER directives appropriately

For my Node.js test, the build succeeded without changes. The hardened Node base contained everything the runtime needed – npm dependencies installed normally, and the packages removed were system utilities my application never touched.

Verify It Runs

Build success doesn’t mean runtime success. Start the container and verify it behaves correctly:

docker run –rm -p 3000:3000 my-service-hardened

Test the service:

Does it start without errors?

Do API endpoints respond correctly?

Are logs written as expected?

Can it connect to databases or external services?

Step 3: Comparing What Changed

Before moving to measurement, build the original version alongside the hardened one:

# Switch to your main branch

git checkout main

# Build original version

docker build . -t my-service-original

# Switch back to your test branch with hardened base

git checkout dhi-test

# Build hardened version

docker build . -t my-service-hardened

Now you have two images to compare: one with the official base, one with the hardened base. Now comes the evaluation: what actually improved, and by how much?

Docker Scout

Docker Scout compares images and reports on vulnerabilities, package differences, and size changes. If you haven’t enrolled your organization with Scout yet, you’ll need to do that first (it’s free for the comparison features we’re using).

Run the comparison (here we are comparing Node base images) :

docker scout compare –to <your-org-namespace>/dhi-node:24.11-debian13-fips node:24-bookworm-slim

Scout outputs a detailed breakdown. Here’s what we found when comparing the official Node.js image to the hardened version.

1. Vulnerability Reduction

The Scout output shows CVE counts by severity:

Official Node Hardened DHI

24-bookworm-slim 24.11-debian13-fips

Critical 0 0

High 0 0

Medium 1 0 ← eliminated

Low 24 0 ← eliminated

Total 25 0

The hardened image achieved complete vulnerability elimination. While the official image already had zero Critical/High CVEs (good baseline), it contained 1 Medium and 24 Low severity issues – all eliminated in the hardened version.Medium and Low severity vulnerabilities matter for compliance frameworks. If you’re pursuing SOC2, ISO 27001, or similar certifications (especially in regulated industries with strict security standards), demonstrating zero CVEs across all severity levels significantly simplifies audits.

2. Package Reduction

Scout shows a dramatic difference in package count:

Official Node Hardened DHI

Total packages 321 32

Reduction — 289 packages (90%)

The hardened image removed 289 packages including:

apt (package manager)

gcc-12 (entire compiler toolchain)

perl (scripting language)

bash (replaced with minimal shell)

dpkg-dev (Debian package tools)

gnupg2, gzip, bzip2 (compression and crypto utilities)

dozens of libraries and system utilities

These are tools your Node.js application never uses at runtime. Removing them drastically reduces attack surface: 90% fewer packages means 90% fewer potential targets for exploitation.This is important because even if packages have no CVEs today, they represent future risk. Every utility, library, or tool in your image could become a vulnerability tomorrow. The hardened base eliminates that entire category of risk.

3. Size Difference

Scout reports image sizes:

Official Node Hardened DHI

Image size 82 MB 48 MB

Reduction — 34 MB (41.5%)

The hardened image is 41.5% smaller – that’s 34 MB saved per image. For a single service, this might seem minor. But multiply across dozens or hundreds of microservices, and the benefits start to become obvious: faster pulls, lower storage costs, and reduced network transfer.

4. Extracting and Reading the SBOM

One of the most valuable compliance features is the embedded SBOM (Software Bill of Materials). Unlike many images where you’d need to generate the SBOM yourself, hardened images include it automatically.

Extract the SBOM to see every package in the image:

docker scout sbom <your-org-namespace>/dhi-node:24.11-debian13-fips –format list

This outputs a complete package inventory:

Name Version Type

base-files 13.8+deb13u1 deb

ca-certificates 20250419 deb

glibc 2.41-12 deb

nodejs 24.11.0 dhi

openssl 3.5.4 dhi

openssl-provider-fips 3.1.2 dhi

…

The Type column shows where packages came from:

deb: Debian system packages

dhi: Docker Hardened Images custom packages (like FIPS-certified OpenSSL)

docker: Docker-managed runtime components

The SBOM includes name, version, license, and package URL (purl) for each component – everything needed for vulnerability tracking and compliance reporting.You can can easily the SBOM in SPDX or CycloneDX format for ingestion by a vulnerability tracking tools:

# SPDX format (widely supported)

docker scout sbom <your-org>/dhi-node:24.11-debian13-fips

–format spdx

–output node-sbom.json

# CycloneDX format (OWASP standard)

docker scout sbom <your-org>/dhi-node:24.11-debian13-fips

–format cyclonedx

–output node-sbom-cyclonedx.json

Beyond the SBOM, hardened images include 17 different attestations covering SLSA provenance, FIPS compliance, STIG scans, vulnerability scans, and more. We’ll explore how to verify and use these attestations in Part 2 of this blog series.

Trust, But Verify

You’ve now: Eliminated 100% of vulnerabilities (25 CVEs → 0) Reduced attack surface by 90% (321 packages → 32) Shrunk image size by 41.5% (82 MB → 48 MB) Extracted the SBOM for compliance tracking

The results look good on paper, but verification builds confidence for production. But how do you verify these security claims independently? In Part 2, we’ll explore:

Cryptographic signature verification on all attestations

Build provenance traced to public GitHub source repositories

Deep-dive into FIPS, STIG, and CIS compliance evidence

SBOM-driven vulnerability analysis with exploitability context

View related documentation:

Docker Hardened Images: Get Started

Docker Hardened Images catalog

Docker Scout Quickstart

Quelle: https://blog.docker.com/feed/