Ten years ago, Microsoft and Red Hat began a partnership grounded in open source and enterprise cloud innovation. This year, we celebrate a decade of collaboration. Our journey together has helped customers accelerate hybrid cloud transformation, empower developers to innovate, and strengthen the open source community to drive modern application innovation.

The partnership that redefined enterprise cloud

In 2015, running mission-critical Linux workloads on Microsoft Azure was considered bold and visionary. Ten years later, our partnership with Red Hat has helped thousands of organizations worldwide accelerate digital transformation, set new benchmarks in open innovation, and advance the cloud-native movement for enterprises everywhere.

Together, we introduced Red Hat Enterprise Linux (RHEL) on Azure, setting a new precedent for innovation in the cloud. The collaboration deepened with additional Red Hat offerings, including Azure Red Hat OpenShift (ARO), a fully managed, jointly engineered, and jointly supported application platform that combines cloud scale with open source flexibility.

Red Hat and Microsoft’s global footprint and expanding customer base underline how an open approach and a commitment to solving customer challenges drive adoption and innovation at scale.

Accomplishments and impact

Azure Red Hat OpenShift and Red Hat’s automation platforms are powering digital transformation for global leaders across industries:

Leaders like Teranet have saved CA$5.6 million in capital expenditures and increased customer confidence by migrating mission-critical systems and OpenShift containers to Azure, unlocking unmatched scalability and automation.

For Bradesco, Azure Red Hat OpenShift is the secure, scalable backbone of its future-ready AI platform—unifying governance, powering more than 200 enterprise AI initiatives, and accelerating transformation across every business unit. By integrating Azure OpenAI and Power Platform, Bradesco delivers scalable, compliant innovation in banking services.

Western Sydney University improved reliability and accelerated digital research for thousands of students and faculty with the security and flexibility of Red Hat Enterprise Linux on Azure.

Symend launched new regions in weeks and powered personalized customer engagement by adopting Azure Red Hat OpenShift and Microsoft Azure AI, driving agility at enterprise scale.

Microsoft itself leverages Red Hat’s Ansible Automation Platform to streamline thousands of endpoints and modernize global network operations for business-critical infrastructure.

Together, Microsoft and Red Hat have advanced the industry with major accomplishments:

Deep integration for real-world flexibility: Red Hat solutions—like Azure Red Hat OpenShift, Red Hat Enterprise Linux, and Red Hat Ansible Automation Platform—are available across Azure, including in the Azure Marketplace, Azure Government, and expanding regions. Customers benefit from streamlined migrations, enhanced security features, and integrated support that simplifies modernization.

Modernization and operational agility: OpenShift Virtualization and Confidential Containers on Azure Red Hat OpenShift enable customers to migrate and modernize legacy applications, run confidential workloads, and automate operations. These capabilities deliver scalability and secure management across hybrid environments.

Accelerating open source innovation: Together, the companies have contributed to Kubernetes, containers, cloud monitoring, and secure computing standards, advancing open hybrid architectures for everyone.

Expanding developer and IT choice: By making RHEL available for Windows Subsystem for Linux and supporting hybrid container and virtual machine (VM) environments, Microsoft and Red Hat have given developers flexible, secure, and consistent tools for building anywhere.

Enabling transformative AI adoption at scale: By using Azure Red Hat OpenShift as a secure, governable foundation for managing multicloud OpenShift clusters, Bradesco streamlined operations across on-premises and cloud environments. This foundation, combined with Microsoft Foundry and Azure OpenAI Service, empowers Bradesco to deliver AI-powered banking solutions that scale securely and responsibly across millions of customers and business units. Symend has also adopted Azure Red Hat OpenShift and Azure AI to power personalized customer engagement.

Flexible pricing: Azure Hybrid Benefit for RHEL is a key cost optimization feature that lets organizations make the most of existing Red Hat subscriptions when running workloads on Azure. With this benefit, customers can reduce licensing costs and improve ROI while maintaining enterprise-grade support and security, so Azure delivers both technical flexibility and financial efficiency for hybrid environments.

Additionally, customers can optimize costs with pay-as-you-go pricing, draw down Microsoft Azure Consumption Commitment (MACC), and receive a single bill for both OpenShift and Azure consumption with Azure Red Hat OpenShift.

Ten years of innovation: Microsoft and Red Hat partnership highlights

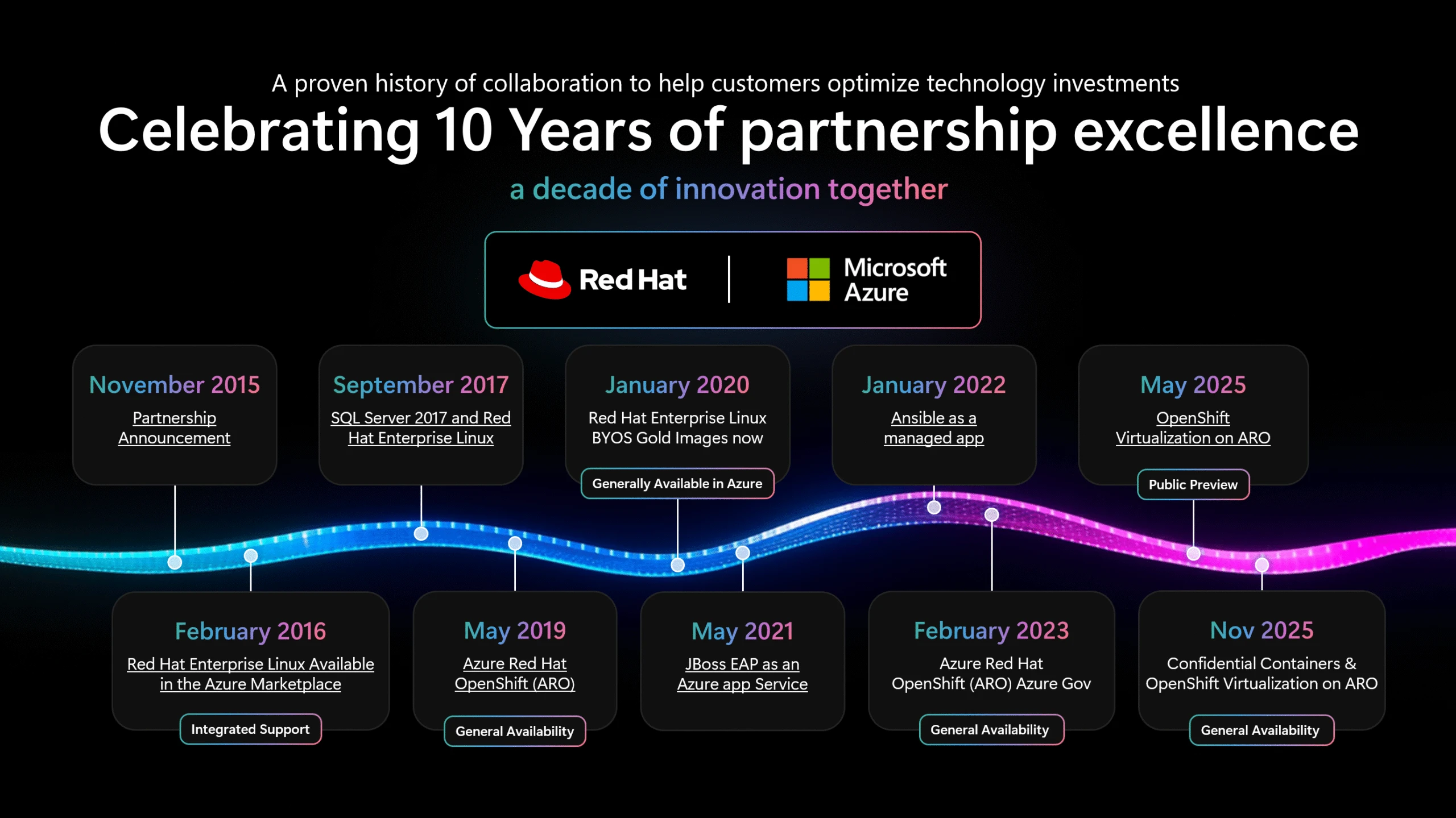

The partnership’s journey is marked by major shared milestones, summarized in the timeline graphic above:

November 2015: Partnership announcement launched a decade of innovation.

February 2016: Red Hat Enterprise Linux available in the Azure Marketplace with integrated support.

May 2019: Azure Red Hat OpenShift reached general availability (GA).

January 2020: Red Hat Enterprise Linux BYOS Gold images available in Azure.

May 2021: JBoss EAP offered on Azure App Service.

January 2022: Ansible released as a managed app for automation.

February 2023: Azure Red Hat OpenShift for Azure Government reached GA.

May 2025: OpenShift Virtualization on Azure Red Hat OpenShift entered public preview, followed by GA at Ignite 2025.

See the timeline graphic above for more details about key moments and innovations.

Ignite 2025: GA of OpenShift Virtualization and more on Azure Red Hat OpenShift

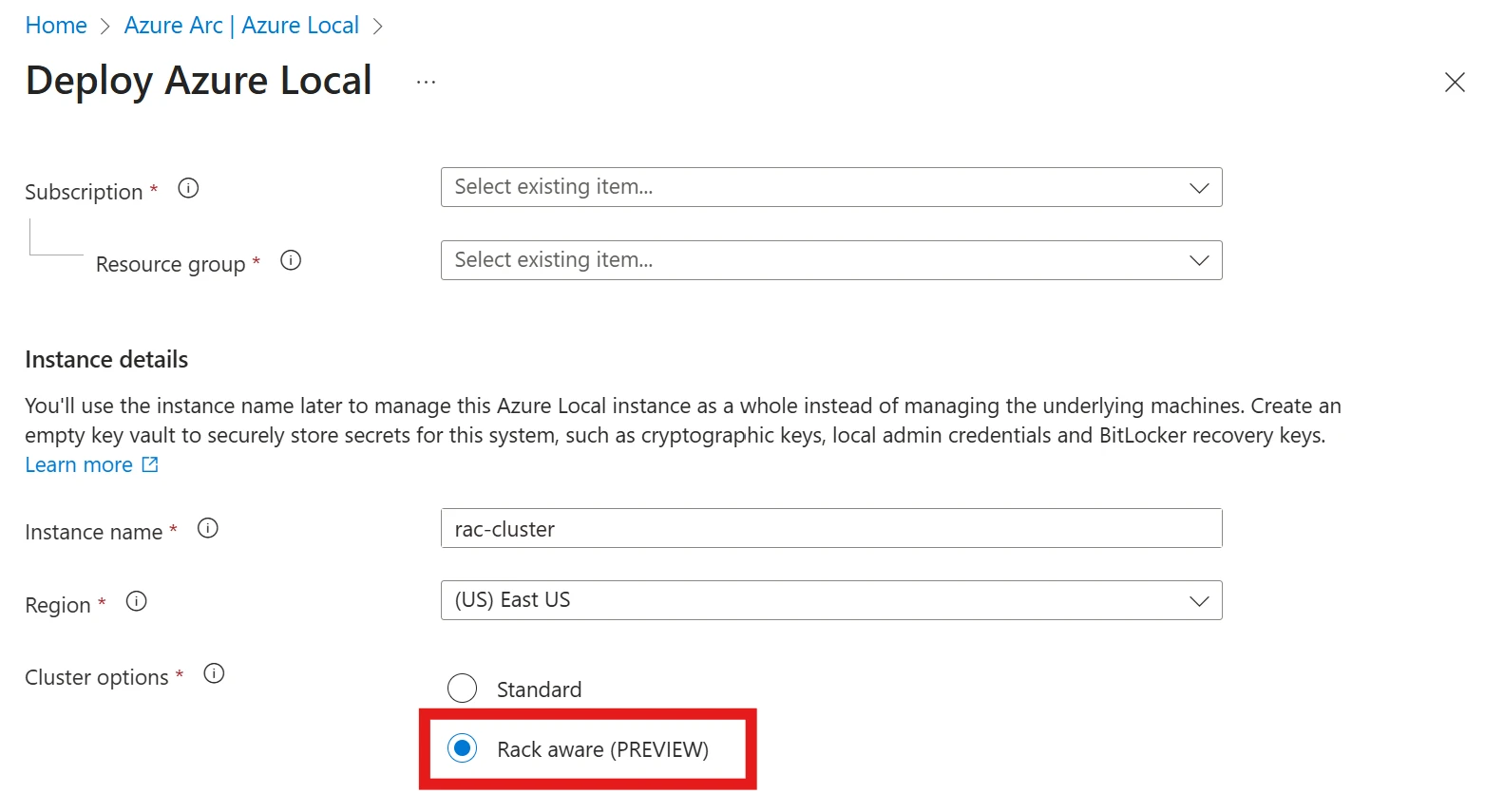

A defining moment of our tenth anniversary was the GA of OpenShift Virtualization on Azure Red Hat OpenShift, announced at Microsoft Ignite 2025. Organizations can now run VMs alongside containers on a single, secure platform, seamlessly bridging traditional virtualization with cloud-native innovation. Enterprises can modernize their VM workloads into Kubernetes-based environments, leveraging Azure’s performance and security with familiar OpenShift tools.

In addition, Microsoft Ignite 2025 marked the GA of confidential containers on Azure Red Hat OpenShift, delivering enhanced hardware-enforced security and isolation for container workloads. The event also showcased the GA of Red Hat Enterprise Linux (RHEL) for HPC on Azure, a secure, high-performance platform tailored for scientific and parallel computing workloads.

Together, these announcements underscore our ongoing commitment to hybrid innovation, security, and helping customers deploy a wide spectrum of enterprise workloads with agility and confidence.

Open at the core: What’s next for open source and enterprise cloud collaboration

Ten years of partnership have proven openness is more than a technological strategy—it is a culture of progress, trust, and shared innovation. Microsoft and Red Hat remain committed to pioneering the future of hybrid cloud and AI-powered applications, always keeping customer choice and reliability at the center.

We’re proud to partner with Red Hat not just in supporting our customers, but also in our shared interest in projects like the Linux kernel, Kubernetes, and most recently llm-d. Together, we are committed to continuing our contributions to the health and success of open source technologies and communities.

To our customers, partners, and open source communities: thank you for partnering with us on this journey. Together, we will continue to build the future of enterprise technology—openly, boldly, and collaboratively.

—Brendan Burns, Corporate Vice President, Microsoft Cloud Native

Explore OpenShift Virtualization on Azure

Explore more stories on hybrid cloud and open innovation

Unlock what is next: Microsoft at Red Hat Summit 2025

Red Hat Powers Modern Virtualization on Microsoft Azure

Red Hat Success Stories: Helping Microsoft with IT automation

The best of both worlds: How Microsoft and Red Hat are revolutionizing enterprise IT

Red Hat CEO and Microsoft EVP on The Evolution of Open Source

GA of OpenShift Virtualization on Azure Red Hat OpenShift at Microsoft Ignite 2025

For Bradesco, Azure Red Hat OpenShift is the secure, scalable backbone of its future-ready AI platform

Ortec Finance launched a cloud-native risk management platform, accelerating service delivery for over 600 financial institutions

Rossmann transformed its retail operations and scaled hybrid cloud deployments to millions of customers

City of Vienna modernized citizen services with AI, improving availability and efficiency for thousands of residents

Porsche Informatik accelerated digital transformation across automotive logistics, optimizing mission-critical IT service

The post A decade of open innovation: Celebrating 10 years of Microsoft and Red Hat partnership appeared first on Microsoft Azure Blog.

Source: Azure