Imagine a platform where every developer, whether building for a startup or a global enterprise, can unlock the full spectrum of AI: text, images, audio, and video. This OpenAI DevDay, Azure AI Foundry is making that vision real. With today’s launch of OpenAI GPT-image-1-mini, GPT-realtime-mini, and GPT-audio-mini, plus major safety upgrades to GPT-5, you now have a toolkit to create, experiment with, and scale multimodal solutions faster and more affordably than before. The models OpenAI announced today are rolling out now in Azure AI Foundry, with most customers able to get started on October 7, 2025.

Try Azure AI Foundry today

Today’s announcement joins the major innovations we announced last week: the launch of the Microsoft Agent Framework (now in preview), multi-agent workflows in Foundry Agent Service (in private preview), unified observability, general availability of the Voice Live API, and new Responsible AI capabilities. Microsoft Agent Framework (GitHub) is a commercial-grade, open-source SDK and runtime designed to simplify the orchestration of multi-agent systems. It unifies the business-ready foundations of Semantic Kernel with the multi-agent capabilities of AutoGen, giving developers the tools to build intelligent, scalable agentic solutions with speed and confidence.

By expanding Azure AI Foundry with the latest OpenAI models and advancing our agentic AI framework, we empower customers with unparalleled choice, flexibility, and business capabilities, enabling developers to build intelligent agent systems that address complex business needs and drive innovation at scale.

Meet the new models: Built for developers, ready for anything

GPT-image-1-mini: Compact power for visual creativity

GPT-image-1-mini is purpose-built for organizations and developers who need rapid, resource-efficient image generation at scale. Its compact architecture enables high-quality text-to-image and image-to-image creation while consuming fewer computational resources, allowing teams to deploy multimodal AI even in constrained settings. Because it is built on the GPT-image-1 model, it offers consistent behavior and easy adoption for organizations already using multimodal AI in Azure AI Foundry.

What makes it special?

Flexible image generation: Deploy high-quality text-to-image and image-to-image features without breaking your budget.

Lightning-fast inference: Generate images in real time, seamlessly integrated with existing Azure AI Foundry workflows.

Use cases:

Generating educational materials for classrooms and online learning.

Designing storybooks and visual narratives.

Producing game assets for rapid prototyping and development.

Accelerating UI design workflows for apps and websites.

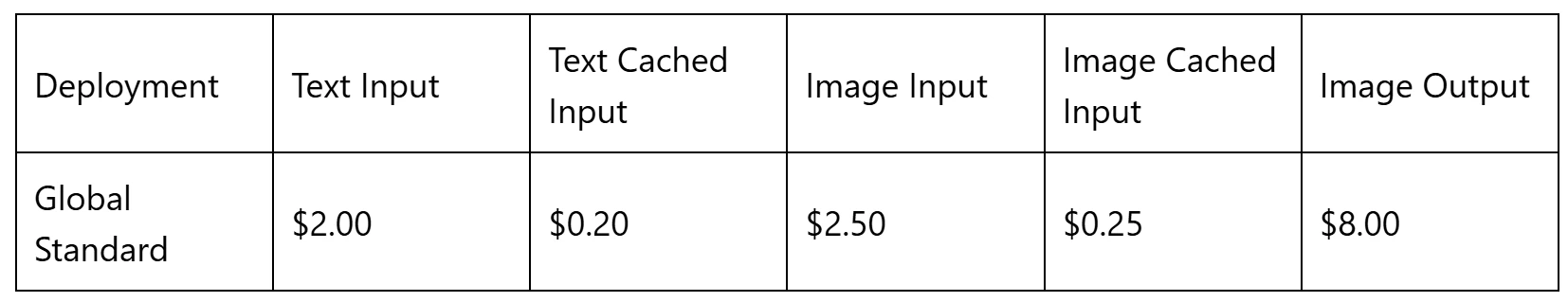

Table 1: GPT-image-1-mini pricing and deployment in Azure AI Foundry (per 1M tokens)*

GPT-realtime-mini and GPT-audio-mini: Efficient and affordable voice solutions

The two new mini models are designed for organizations and developers who need fast, cost-effective multimodal AI without sacrificing quality. These models are lightweight and highly optimized, delivering real-time voice interaction and audio generation with minimal resource requirements. Their streamlined architecture enables rapid inference and low latency, making them ideal for scenarios where speed and responsiveness are critical—such as voice-based chatbots, real-time translation, and dynamic audio content creation. By consuming fewer computational resources, these models help businesses and developer teams reduce operational costs while scaling multimodal capabilities across a wide range of applications.

What makes them special?

Real-time responsiveness: Power chatbots, assistants, and translation tools with low latency.

Resource-light: Run advanced voice and audio models on minimal infrastructure.

Affordable scaling: Lower your operational costs while expanding multimodal capabilities.

Use cases:

Voice-based chatbots for customer service and support.

Real-time translation for global communication.

Dynamic audio content creation for media and entertainment.

Interactive voice assistants for enterprise and consumer applications.

GPT-realtime-mini in Azure AI Foundry enables our customers to build voice solutions with lower latency, better instruction adherence, and cost efficiency, capabilities our customers value, driving shorter handle times, smoother dialogues, and faster time-to-value.

Andy O’Dower, VP of Product, Twilio

Table 2: GPT-realtime-mini and GPT-audio-mini pricing and deployment in Azure AI Foundry (per 1M tokens)*

GPT-5-chat-latest: Raising the bar for safety and wellbeing

The latest GPT-5-chat-latest update in Azure AI Foundry introduces a more robust set of safety guardrails, designed to better protect users during sensitive conversations. With enhanced detection and response capabilities, GPT-5-chat-latest is now equipped to more effectively recognize and manage dialogue that could lead to mental or emotional distress. These improvements reflect our ongoing commitment to responsible AI, ensuring that every interaction is not only intelligent and helpful, but also safe and supportive for users in challenging moments.

Table 3: GPT-5-chat-latest pricing and deployment in Azure AI Foundry (per 1M tokens)*

GPT-5-pro: The pinnacle of reasoning and analytics

GPT-5-pro represents the pinnacle of advanced reasoning and analytics within the Azure AI Foundry ecosystem, delivering research-grade intelligence. When deployed through Foundry, GPT-5-pro’s tournament-style architecture leverages multiple reasoning pathways to ensure maximum accuracy and reliability, making it ideal for complex analytics, code generation, and decision-making workflows. With Azure AI Foundry, organizations unlock the full potential of GPT-5-pro, driving smarter decisions and accelerating innovation across their most critical business processes, securely and reliably.

Table 4: GPT-5-pro pricing and deployment in Azure AI Foundry (per 1M tokens)*

The developer’s edge: Build, experiment, and ship—faster

With these new models, Azure AI Foundry isn’t just keeping up—it’s setting the pace. Developers can now move beyond text, tapping into image and audio generation, editing, and understanding. The result? Richer, smarter workflows that drive innovation in every industry—from education and gaming to enterprise automation.

Sneak peek: Sora 2—Next-level video and audio generation

And there’s more on the horizon. Sora 2 in Azure AI Foundry is coming soon, bringing advanced video and audio generation in a single API. Imagine physics-driven animation, synchronized dialogue, and cameo features—all available to developers through Azure AI Foundry. Stay tuned for the next wave of immersive, generative experiences.

Are you ready to create the next wave of immersive, multimodal experiences? Azure AI Foundry is your platform for every possibility.

*Pricing is accurate as of October 2025.

The post Unleash your creativity at scale: Azure AI Foundry’s multimodal revolution appeared first on Microsoft Azure Blog.

Source: Azure