AI Guide to the Galaxy: MCP Toolkit and Gateway, Explained

This is an abridged version of an interview from AI Guide to the Galaxy, in which host Oleg Šelajev spoke with Jim Clark, Principal Software Engineer at Docker, to unpack Docker’s MCP Toolkit and MCP Gateway.

TL;DR

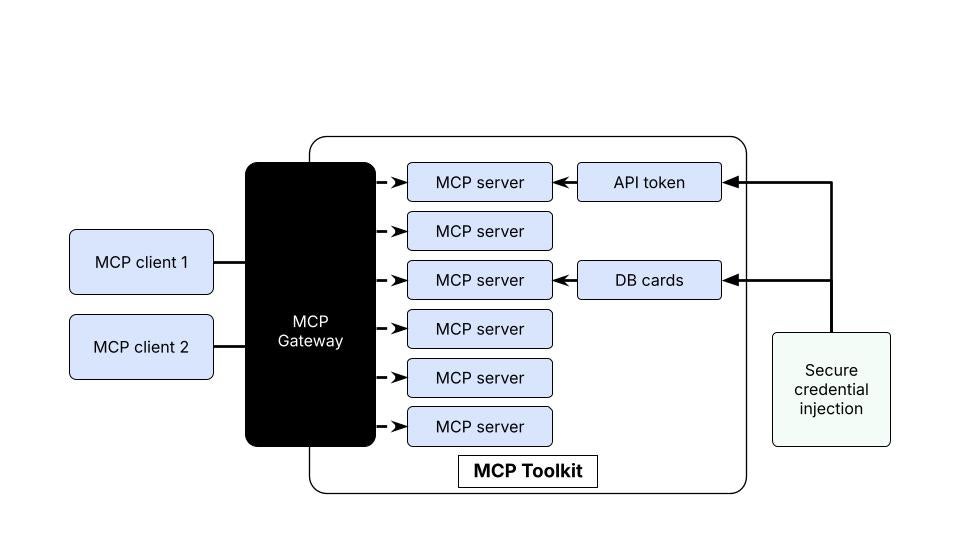

What they are: The MCP Toolkit helps you discover, run, and manage MCP servers; the MCP Gateway unifies and securely exposes them to your agent clients.

Why Docker: Everything runs as containers with supply-chain checks, secret isolation, and OAuth support.

How to use: Pick servers from the MCP Catalog, start the MCP Gateway, and your client (e.g., Claude) instantly sees the tools.

First things first: if you want the official overview and how-tos, start with the Docker MCP Catalog and Toolkit.

A quick origin story (why MCP and Docker?)

Oleg: You’ve been deep in agents for a while. Where did this all start?

Jim: When tool calling arrived, we noticed something simple but powerful: tools look a lot like containers. So we wrapped tools in Docker images, gave agents controlled “hands,” and everything clicked. That was even before the Model Context Protocol (MCP) spec landed. When Anthropic published MCP, it put a name to what we were already building.

What the MCP Toolkit actually solves

Oleg: So, what problem does the Toolkit solve on day one?

Jim: Installation and orchestration. The Toolkit gives you a catalog of MCP servers (think: YouTube transcript, Brave search, Atlassian, etc.) packaged as containers and ready to run. No cloning, no environment drift. Just grab the image, start it, and go. Because Docker builds these images and publishes them to Hub, you get consistency and governance on pull.

Oleg: And it presents a single, client-friendly surface?

Jim: Exactly. The Toolkit can act as an MCP server to clients, aggregating whatever servers you enable so clients can list tools in one place.
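To make the aggregation idea concrete, here is a minimal sketch of merging the tool lists of several enabled servers into one listing. It is a conceptual model only, not the Toolkit's actual implementation; the `Tool` class, the `aggregate_tools` function, and the server and tool names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    server: str  # which MCP server provides the tool
    name: str    # tool name as the client would see it


def aggregate_tools(enabled_servers: dict[str, list[str]]) -> list[Tool]:
    """Merge the tool lists of all enabled servers into one flat listing."""
    merged = []
    for server, tool_names in enabled_servers.items():
        for name in tool_names:
            merged.append(Tool(server=server, name=name))
    return merged


# Hypothetical server and tool names, for illustration only.
enabled = {
    "brave": ["brave_web_search"],
    "youtube_transcript": ["get_transcript"],
}
tools = aggregate_tools(enabled)
print([t.name for t in tools])
```

The client only ever talks to the single aggregated listing; which server backs each tool is a detail kept behind that surface.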

How the MCP Gateway fits in

Oleg: I see “Toolkit” inside Docker Desktop. Where does the MCP Gateway come in?

Jim: The Gateway is a core piece inside the Toolkit: a process (and open source project) that unifies which MCP servers are exposed to which clients. The CLI and UI manage both local containerized servers and trusted remote MCP servers. That way you can attach a client, run through OAuth where needed, and use those remote capabilities securely via one entry point.

Oleg: Can we see it from a client’s perspective?

Jim: Sure. Fire up the Gateway, connect Claude, run mcp list, and you’ll see the tools (e.g., Brave Web Search, Get Transcript) available to that session, backed by containers the Gateway spins up on demand.
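Under the hood, an MCP client discovers tools by sending a JSON-RPC 2.0 request with the method `tools/list`. The sketch below builds that request and reads tool names out of a response. The sample response is made up for illustration; the tool names, descriptions, and schemas a real Gateway returns will differ.

```python
import json


def tools_list_request(request_id: int) -> str:
    """Build a JSON-RPC 2.0 request for the MCP `tools/list` method."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": "tools/list"})


def tool_names(response_text: str) -> list[str]:
    """Extract the tool names from a `tools/list` response."""
    response = json.loads(response_text)
    return [tool["name"] for tool in response["result"]["tools"]]


# Made-up response for illustration only.
sample_response = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {"name": "brave_web_search", "description": "Search the web",
             "inputSchema": {"type": "object"}},
            {"name": "get_transcript", "description": "Fetch a video transcript",
             "inputSchema": {"type": "object"}},
        ]
    },
})

print(tools_list_request(1))
print(tool_names(sample_response))
```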

Security: provenance, secrets, and OAuth without drama

Oleg: What hardening happens before a server runs?

Jim: On pull/run, we verify provenance: we confirm that Docker built the image, check for an SBOM, and run supply-chain checks (via Docker Scout) so you’re not executing something that has been tampered with.

Oleg: And credentials?

Jim: Secrets you add (say, for Atlassian) are mounted only into the target container at runtime; nothing else can see them. For remote servers, the Gateway can handle OAuth flows, acquiring or proxying tokens into the right container or request path. It’s two flavors of secret management: local injection and remote OAuth, both controlled from Docker Desktop and the CLI.
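The scoping rule for local secrets can be modeled in a few lines. This is only a sketch of the rule "each container sees its own secrets and nothing else", not how the Toolkit stores or mounts secrets; the `env_for_container` function, the secret names, and the values are hypothetical.

```python
def env_for_container(server: str, secrets: dict[str, dict[str, str]]) -> dict[str, str]:
    """Return only the secrets registered for this server; all others stay invisible."""
    return dict(secrets.get(server, {}))


# Hypothetical secret names and placeholder values, for illustration only.
secrets = {
    "atlassian": {"ATLASSIAN_API_TOKEN": "example-token"},
    "brave": {"BRAVE_API_KEY": "example-key"},
}

atlassian_env = env_for_container("atlassian", secrets)
print(sorted(atlassian_env))
```

A server with no registered secrets gets an empty environment rather than inheriting anyone else's.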

Profiles, filtering, and “just the tools I want”

Oleg: If I have 30 servers, can I scope what a given client sees?

Jim: Yes. Choose the servers per Gateway run, then filter tools, prompts, and resources so the client only gets the subset you want. Treat it like “profiles” you can version alongside your code; compose files and config make it repeatable for teams. You can even run multiple gateways for different configurations (e.g., “chess tools” vs. “cloud ops tools”).
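One way to picture a profile is as a named pair of filters: which servers are enabled, and which of their tools are let through. The sketch below assumes that model; the `Profile` class, the `visible_tools` function, and the server and tool names are hypothetical and do not reflect the Gateway's actual configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    name: str
    servers: frozenset[str]
    tools: frozenset[str] = frozenset()  # empty means "all tools from the chosen servers"


def visible_tools(profile: Profile, catalog: dict[str, list[str]]) -> list[str]:
    """List the tools a client attached to this profile would see."""
    visible = []
    for server, names in catalog.items():
        if server not in profile.servers:
            continue  # server not enabled for this profile
        for name in names:
            if not profile.tools or name in profile.tools:
                visible.append(name)
    return visible


# Hypothetical catalog, for illustration only.
catalog = {
    "chess": ["best_move", "analyze_position"],
    "cloud": ["list_instances", "restart_service"],
}
chess_profile = Profile("chess tools", frozenset({"chess"}))
ops_profile = Profile("cloud ops tools", frozenset({"cloud"}), frozenset({"list_instances"}))

print(visible_tools(chess_profile, catalog))
print(visible_tools(ops_profile, catalog))
```

Because a profile is plain data, it can be checked into version control next to the code that depends on it, which is the repeatability Jim describes.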

From local dev to production (and back again)

Oleg: How do I move from tinkering to something durable?

Jim: Keep it Compose-first. The Gateway and servers are defined as services in your compose files, so your agent stack is reproducible. From there, push to cloud: partners like Google Cloud Run already support one-command deploys from Compose, with Azure integrations in progress. Start locally, then graduate to remote runs seamlessly.

Oleg: And choosing models?

Jim: Experiment locally, swap models as needed, and wire in the MCP tools that fit your agent’s job. The pattern is the same: pick models, pick tools, compose them, and ship.

Getting started with MCP Gateway (in minutes)

Oleg: Summarize the path for me.

Jim:

Pick servers from the catalog in Docker Desktop (or CLI).

Start the MCP Gateway and connect your client.

Add secrets or flow through OAuth as needed.

Filter tools into a profile.

Capture it in Compose and scale out.

Why the MCP Toolkit and Gateway improve team workflows

Fast onboarding: No glue code or conflicting environments; servers come containerized.

Security built-in: Supply-chain checks and scoped secret access reduce risk.

One workflow: Local debug, Compose config, cloud deploys. Same primitives, fewer rewrites.

Try it out

Spin up your first profile and point your favorite client at the Gateway. When you’re ready to expand your agent stack, explore tooling like Docker Desktop for local iteration and Docker Offload for on-demand cloud resources, then keep everything declarative with Compose.

Ready to build? Explore the Docker MCP Catalog and Toolkit to get started.

Learn More

Watch the rest of the AI Guide to the Galaxy series

Explore the MCP Catalog: Discover containerized, security-hardened MCP servers

Open Docker Desktop and get started with the MCP Toolkit (Requires version 4.48 or newer to launch the MCP Toolkit automatically)

Check out our latest guide on how to set up Claude Code with Docker’s MCP Toolkit

Source: https://blog.docker.com/feed/