Go (or Golang) is one of the most loved and wanted programming languages, according to Stack Overflow’s 2022 Developer Survey. Thanks to its smaller binary sizes vs. many other languages, developers often use Go for containerized application development.

Mohammad Quanit explored the connection between Docker and Go during his Community All-Hands session. Mohammad shared how to Dockerize a basic Go application while exploring each core component involved in the process.

Follow along as we dive into these containerization steps. We’ll explore using a Go application with an HTTP web server — plus key best practices, optimization tips, and ways to bolster security.

Go application components

Creating a full-fledged Go application requires you to create some Go-specific components. These are essential to many Go projects, and the containerization process relies equally heavily on them. Let’s take a closer look at those now.

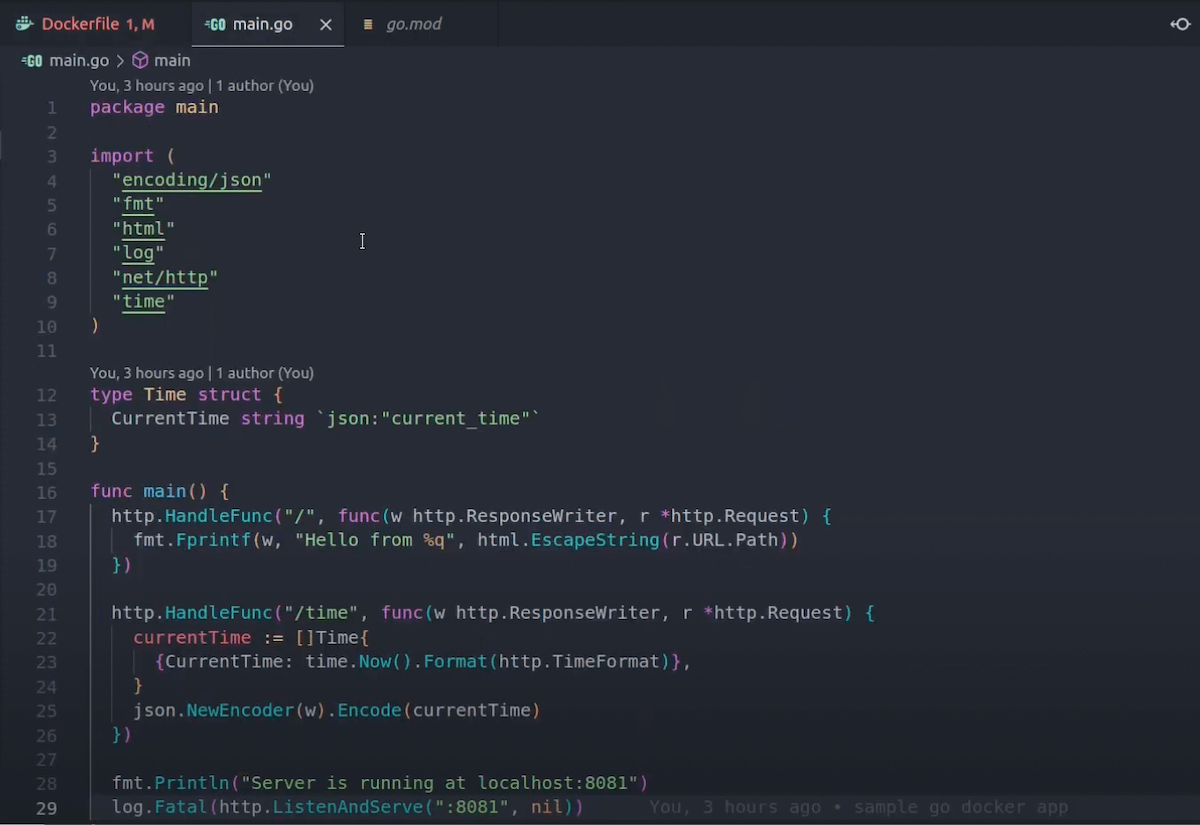

Using main.go and go.mod

Mohammad mainly highlights the main.go file since you can’t run an app without executable code. In Mohammad’s case, he created a simple web server with two unique routes: one that prints a message using Go’s formatted I/O, and one that returns the current time.

What’s nice about Mohammad’s example is that it isn’t too lengthy or complex. You can emulate this while creating your own web server or use it as a stepping stone for more customization.
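Since the original sample isn't reproduced in this text, here is a minimal sketch of what such a main.go might look like. It is not Mohammad's exact code: the function names (greeting, currentTime, newMux), the "Hello World" message, and the time format are assumptions chosen to match the two routes and the port 8081 URL described in this walkthrough.

```go
package main

import (
	"fmt"
	"log"
	"net/http"
	"time"
)

// greeting returns the message served on the root route.
// The exact text is an assumption for illustration.
func greeting() string {
	return "Hello World"
}

// currentTime formats the server's local time for the /time route.
// RFC1123 is an arbitrary, human-readable choice.
func currentTime() string {
	return time.Now().Format(time.RFC1123)
}

// newMux registers both routes on a fresh request multiplexer.
func newMux() *http.ServeMux {
	mux := http.NewServeMux()
	mux.HandleFunc("/", func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprintln(w, greeting())
	})
	mux.HandleFunc("/time", func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprintln(w, currentTime())
	})
	return mux
}

func main() {
	// Assumed port: 8081, matching the localhost URL used in this walkthrough.
	log.Fatal(http.ListenAndServe(":8081", newMux()))
}
```

Building the routes in a separate newMux function is a design choice rather than a requirement. It keeps main short and makes the handlers easy to exercise without starting a real server.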

Note: The file doesn’t have to be named main.go. You can name it anything you want, but it does need to belong to package main and define a func main() as the program’s entry point. This exists in our sample above.

We always recommend confirming that your code works as expected. Enter the command go run main.go to spin up your application. You can alternatively replace main.go with your file’s specific name. Then, open your browser and visit http://localhost:8081 to view your “Hello World” message or equivalent. Since we have two routes, navigating to http://localhost:8081/time displays the current time thanks to Mohammad’s second function.
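If you'd rather check the routes programmatically than in a browser, one option is to exercise the handlers in memory with Go's net/http/httptest package. This is a sketch of that alternative, not something covered in Mohammad's session. The newMux function is a hypothetical stand-in for the routes in your own main.go, and probe is an illustrative helper name.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
	"time"
)

// newMux is a hypothetical stand-in for the two routes described above.
func newMux() *http.ServeMux {
	mux := http.NewServeMux()
	mux.HandleFunc("/", func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprintln(w, "Hello World")
	})
	mux.HandleFunc("/time", func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprintln(w, time.Now().Format(time.RFC1123))
	})
	return mux
}

// probe sends an in-memory GET request to the handler and returns the
// status code and the trimmed response body. No network port is opened.
func probe(h http.Handler, path string) (int, string) {
	rec := httptest.NewRecorder()
	req := httptest.NewRequest(http.MethodGet, path, nil)
	h.ServeHTTP(rec, req)
	return rec.Code, strings.TrimSpace(rec.Body.String())
}

func main() {
	mux := newMux()
	// Print the status and body for each route so a failure is easy to spot.
	for _, path := range []string{"/", "/time"} {
		status, body := probe(mux, path)
		fmt.Printf("%s -> %d %s\n", path, status, body)
	}
}
```

Because the request never leaves the process, this check works the same way on your machine and inside a build container.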

Next, we have the go.mod file. It sits at the root of your module and declares the module path used for imports (shown above), along with your dependency requirements. Go modules also let you keep your project code in whichever directory you choose.

With these two pieces in place, you’re ready to create your Dockerfile!

Creating your Dockerfile

Building and deploying your Dockerized Go application means starting with a software image. While you can pull this directly from Docker Hub (using the CLI), beginning with a Dockerfile gives you more configuration flexibility.

You can create this file within your favorite editor, like VS Code. We recommend VS Code since it supports the official Docker extension. This extension supports debugging, autocompletion, and easy project file navigation.

Choosing a base image and including your application code is pretty straightforward. Since Mohammad is using Go, he kicked off his Dockerfile by specifying the golang Docker Official Image as a parent image. Docker will build your final container image from this.

You can choose whatever version you’d like, but a pinned version like golang:1.19.2-bullseye keeps your builds stable and reproducible. Newer image versions like this one are also safe from October 2022’s Text4Shell vulnerability.

You’ll also need to do the following within your Dockerfile:

- Include an app directory for your source code
- Copy everything from the root directory into your app directory
- Copy your Go files into your app directory and install dependencies
- Build your app with configuration
- Tell your Docker container to listen on a certain port at runtime
- Define an executable command that runs once your container starts

With these points in mind, here’s how Mohammad structured his basic Dockerfile:

# Specifies a parent image
FROM golang:1.19.2-bullseye

# Creates an app directory to hold your app's source code
WORKDIR /app

# Copies everything from your root directory into /app
COPY . .

# Installs Go dependencies
RUN go mod download

# Builds your app with optional configuration
RUN go build -o /godocker

# Tells Docker which network port your container listens on
EXPOSE 8081

# Specifies the executable command that runs when the container starts
CMD [ "/godocker" ]

From here, you can run a quick CLI command to build your image from this file:

docker build --rm -t [YOUR IMAGE NAME]:alpha .

This creates an image while removing any intermediate containers created with each image layer (or step) throughout the build process. You’re also tagging your image with a name for easier reference later on.

Confirm that Docker built your image successfully by running the docker image ls command.

If you’ve already pulled or built images in the past and kept them, they’ll also appear in your CLI output. In Mohammad’s output, his go-docker image is listed at the top since it’s the most recent.

Making changes for production workloads

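What if you want to account for code or dependency changes that’ll inevitably occur with a production Go application? You’ll need to tweak your original Dockerfile and add some instructions, according to Mohammad, so that changes are visible and the build process succeeds. Copying go.mod and downloading dependencies before copying your source code lets Docker reuse the cached dependency layer when only your code changes: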

FROM golang:1.19.2-bullseye

WORKDIR /app

# Effectively tracks changes within your go.mod file
COPY go.mod .
RUN go mod download

# Copies your source code into the app directory
COPY main.go .
RUN go build -o /godocker

EXPOSE 8081

CMD [ "/godocker" ]

After making those changes, you’ll want to run the same docker build and docker image ls commands. Now, it’s time to run your new image! Enter the following command to start a container from your image:

docker run -d -p 8080:8081 --name go-docker-app [YOUR IMAGE NAME]:alpha

The -p 8080:8081 flag publishes the container’s port 8081 on port 8080 of your host, so the app is reachable at http://localhost:8080. Confirm that this worked by entering the docker ps command, which lists your running containers. If you have Docker Desktop installed, you can also visit the Containers tab from the Docker Dashboard and locate your new container in the list. The same applies to your image builds, which appear in the Images tab.

Congratulations! By tracing Mohammad’s steps, you’ve successfully containerized a functioning Go application.

Best practices and optimizations

While our Go application gets the job done, Mohammad’s final image is pretty large at 913MB. The client (or end user) shouldn’t have to download such a hefty file.

Mohammad recommends using a multi-stage build to copy forward only the components you need from one build stage to the next. Although we start with a golang:version image as the builder, defining a second build stage and choosing a slim alternative like alpine helps reduce image size. You can watch his step-by-step approach to tackling this.
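As a rough sketch of the multi-stage approach (Mohammad's exact Dockerfile isn't reproduced here), the build might be split as follows. The stage name builder, the alpine:3.16 tag, and the CGO_ENABLED=0 setting are assumptions added for illustration. The port assumes the app listens on 8081, as in the localhost URL used earlier.

```dockerfile
# Stage 1: compile the binary with the full Go toolchain
FROM golang:1.19.2-bullseye AS builder
WORKDIR /app
COPY go.mod .
RUN go mod download
COPY main.go .
# Disabling cgo yields a statically linked binary, so it doesn't depend on
# the builder's glibc when it runs on Alpine (which uses musl)
RUN CGO_ENABLED=0 go build -o /godocker

# Stage 2: copy only the compiled binary into a small runtime image
FROM alpine:3.16
COPY --from=builder /godocker /godocker
EXPOSE 8081
CMD [ "/godocker" ]
```

Only the final stage ends up in the image you ship, so the Go toolchain and source code stay behind in the builder stage.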

This is beneficial and common across numerous use cases. However, you can take things a step further by using FROM scratch in your multi-stage builds. This empty image is the smallest we offer and accepts static binaries as executables, making it perfect for Go application development.

You can learn more about our scratch image on Docker Hub. Although it’s listed on Hub, you can’t pull scratch. You can only reference it in your Dockerfile with FROM scratch.
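A scratch-based variant could look something like the sketch below. As before, the builder stage name and the CGO_ENABLED=0 setting are assumptions rather than part of the original session, and the port assumes the app listens on 8081.

```dockerfile
# Stage 1: build a statically linked binary
FROM golang:1.19.2-bullseye AS builder
WORKDIR /app
COPY go.mod .
RUN go mod download
COPY main.go .
# scratch contains no C library, so the binary must not link against one
RUN CGO_ENABLED=0 go build -o /godocker

# Stage 2: start from the empty scratch image and add only the binary
FROM scratch
COPY --from=builder /godocker /godocker
EXPOSE 8081
# scratch has no shell, so the exec (JSON array) form of CMD is required
CMD [ "/godocker" ]
```

Keep in mind that scratch ships with nothing else, including CA certificates or debugging tools, so an app that makes outbound TLS requests would need those files copied in as well.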

Develop your Go application today

Mohammad Quanit outlined some user-friendly development workflows that can benefit both newer and experienced Go users. By following his steps and best practices, it’s possible to create cross-platform Go apps that are slim and performant. Docker and Go inherently mesh well together, and we also encourage you to explore what’s possible through containerization.

Want to learn more?

- Check out our Go language-specific guide.
- Read about the golang Docker Official Image.
- See Go in action alongside other technologies in our Awesome Compose repo.
- Dig deeper into Dockerfile fundamentals and best practices.
- Understand how to use Go-based server technologies like Caddy 2.

Source: https://blog.docker.com/feed/