In this post we demonstrate how to use the new NVIDIA GPU Operator to deploy GPU-accelerated workloads on an OpenShift cluster.

The new GPU Operator enables OpenShift to schedule workloads that require GPGPUs as easily as it schedules CPU or memory for traditional, non-accelerated workloads. Create a container that has a GPU workload inside it, request the GPU resource when creating the pod, and OpenShift takes care of the rest. This makes deploying GPU workloads to OpenShift clusters straightforward for users and administrators, because everything is managed at the cluster level rather than on the host machines. The GPU Operator for OpenShift helps simplify and accelerate compute-intensive ML/DL modeling tasks for data scientists, and helps run inferencing tasks across data centers, public clouds, and the edge. Typical workloads that benefit from GPU acceleration include image and speech recognition and visual search.

We assume that you have an OpenShift 4.x cluster deployed with some worker nodes that have GPU devices.

    $ oc get no
    NAME                           STATUS   ROLES    AGE     VERSION
    ip-10-0-130-177.ec2.internal   Ready    worker   33m     v1.16.2
    ip-10-0-132-41.ec2.internal    Ready    master   42m     v1.16.2
    ip-10-0-156-85.ec2.internal    Ready    worker   33m     v1.16.2
    ip-10-0-157-132.ec2.internal   Ready    master   42m     v1.16.2
    ip-10-0-170-127.ec2.internal   Ready    worker   4m15s   v1.16.2
    ip-10-0-174-93.ec2.internal    Ready    master   42m     v1.16.2

In order to expose what features and devices each node has to OpenShift we first need to deploy the Node Feature Discovery (NFD) Operator (see here for more detailed instructions).

Once the NFD Operator is deployed we can take a look at one of our nodes; here we see the difference between the node before and after. Among the new labels describing the node features, we see:

feature.node.kubernetes.io/pci-10de.present=true

This indicates that the node has at least one PCIe device with vendor ID 0x10de, which belongs to NVIDIA. The GPU Operator uses these NFD-created labels to determine where to deploy the driver containers for the GPU(s).
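As a quick check before moving on, the same label can be used as a selector to list the nodes that the GPU Operator will target. The sketch below wraps that query in a small shell function; the function and variable names are illustrative and are not part of either operator.

```shell
# Label that NFD sets on nodes with a PCI device from vendor 0x10de (NVIDIA).
NVIDIA_PCI_LABEL='feature.node.kubernetes.io/pci-10de.present=true'

# Print the names of all nodes carrying that label.
# list_gpu_nodes is a hypothetical helper name; it only wraps `oc get nodes`.
list_gpu_nodes() {
  oc get nodes -l "$NVIDIA_PCI_LABEL" -o name
}
```

Running `list_gpu_nodes` should print the GPU-equipped workers; an empty result suggests that NFD has not labelled the nodes yet.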

However, before we can deploy the GPU Operator we need to ensure that the appropriate RHEL entitlements have been created in the cluster (see here for more detailed instructions). Once the entitlements are in place, we can proceed with installing the GPU Operator.

The GPU Operator is currently installed via a Helm chart, so make sure that you have Helm v3 or later installed. Once Helm is available, we can begin the GPU Operator installation.
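If you are unsure which Helm client is on your PATH, a small guard like the following can confirm the major version before you start. This is only a sketch: the helper name is made up, and it assumes `helm version --short` prints a string of the form `v3.x.y+g<commit>` (or `Client: v2.x.y+g<commit>` for Helm 2).

```shell
# Succeed only if the helm client on PATH reports major version 3 or newer.
# helm_is_v3_or_newer is an illustrative helper name.
helm_is_v3_or_newer() {
  version="$(helm version --short 2>/dev/null)" || return 1
  # Strip an optional "Client: " prefix (Helm 2 output) and the leading "v".
  version="${version#Client: }"
  version="${version#v}"
  # Keep only the text before the first dot, i.e. the major version.
  major="${version%%.*}"
  case "$major" in
    ''|*[!0-9]*) return 1 ;;   # not a plain number: treat as failure
  esac
  [ "$major" -ge 3 ]
}
```

For example, `helm_is_v3_or_newer || echo "Helm 3 or later is required" >&2` prints a warning when the check fails.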

1. Add the NVIDIA Helm repo:

    $ helm repo add nvidia https://nvidia.github.io/gpu-operator
    "nvidia" has been added to your repositories

2. Update the Helm repo:

    $ helm repo update
    Hang tight while we grab the latest from your chart repositories...
    ...Successfully got an update from the "nvidia" chart repository
    Update Complete. ⎈ Happy Helming!⎈

3. Install the GPU Operator Helm chart:

    $ helm install --devel https://nvidia.github.io/gpu-operator/gpu-operator-1.0.0.tgz \
        --set platform.openshift=true,operator.defaultRuntime=crio,nfd.enabled=false \
        --wait --generate-name

4. Monitor deployment of GPU Operator:

$ oc get pods -n gpu-operator-resources -w

This command will watch the gpu-operator-resources namespace as the operator rolls out on the cluster. Once the installation is completed you should see something like this in the gpu-operator-resources namespace.

We can see that both the nvidia-driver-validation and the nvidia-device-plugin-validation pods have completed successfully and we have four daemonsets, each running the number of pods that match the node label feature.node.kubernetes.io/pci-10de.present=true. Now we can inspect our GPU node once again.

Here we can see the latest changes to our node, which now lists a new resource called nvidia.com/gpu under Capacity, Allocatable, and Allocated resources. Since our GPU node has only one GPU, the count shown is 1.
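The same number can be read without scanning the full node description. The following sketch queries the allocatable count directly; the helper name is hypothetical and the node name is passed in as an argument.

```shell
# Print how many nvidia.com/gpu devices a node advertises as allocatable.
# The dot inside the resource name must be escaped in a JSONPath expression.
# gpu_allocatable is an illustrative helper name; $1 is the node name.
gpu_allocatable() {
  oc get node "$1" -o jsonpath='{.status.allocatable.nvidia\.com/gpu}'
}
```

For the single-GPU node in this walkthrough, `gpu_allocatable <node-name>` would be expected to print 1.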

Now that we have the NFD Operator, cluster entitlements, and the GPU Operator deployed we can assign workloads that will use the GPU resources.
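As a minimal sketch of what such a workload looks like, the snippet below writes a pod manifest that requests a single GPU through the nvidia.com/gpu resource. The pod name, file name, and image are placeholders rather than anything used in this post, and the example assumes that `nvidia-smi` is available inside the container.

```shell
# Write a minimal pod manifest that asks the scheduler for one GPU.
# The pod name and image below are placeholders, not taken from this post.
cat > gpu-smoke-test.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: gpu-smoke-test
spec:
  restartPolicy: Never
  containers:
  - name: cuda
    image: registry.example.com/cuda-base:latest   # hypothetical CUDA-enabled image
    command: ["nvidia-smi"]
    resources:
      limits:
        nvidia.com/gpu: 1   # extended resource advertised by the device plugin
EOF
# Submit it with: oc create -f gpu-smoke-test.yaml
```

Because nvidia.com/gpu is an extended resource, setting it under `limits` is enough; the scheduler will only place the pod on a node with a free GPU.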

Let's begin by configuring cluster autoscaling for our GPU devices. This allows us to create workloads that request GPU resources, and the cluster will then automatically scale our GPU nodes up and down depending on the number of pending requests for these devices.

The first step is to create a ClusterAutoscaler resource definition, for example:

    $ cat 0001-clusterautoscaler.yaml
    apiVersion: "autoscaling.openshift.io/v1"
    kind: "ClusterAutoscaler"
    metadata:
      name: "default"
    spec:
      podPriorityThreshold: -10
      resourceLimits:
        maxNodesTotal: 24
        gpus:
          - type: nvidia.com/gpu
            min: 0
            max: 16
      scaleDown:
        enabled: true
        delayAfterAdd: 10m
        delayAfterDelete: 5m
        delayAfterFailure: 30s
        unneededTime: 10m

    $ oc create -f 0001-clusterautoscaler.yaml
    clusterautoscaler.autoscaling.openshift.io/default created

Here we set the lower and upper bounds on the total number of nvidia.com/gpu resources that the autoscaler may provision across the cluster (0 to 16 in this example).

After we deploy the ClusterAutoscaler, we deploy the MachineAutoscaler resource that references the MachineSet that is used to scale the cluster:

    $ cat 0002-machineautoscaler.yaml
    apiVersion: "autoscaling.openshift.io/v1beta1"
    kind: "MachineAutoscaler"
    metadata:
      name: "gpu-worker-us-east-1a"
      namespace: "openshift-machine-api"
    spec:
      minReplicas: 1
      maxReplicas: 6
      scaleTargetRef:
        apiVersion: machine.openshift.io/v1beta1
        kind: MachineSet
        name: gpu-worker-us-east-1a

    $ oc create -f 0002-machineautoscaler.yaml
    machineautoscaler.autoscaling.openshift.io/gpu-worker-us-east-1a created

The metadata name should be a unique MachineAutoscaler name, and the name under scaleTargetRef at the end of the file should be the name of an existing MachineSet.

Looking at our cluster, we check what MachineSets are available:

    $ oc get machinesets -n openshift-machine-api
    NAME                                   DESIRED   CURRENT   READY   AVAILABLE   AGE
    sj-022820-01-h4vrj-worker-us-east-1a   1         1         1       1           4h45m
    sj-022820-01-h4vrj-worker-us-east-1b   1         1         1       1           4h45m
    sj-022820-01-h4vrj-worker-us-east-1c   1         1         1       1           4h45m

In this example the third MachineSet sj-022820-01-h4vrj-worker-us-east-1c is the one that has GPU nodes.

    $ oc get machineset sj-022820-01-h4vrj-worker-us-east-1c -n openshift-machine-api -o yaml
    apiVersion: machine.openshift.io/v1beta1
    kind: MachineSet
    metadata:
      name: sj-022820-01-h4vrj-worker-us-east-1c
      namespace: openshift-machine-api
    …
    spec:
      replicas: 1
    …
        spec:
          metadata:
    …
              instanceType: p3.2xlarge
              kind: AWSMachineProviderConfig
              placement:
                availabilityZone: us-east-1c
                region: us-east-1

We can create our MachineAutoscaler resource definition, which would look like this:

    $ cat 0002-machineautoscaler.yaml
    apiVersion: "autoscaling.openshift.io/v1beta1"
    kind: "MachineAutoscaler"
    metadata:
      name: "sj-022820-01-h4vrj-worker-us-east-1c"
      namespace: "openshift-machine-api"
    spec:
      minReplicas: 1
      maxReplicas: 6
      scaleTargetRef:
        apiVersion: machine.openshift.io/v1beta1
        kind: MachineSet
        name: sj-022820-01-h4vrj-worker-us-east-1c

    $ oc create -f 0002-machineautoscaler.yaml
    machineautoscaler.autoscaling.openshift.io/sj-022820-01-h4vrj-worker-us-east-1c created
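Since the only values that change between clusters are the MachineSet name and the replica bounds, the manifest can also be generated rather than edited by hand. The helper below is a sketch of that idea; `render_machineautoscaler` is a made-up name, and it simply reuses the MachineSet name as the MachineAutoscaler name, as the example above does.

```shell
# Print a MachineAutoscaler manifest for an existing MachineSet.
# Usage: render_machineautoscaler <machineset-name> <min-replicas> <max-replicas>
# render_machineautoscaler is an illustrative helper, not an oc subcommand.
render_machineautoscaler() {
  cat <<EOF
apiVersion: autoscaling.openshift.io/v1beta1
kind: MachineAutoscaler
metadata:
  name: $1
  namespace: openshift-machine-api
spec:
  minReplicas: $2
  maxReplicas: $3
  scaleTargetRef:
    apiVersion: machine.openshift.io/v1beta1
    kind: MachineSet
    name: $1
EOF
}
```

For the GPU MachineSet in this cluster, the equivalent of the file above would be `render_machineautoscaler sj-022820-01-h4vrj-worker-us-east-1c 1 6 | oc create -f -`.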

We can now start to deploy RAPIDs using shared storage between multiple instances. Begin by creating a new project:

$ oc new-project rapids

Assuming you have a StorageClass that provides ReadWriteMany access, such as OpenShift Container Storage with CephFS, we can create a PVC to attach to our RAPIDS instances (`storageClassName` is the name of the StorageClass):

    $ cat 0003-pvc-for-ceph.yaml
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: rapids-cephfs-pvc
    spec:
      accessModes:
        - ReadWriteMany
      resources:
        requests:
          storage: 25Gi
      storageClassName: example-storagecluster-cephfs

    $ oc create -f 0003-pvc-for-ceph.yaml
    persistentvolumeclaim/rapids-cephfs-pvc created

    $ oc get pvc -n rapids
    NAME                STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS                    AGE
    rapids-cephfs-pvc   Bound    pvc-a6ba1c38-6498-4b55-9565-d274fb8b003e   25Gi       RWX            example-storagecluster-cephfs   33s

Now that we have our shared storage deployed we can finally deploy the RAPIDs template and create the new application inside our rapids namespace:

    $ oc create -f 0004-rapids_template.yaml
    template.template.openshift.io/rapids created

    $ oc new-app rapids
    --> Deploying template "rapids/rapids" to project rapids

         RAPIDS
         ---------
         Template for RAPIDS

         A RAPIDS pod has been created.

         * With parameters:
            * Number of GPUs=1
            * Rapids instance number=1

    --> Creating resources ...
        service "rapids" created
        route.route.openshift.io "rapids" created
        pod "rapids" created
    --> Success
        Access your application via route 'rapids-rapids.apps.sj-022820-01.perf-testing.devcluster.openshift.com'
        Run 'oc status' to view your app.

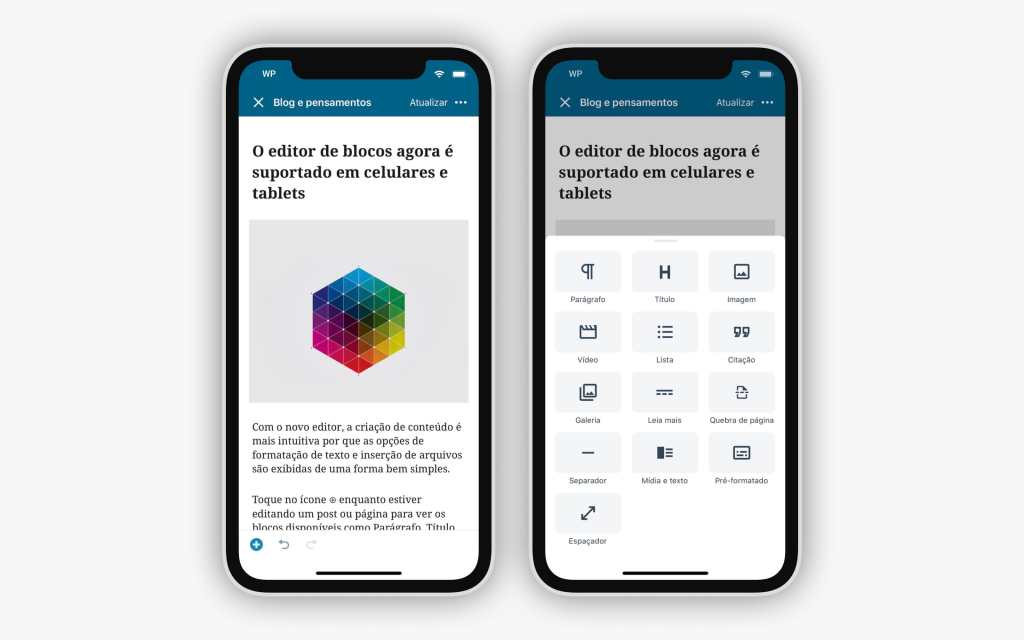

In a browser we can now load the route that the template created above: rapids-rapids.apps.sj-022820-01.perf-testing.devcluster.openshift.com

Image shows example notebook running using GPUs on OpenShift

We can also see on our GPU node that RAPIDS is running on it and using the GPU resource (replace <gpu-node-name> with the name of your GPU node):

    $ oc describe node <gpu-node-name>

Given that more than one person wants to run Jupyter notebooks, let's create a second RAPIDS instance with its own dedicated GPU.

    $ oc new-app rapids -p INSTANCE=2
    --> Deploying template "rapids/rapids" to project rapids

         RAPIDS
         ---------
         Template for RAPIDS

         A RAPIDS pod has been created.

         * With parameters:
            * Number of GPUs=1
            * Rapids instance number=2

    --> Creating resources ...
        service "rapids2" created
        route.route.openshift.io "rapids2" created
        pod "rapids2" created
    --> Success
        Access your application via route 'rapids2-rapids.apps.sj-022820-01.perf-testing.devcluster.openshift.com'
        Run 'oc status' to view your app.

But we just used our only GPU resource on our GPU node, so the new deployment of rapids (rapids2) is not schedulable due to insufficient GPU resources.

    $ oc get pods -n rapids
    NAME      READY   STATUS    RESTARTS   AGE
    rapids    1/1     Running   0          30m
    rapids2   0/1     Pending   0          2m44s

If we look at the events for the rapids2 pod:

    $ oc describe pod/rapids2 -n rapids
    …
    Events:
      Type     Reason            Age        From                Message
      ----     ------            ----       ----                -------
      Warning  FailedScheduling  <unknown>  default-scheduler   0/9 nodes are available: 9 Insufficient nvidia.com/gpu.
      Normal   TriggeredScaleUp  44s        cluster-autoscaler  pod triggered scale-up: [{openshift-machine-api/sj-022820-01-h4vrj-worker-us-east-1c 1->2 (max: 6)}]

We just need to wait for the ClusterAutoscaler and MachineAutoscaler to do their job and scale up the MachineSet as we see above. Once the new node is created:

    $ oc get no
    NAME                         STATUS   ROLES    AGE   VERSION
    (old nodes)
    …
    ip-10-0-167-0.ec2.internal   Ready    worker   72s   v1.16.2

The new RAPIDs instance will deploy to the new node once it becomes Ready with no user intervention.
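If you would rather block until the pending instance is up than poll `oc get pods`, `oc wait` can do the waiting. The wrapper below is a sketch; the helper name and the 15-minute default timeout are assumptions, chosen only to leave room for a new node to boot and for the GPU Operator to prepare it.

```shell
# Block until a pod reports the Ready condition, or give up after a timeout.
# Usage: wait_for_pod_ready <pod-name> <namespace> [timeout]
# wait_for_pod_ready is an illustrative wrapper around `oc wait`;
# the 15m default is an assumption, not a value from this post.
wait_for_pod_ready() {
  oc wait --for=condition=Ready "pod/$1" -n "$2" --timeout="${3:-15m}"
}
```

In this walkthrough the call would be `wait_for_pod_ready rapids2 rapids`.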

To summarize, the new NVIDIA GPU operator simplifies the use of GPU resources in OpenShift clusters. In this blog we’ve demonstrated the use-case for multi-user RAPIDs development using NVIDIA GPUs. Additionally we’ve used OpenShift Container Storage and the ClusterAutoscaler to automatically scale up our special resource nodes as they are being requested by applications.

As you observed, the NVIDIA GPU Operator is already relatively easy to deploy using Helm, and work is ongoing to support deployments directly from OperatorHub, simplifying this process even further.

For more information on the NVIDIA GPU Operator and OpenShift, please see the official NVIDIA documentation.

1 – Helm 3 is in Tech Preview in OpenShift 4.3 and will be GA in OpenShift 4.4.

The post Simplifying deployments of accelerated AI workloads on Red Hat OpenShift with NVIDIA GPU Operator appeared first on Red Hat OpenShift Blog.
