At Docker, we are incredibly proud of our vibrant, diverse and creative community. From time to time, we feature cool contributions from the community on our blog to highlight some of the great work our community does. Are you working on something awesome with Docker? Send your contributions to Ajeet Singh Raina (@ajeetraina) on the Docker Community Slack and we might feature your work!

URL shortening is a widely adopted technique that’s used to create short, condensed, and unique aliases for long URL links. Websites like tinyurl.com, bit.ly and ow.ly offer online URL shortener services, while social media sites integrate shorteners right into their product, like Twitter with its use of t.co. This is especially important for Twitter, where shortened links allow users to share long URLs in a Tweet while still fitting in the maximum number of characters for their message.

Why are URL shortener techniques so popular? First, the URL shortener technique allows you to create a short URL that is easy to remember and manage. Say, if you have a brand name, a short URL just consisting of a snippet of your company name is easier to identify and remember.

Second, oversized and hard-to-guess URLs might sometimes look too suspicious and clunky. Imagine a website URL link that has UTM parameters embedded. UTMs are snippets of text added to the end of a URL to help marketers track where website traffic comes from if users click a link to this URL. With too many letters, backslashes and question marks, a long URL might look insecure. Some users might still think that there is a security risk involved with a shortened URL as you cannot tell where you’re going to land, but there are services like Preview mode that allows you to see a preview version of long URL before it instantly redirects you to the actual site.

How do they actually work? Whenever a user clicks a link (say, https://tinyurl.com/2p92vwuh), an HTTP request is sent to the backend server with the full URL. The backend server reads the path part(2p92vwuh) that maps to the database that stores a description, name, and the real URL. Then it issues a redirect, which is an HTTP 302 response with the target URL in the header.

Building the application

In this blog tutorial, you’ll learn how to build a basic URL shortener using Python and Django.

First, you’ll create a basic application in Python without using Docker. You’ll see how the application lets you shorten a URL. Next, you’ll build a Docker image for that application. You’ll also learn how Docker Compose can help you rapidly deploy your application within containers. Let’s jump in.

Key Components

Here’s what you’ll need to use for this tutorial:

Git

GitHub account

Python 3.8+ and virtualenv

Django

Microsoft Visual Studio Code

Docker Desktop

Getting Started

Once you have Python 3.8+ installed on your system, follow these steps to build a basic URL shortener clone from scratch.

Step 1. Create a Python virtual environment

Virtualenv is a tool for creating isolated virtual python environments. It’s a self-contained directory tree that contains a Python installation from a particular version of Python, as well as a number of additional packages.

The venv module is used to create and manage virtual environments. In most of the cases, venv is usually the most recent version of Python. If you have multiple versions of Python, you can create a specific Python version.

Use this command to create a Python virtual environment to install packages locally

mkdir -p venv

python3 -m venv venv

The above command will create a directory if it doesn’t exist and also create sub-directories that contain a copy of the Python interpreter and a number of supporting files.

$ tree venv -L 2

venv

├── bin

│ ├── Activate.ps1

│ ├── activate

│ ├── activate.csh

│ ├── activate.fish

│ ├── easy_install

│ ├── easy_install-3.8

│ ├── pip

│ ├── pip3

│ ├── pip3.8

│ ├── python -> python3

│ └── python3 -> /usr/bin/python3

├── include

├── lib

│ └── python3.8

├── lib64 -> lib

└── pyvenv.cfg

5 directories, 12 files

Once you’ve created a virtual environment, you’ll need to activate it:

source ./venv/bin/activate

Step 2. Install Django

The easiest way to install Django is to use the standalone pip installer. PIP(Preferred Installer Program) is the most popular package installer for Python and is a command-line utility that helps you to manage your Python 3rd-party packages. Use the following command to update the pip package and then install Django:

pip install -U pip

pip install Django

You’ll see the following results:

Collecting django

Downloading Django-4.0.4-py3-none-any.whl (8.0 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 8.0/8.0 MB 15.9 MB/s eta 0:00:00

Collecting asgiref<4,>=3.4.1

Downloading asgiref-3.5.2-py3-none-any.whl (22 kB)

Collecting sqlparse>=0.2.2

Downloading sqlparse-0.4.2-py3-none-any.whl (42 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 42.3/42.3 kB 1.7 MB/s eta 0:00:00

Collecting backports.zoneinfo

Downloading backports.zoneinfo-0.2.1.tar.gz (74 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 74.1/74.1 kB 3.0 MB/s eta 0:00:00

Installing build dependencies … done

…..

Step 3. Create a Django project

The django-admin is Django’s command-line utility for administrative tasks. The utility helps you automatically create manage.py in each Django project.

mkdir -p src/ && cd src

django-admin startproject url shortener

Django Project Structure:

$ tree urlshortener/

urlshortener/

├── manage.py

└── urlshortener

├── __init__.py

├── asgi.py

├── settings.py

├── urls.py

└── wsgi.py

1 directory, 6 files

In this directory tree:

manage.py is Django’s CLI

settings.py is where all of the global Django project’s settings reside

urls.py is where all the URL mappings reside

wsgi.py is an entry-point for WSGI-compatible servers to serve the project in production

Step 4. Creating a Django app for shortening the URL

Change directory to src/urlshortener and run the following command:

cd src/urlshortener

python manage.py startapp main

It will create a new subdirectory called “main” under src/urlshortener as shown below:

src

└── urlshortener

├── main

│ ├── admin.py

│ ├── apps.py

│ ├── __init__.py

│ ├── migrations

│ ├── models.py

│ ├── tests.py

│ └── views.py

├── manage.py

└── urlshortener

In this directory tree:

admin.py is where Django’s built-in admin configuration resides

migrations is where all of the database migrations reside

models.py is where all of the database models for this Django app exist

tests.py is self-explanatory

views.py is where “controller” functions reside, the functions that are in charge of creating the views

For this tutorial, you’ll only leverage the last one.

Step 5. Create the URL Shortener

pyshorteners is a simple URL shortening API wrapper Python library. With pyshorteners , you can generate a short url or expand another one is as easy as typing

Run the following command to install the package pyshorteners:

pip install pyshorteners

Run the following command to save all your python libraries with current version into requirements.txt file:

pip freeze > requirements.txt

Once the command is successfully run, the requirements.txt gets created with the following entries:

asgiref==3.5.2

backports.zoneinfo==0.2.1

certifi==2022.5.18.1

charset-normalizer==2.0.12

Django==4.0.5

idna==3.3

pyshorteners==1.0.1

requests==2.27.1

sqlparse==0.4.2

urllib3==1.26.9

Head to main/views.py and edit it accordingly:

from django.shortcuts import render

from django.http import HttpResponse

import pyshorteners

# Create your views here.

def shorten(request, url):

shortener = pyshorteners.Shortener()

shortened_url = shortener.chilpit.short(url)

return HttpResponse(f’Shortened URL: <a href=”{shortened_url}”>{shortened_url}</a>’)

In this code listing:

In line 1, the render function is imported by default. You won’t remove it now, as you’re going to use it later.

In line 2, you’ve imported the class name HttpResponse. This is the type returned with an HTML text.

In line 3, the library pyshorteners is imported, which you use to shorten the given URLs.

In line 7, the function gets two parameters; a request that is mandatory, and a url that is set by Django. We’ll get to it in the next file.

In line 8, you initialized the shortener object.

In line 9, the shortened URL is generated by sending a request to chilp.it.

In line 10, the shortened URL is returned as a minimal HTML link.

Next, let’s assign a URL to this function.

Create a urls.py under main:

touch main/urls.py

Add the below code:

from django.urls import path

from . import views

urlpatterns = [

path(‘shorten/<str:url>’, views.shorten, name=’shorten’),

]

The URL mapping specifies which function to use and which path parameters there are. In this case, the URL is mapped to the function shorten and with a string parameter named url.

Now head back to the urlshortener/ directory and include the newly created urls.py file:

from django.contrib import admin

from django.urls import include, path

urlpatterns = [

path(”, include(‘main.urls’)),

path(‘admin/’, admin.site.urls),

]

Now, run the development server:

python manage.py runserver

Open http://127.0.0.1:8000/shorten/google.com in your browser and type Enter. It will show you a shortened URL as shown in the following screenshot.

Step 6. Creating the form

In this section, you’ll see how to create a landing page.

mkdir -p main/templates/main

touch main/templates/main/index.html

Open the index.html and fill it up the with following content:

<form action=”{% url ‘main:shorten_post’ %}” method=”post”>

{% csrf_token %}

<fieldset>

<input type=”text” name=”url”>

</fieldset>

<input type=”submit” value=”Shorten”>

</form>

In this file:

The form action which the URL form sends the request to, is defined by Django’s template tag url. The tag in use is the one created in the URL mappings. Here, the URL tag main:shorten_post doesn’t exist yet. You’ll create it later.

The CSRF token is a Django security measure that works out-of-the-box.

Head over to main/views.py under the project directory src/urlshortener/ and add two functions, namely index and shorten_post at the end of the file.

from django.shortcuts import render

from django.http import HttpResponse

import pyshorteners

def index(request):

return render(request, ‘main/index.html’)

def shorten_post(request):

return shorten(request, request.POST[‘url’])

. . .

Here,

The function index renders the HTML template created in the previous step, using the render function.

The function shorten_post is a function created to be used for the post requests. The reason for its creation (and not using the previous function) is because Django’s URL mapping only works with path parameters and not post request parameters. So, here, the parameter url is read from the post request and passed to the previously available shorten function.

Now go to the main/urls.py to bind the functions to URLs:

from django.urls import path

from . import views

urlpatterns = [

path(”, views.index, name=’index’),

path(‘shorten’, views.shorten_post, name=’shorten_post’),

path(‘shorten/<str:url>’, views.shorten, name=’shorten’),

]

Next, head over to urlshortener/settings.py under src/urlshortener/urlshortener directory and add ‘main.apps.MainConfig’ to the beginning of the list INSTALLED_APPS:

. . .

INSTALLED_APPS = [

‘main.apps.MainConfig’,

‘django.contrib.admin’,

‘django.contrib.auth’,

‘django.contrib.contenttypes’,

‘django.contrib.sessions’,

‘django.contrib.messages’,

‘django.contrib.staticfiles’,

]

. . .

Step 7. Creating a Database Models

Now, to save the URLs and their short versions locally, you should create database models for them. Head to main/models.py under src/urlshortener/main and create the following model:

from django.db import models

# Create your models here.

class Question(models.Model):

original_url = models.CharField(max_length=256)

hash = models.CharField(max_length=10)

creation_date = models.DateTimeField(‘creation date’)

We’ll assume that the given URLs fit in 256 characters and the short version are less than 10 characters (usually 7 characters would suffice).

Now, create the database migrations:

python manage.py makemigrations

It will show the following results:

Migrations for ‘main':

main/migrations/0001_initial.py

– Create model Question

A new file will be created under main/migrations.

main % tree migrations

migrations

├── 0001_initial.py

├── __init__.py

└── __pycache__

└── __init__.cpython-39.pyc

1 directory, 3 files

Now to apply the database migrations to the default SQLite DB, run:

python manage.py migrate

It shows the following results:

urlshortener % python3 manage.py migrate

Operations to perform:

Apply all migrations: admin, auth, contenttypes, main, sessions

Running migrations:

Applying contenttypes.0001_initial… OK

Applying auth.0001_initial… OK

Applying admin.0001_initial… OK

Applying admin.0002_logentry_remove_auto_add… OK

Applying admin.0003_logentry_add_action_flag_choices… OK

Applying contenttypes.0002_remove_content_type_name… OK

Applying auth.0002_alter_permission_name_max_length… OK

Applying auth.0003_alter_user_email_max_length… OK

Applying auth.0004_alter_user_username_opts… OK

Applying auth.0005_alter_user_last_login_null… OK

Applying auth.0006_require_contenttypes_0002… OK

Applying auth.0007_alter_validators_add_error_messages… OK

Applying auth.0008_alter_user_username_max_length… OK

Applying auth.0009_alter_user_last_name_max_length… OK

Applying auth.0010_alter_group_name_max_length… OK

Applying auth.0011_update_proxy_permissions… OK

Applying auth.0012_alter_user_first_name_max_length… OK

Applying main.0001_initial… OK

Applying sessions.0001_initial… OK

Now that you have the database models, it’s time to create a shortener service. Create a Python file main/service.py and add the following functionality:

import random

import string

from django.utils import timezone

from .models import LinkMapping

def shorten(url):

random_hash = ”.join(random.choice(string.ascii_uppercase + string.ascii_lowercase + string.digits) for _ in range(7))

mapping = LinkMapping(original_url=url, hash=random_hash, creation_date=timezone.now())

mapping.save()

return random_hash

def load_url(url_hash):

return LinkMapping.objects.get(hash=url_hash)

In this file, in the function shorten, you create a random 7-letter hash, assign the entered URL to this hash, save it into the database, and finally return the hash.

In load_url, you load the original URL from the given hash.

Now, create a new function in the views.py for redirecting:

from django.shortcuts import render, redirect

from . import service

. . .

def redirect_hash(request, url_hash):

original_url = service.load_url(url_hash).original_url

return redirect(original_url)

Then create a URL mapping for the redirect function:

urlpatterns = [

path(”, views.index, name=’index’),

path(‘shorten’, views.shorten_post, name=’shorten_post’),

path(‘shorten/<str:url>’, views.shorten, name=’shorten’),

path(‘<str:url_hash>’, views.redirect_hash, name=’redirect’),

]

You create a URL mapping for the hashes directly under the main host, e.g. example.com/xDk8vdX. If you want to give it an indirect mapping, like example.com/r/xDk8vdX, then the shortened URL will be longer.

The only thing you have to be careful about is the other mapping example.com/shorten. We made this about the redirect mapping, as otherwise it would’ve resolved to redirect as well.

The final step would be changing the shorten view function to use the internal service:

from django.shortcuts import render, redirect

from django.http import HttpResponse

from django.urls import reverse

from . import service

. . .

def shorten(request, url):

shortened_url_hash = service.shorten(url)

shortened_url = request.build_absolute_uri(reverse(‘redirect’, args=[shortened_url_hash]))

return HttpResponse(f’Shortened URL: <a href=”{shortened_url}”>{shortened_url}</a>’)

You can also remove the third-party shortener library from requirements.txt, as you won’t use it anymore.

Using PostgreSQL

To use PostgreSQL instead of SQLite, you change the config in settings.py:

import os

. . .

DATABASES = {

‘default': {

‘ENGINE': ‘django.db.backends.sqlite3′,

‘NAME': BASE_DIR / ‘db.sqlite3′,

}

}

if os.environ.get(‘POSTGRES_NAME’):

DATABASES = {

‘default': {

‘ENGINE': ‘django.db.backends.postgresql’,

‘NAME': os.environ.get(‘POSTGRES_NAME’),

‘USER': os.environ.get(‘POSTGRES_USER’),

‘PASSWORD': os.environ.get(‘POSTGRES_PASSWORD’),

‘HOST': ‘db’,

‘PORT': 5432,

}

}

The if statement means it only uses the PostgreSQL configuration if it exists in the environment variables. If not set, Django will keep using the SQLite config.

Create a base.html under main/templates/main:

<!DOCTYPE html>

<html lang=”en”>

<head>

<meta charset=”UTF-8″>

<title>Link Shortener</title>

<link href=”https://unpkg.com/material-components-web@latest/dist/material-components-web.min.css” rel=”stylesheet”>

<script src=”https://unpkg.com/material-components-web@latest/dist/material-components-web.min.js”></script>

</head>

<style>

#main-card {

margin:0 auto;

display: flex;

width: 50em;

align-items: center;

}

</style>

<body class=”mdc-typography”>

<div id=”main-card”>

{% block content %}

{% endblock %}

</div>

</body>

Alter the index.html to use material design:

{% extends ‘main/base.html’ %}

{% block content %}

<form action=”{% url ‘shorten_post’ %}” method=”post”>

{% csrf_token %}

<label class=”mdc-text-field mdc-text-field–outlined”>

<span class=”mdc-notched-outline”>

<span class=”mdc-notched-outline__leading”></span>

<span class=”mdc-notched-outline__notch”>

<span class=”mdc-floating-label” id=”my-label-id”>URL</span>

</span>

<span class=”mdc-notched-outline__trailing”></span>

</span>

<input type=”text” name=”url” class=”mdc-text-field__input” aria-labelledby=”my-label-id”>

</label>

<button class=”mdc-button mdc-button–outlined” type=”submit”>

<span class=”mdc-button__ripple”></span>

<span class=”mdc-button__label”>Shorten</span>

</button>

</form>

{% endblock %}

Create another view for the response, namely link.html:

{% extends ‘main/base.html’ %}

{% block content %}

<div class=”mdc-card__content”>

<p>Shortened URL: <a href=”{{shortened_url}}”>{{shortened_url}}</a></p>

</div>

{% endblock %}

Now, get back to views.py and change the shorten function to render instead of returning a plain HTML:

. . .

def shorten(request, url):

shortened_url_hash = service.shorten(url)

shortened_url = request.build_absolute_uri(reverse(‘redirect’, args=[shortened_url_hash]))

return render(request, ‘main/link.html’, {‘shortened_url': shortened_url})

Click here to access the code previously developed for this example. You can directly clone the repository and try executing the following commands to bring up the application.

git clone https://github.com/aerabi/link-shortener

cd link-shortener/src/urlshortener

python manage.py migrate

python manage.py runserver

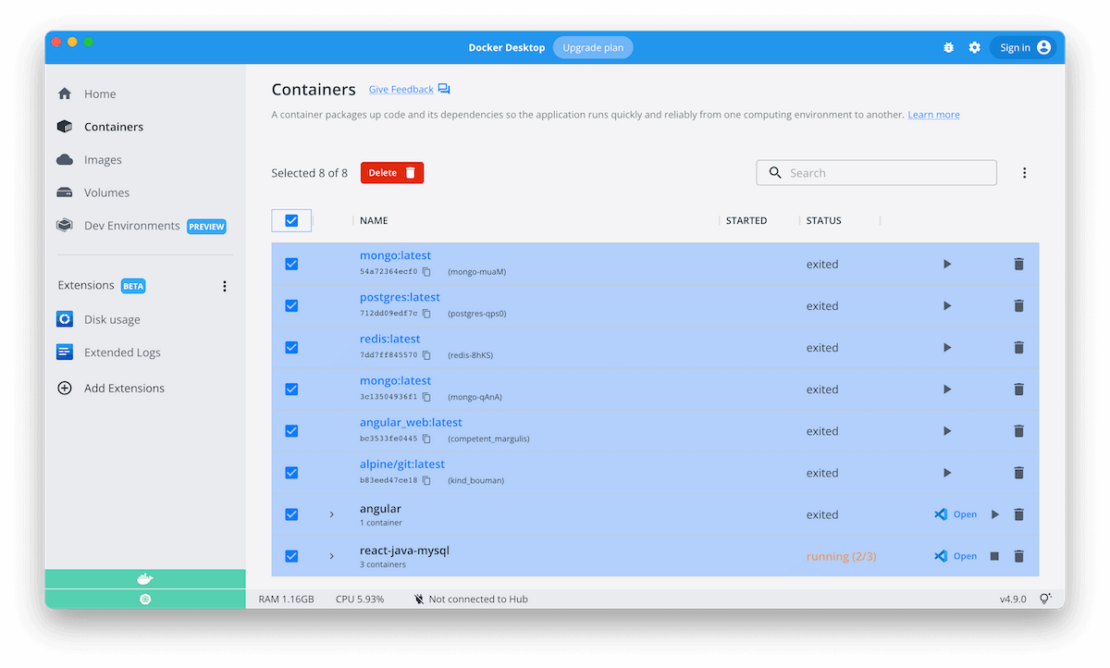

Step 8. Containerizing the Django App

Docker helps you containerize your Django app, letting you bundle together your complete Django application, runtime, configuration, and OS-level dependencies. This includes everything needed to ship a cross-platform, multi-architecture web application.

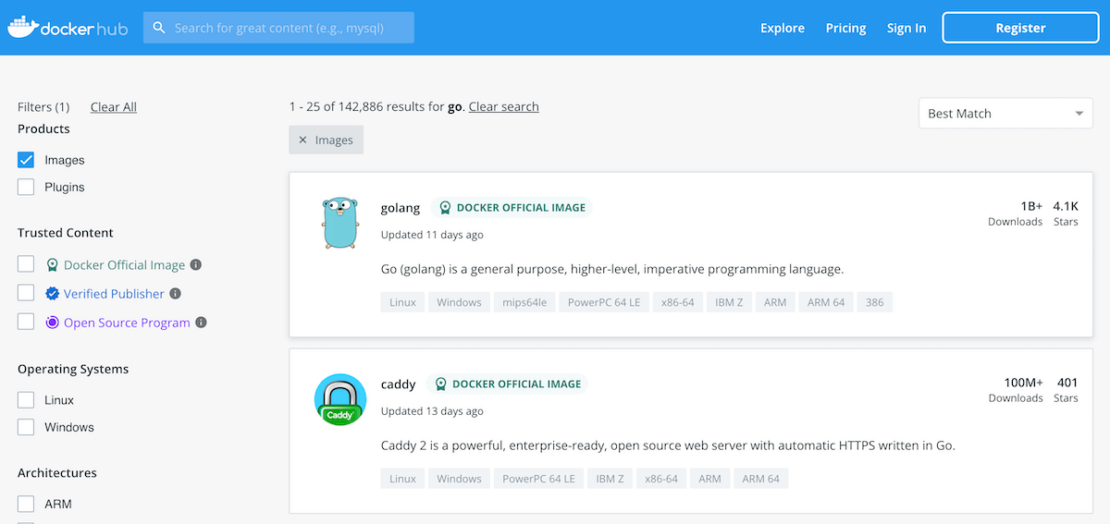

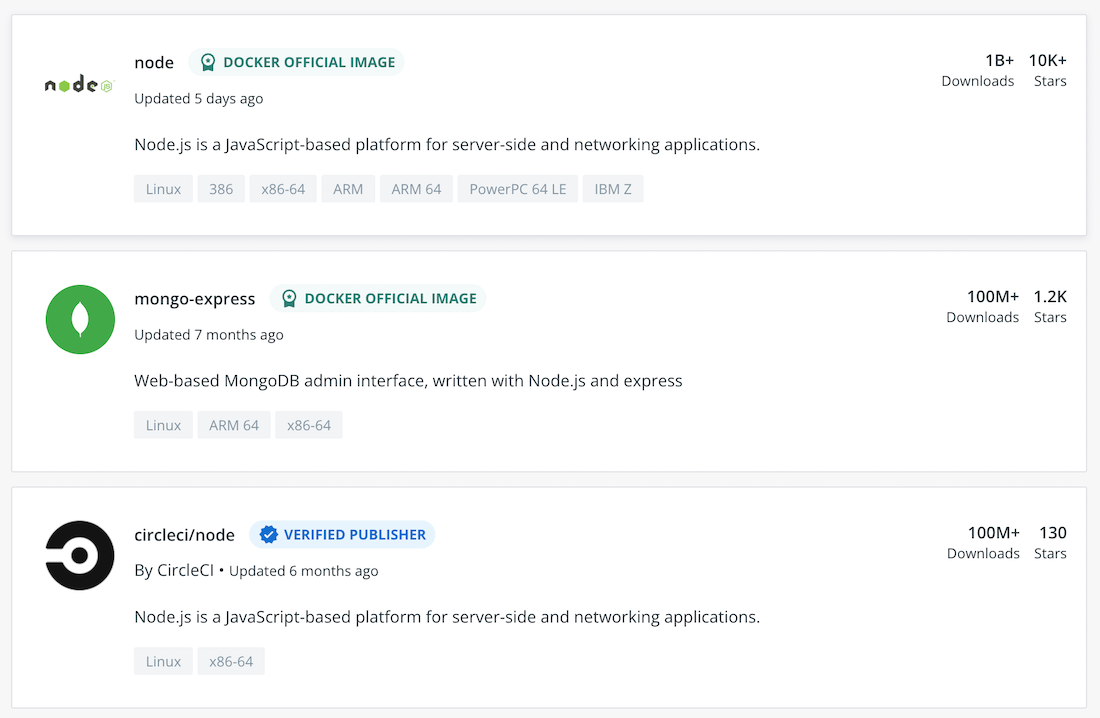

Let’s look at how you can easily run this app inside a Docker container using a Docker Official Image. First, you’ll need to download Docker Desktop. Docker Desktop accelerates the image-building process while making useful images more discoverable. Complete the installation process once your download is finished.

You’ve effectively learned how to build a sample Django app. Next, let’s see how to create an associated Docker image for this application.

Docker uses a Dockerfile to specify each image’s “layers.” Each layer stores important changes stemming from the base image’s standard configuration. Create the following empty Dockerfile in your Django project.

touch Dockerfile

Use your favorite text editor to open this Dockerfile. You’ll then need to define your base image.

Whenever you’re creating a Docker image to run a Python program, it’s always recommended to use a smaller base image that helps in speeding up the build process and launching containers at a faster pace.

FROM python:3.9

Next, let’s quickly create a directory to house our image’s application code. This acts as the working directory for your application

RUN mkdir /code

WORKDIR /code

It’s always recommended to update all the packages using the pip command.

RUN pip install –upgrade pip

The following COPY instruction copies the requirements.txt file from the host machine to the container image and stores it under /code directory.

COPY requirements.txt /code/

RUN pip install -r requirements.txt

Next, you need to copy all the directories of the Django project. It includes Django source code and pre-environment configuration files of the artifact.

COPY . /code/

Next, use the EXPOSE instruction to inform Docker that the container listens on the specified network ports at runtime. The EXPOSE instruction doesn’t actually publish the port. It functions as a type of documentation between the person who builds the image and the person who runs the container, about which ports are intended to be published.

EXPOSE 8000

Finally, in the last line of the Dockerfile, specify CMD so as to provide defaults for an executing container. These defaults include Python executables. The runserver command is a built-in subcommand of Django’s manage.py file that will start up a development server for this specific Django project.

CMD [“python”, “manage.py”, “runserver”, “0.0.0.0:8000″]

Here’s your complete Dockerfile:

FROM python:3.9

RUN mkdir /code

WORKDIR /code

RUN pip install –upgrade pip

COPY requirements.txt /code/

RUN pip install -r requirements.txt

COPY . /code/

EXPOSE 8000

CMD [“python”, “manage.py”, “runserver”, “0.0.0.0:8000″]

Step 9. Building Your Docker Image

Next, you’ll need to build your Docker image. Enter the following command to kickstart this process, which produces an output soon after:

docker build -t urlshortener .

Step 10. Run Your Django Docker Container

Docker runs processes in isolated containers. A container is a process that runs on a host, which it’s either local or remote. When an operator executes docker run, the container process that runs is isolated with its own file system, networking, and separate process tree from the host.

The following docker run command first creates a writeable container layer over the specified image, and then starts it.

docker run -p 8000:8000 -t urlshortener

Step 11. Running URL Shortener app using Docker Compose

Finally, it’s time to create a Docker Compose file. This single YAML file lets you specify your frontend app and your PostgreSQL database:

services:

web:

build:

context: ./src/urlshortener/

dockerfile: Dockerfile

command: gunicorn urlshortener.wsgi:application –bind 0.0.0.0:8000

ports:

– 8000:8000

environment:

– POSTGRES_NAME=postgres

– POSTGRES_USER=postgres

– POSTGRES_PASSWORD=postgres

depends_on:

– db

db:

image: postgres

volumes:

– postgresdb:/var/lib/postgresql/data

environment:

– POSTGRES_DB=postgres

– POSTGRES_USER=postgres

– POSTGRES_PASSWORD=postgres

volumes:

postgresdb:

Your example application has the following parts:

Two services backed by Docker images: your frontend web app and your backend database

The frontend, accessible via port 8000

The depends_on parameter, letting you create the backend service before the frontend service starts

One persistent volume, attached to the backend

The environmental variables for your PostgreSQL database

You’ll then start your services using the docker-compose up command.

docker-compose up -d -—build

Note: If you’re using Docker Compose v1, the command line name is docker-compose, with a hyphen. If you’re using v2, which is shipped with Docker Desktop, you should omit the hyphen: docker compose.

docker-compose ps

NAME COMMAND SERVICE STATUS PORTS

link-shortener-db-1 “docker-entrypoint.s…” db running 5432/tcp

link-shortener-web-1 “gunicorn urlshorten…” web running 0.0.0.0:8000->8000/tcp

Now, it’s time to perform the migration:

docker-compose exec web python manage.py migrate

Just like that, you’ve created and deployed your Django URL-shortener app! This is usable in your browser, like before:

You can get the shortened URL by adding the URL as shown below:

Conclusion

Docker helps accelerate the process of building, running, and sharing modern applications. Docker Official Images help you develop your own unique applications, no matter what tech stack you’re accustomed to. With a single YAML file, we demonstrated how Docker Compose helps you easily build and deploy a Django-based URL shortener app in a matter of seconds. With just a few extra steps, you can apply this tutorial while building applications with much greater complexity.

Happy coding.

Quelle: https://blog.docker.com/feed/