Containerizing a Legendary PetClinic App Built with Spring Boot

Per the latest Health for Animals Report, over half of the global population (billions of households) is estimated to own a pet. In the U.S. alone, this is true for 70% of households.

A growing pet population means a greater need for veterinary care. In a survey by the World Small Animal Veterinary Association (WSAVA), three-quarters of veterinary associations shared that subpar access to veterinary medical products hampered their ability to meet patient needs and provide quality service.


The Spring Framework team is taking on this challenge with its PetClinic app. The Spring PetClinic is an open source sample application developed to demonstrate the database-oriented capabilities of Spring Boot, Spring MVC, and the Spring Data Framework. It’s based on this Spring stack and built with Maven.

PetClinic’s official version also showcases how these technologies work with Spring Data JPA. Overall, the Spring PetClinic community maintains nine PetClinic app forks and 18 repositories under Docker Hub. To learn how the PetClinic app works, check out Spring’s official resource.

Running the PetClinic app locally is simple. You can clone the repository, build a JAR file, and run it from the command line:

git clone https://github.com/dockersamples/spring-petclinic-docker

cd spring-petclinic-docker

./mvnw package

java -jar target/*.jar

You can then access PetClinic at http://localhost:8080 in your browser.

Why does the PetClinic app need containerization?

The biggest challenge developers face with Spring Boot apps like PetClinic is concurrency, or the need to do too many things simultaneously. Spring Boot apps can also inflate deployment binaries with unused dependencies. The resulting bloated JARs increase your overall application footprint and can hurt performance.

Other challenges include a steep learning curve and the complexity of building a customized logging mechanism. Developers have been looking for solutions to these problems. Unfortunately, the Docker Compose file in the project's official repository only shows how to containerize the database. It doesn't extend this to the complete application.

How can you offset these drawbacks? Docker simplifies and accelerates your workflows by letting you freely innovate with your choice of tools, application stacks, and deployment environments for each project. You can run your Spring Boot artifact directly within Docker containers. This lets you quickly create microservices. This guide will help you completely containerize your PetClinic solution.

Containerizing the PetClinic application

Docker helps you containerize your Spring app — letting you bundle together your complete Spring Boot application, runtime, configuration, and OS-level dependencies. This includes everything needed to ship a cross-platform, multi-architecture web application.

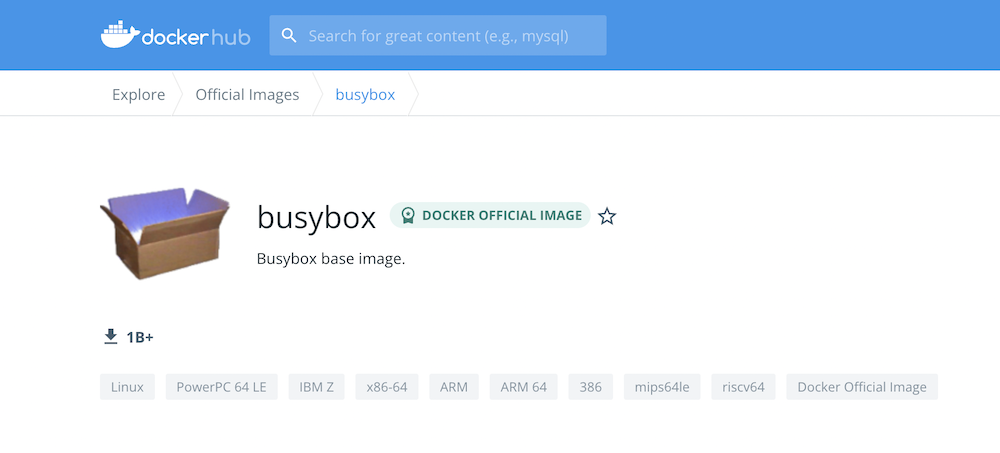

We’ll explore how to run this app within a Docker container, using a Docker Official Image. First, download Docker Desktop and complete the installation process. Docker Desktop gives you an easy-to-use UI and includes the Docker CLI, which you’ll use later on.

Docker uses a Dockerfile to specify each image’s layers. Each instruction that changes the filesystem, such as COPY or RUN, adds a new layer. That layer records only the changes made on top of the layers beneath it, starting from the base image.

Building a Dockerfile

A Dockerfile is a text document that contains the instructions to assemble a Docker image. When we have Docker build our image by executing the docker build command, Docker reads these instructions, executes them, and creates a Docker image as a result.

Let’s walk through the process of creating a Dockerfile for our application. First create the following empty Dockerfile in the root of your Spring project.

touch Dockerfile

You’ll then need to define your base image.

The upstream OpenJDK image no longer provides a JRE, so no official JRE images are produced. The official OpenJDK images contain only “vanilla” builds of the OpenJDK provided by Oracle or the relevant project lead. We therefore need an alternative.

One of the most popular Docker Official Images offering a production-grade JDK, along with matching JRE variants, is Eclipse Temurin. The Eclipse Temurin project provides code and processes that support building runtime binaries and associated technologies. Temurin is high-performance, enterprise-caliber, and cross-platform.

FROM eclipse-temurin:17-jdk-jammy
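You may want to confirm from inside the container which JVM the app actually runs on. A few lines of standard Java are enough for that. The sketch below is hypothetical: the class name and the minimum version of 17 are assumptions chosen to match the eclipse-temurin:17 tag used here. It relies only on Runtime.version() and the java.vendor system property, both part of the JDK.

```java
/** Hypothetical startup check: report the JVM in use and fail fast if it is older than expected. */
final class RuntimeCheck {

    /** True if the JVM's feature release (e.g. 17 for "17.0.6+10") is at least the minimum. */
    static boolean meetsMinimum(Runtime.Version version, int minimumFeature) {
        return version.feature() >= minimumFeature;
    }

    public static void main(String[] args) {
        Runtime.Version version = Runtime.version();
        // On an Eclipse Temurin image, java.vendor typically reports "Eclipse Adoptium".
        System.out.println(System.getProperty("java.vendor") + " " + version);
        if (!meetsMinimum(version, 17)) {
            throw new IllegalStateException("Expected Java 17 or newer, found " + version);
        }
    }
}
```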

Next, let’s set a working directory for the application code. WORKDIR creates the /app directory if it doesn’t already exist, and subsequent instructions run relative to it:

WORKDIR /app

The following COPY instruction copies the Maven wrapper and our pom.xml file from the host machine to the container image. The COPY command takes two parameters. The first tells Docker which file(s) you would like to copy into the image. The second tells Docker where you want those files to be copied. We’ll copy everything into our working directory called /app.

COPY .mvn/ .mvn

COPY mvnw pom.xml ./

Once the pom.xml file is inside the image, we can use the RUN instruction to execute ./mvnw dependency:resolve. This works just like running ./mvnw (or mvn) dependency:resolve locally on your machine. The difference is that the dependencies are downloaded into the image instead.

RUN ./mvnw dependency:resolve

The next thing we need to do is to add our source code into the image. We’ll use the COPY command just like we did with our pom.xml file above.

COPY src ./src
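Copying pom.xml and resolving dependencies before copying src is what makes this ordering pay off. Docker reuses a cached layer only while the instruction and any files it copies are unchanged. Once one layer is rebuilt, every later layer is rebuilt too.

The Java sketch below models that rule in a deliberately simplified way. It is not Docker's actual cache implementation, and all names are hypothetical. Each step's key hashes the previous key, the instruction text, and the contents of the files it copies.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.ArrayList;
import java.util.HexFormat;
import java.util.List;

/** Simplified, hypothetical model of Docker's layer cache (not the real algorithm). */
final class LayerCacheModel {

    /** One Dockerfile instruction plus the contents of any files it copies (empty for RUN). */
    record Step(String instruction, String inputContents) {}

    /** Chained cache keys: changing one step changes its key and every later key. */
    static List<String> cacheKeys(List<Step> steps) {
        List<String> keys = new ArrayList<>();
        String parent = "";
        for (Step step : steps) {
            parent = sha256(parent + "\n" + step.instruction() + "\n" + step.inputContents());
            keys.add(parent);
        }
        return keys;
    }

    /** Index of the first step that must be rebuilt, or the step count if all are cached. */
    static int firstRebuiltStep(List<Step> previous, List<Step> current) {
        List<String> before = cacheKeys(previous);
        List<String> after = cacheKeys(current);
        int i = 0;
        while (i < Math.min(before.size(), after.size()) && before.get(i).equals(after.get(i))) {
            i++;
        }
        return i;
    }

    private static String sha256(String text) {
        try {
            MessageDigest digest = MessageDigest.getInstance("SHA-256");
            return HexFormat.of().formatHex(digest.digest(text.getBytes(StandardCharsets.UTF_8)));
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("SHA-256 is always available in the JDK", e);
        }
    }
}
```

In this model, editing a Java file under src invalidates only the final COPY layer, so the slow dependency:resolve layer is reused. Editing pom.xml invalidates the dependency layer as well.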

Finally, we should tell Docker what command we want to run when our image is executed inside a container. We do this using the CMD instruction.

CMD ["./mvnw", "spring-boot:run"]

Here’s your complete Dockerfile:

FROM eclipse-temurin:17-jdk-jammy

WORKDIR /app

COPY .mvn/ .mvn

COPY mvnw pom.xml ./

RUN ./mvnw dependency:resolve

COPY src ./src

CMD ["./mvnw", "spring-boot:run"]

Create a .dockerignore file

To increase build performance, and as a general best practice, we recommend creating a .dockerignore file in the same directory as your Dockerfile. For this tutorial, your .dockerignore file should contain just one line:

target

This line excludes the target directory — which contains output from Maven — from Docker’s build context. There are many good reasons to carefully structure a .dockerignore file, but this simple file is good enough for now.

So, what’s this build context and why’s it essential? The docker build command builds Docker images from a Dockerfile and a context. This context is the set of files located in your specified PATH or URL. The build process can reference any of these files.

In practice, the build context is usually the directory where you work. This can be a folder on macOS or Windows, or a Linux directory, and it holds application components such as source code, configuration files, libraries, plugins, and build output. With a .dockerignore file, you decide which of these files and directories to exclude from the context when building your new image.
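The simplified Java model below shows what the single target line does. It makes two assumptions: glob patterns are evaluated with java.nio's PathMatcher, and excluding a directory also excludes everything beneath it. Docker's real matcher, which uses Go's filepath.Match rules plus ** and ! exceptions, differs in the details. The class name is hypothetical.

```java
import java.nio.file.FileSystems;
import java.nio.file.Path;
import java.nio.file.PathMatcher;
import java.util.List;
import java.util.stream.Collectors;

/** Hypothetical, simplified model of .dockerignore filtering (no "!" exceptions). */
final class BuildContextFilter {

    private final List<PathMatcher> matchers;

    BuildContextFilter(List<String> ignorePatterns) {
        this.matchers = ignorePatterns.stream()
                .map(String::strip)
                .filter(p -> !p.isEmpty() && !p.startsWith("#"))    // skip blank lines and comments
                .map(p -> p.startsWith("/") ? p.substring(1) : p)   // patterns are relative to the context root
                .map(p -> FileSystems.getDefault().getPathMatcher("glob:" + p))
                .collect(Collectors.toList());
    }

    /** A path is excluded if it, or any parent directory of it, matches an ignore pattern. */
    boolean isExcluded(String relativePath) {
        Path path = Path.of(relativePath).normalize();
        for (int i = 1; i <= path.getNameCount(); i++) {
            Path prefix = path.subpath(0, i);
            for (PathMatcher matcher : matchers) {
                if (matcher.matches(prefix)) {
                    return true;
                }
            }
        }
        return false;
    }

    /** The files that would be sent to the Docker daemon as build context. */
    List<String> contextFiles(List<String> allFiles) {
        return allFiles.stream().filter(f -> !isExcluded(f)).collect(Collectors.toList());
    }
}
```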

Building a Docker image

Let’s build our first Docker image:

docker build --tag petclinic-app .

Once the build process is completed, you can list out your images by running the following command:

$ docker images
REPOSITORY        TAG            IMAGE ID       CREATED             SIZE
petclinic-app     latest         76cb88b61d39   About an hour ago   559MB
eclipse-temurin   17-jdk-jammy   0bc7a4cbe8fe   5 weeks ago         455MB

With multi-stage builds, a Docker build can use one base image for compilation, packaging, and unit tests. A separate image holds the application runtime. This makes the final image more secure and smaller in size (since it doesn’t contain any development or debugging tools).

Multi-stage Docker builds help keep your builds reproducible and your images as lean as possible. You can create multiple stages within a Dockerfile and control which of them ends up in the image you ship.

Spring Boot uses a “fat JAR” as its default packaging format. If you inspect a fat JAR, you’ll see that your application code is a very small portion of the entire JAR, and it is also the portion that changes most frequently. The rest consists of Spring Framework and other third-party dependencies. A common optimization is to put the application into a separate layer from those dependencies. The dependencies layer forms the bulk of the fat JAR and rarely changes, so it only has to be downloaded once. After that, it is served from Docker’s layer cache on the host.
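Spring Boot’s layered JAR support formalizes this split. By default, it uses four layers: dependencies, spring-boot-loader, snapshot-dependencies, and application. The hypothetical helper below approximates that grouping from entry names alone. The real feature decides by dependency coordinates, and it also places local module dependencies in the application layer, so treat this as an illustration of the idea only.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical approximation of Spring Boot's default JAR layers, based on entry paths only. */
final class FatJarLayers {

    static String layerOf(String entryName) {
        if (entryName.startsWith("org/springframework/boot/loader/")) {
            return "spring-boot-loader";          // launcher classes; change only with Boot upgrades
        }
        if (entryName.startsWith("BOOT-INF/lib/")) {
            return entryName.contains("-SNAPSHOT")
                    ? "snapshot-dependencies"     // snapshot libraries may change between builds
                    : "dependencies";             // released libraries: the bulk of the JAR, rarely changes
        }
        return "application";                     // your classes, resources, and everything else
    }

    /** Groups entry names by layer, e.g. to see how much of the JAR each layer holds. */
    static Map<String, List<String>> group(List<String> entryNames) {
        Map<String, List<String>> layers = new TreeMap<>();
        for (String name : entryNames) {
            layers.computeIfAbsent(layerOf(name), k -> new ArrayList<>()).add(name);
        }
        return layers;
    }
}
```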

The Dockerfile below defines four stages:

- base resolves the dependencies and copies in the source code.
- development runs the app through Maven.
- build packages the fat JAR.
- production copies that JAR into a JRE-only image and runs it.

# Stage "base": JDK, Maven wrapper, resolved dependencies, and source code
FROM eclipse-temurin:17-jdk-jammy AS base

WORKDIR /app

COPY .mvn/ .mvn

COPY mvnw pom.xml ./

RUN ./mvnw dependency:resolve

COPY src ./src

# Stage "development": run from source with the mysql profile and a JDWP debugger on port 8000
FROM base AS development

CMD ["./mvnw", "spring-boot:run", "-Dspring-boot.run.profiles=mysql", "-Dspring-boot.run.jvmArguments='-agentlib:jdwp=transport=dt_socket,server=y,suspend=n,address=*:8000'"]

# Stage "build": compile, test, and package the fat JAR
FROM base AS build

RUN ./mvnw package

# Stage "production": JRE-only image that runs the packaged JAR
FROM eclipse-temurin:17-jre-jammy AS production

EXPOSE 8080

COPY --from=build /app/target/spring-petclinic-*.jar /spring-petclinic.jar

CMD ["java", "-Djava.security.egd=file:/dev/./urandom", "-jar", "/spring-petclinic.jar"]

The first image eclipse-temurin:17-jdk-jammy is labeled base. This helps us refer to this build stage in other build stages. Next, we’ve added a new stage labeled development. We’ll leverage this stage while writing Docker Compose later on.
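The development stage’s CMD passes -agentlib:jdwp=transport=dt_socket,server=y,suspend=n,address=*:8000 to the JVM that spring-boot:run starts. This lets a debugger attach on port 8000. As a hypothetical sanity check, code running inside the app can look for that agent among the JVM’s input arguments through the standard RuntimeMXBean.

The helper below keeps the parsing separate so it can be exercised on any argument list. Its class and method names are illustrative only.

```java
import java.lang.management.ManagementFactory;
import java.util.List;
import java.util.Optional;

/** Hypothetical helper: reports the JDWP debug address the JVM was started with, if any. */
final class DebugAgentCheck {

    private static final String JDWP_PREFIX = "-agentlib:jdwp=";

    /** Finds the "address=" option of a -agentlib:jdwp argument, e.g. "*:8000". */
    static Optional<String> jdwpAddress(List<String> jvmArgs) {
        for (String arg : jvmArgs) {
            if (!arg.startsWith(JDWP_PREFIX)) {
                continue;
            }
            for (String option : arg.substring(JDWP_PREFIX.length()).split(",")) {
                if (option.startsWith("address=")) {
                    return Optional.of(option.substring("address=".length()));
                }
            }
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        // JVM flags of the current process, such as -agentlib:..., -D..., -Xmx...
        List<String> jvmArgs = ManagementFactory.getRuntimeMXBean().getInputArguments();
        System.out.println("JDWP address: " + jdwpAddress(jvmArgs).orElse("not enabled"));
    }
}
```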

Notice that when you run docker build without --target, Docker builds the last stage, production, and that is the image you ship. The earlier stages carry the full Eclipse Temurin JDK, the Maven wrapper, and the source code. The production stage starts from the smaller eclipse-temurin:17-jre-jammy image and copies in only the packaged JAR from the build stage, so the rest of the build stage’s target directory never reaches the final image. Our final image is just 318 MB, compared to the first build’s 567 MB.

Now, let’s rebuild our image, this time for the development stage. We’ll run the same docker build command as above, adding the --target development flag so that Docker builds the development stage specifically.

docker build -t petclinic-app --target development .

docker images
REPOSITORY      TAG      IMAGE ID       CREATED             SIZE
petclinic-app   latest   05a13ed412e0   About an hour ago   313MB

Using Docker Compose to develop locally

In this section, we’ll create a Docker Compose file to start our PetClinic and the MySQL database server with a single command.

Here’s how you define your services in a Docker Compose file:

services:
  petclinic:
    build:
      context: .
      dockerfile: Dockerfile
      target: development
    ports:
      - 8000:8000
      - 8080:8080
    environment:
      - SERVER_PORT=8080
      - MYSQL_URL=jdbc:mysql://mysqlserver/petclinic
    volumes:
      - ./:/app
    depends_on:
      - mysqlserver
  mysqlserver:
    image: mysql/mysql-server:8.0
    ports:
      - 3306:3306
    environment:
      - MYSQL_ROOT_PASSWORD=
      - MYSQL_ALLOW_EMPTY_PASSWORD=true
      - MYSQL_USER=petclinic
      - MYSQL_PASSWORD=petclinic
      - MYSQL_DATABASE=petclinic
    volumes:
      - mysql_data:/var/lib/mysql
      - mysql_config:/etc/mysql/conf.d
volumes:
  mysql_data:
  mysql_config:
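The petclinic service passes the database location to the app through the MYSQL_URL environment variable. In the Spring PetClinic project, the mysql profile reads its datasource URL from a placeholder along the lines of ${MYSQL_URL:jdbc:mysql://localhost/petclinic}. Treat that exact property as an assumption and check application-mysql.properties in your checkout.

The hypothetical helper below mimics how such a placeholder resolves: it uses the variable when it is set and otherwise falls back to the default.

```java
import java.util.Map;

/** Hypothetical helper mirroring a "${NAME:default}" placeholder: environment first, then default. */
final class DatasourceConfig {

    static final String DEFAULT_URL = "jdbc:mysql://localhost/petclinic";

    static String resolve(Map<String, String> env, String name, String fallback) {
        String value = env.get(name);
        return value != null ? value : fallback;   // a variable that is set always wins over the default
    }

    static String mysqlUrl(Map<String, String> env) {
        return resolve(env, "MYSQL_URL", DEFAULT_URL);
    }

    public static void main(String[] args) {
        // Inside the petclinic container, Compose sets MYSQL_URL=jdbc:mysql://mysqlserver/petclinic.
        System.out.println(mysqlUrl(System.getenv()));
    }
}
```

Outside Compose, for example when running the JAR directly on your machine, the same code falls back to localhost.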

You can clone the repository or download the YAML file directly.

This Compose file is convenient: instead of passing every parameter to the docker run command by hand, we declare them once in the Compose file.

Another benefit of using a Compose file is that Compose places both services on a shared network where each service name resolves through DNS. As a result, we can use mysqlserver as the hostname in our connection string, because that’s the name we gave the MySQL service in the Compose file.
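From the application’s point of view, nothing special happens. The JDBC driver connects to whatever host the URL names, and Docker’s embedded DNS on the Compose network resolves mysqlserver to the MySQL container. The hypothetical helper below extracts the host and port from such a URL, defaulting to MySQL’s standard port 3306, which can be handy for logging or a connectivity check.

```java
import java.net.URI;

/** Hypothetical helper: extracts host and port from a MySQL JDBC URL. */
final class JdbcTarget {

    record HostPort(String host, int port) {}

    static HostPort parse(String jdbcUrl) {
        if (!jdbcUrl.startsWith("jdbc:")) {
            throw new IllegalArgumentException("Not a JDBC URL: " + jdbcUrl);
        }
        // Drop the "jdbc:" prefix so java.net.URI can parse "mysql://host[:port]/database".
        URI uri = URI.create(jdbcUrl.substring("jdbc:".length()));
        int port = uri.getPort() == -1 ? 3306 : uri.getPort();   // 3306 is MySQL's default port
        return new HostPort(uri.getHost(), port);
    }
}
```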

Now, let’s start our application and confirm that it’s running properly:

docker compose up -d --build

We pass the --build flag so Docker builds our image before starting our containers, and the -d flag runs them in the background.

Next, let’s test our API endpoint. Run the following curl command:

$ curl --request GET \
  --url http://localhost:8080/vets \
  --header 'content-type: application/json'

You should receive the following response:

{
  "vetList": [
    {
      "id": 1,
      "firstName": "James",
      "lastName": "Carter",
      "specialties": [],
      "nrOfSpecialties": 0,
      "new": false
    },
    {
      "id": 2,
      "firstName": "Helen",
      "lastName": "Leary",
      "specialties": [
        {
          "id": 1,
          "name": "radiology",
          "new": false
        }
      ],
      "nrOfSpecialties": 1,
      "new": false
    },
    {
      "id": 3,
      "firstName": "Linda",
      "lastName": "Douglas",
      "specialties": [
        {
          "id": 3,
          "name": "dentistry",
          "new": false
        },
        {
          "id": 2,
          "name": "surgery",
          "new": false
        }
      ],
      "nrOfSpecialties": 2,
      "new": false
    },
    {
      "id": 4,
      "firstName": "Rafael",
      "lastName": "Ortega",
      "specialties": [
        {
          "id": 2,
          "name": "surgery",
          "new": false
        }
      ],
      "nrOfSpecialties": 1,
      "new": false
    },
    {
      "id": 5,
      "firstName": "Henry",
      "lastName": "Stevens",
      "specialties": [
        {
          "id": 1,
          "name": "radiology",
          "new": false
        }
      ],
      "nrOfSpecialties": 1,
      "new": false
    },
    {
      "id": 6,
      "firstName": "Sharon",
      "lastName": "Jenkins",
      "specialties": [],
      "nrOfSpecialties": 0,
      "new": false
    }
  ]
}
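If you’d rather exercise the endpoint from Java than from curl, the JDK’s built-in java.net.http.HttpClient (Java 11 and later) can send the same request. This is a sketch with a hypothetical class name. It assumes the app is reachable at http://localhost:8080 as in the curl example, and it simply returns the raw JSON body.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

/** Hypothetical Java equivalent of the curl GET request against /vets. */
final class VetsClient {

    static String fetchVets(String baseUrl) {
        HttpClient client = HttpClient.newBuilder()
                .version(HttpClient.Version.HTTP_1_1)          // plain HTTP/1.1, like curl's default for http://
                .build();
        HttpRequest request = HttpRequest.newBuilder(URI.create(baseUrl + "/vets"))
                .header("Content-Type", "application/json")    // same header the curl example sends
                .GET()
                .build();
        try {
            HttpResponse<String> response = client.send(request, HttpResponse.BodyHandlers.ofString());
            if (response.statusCode() != 200) {
                throw new IllegalStateException("Unexpected status " + response.statusCode());
            }
            return response.body();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();                // preserve the interrupt for callers
            throw new IllegalStateException("Interrupted while calling " + baseUrl, e);
        }
    }

    public static void main(String[] args) {
        System.out.println(fetchVets("http://localhost:8080"));
    }
}
```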

Conclusion

Congratulations! You’ve learned how to containerize the PetClinic application using Docker. A multi-stage build keeps your final Docker image small by leaving the JDK, Maven, and source code behind in earlier stages. Using a single YAML file, we demonstrated how Docker Compose helps you build and deploy your PetClinic app in seconds. With just a few extra steps, you can apply this tutorial to applications of much greater complexity.

Happy coding.


Source: https://blog.docker.com/feed/