Topping Stack Overflow’s 2022 list of most popular web frameworks and technologies, Node.js continues to grow as a critical MERN stack component. And since Node applications are written in JavaScript — the world’s leading programming language — many developers will feel right at home using it. We introduced the Node Docker Official Image (DOI) due to Node.js’ popularity and to solve some common development challenges.

The Node.js Foundation describes Node as “an open-source, cross-platform JavaScript runtime environment.” Developers use it to create performant, scalable server and networking applications. Despite Node’s advantages, building and deploying cross-platform services can be challenging with traditional workflows.

By contrast, the Node Docker Official Image accelerates and simplifies your development processes while allowing additional configuration. You can deploy containerized Node applications in minutes. Throughout this guide, we’ll discuss the Node Official Image, how to use it, and some valuable best practices.

In this tutorial:

- What is the Node Docker Official Image?
- Node.js use cases
- About Docker Official Images
- How to run Node in Docker
- Enter a quick pull command
- Confirm that Node is functional
- Create your Node image from a Dockerfile
- Optimize your Node image
- Using Docker Compose
- Running a simple Node script
- Docker Node best practices
- Get started with Node today

What is the Node Docker Official Image?

The Node Docker Official Image contains all source code, core dependencies, tools, and libraries your application needs to work correctly.

This image supports multiple CPU architectures like amd64, arm32v6, arm32v7, arm64v8, ppc64le, and s390x. You can also choose between multiple tags (or image versions) for any project. Choosing a pinned version like node:19.0.0-slim locks you into a stable, streamlined version of Node.js.

Node.js use cases

Node.js lets developers write server-side code in JavaScript. The runtime environment then transforms this JavaScript into hardware-friendly machine code. As a result, the CPU can process these low-level instructions.

Node is event-driven (through user actions), non-blocking, and known for being lightweight while simultaneously handling numerous operations. As a result, you can use the Node DOI to create the following:

- Web server applications
- Networking applications

Node works well here because it supports HTTP requests and socket connections. An asynchronous I/O library lets Node containers read and write various system files that support applications.

You could use the Node DOI to build streaming apps, single-page applications, chat apps, to-do list apps, and microservices. Or — if you’re like Community All-Hands’ Kathleen Juell — you could use Node.js to help serve static content. Containerized Node will shine in any scenario dictated by numerous client-server requests.

Docker Captain Bret Fisher also offered his thoughts on Dockerized Node.js during DockerCon 2022. He discussed best practices for managing Node.js projects while diving into optimization.

Lastly, we also maintain some Node sample applications within our GitHub Awesome Compose library. You can learn to use Node with different databases or even incorporate an NGINX proxy.

About Docker Official Images

We’ve curated the Node Docker Official Image as one of many core container images on Docker Hub. The Node.js community maintains this image alongside members of the Docker community.

Like other Docker Official Images, the Node DOI offers a common starting point for Node and JavaScript developers. We also maintain an evolving list of Node best practices while regularly pushing critical security updates. This distinguishes Docker Official Images from alternatives on Docker Hub.

How to run Node in Docker

Before getting started, download the latest Docker Desktop release and install it. Docker Desktop includes the Docker CLI, Docker Compose, and additional core development tools. The Docker Dashboard (Docker Desktop’s UI component) will help you manage images and containers.

You’re then ready to Dockerize Node!

Enter a quick pull command

Pulling the Node DOI is the quickest way to begin. Enter docker pull node in your terminal to grab the default latest Node version from Docker Hub. You can readily use this tag for testing or local development. However, a pinned version might be safer for production use. Here’s how the pull process works:

Your CLI will display a status message once it’s done. You can also double-check this within Docker Desktop! Click the Images tab on the left sidebar and scan through your listed images. Docker Desktop will display your node image:

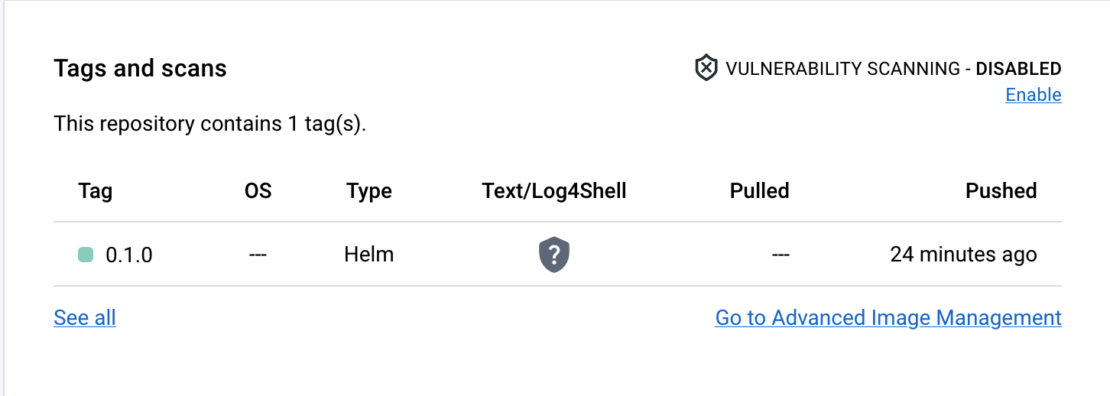

Your node:latest image is a hefty 942.33 MB. If you inspect your Node image’s contents using docker sbom node, you’ll see that it currently includes 623 packages. The Node image contains numerous dependencies and modules that support Node and various applications.

However, your final Node image can be much slimmer! We’ll tackle optimization while discussing Dockerfiles. After all, the Node DOI has 24 supported tags spread amongst four major Node versions. Each has its own impact on image size.

Confirm that Node is functional

Want to run your new image as a container? Hover over your listed node image and click the blue “Run” button. In this state, your Node container will produce some minimal log entries and run continuously in case requests come through.

Exit this container before moving on by clicking the square “stop” button in Docker Desktop or by entering docker stop YourContainerName in the CLI.

Create your Node image from a Dockerfile

Building from a Dockerfile gives you ultimate control over image composition, configuration, and your overall application. However, Node requires very little to function properly. Here’s a barebones Dockerfile to get you up and running (using a pinned, Debian-based image version):

FROM node:19-bullseye

Docker will build your image from your chosen Node version.

It’s safest to use node:19-bullseye because this image supports numerous use cases. This version is also stable and prevents you from pulling in new breaking changes, which sometimes happens with latest tags.

To build your image from a Dockerfile, run the docker build -t my-nodejs-app . command. You can then run your new image by entering docker run -it --rm --name my-running-app my-nodejs-app.
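The barebones Dockerfile above only selects a base image. As a rough sketch of what a fuller file might look like, the example below assumes a hypothetical project with a package.json, a package-lock.json, and an entry point named server.js that listens on port 8888. None of these names come from a real project, so adjust them to match yours.

```dockerfile
# Sketch only: assumes package.json, package-lock.json, and a hypothetical
# entry point named server.js sit next to this Dockerfile.
FROM node:19-bullseye

# Work inside the node user's home directory instead of the filesystem root.
WORKDIR /home/node/app

# Copy the manifests first so the dependency layer stays cached
# until package.json or package-lock.json changes.
COPY --chown=node:node package*.json ./
RUN npm ci

# Copy the rest of the application source.
COPY --chown=node:node . .

# Drop root privileges; the official image ships with a "node" user.
USER node

# Documents the port the hypothetical server listens on.
EXPOSE 8888

# Start Node directly rather than through npm.
CMD ["node", "server.js"]
```

With a file like this in your project root, the docker build and docker run commands shown above should work unchanged. Adding -p 8888:8888 to docker run publishes the port to your host.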

Optimize your Node image

The complete version of Node often includes extra packages that weigh your application down. This leaves plenty of room for optimization.

For example, removing unneeded development dependencies reduces image bloat. You can do this by adding a RUN instruction to our previous file:

FROM node:19-bullseye

RUN npm prune --production

This approach is pretty granular. It also relies on you knowing exactly what you do and don’t need for your project. Alternatively, switching to a slim image build offers the quickest results. You’ll encounter similar caveats but spend less time writing individual Dockerfile instructions. The easiest approach is to replace node:19-bullseye with its node:19-bullseye-slim counterpart. This alone shrinks image size by 75%.
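To make both options concrete, here is one possible sketch that starts from the slim tag and removes development dependencies. It assumes a hypothetical project layout (package.json, package-lock.json, and a server.js entry point). The prune runs in the same RUN instruction as the install because image layers are additive: files deleted by a later instruction still take up space in the earlier layer.

```dockerfile
# Sketch only: server.js and the project layout are hypothetical.
FROM node:19-bullseye-slim

WORKDIR /home/node/app

COPY --chown=node:node package*.json ./

# Install and prune in a single layer so development dependencies
# never persist in the final image.
RUN npm ci && npm prune --production

COPY --chown=node:node . .

USER node

CMD ["node", "server.js"]
```

Recent npm releases also accept npm ci --omit=dev, which skips development dependencies at install time rather than pruning them afterward.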

You can even pull node:19-alpine to save more disk space. However, this tag contains even fewer dependencies and isn’t officially supported by the Node.js Foundation. Keep this in mind while developing.

Finally, multi-stage builds lead to smaller image sizes. These let you copy only what you need between build stages to combat bloat.
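A multi-stage build might look something like the sketch below. It assumes a hypothetical project whose package.json defines a build script that writes compiled output to a dist directory, with dist/server.js as the entry point. Those names are illustrative assumptions rather than anything the Node image requires.

```dockerfile
# Stage 1: install all dependencies and run the (hypothetical) build script.
FROM node:19-bullseye AS build
WORKDIR /app
COPY package*.json ./
RUN npm ci
COPY . .
RUN npm run build

# Stage 2: start from the slim image and copy in only what runs in production.
FROM node:19-bullseye-slim
ENV NODE_ENV=production
WORKDIR /home/node/app

# Install runtime dependencies only; build tooling stays behind in stage 1.
COPY --chown=node:node package*.json ./
RUN npm ci --omit=dev

# Copy the compiled output from the build stage (assumed to live in /app/dist).
COPY --chown=node:node --from=build /app/dist ./dist

USER node
CMD ["node", "dist/server.js"]
```

Only the final stage ends up in the tagged image, so compilers, development dependencies, and source files used in the first stage don't add to its size.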

Using Docker Compose

Say you have a start script, an existing package.json file, and (possibly) want to operate Node alongside other services. Spinning up Node containers with Docker Compose can be pretty handy in these situations.

Here’s a sample docker-compose.yml file:

services:
  node:
    image: "node:19-bullseye"
    user: "node"
    working_dir: /home/node/app
    environment:
      - NODE_ENV=production
    volumes:
      - ./:/home/node/app
    ports:
      - "8888:8888"
    command: "npm start"

You’ll see some parameters that we didn’t specify earlier in our Dockerfile. For example, the user parameter lets you run your container as an unprivileged user. This follows the principle of least privilege.

To jumpstart your Node container, simply enter the docker compose up -d command. Like before, you can verify that Node is running within Docker Desktop. The docker container ls --all command also displays all existing containers within the CLI.

Running a simple Node script

Your project doesn’t always need a Dockerfile. In these cases, you can directly leverage the Node DOI with the following command:

docker run -it --rm --name my-running-script -v "$PWD":/usr/src/app -w /usr/src/app node:19-bullseye node your-daemon-or-script.js

This simple approach is ideal for single-file projects.

Docker Node best practices

It’s important to get the most out of Docker and the Node Official Image. We’ve briefly outlined the benefits of running as a non-root node user, but here are some useful tips for developing with Node:

- Set NODE_ENV to production at runtime, as seen here: -e "NODE_ENV=production". The same -e flag lets you pass secrets and other runtime configurations to your application.
- Place any installed, global Node dependencies into a non-root user directory.
- Remember to manually install curl if using an alpine image tag, since it’s not included by default.
- Wrap your Node process in an init system with the --init flag. The init process then handles PID 1 duties, which Node.js wasn’t designed for.
- Set memory limitations for your containers that run on the same host.
- Put the command from your package.json start script directly in your Dockerfile’s CMD instruction instead of calling npm start. This reduces active container processes and lets Node properly receive exit signals.

This isn’t an exhaustive list. To view more details, check out our best practices documentation.
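Several of these tips can live in the Dockerfile itself. The following is a minimal sketch rather than a definitive setup. It assumes a hypothetical server.js entry point, and the .npm-global directory name is just one reasonable choice for a user-owned location.

```dockerfile
# Sketch only: server.js and the directory names are hypothetical.
FROM node:19-bullseye-slim

# Run in production mode by default; docker run -e can still override this.
ENV NODE_ENV=production

# Keep globally installed packages in a directory owned by the node user.
ENV NPM_CONFIG_PREFIX=/home/node/.npm-global
ENV PATH=$PATH:/home/node/.npm-global/bin

# Switch to the unprivileged user before creating or installing anything.
USER node
RUN mkdir -p /home/node/app
WORKDIR /home/node/app

COPY --chown=node:node package*.json ./
RUN npm ci --omit=dev

COPY --chown=node:node . .

# Invoke Node directly so exit signals reach it without passing through npm.
CMD ["node", "server.js"]
```

The init system and memory limits are applied when the container starts rather than in the Dockerfile, for example with docker run --init --memory 300m my-nodejs-app. The 300m value is only a placeholder.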

Get started with Node today

As you’ve seen, spinning up a Node container from the Node Docker Official Image is quick and requires just a few steps depending on your workflow. You’ll no longer need to worry about platform-specific builds or get bogged down with complex development processes.

We’ve also covered many ways to help your Node builds perform better. Check out our top containerization tips article to learn even more about optimization and security.

Ready to get started? Swing by Docker Hub and pull our Node image to start experimenting. In no time, you’ll have your server and networking applications up and running. You can also learn more on our GitHub README page.

Source: https://blog.docker.com/feed/