Our journey has been remarkable. Recently, Docker shifted focus from Docker Swarm, which specializes in container orchestration, to the “inner loop,” the foundation of the Software Development Life Cycle (SDLC). Today, in the early planning, coding, and building stages, we are setting the stage for container development success, ensuring development teams can rapidly and consistently build innovative containerized applications with confidence.

At Docker, we’re dedicated to optimizing the “inner loop” to ensure your journey from development to deployment and in-production software management is flawless. Whether you’re developing locally or in the cloud, our commitment to delivering all this with top-tier performance and security remains unwavering.

In this post, I’ll highlight our focus on performance and walk you through the milestones of the past year. We’re thrilled about the momentum we’re building in providing you with a robust, performant, agile, and secure container application development platform.

These achievements are more than just numbers; they demonstrate the positive impact and return on investment we deliver to you. Our work continues, but let’s explore these improvements and what they mean for you — the driving force behind innovation.

Improving startup performance by up to 75%

In 2022, we embarked on a journey that transformed how macOS users experience Docker. At that time, experimental virtualization was the norm, resulting in startup times that tested your patience, often exceeding 30 seconds. We knew we had to improve this, so we made several adjustments to reduce startup time significantly, such as adding support for the Mac virtualization framework and optimizing the Docker Desktop Linux VM boot sequence.

Now, when you fire up Docker Desktop 4.23, brace yourself for a lightning-fast launch, taking a mere 3.481 seconds. That’s right, a startup time that’s not just improved but slashed by 75% (Figure 1).

Figure 1: Startup time improvements across dev environments from Docker Desktop 4.12 to 4.23.

Mac users aren’t the only ones celebrating. Windows Hyper-V and Windows WSL2 users have reason to cheer, too. Startup times have decreased from 20.257 seconds (with 4.12) to just 10.799 seconds (with 4.23). That 47% performance boost provides a smoother and more efficient development experience.
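For readers who want to check the arithmetic, here is a minimal Python sketch that reproduces the Windows figure from the two startup times quoted above. The function and variable names are illustrative only and are not part of any Docker tooling.

```python
def percent_reduction(before: float, after: float) -> float:
    """Return how much smaller `after` is than `before`, as a percentage."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100.0


# Windows startup times quoted in the post, in seconds.
windows_4_12 = 20.257
windows_4_23 = 10.799

reduction = percent_reduction(windows_4_12, windows_4_23)
print(f"Startup time reduced by {reduction:.1f}%")
```

The result rounds to the 47% quoted in the text.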

And the startup performance journey continues. We want to achieve sub-three-second startup times for all supported development environments. We’re looking forward to delivering this additional advancement soon, and we anticipate our startup performance to continue improving with each release.

Accelerating network performance by 85x

Downloading and uploading container images can be time-consuming. On Mac, with Docker Desktop 4.23, we’ve accelerated the process, delivering speeds over 30GB/s (bytes/sec), ensuring swift development workflows. We did this by entirely replacing the Docker Desktop network stack with a newer, modernized version that is much more efficient. This change resulted in an 85x improvement in upload speed compared to previous versions (4.12) (Figure 2). Think of it as upgrading from a horse-drawn carriage to a bullet train. Your data can now move seamlessly without delays.

Figure 2: Host-to-container use case occurs when a service hosted inside the container is accessed from outside the VM (for example, when a web developer accesses a website they are working on using a browser). Container-to-host use case occurs when the container accesses a service provided from the host (for example, when a package is installed as part of a build using internet access).

On Windows, downloading an image has never been faster. Docker Desktop 4.23 now achieves a speed of 1.1Gbits/s, enhancing developer efficiency. This achievement represents a 650% improvement compared to the previous version (4.12).

For real-time downloading speed, such as you would expect for video games and movies, Docker Desktop 4.23 on macOS offers UDP streaming improvements, soaring to 4.75GB/s (bytes/sec), a 5,800% increase in streaming speed compared to the previous version (4.12). These numbers translate to a faster, smoother digital experience, helping to keep your digital world at the speed of your ideas.
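This section mixes multipliers (85x) with percentages (650%, 5,800%), so it can help to put them on one scale. The sketch below is a rough conversion that assumes an "N% improvement" means the new speed is the old speed plus N% of it. That reading is an assumption, not something the benchmark figures state, and the function name is hypothetical.

```python
def percent_increase_to_multiplier(percent: float) -> float:
    """Convert an 'N% increase' into an 'x times the baseline' multiplier.

    Assumes new = old * (1 + N / 100), which is one common reading
    of 'N% improvement' and may not match how the figures were derived.
    """
    return 1.0 + percent / 100.0


# Percentages quoted in this section.
windows_download = percent_increase_to_multiplier(650)    # Windows image download
mac_udp_streaming = percent_increase_to_multiplier(5800)  # macOS UDP streaming

print(f"Windows download: about {windows_download:.1f}x the 4.12 speed")
print(f"macOS UDP streaming: about {mac_udp_streaming:.0f}x the 4.12 speed")
```

Under that assumption, the Windows download figure corresponds to roughly 7.5x and the macOS UDP figure to roughly 59x.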

Optimizing host file sharing performance by more than 2x

File sharing may not always be in the spotlight. Still, it’s an unsung hero of modern development that can make or break your development experience, and we’ve even made improvements here.

Imagine this scenario: Not too long ago, working with Docker Desktop 4.11 on your trusty Mac host, building Redis from within a container (where your Redis source code resided on your local host) was a patience-testing ordeal. It demanded 7 minutes and 25 seconds of your valuable time, primarily because the container’s access to the host files introduced frustrating delays.

Today, with Docker Desktop 4.23, we’ve revolutionized the game. Thanks to groundbreaking improvements in virtiofs, that same Redis build now takes only 2 minutes and 6 seconds. That’s an impressive 71% reduction in build time.
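To relate the Redis build times to the "more than 2x" headline, here is a small Python sketch that converts both durations to seconds and derives the speedup factor and the percentage reduction. The helper name is illustrative, and the only inputs are the two times quoted above.

```python
def to_seconds(minutes: int, seconds: int) -> int:
    """Convert a minutes-and-seconds duration to whole seconds."""
    return minutes * 60 + seconds


# Redis build times quoted in the post.
build_4_11 = to_seconds(7, 25)  # Docker Desktop 4.11
build_4_23 = to_seconds(2, 6)   # Docker Desktop 4.23 with virtiofs

speedup = build_4_11 / build_4_23
reduction = (build_4_11 - build_4_23) / build_4_11 * 100.0

print(f"Build is {speedup:.2f}x faster")
print(f"Build time reduced by {reduction:.1f}%")
```

The reduction works out to a little under 72%, in line with the 71% quoted, and the speedup is comfortably above 2x.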

On macOS 12.5 and later, virtiofs is now the default file-sharing implementation in Docker Desktop, delivering substantial performance gains when sharing files with containers (Figure 3). You can read more about this in “Docker Desktop 4.23: Updates to Docker Init, New Configuration Integrity Check, Quick Search Improvements, Performance Enhancements, and More.”

Figure 3: Docker Desktop 4.11 compared to 4.22 with virtiofs enabled.

But wait, there’s more to come. Expect even more progress in the file-sharing arena soon. We continue working toward seamless collaboration and faster development cycles because we know that every minute saved is a minute gained for innovation.

Increasing efficiency and reducing idle memory usage by 10x

Let’s talk about efficiency and a little touch of green innovation.

In Docker Desktop 4.22, we introduced the Resource Saver mode, which is like having your development environment on standby, ready to jump into action when needed and conserving resources when it’s not. Resource Saver mode works on Mac, Windows, and Linux. It massively reduces Docker Desktop’s memory and CPU footprint when Docker Desktop is idle (i.e., not running containers for a configurable period of time), cutting memory utilization on host machines by 2GB and allowing developers to multitask uninterrupted (Figure 4).

Figure 4: Idle memory usage improvements since Docker Desktop 4.20.

Besides improving developer multitasking, what else is so remarkable about this feature? Well, let me paint the picture. We’re saving 38,500 CPU hours daily across all our Docker Desktop users. To put that in perspective, that’s enough to power 1,000 American homes for an entire month.

We have also made significant improvements while Docker Desktop is active (i.e., running containers), resulting in a 52.85% reduction in footprint. These improvements make Docker Desktop lighter and free up resources on your machine to leverage other tools and applications efficiently (Figure 5).

Figure 5: Docker Desktop active memory usage improvements since 4.20.

This means we’re not just optimizing your development workflow but doing so efficiently, reducing energy costs, and positively impacting the environment — an area we will continue to invest in. The reduced footprint is one small way of giving back while helping you build the future — a win-win.

Streamlining the build process, delivering up to a 40% compression improvement

Imagine your containers are digital backpacks; the heavier the bag, the harder to carry it around while you work. We’ve introduced support for Zstandard (zstd) compression of Docker container images in Docker Desktop 4.19 to lighten the load, reducing container image sizes with remarkable results.

Look at the data for a debian:12.1 container image in Figure 6. Zstandard delivers a ~40% improvement in compression compared to the traditional gzip method. And for the Docker Engine:24.0 image, we are achieving a ~20% enhancement.

Figure 6: Data for a debian:12.1 container image and Docker Engine 24.0 with improved compression.

In practical terms, your container images become leaner and faster to transfer, allowing you to work more swiftly and effectively. With Docker Desktop, it’s like fitting your backpack with a magical compression spell, making every byte count. Your containers are lighter, your image pulls and pushes are faster, and your development is smoother — optimizing your journey, one compression at a time.

Enterprise-level security (and peace of mind)

When we talk about speed and performance, there’s a crucial aspect we mustn’t overlook: security. At Docker, we understand that speed without security is like a ship without a compass — it may move fast but won’t stay on course.

While we’ve been investing heavily in accelerating your development journey, we haven’t lost sight of our commitment to enterprise-level security and governance. In fact, it’s quite the opposite. Our goal is to create a seamless union between velocity and vigilance.

Here’s how we do it:

Unprivileged users: Unlike the native Docker Engine on Linux, unprivileged users can run Docker Desktop. This is because Docker Desktop runs Docker Engine inside a Linux VM, isolated from the underlying host machine.

Enhanced Container Isolation: ECI runs containers in rootless mode by default, vets sensitive system calls in containers, and restricts sensitive mounts, thereby adding an extra layer of isolation between containers and the host. It does this without changing developer workflows, so you can continue using Docker as usual with an extra layer of peace of mind.

Settings management: With settings management, IT admins can manipulate security settings in Docker Desktop per organization security policies to better secure developer environments.

Robust security model: Our security model is designed for safety and optimal performance. The two should go hand in hand. So, while protecting your environment, we ensure it runs efficiently.

Continuous security audits: Our commitment to security goes beyond features and tools. We are dedicated to safeguarding the platform, user community, and customers from various modern-day threats. We invest in regular security audits to scrutinize every nook and cranny of our applications and services. Vulnerabilities are swiftly identified and mitigated.

We aim to provide you with a holistic platform, an enterprise-grade offering that seamlessly integrates performance and security. In this fast-paced world, the perfect blend of speed and security truly empowers innovation. At Docker, we’re here to ensure you have both every step of the way.

Continuing our journey

At Docker, our unwavering commitment to performance and innovation is crystal clear. The achievements showcased here are just the beginning. So, as you embark on your development endeavors, know that we’re right there with you, making the seconds count and ensuring your confidence and ability to focus energy on what truly matters — creating and innovating. Together, we’re rewriting the story of development across the SDLC, one build, container, and application at a time.

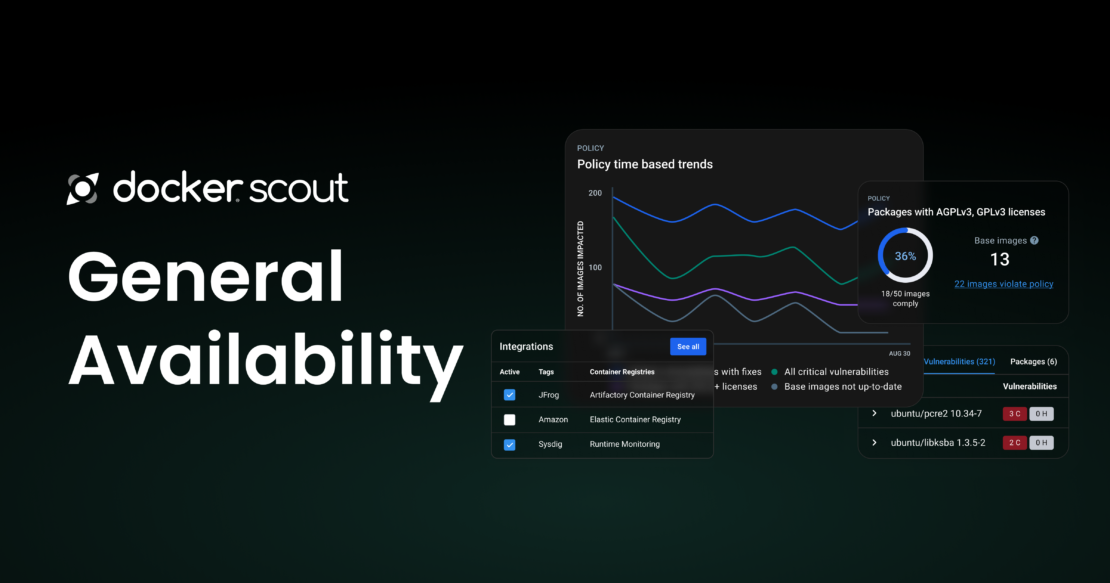

I hope you will join us at DockerCon 2023, in person or virtually, to explore what we have planned for Docker Desktop, Docker Hub, and Docker Scout. Upgrade to the latest Docker Desktop version and check out our Docker 101 webinar: What Docker can do for your business.

Learn more

Register for DockerCon.

Register for DockerCon workshops.

See the DockerCon program.

Get the latest release of Docker Desktop.

Vote on what’s next! Check out our public roadmap.

Have questions? The Docker community is here to help.

New to Docker? Get started.

Source: https://blog.docker.com/feed/