Executive summary

The 2025 Docker State of Application Development Report offers a high-resolution view of today’s fast-evolving dev landscape. Drawing insights from over 4,500 developers, engineers, and tech leaders (three times more respondents than last year), the survey explores tools, workflows, pain points, and industry trends. In this, our third report, key themes emerge: AI is gaining ground but adoption remains uneven; security is now a shared responsibility across teams; and developers still face friction in the inner loop despite better tools and culture. With a broader, more diverse respondent base than our previous, more IT-focused surveys, this year’s report delivers a richer, more nuanced view of how modern software is built and how orgs operate.

2025 report key findings

IT is among the leaders in AI — with 76% of IT/SaaS pros using AI tools at work, compared with just 22% across industries. Overall, there’s a huge spread across industries — from 1% to 84%.

Security is no longer a siloed specialty — especially when vulnerabilities strike. Just 1 in 5 organizations outsource security, and it’s top of mind at most others: only 1% of respondents say security is not a concern at their organization.

Container usage soared to 92% in the IT industry — up from 80% in our 2024 survey. But adoption is lower across other industries, at 30%. IT’s greater reliance on microservice-based architectures — and the modularity and scalability that containers provide — could explain the disparity.

Non-local dev environments are now the norm — not the exception. In a major shift from last year, 64% of developers say they use non-local environments as their primary development setup, with local environments now accounting for only 36% of dev workflows.

Data quality is the bottleneck when it comes to building AI/ML-powered apps — and it affects everything downstream. 26% of AI builders say they’re not confident in how to prep the right datasets — or don’t trust the data they have.

Developer productivity, AI, and security are key themes

As in last year’s survey, our 2025 report drills down into three main areas:

What’s helping devs thrive — and what’s still holding them back?

AI is changing software development — but not how you think

Security — it’s a team sport

1. What’s helping devs thrive — and what’s still holding them back?

Great culture, better tools — but developers often still hit sticking points. From pull requests held up in review to tasks without clear estimates, the inner loop remains cluttered with surprisingly persistent friction points.

How devs learn — and what’s changing

Self-guided learning is on the upswing. Across all industries, fully 85% of respondents turn to online courses or certifications, far outpacing traditional sources like school (33%), books (25%), or on-the-job training (25%).

Among IT folks, the picture is more nuanced. School is still the top venue for learning to code (65%, up from 57% in our 2024 survey), but online resources are also trending upward. Some 63% of IT pros learned coding skills via online resources (up from 54% in our 2024 survey) and 57% favored online courses or certifications (up from 45% in 2024).

How devs like to learn

As for how devs prefer to learn, reading documentation tops the list, as in last year’s report, despite the rise of new and interactive forms of learning. Some 29% say they lean on documentation, edging out videos and side projects (28% each) and slightly ahead of structured online training (26%).

AI tools play a relatively minor role in how respondents learn, with GitHub Copilot cited by just 13% overall — and only 9% among IT pros. It’s also cited by 13% as a preferred learning method.

Online resources

When learning to code via online resources, respondents overwhelmingly favored technical documentation (82%) ahead of written tutorials and how-to videos (66% each), and blogs (63%).

Favorite online courses or certifications included Coursera (28%), LinkedIn Learning (24%), and Pluralsight (23%).

Discovering new tools

When it comes to finding out about new tools, developers tend to trust the opinions and insights of other developers — as evidenced by the top four selected options. Across industries, the main ways are developer communities, social media, and blogs (tied at 23%), followed closely by friends/colleagues (22%).

Within the IT industry only, the findings mirror last year’s, though blogs have moved up from third place to first. The primary ways devs learn about new tools are blogs (54%), developer communities (52%), and social media (50%), followed by searching online (48%) and friends/colleagues (46%). Conferences still play a significant role, with 34% of IT folks selecting this response, versus 17% across industries.

Open source contributions

Unsurprisingly, IT industry workers are more active in the open source space:

48% contributed to open source in the past year, while 52% did not.

That’s a drop of 11 percentage points from 2024, when 59% reported contributing and 41% had not.

Falling open source contributions could be worth keeping an eye on — especially with growing developer reliance on AI coding copilots. Could AI be chipping away at the need for open source code itself? Future studies could reveal whether this is a blip or a trend.

Across industries, just 13% made open source contributions, while 87% did not. But the desire to contribute is widespread — spanning industries as diverse as energy and utilities (91%), media or digital and education (90% each), and IT and engineering or manufacturing (82% each).

Mirroring our 2024 study, the biggest barrier to contributing to open source is time — 24%, compared with 40% in last year’s IT-focused study. Other barriers include not knowing where to start (18%) and needing guidance from others on how to contribute (15%).

Employers are often supportive: 37% allow employees to contribute to open source, while just 29% do not.

Tech stack

This year, we dove deeper into the tech stack landscape to understand more about the application structures, languages, and frameworks devs are using — and how they have evolved since the previous survey.

Application structure

Asked about the structure of the applications they work on, respondents’ answers underscored the continued rise of microservices we saw in our 2024 report.

Thirty-five percent said they work on microservices-based applications — far more than those who work on monolithic or hybrid monolith/microservices (24% each), but still behind the 38% who work on client-server apps.

Non-local dev environments are now the norm — not the exception

The tides have officially turned. In 2025, 64% of developers say they use non-local environments as their primary development setup, up from just 36% last year. Local environments — laptops or desktops — now account for only 36% of dev workflows.

What’s driving the shift? A mix of flexible, cloud-based tooling:

Ephemeral or preview environments: 10% (↓ from 12% in 2024)

Personal remote dev environments or clusters: 22% (↑ from 11%)

Other remote dev tools (e.g., Codespaces, Gitpod, JetBrains Space): 12% (↑ from 8%)

Compared to 2024, adoption of persistent, personal cloud environments has doubled, while broader usage of remote dev tools is also on the rise.
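To make the comparison concrete, the short Python sketch below works out both the percentage-point change and the relative change for each category, using only the figures quoted above. The dictionary keys and the `change` helper are illustrative names, not part of the survey.

```python
# Share of developers per non-local environment type, in percent: (2024, 2025).
# The figures are the ones quoted above; the keys are illustrative labels.
shares = {
    "ephemeral_or_preview": (12, 10),
    "personal_remote": (11, 22),
    "other_remote_tools": (8, 12),
}


def change(before, after):
    """Return (percentage-point change, 2025 share as a multiple of the 2024 share)."""
    return after - before, after / before


for name, (y2024, y2025) in shares.items():
    points, ratio = change(y2024, y2025)
    print(f"{name}: {points:+d} points, {ratio:.2f}x the 2024 share")
```

A multiple of 2.00 for personal remote environments is what "doubled" refers to, while the move from 8% to 12% for other remote dev tools is a smaller, 4-point rise.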

Bottom line: As we’ve tracked since our first app dev report in 2022, the future of software development is remote, flexible, and increasingly cloud-native.

IDP adoption remains low — except at larger companies

Internal Developer Portals (IDPs) may be buzzy, but adoption is still in early days. Only 7% of developers say their team currently uses an IDP, while 93% do not. That said, usage climbs with company size. Among orgs with 5,000+ employees, IDP adoption jumps to 36%. IDPs aren’t mainstream yet, but at scale, they’re proving their value.

OS preferences hold steady — with Linux still on top

When it comes to operating systems for app development, Linux continues to lead the pack, used by 53% of developers — the same share as last year. macOS usage has ticked up slightly to 51% (from 50%), while Windows trails just behind at 47% (up from 46%).

The gap among platforms remains narrow, suggesting that today’s development workflows are increasingly OS-flexible — and often cross-platform.

Python surges past JavaScript in language popularity

Python is now the top language among developers, used by 64%, surpassing JavaScript at 57% and Java at 40%. That’s a big jump from 2024, when JavaScript held the lead.

Framework usage is more evenly spread: Spring Boot leads at 19%, with Angular, Express.js, and Flask close behind at 18% each.

Data store preferences are shifting

In 2025, MongoDB leads the pack at 21%, followed closely by MySQL/MariaDB and Amazon RDS (both at 20%). That’s a notable shift from 2024, when PostgreSQL (45%) topped the list.

Tool favorites hold

GitHub, VS Code, and JetBrains editors remain top development tools, as they did in our previous survey. And there’s little change across CI/CD, provisioning, and monitoring tools:

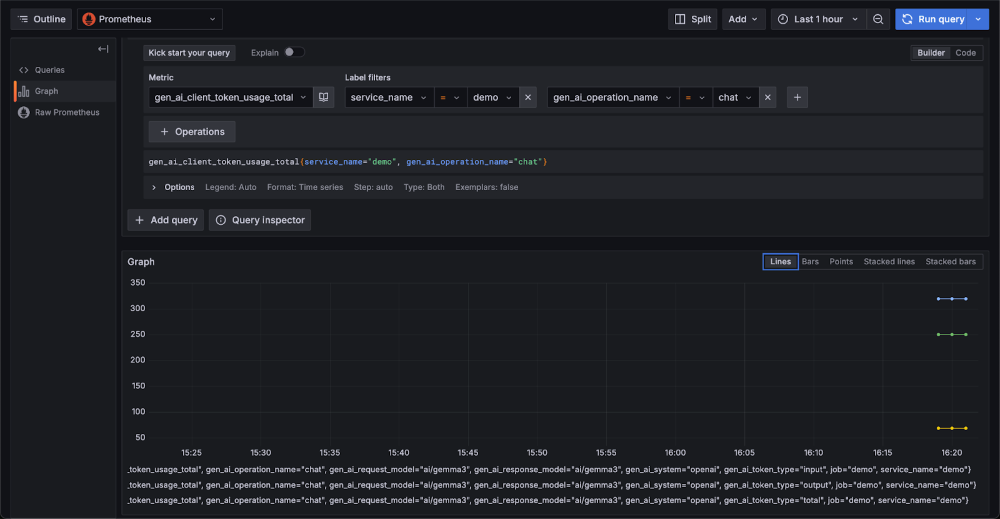

CI/CD: GitHub Actions (40%), GitLab (39%), Jenkins (36%)

Provisioning: Terraform (39%), Ansible (35%), GCP (32%)

Monitoring: Grafana (40%), Prometheus (38%), Elastic (34%)

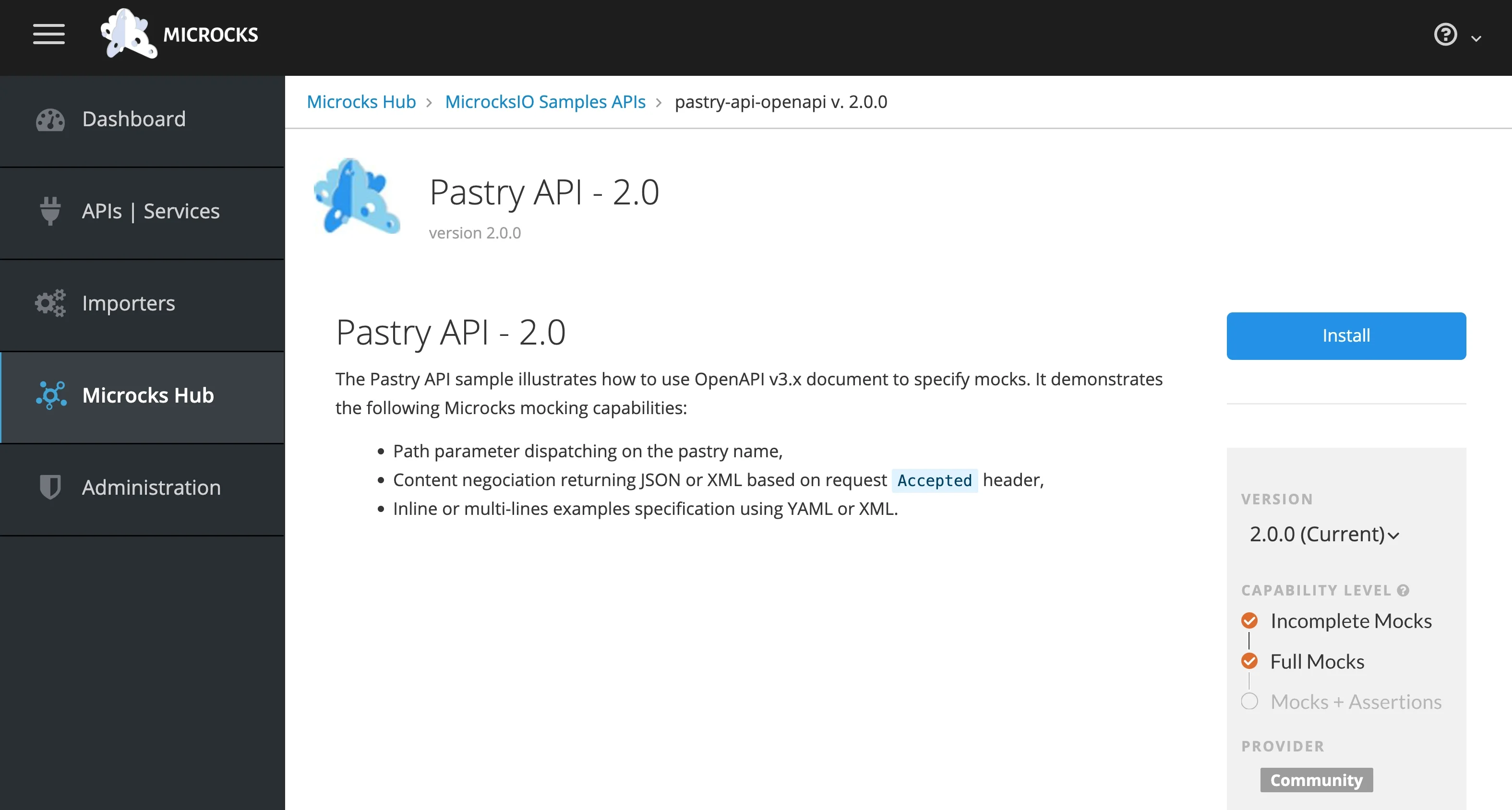

Containers: the great divide?

Among IT pros, container usage soared to 92% — up from 80% in our 2024 survey. Zoom out to a broader view across industries, however, and adoption appears considerably lower. Just 30% of developers say they use containers in any part of their workflow.

Why the gap? Differences in app structure may offer an explanation: IT industry respondents work with microservice-based architectures more often than those in other industries (68% versus 31%). So the higher container adoption may stem from IT pros’ need for modularity and scalability — which containers provide in spades.

And among container users, needs are evolving. They want better tools for time estimation (31%, compared with 23% of all respondents), task planning (18% for both container users and all respondents), and monitoring/logging (16%). Among all respondents, the number 3 spot goes instead to designing from scratch (18%). These are stubborn pain points across the software lifecycle.

An equal-opportunity headache: estimating time

No matter the role, estimating how long a task will take is the most consistent pain point across the board. Whether you’re a front-end developer (28%), data scientist (31%), or a software decision-maker (49%), precision in time planning remains elusive.

Other top roadblocks? Task planning (26%) and pull-request review (25%) are slowing teams down. Interestingly, where people say they need better tools doesn’t always match where they’re getting stuck. Case in point, testing solutions and Continuous Delivery (CD) come up often when devs talk about tooling gaps — even though they’re not always flagged as blockers.

Productivity by role: different hats, same struggles

When you break it down by role, some unique themes emerge:

Experienced developers struggle most with time estimation (42%).

Engineering managers face a three-way tie: planning, time estimation, and designing from scratch (28% each).

Data scientists are especially challenged by CD (21%) — a task not traditionally in their wheelhouse.

Front-end devs, surprisingly, list writing code (28%) as a challenge, closely followed by CI (26%).

Across roles, a common thread stands out: even seasoned professionals are grappling with foundational coordination tasks — not the “hard” tech itself, but the orchestration around it.

Tools vs. culture: two sides of the experience equation

On the tooling side, the biggest callouts for improvement across industries include:

Time estimation (23%)

Task planning (18%)

Designing solutions from scratch (18%)

Within the IT industry specifically, the top priority is the same — but even more prevalent:

Time estimation (31%)

Task planning (18%)

PR review (18%)

But productivity isn’t just about tools — it’s deeply cultural. When asked what’s working well, developers pointed to work-life balance (39%), location flexibility such as work from home policies (38%), and flexible hours (37%) as top cultural strengths.

The weak spots? Career development (38%), recognition (36%), and meaningful work (33%). In other words: developers like where, when, and how they work, but not always why.

What’s easy? What’s not?

While the dev world is full of moving parts, a few areas are surprisingly not challenging:

Editing config files (8%)

Debugging in dev (8%)

Writing config files (7%)

Contrast that with the most taxing areas:

Troubleshooting in production (9%)

Debugging in production (9%)

Security-related tasks (8%)

It’s a reminder that production is still where the stress — and the stakes — are highest.

2. AI is changing software development — but not how you think

Rumors of AI’s pervasiveness in software development have been greatly exaggerated. A look under the hood shows adoption is far from uniform.

AI in dev workflows: two very different camps

One of the clearest splits we saw in the data is how people use AI at work. There are two groups:

Developers using AI tools like ChatGPT, Copilot, and Gemini to help with everyday tasks — writing, documentation, and research.

Teams building AI/ML applications from the ground up.

IT is among the leaders in AI

Overall, only 22% of respondents said they use AI tools at work. But that number masks a huge spread across industries — from 1% to 84%. IT/SaaS folks sit near the top of the range at 76%.

IT’s leadership in AI is even more marked when you look at how many are building AI/ML into apps:

34% of IT/SaaS respondents say they do.

Only 8% of non-tech industries can say the same.

And the strategy gap is just as big. While 73% of tech companies say they have a clear AI strategy, only 16% of non-tech companies do. Translation: AI is gaining traction, but it’s still living mostly inside tech bubbles.

AI tools: overhyped and indispensable

Here’s the paradox: while 59% of respondents say AI tools are overhyped, 64% say they make their work easier.

Even more telling, 65% of users say they’re using AI more now than they did a year ago — and that they use it daily.

The hype is real. But for many devs, the utility is even more real.

These numbers track with our 2024 findings, too — where nearly two-thirds of devs said AI made their job easier, even as 45% weren’t fully sold on the buzz.

AI tool usage is up — and ChatGPT leads the pack

No big surprise here. ChatGPT is still the most-used AI tool by far. But the gap is growing.

Compared to last year’s survey:

ChatGPT usage jumped from 46% → 82%

GitHub Copilot: 30% → 57%

Google Gemini: 19% → 22%

Expect that trend to continue as more teams test drive (and trust) these tools in production workflows, moving from experimentation to greater integration.
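The statement that ChatGPT's lead is growing can be checked directly against the usage figures listed above. The following Python sketch compares the leader with the runner-up in each year; the `usage` table and the `lead_over_runner_up` helper are illustrative names introduced here, not survey artifacts.

```python
# AI tool usage in percent: (2024 survey, 2025 survey), as quoted above.
usage = {
    "ChatGPT": (46, 82),
    "GitHub Copilot": (30, 57),
    "Google Gemini": (19, 22),
}


def lead_over_runner_up(year_index):
    """Percentage-point gap between the most-used and second most-used tool."""
    ranked = sorted((pair[year_index] for pair in usage.values()), reverse=True)
    return ranked[0] - ranked[1]


gap_2024 = lead_over_runner_up(0)  # 46 - 30
gap_2025 = lead_over_runner_up(1)  # 82 - 57
print(f"Lead in 2024: {gap_2024} points; lead in 2025: {gap_2025} points")
```

On these numbers the lead over GitHub Copilot widens from 16 to 25 percentage points, even though Copilot usage itself nearly doubled.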

Not all devs use AI the same way

While coding is the top AI use case overall, how devs actually lean on it varies by role:

Seasoned devs use AI for documentation and writing tests — but only lightly.

DevOps engineers use it to write docs and navigate CLI tools.

Software developers often turn to it for research and test automation.

And how dependent they feel on AI also shifts:

Seasoned devs: Often rate their dependence as 0/10.

DevOps engineers: Closer to 7/10.

Software devs: Usually around 5/10.

For comparison, the overall average dependence on AI in our 2024 survey was 4/10 (all users). Looking ahead, it will be interesting to see how dependence on AI shifts by role as the tools become further integrated into daily work.

The hidden bottleneck: data prep

When it comes to building AI/ML-powered apps, data is the choke point. A full 26% of AI builders say they’re not confident in how to prep the right datasets — or don’t trust the data they have.

This issue lives upstream but affects everything downstream — time to delivery, model performance, user experience. And it’s often overlooked.

Feelings around AI

How do people really feel about AI tools? Mostly positive — but it’s a mixed bag.

Compared to last year’s survey:

AI tools are a positive option: 65% → 66%

They allow me to focus on more important tasks: 55% → 63%

They make my job more difficult: 19% → 40%

They are a threat to my job: 23% → 44%

The predominant emotions around building AI/ML apps are distinctly positive — enthusiasm, curiosity, and happiness or interest.

3. Security — it’s a team sport

Why everyone owns security now

In the evolving world of software development, one thing is clear — security is no longer a siloed specialty. It’s a team sport, especially when vulnerabilities strike. Forget the myth that only “security people” handle security. Across orgs big and small, roles are blending. If you’re writing code, you’re in the security game. As one respondent put it, “We don’t have dedicated teams — we all do it.”

Just 1 in 5 organizations outsource security.

Security is top of mind at most others: only 1% of respondents say security is not a concern at their organization.

One exception to this trend: In larger IT organizations (50+ employees), software security is more likely to be the exclusive domain of security engineers, with other types of engineers playing less of a role.

Devs, leads, and ops all claim the security mantle

It’s not just security engineers who are on alert. Team leads, DevOps pros, and senior developers all see themselves as responsible for security. And they’re all right. Security has become woven into every function.

When vulnerabilities hit, it’s all hands on deck

No turf wars here. When scan alerts go off, everyone pitches in — whether it’s security engineers helping experienced devs to decode scan results, engineering managers overseeing the incident, or DevOps engineers filling in where needed.

As in our 2024 survey, fixing vulnerabilities is the most common security task (30%) — followed by logging data analysis, running security scans, monitoring security incidents, and dealing with scan results (all tied at 24% each).

Fixing vulnerabilities is also a major time suck. Last year, respondents pointed to better vulnerability remediation tools as a key gap in the developer experience.

Security tools

For the second year in a row, SonarQube is the most widely used security tool, cited by 11% of respondents. But that’s a noticeable drop from last year’s 24%, likely due to the 2024 survey’s heavier focus on IT professionals.

Dependabot follows at 8%, with Snyk and AWS Security Hub close behind at 7% each — all showing lower adoption compared to last year’s more tech-centric sample.

Security isn’t the bottleneck — planning and execution are

Surprisingly, security doesn’t crack the top 10 issues holding teams back. Planning and execution-type activities are bigger sticking points.

Overall, across all industries and development-focused roles, security issues are the 11th and 14th most selected, well behind planning and execution-type activities.

Translation? Security is better integrated into the workflow than ever before.

Shift-left is yesterday’s news

The once-pervasive mantra of “shift security left” is now only the ninth most important trend (14%), behind Generative AI (27%), AI assistants for software engineering (23%), and Infrastructure as Code (19%). Has the shift left already happened? Are AI and cloud complexity drowning it out? Or is this further evidence that security is, by necessity, shifting everywhere?

Our 2024 survey identified the shift-left approach as a possible source of frustration for developers and an area where more effective tools could make a difference. Perhaps security tools have gotten better, making it easier to shift left. Or perhaps there’s simply broader acceptance of the shift-left trend.

Shift-left may not be buzzy — but it still matters

It’s no longer a headline-grabber, but security-minded dev leads still value the shift-left mindset. They’re the ones embedding security into design, coding, CI/CD, and deployment.

Even if the buzz has faded, the impact hasn’t.

Conclusion

The 2025 Docker State of Application Development Report captures a fast-changing software landscape defined by AI adoption, evolving security roles, and persistent friction in developer workflows. While AI continues to gain ground, adoption remains uneven across industries. Security has become a shared responsibility, and the shift to non-local dev environments signals a more cloud-native future. Through it all, developers are learning, building, and adapting quickly.

In spotlighting these trends, the report doesn’t just document the now — it charts a path forward. Docker will continue evolving to meet the needs of modern teams, helping developers navigate change, streamline workflows, and build what’s next.

Methodology

The 2025 Docker State of Application Development Report is based on an online, 25-minute survey conducted by Docker’s User Research Team in the fall of 2024. Distribution was much wider than in previous years because the survey was advertised on a larger range of platforms.

Credits

This research was designed, conducted, and analyzed by the Docker UX Research Team: Rebecca Floyd, Ph.D.; Julia Wilson, Ph.D.; and Olga Diachkova.

Source: https://blog.docker.com/feed/