It’s Day 2 of our top blog posts of 2018, and coming in at Number 4 is the launch of Docker Enterprise 2.0 (formerly Docker Enterprise Edition). Docker’s industry-leading container platform is the only platform that simplifies Kubernetes and manages and secures applications on Kubernetes in multi-Linux, multi-OS and multi-cloud customer environments. To learn more about Docker Enterprise, read on…

We are excited to announce Docker Enterprise Edition 2.0 – a significant leap forward in our enterprise-ready container platform. Docker Enterprise Edition (EE) 2.0 is the only platform that manages and secures applications on Kubernetes in multi-Linux, multi-OS and multi-cloud customer environments. As a complete platform that integrates and scales with your organization, Docker EE 2.0 gives you the most flexibility and choice over the types of applications supported, orchestrators used, and where it’s deployed. It also enables organizations to operationalize Kubernetes more rapidly with streamlined workflows and helps you deliver safer applications through integrated security solutions. In this blog post, we’ll walk through some of the key new capabilities of Docker EE 2.0.

Eliminate Your Fear of Lock-in

As containerization becomes core to your IT strategy, having a platform that supports choice becomes even more important. Being able to address a broad set of applications across multiple lines of business, built on different technology stacks and deployed to different infrastructures, gives you the flexibility to make changes as business requirements evolve. In Docker EE 2.0 we are expanding our customers’ choices in a few ways:

Multi-Linux, Multi-OS, Multi-Cloud – Most enterprise organizations have a hybrid or multi-cloud strategy and a mix of Windows and Linux in their environment. Docker EE is the only solution that is certified across multiple Linux distributions, Windows Server, and multiple public clouds, enabling you to containerize the broadest set of applications and giving you the freedom to deploy them wherever you need.

Choice of Swarm or Kubernetes – Both orchestrators operate interchangeably in the same cluster, meaning IT can build an environment that lets developers choose how their applications are deployed at runtime. Teams can deploy applications to Swarm today and migrate these same applications to Kubernetes using the same Compose file. Applications deployed by either orchestrator can be managed through the same control plane, allowing you to scale more efficiently.

Docker EE Dashboard with containers deployed with both Swarm and Kubernetes

Deploying to Kubernetes via the admin UI

Manage via native Kubernetes CLI commands

Gain Operational Agility

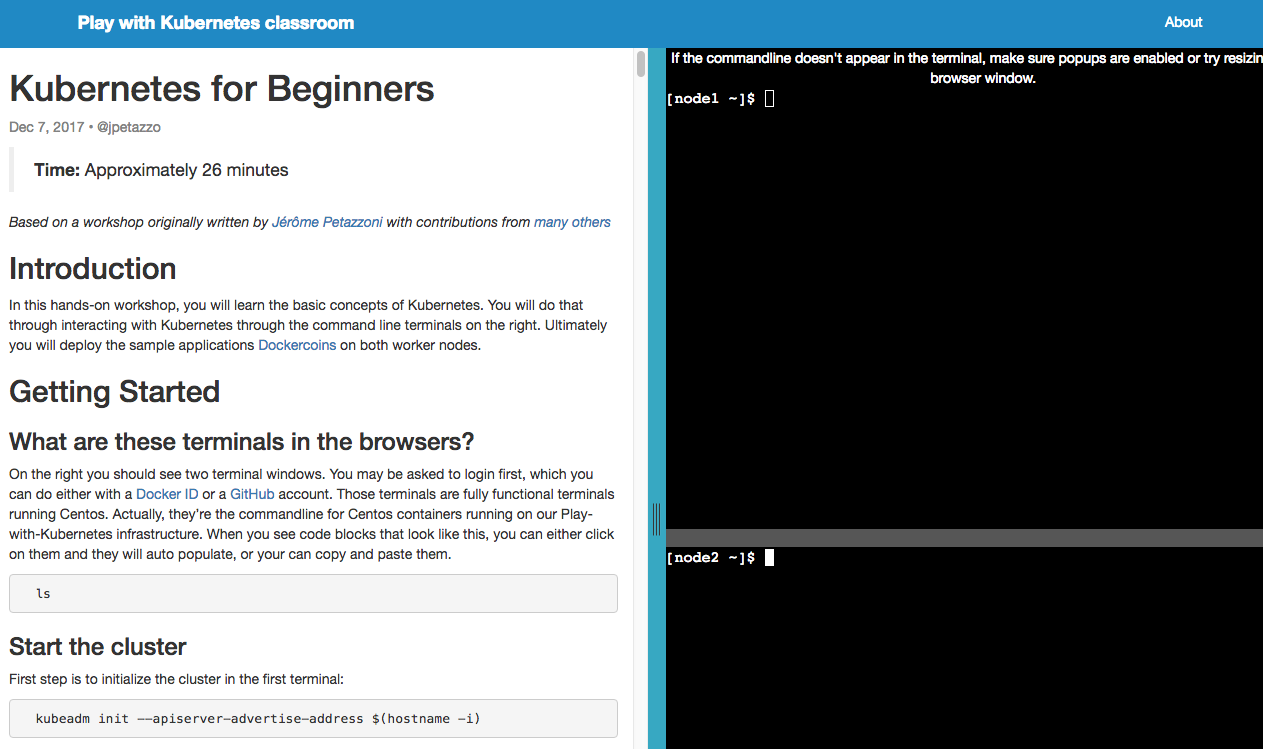

Docker is well-known for democratizing containers for developers everywhere. In a similar manner, Docker Enterprise Edition is focused on making the management of a container environment very intuitive and easy for infrastructure and operations teams. This focus on the operational experience carries over to managing Kubernetes. With Docker EE 2.0, you get simplified workflows for the day-to-day management of a Kubernetes environment while still having access to native Kubernetes APIs, CLIs, and interfaces.

Simplified cluster management tasks – Docker EE 2.0 delivers easy-to-use workflows for the basic configuration and management of a container environment, including:

Single line commands to add new nodes to a cluster

One-click high availability of the management plane

Easy access to consoles and logs

Secure default configurations

Secure application zones – One way that Docker helps you scale is with efficient multi-tenancy that doesn’t require building new clusters. By integrating with your corporate LDAP and Active Directory systems and setting resource access policies, you can get both logical and physical separation for different teams within the same cluster. For a Kubernetes deployment, this is a simple way to align Namespaces and nodes.
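To make the idea of a secure application zone more concrete, here is a minimal Python sketch in which a zone pairs a Namespace (logical separation) with a dedicated set of nodes (physical separation) and is granted to a directory group. The group names, node names and the `can_deploy` check are hypothetical illustrations, not Docker EE’s actual access-control model or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zone:
    namespace: str    # logical separation: a Kubernetes Namespace
    nodes: frozenset  # physical separation: nodes dedicated to this zone


# Hypothetical mapping from an LDAP/Active Directory group to its zone.
ZONES = {
    "payments-team": Zone("payments", frozenset({"node-1", "node-2"})),
    "web-team": Zone("web", frozenset({"node-3"})),
}


def can_deploy(group: str, namespace: str, node: str) -> bool:
    """Allow a deployment only inside the group's own namespace and nodes."""
    zone = ZONES.get(group)
    return zone is not None and zone.namespace == namespace and node in zone.nodes


print(can_deploy("web-team", "web", "node-3"))       # prints True
print(can_deploy("web-team", "payments", "node-1"))  # prints False
```

Both teams share one cluster, yet each is confined to its own namespace and its own nodes, which is the kind of multi-tenancy the paragraph above describes.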

Enhanced Layer 7 Routing for Swarm – This release of Docker EE 2.0 also includes new enhancements for Layer 7 routing and load balancing. These enhancements are based on the new Interlock 2.0 architecture, which provides a highly scalable and highly available routing solution for Swarm. You can learn more about these enhancements here.

Kubernetes conformance – The streamlined operational workflows for managing Kubernetes are abstractions that run atop a full-featured and CNCF-conformant Kubernetes stack. All core Kubernetes components and their native APIs, CLIs and interfaces are available to advanced users seeking to fine tune and troubleshoot the orchestrator.

Build a Secure, Global Supply Chain

Containers provide improved security through greater isolation and smaller attack surfaces, but delivering safer applications also requires looking at how these applications were created. Organizations need to know where their applications came from, who has had access to them, whether they contain known vulnerabilities, and whether they are approved for production. Docker EE 2.0 is the only solution that delivers a policy-based secure supply chain that is designed to give you governance and oversight over the entire container lifecycle without slowing you down.

Secure supply chain for Swarm and Kubernetes – With Docker EE 2.0, you can set policies around image promotions to automate the process of moving an application through test, QA, staging, and production. For example, you can set a policy around image vulnerability scanning results that only promotes clean images to production repositories. Additionally, with Docker EE 2.0, administrators can enforce rules around which applications are allowed to be deployed: only images that have been signed off by the right tools or teams will be allowed to run in production. These are automated processes that enforce governance without adding any manual bottlenecks to the delivery process. Learn more about these capabilities in Part 1 and Part 2 of this blog series.
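The vulnerability-based promotion rule described above can be sketched in Python as a simple gate on scan results. This is a hypothetical illustration only: the `ScanResult`, `Image` and `PromotionPolicy` types, the severity fields, the thresholds and the repository names are assumptions, not the Docker Trusted Registry API.

```python
from dataclasses import dataclass


@dataclass
class ScanResult:
    # Assumed shape: counts of known vulnerabilities by severity.
    critical: int = 0
    major: int = 0
    minor: int = 0


@dataclass
class Image:
    repository: str
    tag: str
    scan: ScanResult


@dataclass
class PromotionPolicy:
    # Hypothetical rule: copy an image from `source` to `target` only when
    # its scan stays within the allowed number of critical/major issues.
    source: str
    target: str
    max_critical: int = 0
    max_major: int = 0

    def allows(self, image: Image) -> bool:
        return (
            image.repository == self.source
            and image.scan.critical <= self.max_critical
            and image.scan.major <= self.max_major
        )


def promote(images, policy):
    """Return the images the policy would copy into the target repository."""
    return [
        Image(policy.target, image.tag, image.scan)
        for image in images
        if policy.allows(image)
    ]


policy = PromotionPolicy(source="qa/web", target="prod/web")
candidates = [
    Image("qa/web", "1.4", ScanResult()),            # clean scan
    Image("qa/web", "1.5", ScanResult(critical=1)),  # one critical issue
]
print([f"{i.repository}:{i.tag}" for i in promote(candidates, policy)])
# prints ['prod/web:1.4']
```

Because the gate is evaluated automatically on every candidate image, no one has to approve clean images by hand, while images with critical findings never reach the production repository.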

Secure supply chain for globally distributed organizations – Many Docker EE customers are multinational corporations with offices and data centers located around the world. With Docker EE 2.0 we are introducing a number of features that allow these global organizations to maintain a secure and globally-consistent supply chain.

Centralized Image Repository – Some organizations want to maintain one source of truth for all applications: a centralized private image repository for their entire global organization. With Docker EE 2.0, you can connect multiple EE clusters to a single, common private registry with a common set of security policies built in.

Remote Office Access – Many organizations have development teams that are not in the same location as the registry. To ensure that these developers can quickly download images from their location, Docker EE 2.0 includes an Image Caching capability to create local caches of the repository content. Caching extends the secure access controls and digital signatures to these remote offices to ensure no breaks in the supply chain.

Multi-site Availability and Consistency – Alternatively, some organizations wish to have separate registries for different office locations – possibly one for North America, one for Europe, one for Asia. But they also want to make sure that they are using common images. With the new Image Mirroring capability, organizations can set policies that “push” and “pull” images from one registry to another. This also means that if a certain region goes down, copies of the same images are available in the other registries.
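As a rough illustration of how a push-style mirroring policy provides regional redundancy, here is a minimal Python sketch. The `Registry` and `MirrorPolicy` classes, the region names and the `pull` failover lookup are all hypothetical; they model the idea, not Docker EE’s Image Mirroring implementation.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Registry:
    name: str
    online: bool = True
    # Hypothetical storage model: repository name -> set of tags held locally.
    repos: dict = field(default_factory=dict)

    def tags(self, repo: str) -> set:
        return self.repos.get(repo, set())


@dataclass
class MirrorPolicy:
    # Hypothetical "push" policy: copy every tag of `repo` that the
    # target registry is missing from `source` to `target`.
    repo: str
    source: Registry
    target: Registry

    def run(self) -> set:
        missing = self.source.tags(self.repo) - self.target.tags(self.repo)
        self.target.repos.setdefault(self.repo, set()).update(missing)
        return missing


def pull(repo: str, tag: str, registries) -> Optional[str]:
    """Return the name of the first online registry holding repo:tag."""
    for registry in registries:
        if registry.online and tag in registry.tags(repo):
            return registry.name
    return None


na = Registry("north-america", repos={"acme/api": {"2.0"}})
eu = Registry("europe")
MirrorPolicy("acme/api", na, eu).run()  # push 2.0 from North America to Europe
na.online = False                       # simulate a regional outage
print(pull("acme/api", "2.0", [na, eu]))  # prints europe
```

Keeping the same tags in every regional registry is what lets a pull succeed from another region when one registry is unavailable.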

How to Get Started

Docker EE 2.0 is a major milestone for enterprise container platforms. Designed to give you the broadest choice around orchestrators, application types, operating systems, and clouds and supporting the requirements of global organizations, Docker EE 2.0 is the most advanced enterprise-ready container platform in the market and it’s available to you today!

To learn more about this release:

Register for our upcoming Virtual Event featuring Docker Chief Product Officer, Scott Johnston, and Sr. Director of Product Management, Banjot Chanana, to hear more about how our enterprise customers are leveraging Docker EE, see demos of EE 2.0 and learn how Docker can help you on your containerization journey.

Try it for yourself in our free, hosted trial. Explore the advanced capabilities described in this blog in just 30 minutes.

Read more about Docker Enterprise Edition 2.0 or access the documentation.

Register for DockerCon 2018 in San Francisco (June 12-15) to hear from Docker experts and customers about their containerization journey.


The post Top 5 Blog Post of 2018: Docker Enterprise 2.0 with Kubernetes appeared first on Docker Blog.

Source: https://blog.docker.com/feed/