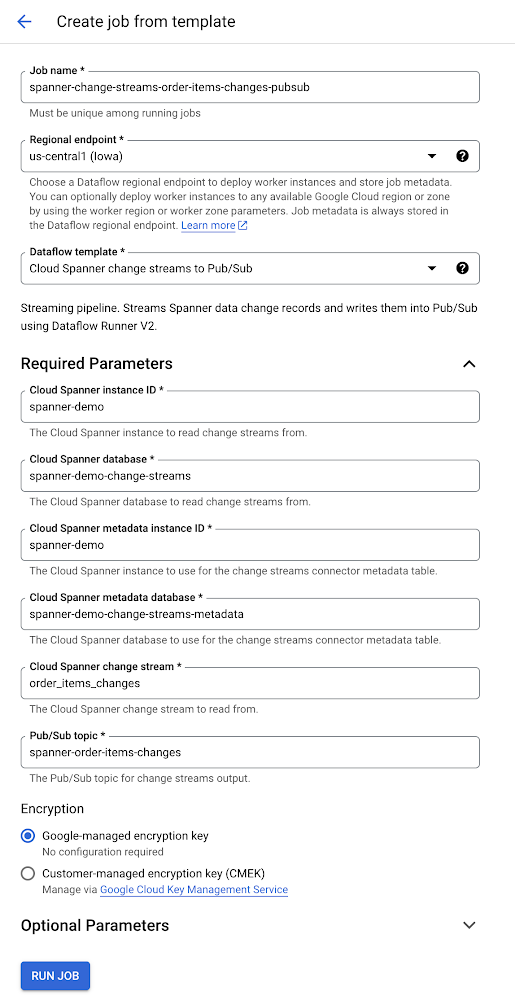

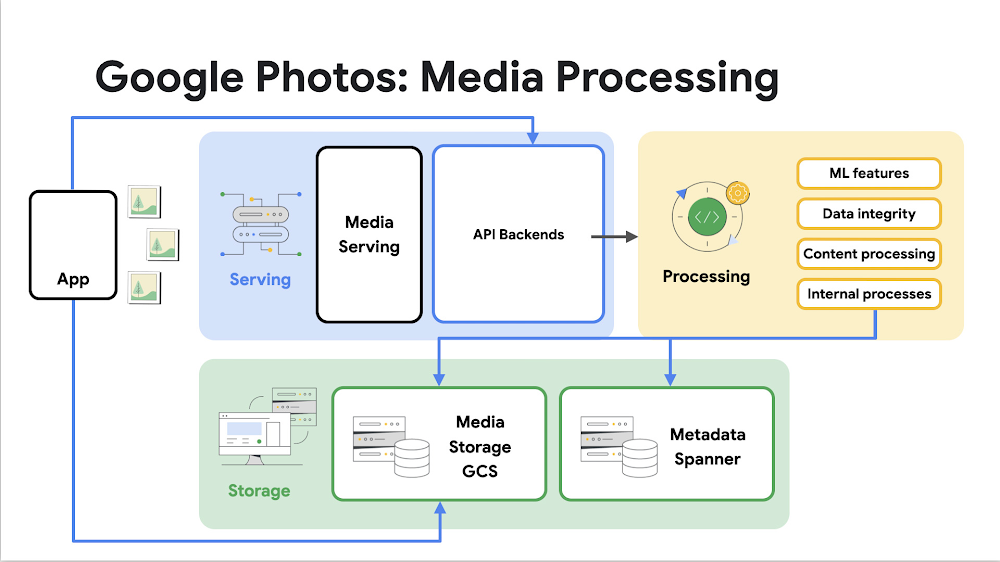

Editor’s note: Content updated at 9am PT to reflect announcements made on stage in the opening keynote at Google Cloud Next ’23.

This week, Google Cloud will welcome thousands of people to San Francisco for our first in-person Google Cloud Next event since 2019. I am incredibly excited to bring so many of our customers and partners together to showcase the amazing innovations we have been working on across our entire portfolio of Infrastructure, Data and AI, Workspace Collaboration, and Cybersecurity solutions.

It’s been an exciting year so far for Google Cloud. We’ve achieved some noteworthy milestones, including reaching a $32B annual revenue run rate in Q2 2023 and seeing our second quarter of profitability, all based on the success of our customers across every industry. This year, we have shared some incredible stories about how we are working with leading organizations like Culture Amp, Deutsche Börse, eDreams ODIGEO, HSBC, IHOP, IPG Mediabrands, John Lewis Partnership, The Knot Worldwide, Macquarie Bank, Mayo Clinic, Priceline, Shopify, the Singapore Government, U.S. Steel, and Wendy’s. Today, we are announcing new or expanded relationships with The Estée Lauder Companies, FOX Sports, GE Appliances, General Motors, HCA Healthcare, and more. I’d like to thank all of these customers and the millions of others around the world for trusting us as they progress on their digital transformation journeys.

Today at Google Cloud Next ’23, we’re proud to announce new ways we’re helping every business, government, and user benefit from generative AI and leading cloud technologies, including:

AI-optimized Infrastructure: The most advanced AI-optimized infrastructure for companies to train and serve models. We offer this infrastructure in our cloud regions, to run in your data centers with Google Distributed Cloud, and on the edge.

Vertex AI: Developer tools to build models and AI-powered applications, with major advancements to Vertex AI for creating custom models and building custom Search and Conversation apps with enterprise data.

Duet AI: Duet AI is an always-on AI collaborator that is deeply integrated in Google Workspace and Google Cloud. Duet AI in Workspace gives every user a writing helper, a spreadsheet expert, a project manager, a note taker for meetings, and a creative visual designer, and is now generally available. Duet AI in Google Cloud collaborates like an expert coder, a software reliability engineer, a database pro, an expert data analyst, and a cybersecurity adviser. It is expanding its preview and will be generally available later this year.

Many more significant announcements across Developer Tools, Data, Security, Sustainability, and our fast-growing cloud ecosystem.

New infrastructure and tools to help customers

The advanced capabilities and broad applications that make gen AI so revolutionary demand the most sophisticated and capable infrastructure. We have been investing in our data centers and network for 25 years, and now have a global network of 38 cloud regions, with a goal to operate entirely on carbon-free energy 24/7 by 2030.

Our AI-optimized infrastructure is a leading choice for training and serving gen AI models. In fact, more than 70% of gen AI unicorns are Google Cloud customers, including AI21, Anthropic, Cohere, Jasper, MosaicML, Replit, Runway, and Typeface; and more than half of all funded gen AI startups are Google Cloud customers, including companies like Copy.ai, CoRover, Elemental Cognition, Fiddler AI, Fireworks.ai, PromptlyAI, Quora, Synthesized, Writer, and many others.

Today we are announcing key infrastructure advancements to help customers, including:

Cloud TPU v5e: Our most cost-efficient, versatile, and scalable purpose-built AI accelerator to date.
Now, customers can use a single Cloud TPU platform to run both large-scale AI training and inference. Cloud TPU v5e scales to tens of thousands of chips and is optimized for efficiency. Compared to Cloud TPU v4, it provides up to a 2x improvement in training performance per dollar and up to a 2.5x improvement in inference performance per dollar.

A3 VMs with NVIDIA H100 GPU: Our A3 VMs powered by NVIDIA’s H100 GPU will be generally available next month. They are purpose-built with high-performance networking and other advances to enable today’s most demanding gen AI and large language model (LLM) innovations. This allows organizations to achieve three times better training performance over the prior-generation A2.

GKE Enterprise: This enables multi-cluster horizontal scaling, which is required for the most demanding, mission-critical AI/ML workloads. Customers are already seeing productivity gains of 45%, while decreasing software deployment times by more than 70%. Starting today, the benefits that come with GKE, including autoscaling, workload orchestration, and automatic upgrades, are now available with Cloud TPU v5e.

Cross-Cloud Network: A global networking platform that helps customers connect and secure applications across clouds. It is open, workload-optimized, and offers ML-powered security to deliver zero trust. Designed to enable customers to gain access to Google services more easily from any cloud, Cross-Cloud Network reduces network latency by up to 35%.

Google Distributed Cloud: Designed to meet the unique demands of organizations that want to run workloads at the edge or in their data center. In addition to next-generation hardware and new security capabilities, we’re also enhancing the GDC portfolio to bring AI to the edge, with Vertex AI integrations and a new managed offering of AlloyDB Omni on GDC Hosted.

Our Vertex AI platform gets even better

On top of our world-class infrastructure, we deliver what we believe is the most comprehensive AI platform, Vertex AI, which enables customers to build, deploy, and scale machine learning (ML) models. We have seen tremendous usage, with the number of gen AI customer projects growing more than 150 times from April to July this year.

Customers have access to more than 100 foundation models, including third-party and popular open-source versions, in our Model Garden. They are all optimized for different tasks and different sizes, including text, chat, images, speech, software code, and more. We also offer industry-specific models like Sec-PaLM 2 for cybersecurity, to empower global security providers like Broadcom and Tenable; and Med-PaLM 2 to assist leading healthcare and life sciences companies including Bayer Pharmaceuticals, HCA Healthcare, and Meditech.

Vertex AI Search and Conversation are now generally available, enabling organizations to create Search and Chat applications using their data in just minutes, with minimal coding and enterprise-grade management and security built in. In addition, Vertex AI Generative AI Studio provides user-friendly tools to tune and customize models, all with enterprise-grade controls for data security. These include developer tools like Text Embeddings API, which lets developers build sophisticated applications based on semantic understanding of text or images, and Reinforcement Learning from Human Feedback (RLHF), which incorporates human feedback to deeply customize and improve model performance.

Today, we’re excited to announce several new models and tooling in the Vertex AI platform:

PaLM 2, Imagen and Codey Upgrades: We’re updating PaLM 2 to 32k context windows so enterprises can easily process longer form documents like research papers and books.
We’re also improving Imagen’s visual appeal, and extending support for new languages in Codey.

Tools for tuning: For PaLM 2 and Codey, we’re making adapter tuning generally available and in preview, respectively, which can help improve LLM performance with as few as 100 examples. We’re also introducing a new method of tuning for Imagen, called Style Tuning, so enterprises can create images aligned to their specific brand guidelines or other creative needs with a small number of reference images.

New models: We’re announcing availability of Llama 2 and Code Llama from Meta, and Technology Innovation Institute’s Falcon LLM, a popular open-source model, as well as pre-announcing Claude 2 from Anthropic. In the case of Llama 2, we will be the only cloud provider offering both adapter tuning and RLHF.

Vertex AI extensions: Developers can access, build, and manage extensions that deliver real-time information, incorporate company data, and take action on the user’s behalf. This opens up endless new possibilities for gen AI applications that can operate as an extension of your enterprise, enabled by the ability to access proprietary information and take action on third-party platforms like your CRM system or email.

Grounding: We are announcing an enterprise grounding service that works across Vertex AI foundation models, Search, and Conversation, and gives customers the ability to ground responses in their own enterprise data to deliver more accurate responses. We are also working with a few early customers to test grounding with the technology that powers Google Search.

Digital Watermarking on Vertex AI: Powered by Google DeepMind SynthID, this offers a state-of-the-art technology that embeds the watermark directly into the pixels of the image, making it invisible to the human eye and difficult to tamper with. Digital watermarking provides customers with a scalable approach to creating and identifying AI-generated images responsibly.
We are the first hyperscale cloud provider to offer this technology for AI-generated images.

Colab Enterprise: This managed service combines the ease-of-use of Google’s Colab notebooks with enterprise-level security and compliance capabilities. Data scientists can use Colab Enterprise to collaboratively accelerate AI workflows with access to the full range of Vertex AI platform capabilities, integration with BigQuery, and even code completion and generation.

Equally important to discovering and training the right model is controlling your data. From the beginning, we designed Vertex AI to give you full control and segregation of your data, code, and IP, with zero data leakage. When you customize and train your model with Vertex AI (with private documents and data from your SaaS applications, databases, or other proprietary sources), you are not exposing that data to the foundation model. We take a snapshot of the model, allowing you to train and encapsulate it together in a private configuration, giving you complete control over your data. Your prompts and data, as well as user inputs at inference time, are not used to improve our models and are not accessible to other customers.

Duet AI in Workspace and Google Cloud

We unveiled Duet AI at I/O in May, introducing powerful new features across Workspace and showcasing developer features such as code and chat assistance in Google Cloud. Since then, trusted testers around the world have experienced the power of Duet AI while we worked on expanding capabilities and integrating it across a wide range of products and services throughout Workspace and Google Cloud.

Let’s start with Workspace, the world’s most popular productivity tool, with more than 3 billion users and more than 10 million paying customers who rely on it every day to get things done.
With the introduction of Duet AI just a few months ago, we delivered a number of features to make your teams more productive, like helping you write and refine content in Gmail and Google Docs, create original images in Google Slides, turn ideas into action and data into insights with Google Sheets, foster more meaningful connections in Google Meet, and more. Since then, thousands of companies and more than a million trusted testers have used Duet AI as a powerful collaboration partner (a coach, source of inspiration, and productivity booster), all while helping to ensure every user and organization has control over their data.

Today, we are introducing a number of new enhancements:

Duet AI in Google Meet: Duet AI will take notes during video calls, send meeting summaries, and even automatically translate captions in 18 languages. In addition, to ensure every meeting participant is clearly seen, heard, and understood, Duet AI in Meet introduces studio look, studio lighting, and studio sound.

Duet AI in Google Chat: You’ll be able to chat directly with Duet AI to ask questions about your content, get a summary of documents shared in a space, and catch up on missed conversations. We’ve also delivered a refreshed user interface, new shortcuts, and enhanced search to allow you to stay on top of conversations, as well as huddles in Chat, which allow teams to start meetings from the place where they are already collaborating.

Workspace customers of all sizes and from all industries are using Duet AI and seeing improvements in customer experience, productivity, and efficiency. Instacart is creating enhanced customer service workflows, and industrial technology company Trimble can now deliver solutions faster to their clients. Adore Me, Uniformed Services University, and Thoughtworks are increasing productivity by using Duet AI to quickly write content such as emails, campaign briefs, and project plans with just a simple prompt.

Today, we are making Duet AI in Google Workspace generally available, while expanding the preview capabilities of Duet AI in Google Cloud, with general availability coming later this year. Beyond Workspace, Duet AI can now provide AI assistance across a wide range of Google Cloud products and services: as a coding assistant to help developers code faster, as an expert adviser to help operators quickly troubleshoot application and infrastructure issues, as a data analyst to provide quick and better insights, and as a security adviser to recommend best practices to help prevent cyber threats.

Customers are already realizing value from Duet AI in Google Cloud: L’Oréal is able to achieve better and faster business decisions from their data, and Turing, in early testing, is reporting engineering productivity gains of one-third.

Our Duet AI in Google Cloud announcements include advancements for:

Software development: Duet AI provides expert assistance across your entire software development lifecycle, enabling developers to stay in flow-state longer by minimizing context switching to help them be more productive. In addition to code completion and code generation, it can help you modernize applications faster by assisting you with code refactoring; and by using Duet AI in Apigee, any developer can now easily build APIs and integrations using simple natural language prompts.

Application and infrastructure operations: Operators can chat with Duet AI in natural language across a number of services directly in the Google Cloud Console to quickly retrieve “how to” information about infrastructure configuration, deployment best practices, and expert recommendations on cost and performance optimization.

Data Analytics: Duet AI in BigQuery provides contextual assistance for writing SQL queries as well as Python code, generates full functions and code blocks, auto-suggests code completions, explains SQL statements in natural language, and can generate recommendations based on your schema and metadata. These capabilities can allow data teams to focus more on outcomes for the business.

Accelerating and modernizing databases: Duet AI in Cloud Spanner, AlloyDB, and Cloud SQL helps generate code to structure, modify, or query data using natural language. We’re also bringing the power of Duet AI to Database Migration Service (DMS), helping automate the conversion of database code, such as stored procedures, functions, triggers, and packages, that could not be converted with traditional translation technologies.

Security Operations: We are bringing Duet AI to our security products, including Chronicle Security Operations, Mandiant Threat Intelligence, and Security Command Center, which can empower security professionals to more efficiently prevent threats, reduce toil in security workflows, and uplevel security talent.

Duet AI delivers contextual recommendations from PaLM 2 models and expert guidance, trained and tuned with Google Cloud-specific content, such as documentation, sample code, and Google Cloud best practices. In addition, Duet AI was designed using Google’s comprehensive approach to help protect customers’ security and privacy, as well as our AI principles. With Duet AI, your data is your data. Your code, your inputs to Duet AI, and your recommendations generated by Duet AI will not be used to train any shared models nor used to develop any products.

Simplify analytics at scale with a unified data and AI foundation

Data sits at the center of gen AI, which is why we are bringing new capabilities to Google’s Data and AI Cloud that will help unlock new insights and boost productivity for data teams.
In addition to the launch of Duet AI, which assists data engineers and data analysts across BigQuery, Looker, Spanner, Dataplex, and our database migration tools, we have several other important announcements today in data and analytics:

BigQuery Studio: A single interface for data engineering, analytics, and predictive analysis, BigQuery Studio helps increase efficiency for data teams. In addition, with new integrations to Vertex AI foundation models, we are helping organizations AI-enable their data lakehouse with innovations for cross-cloud analytics, governance, and secure data sharing.

AlloyDB AI: Today we’re introducing AlloyDB AI, an integral part of AlloyDB, our PostgreSQL-compatible database service. AlloyDB AI offers an integrated set of capabilities for easily building gen AI apps, including high-performance vector queries that are up to 10x faster than standard PostgreSQL. In addition, with AlloyDB Omni, you can also run AlloyDB virtually everywhere. This includes on-premises, on Google Cloud, AWS, Azure, or through Google Distributed Cloud.

Data Cloud Partners: Our open data ecosystem is an asset for customers’ gen AI strategies, and we’re continuing to expand the breadth of partner solutions and datasets available on Google Cloud. Our partners, like Confluent, DataRobot, Dataiku, DataStax, Elastic, MongoDB, Neo4j, Redis, SingleStore, and Starburst, are all launching new capabilities to help customers accelerate and enhance gen AI development with data. Our partners are also adding more datasets to Analytics Hub, which customers can use to build and train gen AI models. This includes trusted data from Acxiom, Bloomberg, TransUnion, ZoomInfo, and more.

These innovations help organizations harness the full potential of data and AI through a unified data foundation. With Google Cloud, companies can now run their data anywhere and bring AI and machine learning tools directly to their data, which can lower the risk and cost of data movement.

Addressing top security challenges

Google Cloud is the only leading security provider that brings together the essential combination of frontline intelligence and expertise, a modern SecOps platform, and a trusted cloud foundation, all infused with the power of gen AI, to help drive the security outcomes you’re looking to achieve.

Earlier this year, we introduced Security AI Workbench, an industry-first extensible platform powered by our next-generation security LLM, Sec-PaLM 2, which incorporates Google’s unique visibility into the evolving threat landscape and is fine-tuned for cybersecurity operations. And just a few weeks ago, we announced Chronicle CyberShield, a security operations solution that allows governments to break down information silos, centralize security data to help strengthen national situational awareness, and initiate a united response.

In addition to the Duet AI innovations mentioned earlier, today we are also announcing:

Mandiant Hunt for Chronicle: This service integrates the latest insights into attacker behavior from Mandiant’s frontline experts with Chronicle Security Operations’ ability to quickly analyze and search security data, helping customers gain elite-level support without the burden of hiring, tooling, and training.

Agentless vulnerability scanning: These posture management capabilities in Security Command Center detect operating system, software, and network vulnerabilities on Compute Engine virtual machines.

Network security advancements: Cloud Firewall Plus adds advanced threat protection and next-generation firewall (NGFW) capabilities to our distributed firewall service, powered by Palo Alto Networks; and Network Service Integration Manager allows network admins to easily integrate trusted third-party NGFW virtual appliances for traffic inspection.

Assured Workloads Japan Regions: Customers can have controlled environments that enforce data residency in our Japanese regions, options for local control of encryption keys, and administrative access transparency. We also continue to grow our Regulated and Sovereignty solutions partner initiative to bring innovative third-party solutions to customers’ regulated cloud environments.

Expanding our ecosystem

Our ecosystem is already delivering real-world value for businesses with gen AI, and bringing new capabilities, powered by Google Cloud, to millions of users worldwide. Partners are also using Vertex AI to build their own features for customers, including Box, Canva, Salesforce, UKG, and many others. Today at Next ’23, we’re announcing:

DocuSign is working with Google to pilot how Vertex AI could be used to help generate smart contract assistants that can summarize, explain, and answer what’s in complex contracts and other documents.

SAP is working with us to build new solutions utilizing SAP data and Vertex AI that will help enterprises apply gen AI to important business use cases, like streamlining automotive manufacturing or improving sustainability.

Workday’s applications for Finance and HR are now live on Google Cloud, and they are working with us to develop new gen AI capabilities within the flow of Workday, as part of their multicloud strategy.
This includes the ability to generate high-quality job descriptions and to bring Google Cloud gen AI to app developers via the skills API in Workday Extend, while helping to ensure the highest levels of data security and governance for customers’ most sensitive information.

In addition, many of the world’s largest consulting firms, including Accenture, Capgemini, Deloitte, and Wipro, have collectively planned to train more than 150,000 experts to help customers implement Google Cloud gen AI.

We are in an entirely new era of digital transformation, fueled by gen AI. This technology is already improving how businesses operate and how humans interact with one another. It’s changing the way doctors care for patients, the way people communicate, and even the way workers are kept safe on the job. And this is just the beginning.

Together, we are creating a new way to cloud. We are grateful for the opportunity to be on this journey with our customers. Thank you for your partnership, and have a wonderful Google Cloud Next ’23.

Source: Google Cloud Platform