Good vibes only: don’t miss these Cloud Next ’19 sessions on inclusivity, sustainability

At Google Cloud, we’re excited to join with our customers and build a world that works for everyone. Technology and innovation can help businesses grow sustainably, create richer, more interactive learning experiences, power economies where more people have an opportunity to thrive, and advance inclusion for all. We’ve picked a few of the sessions at Next 2019 that focus on building a cleaner, more accessible, and more inclusive future for generations to come. These are the ideas that give us good vibes about building with Google Cloud, so be sure not to miss them!

1. Prioritizing diversity and inclusion

Workforce diversity starts with recruiting and hiring diversity. In Inclusive by Design: Engage and Recruit Diverse Talent with AI, you’ll hear how companies like Cox are reaching a larger and more diverse talent pool and making racial, ethnic and gender diversity a key driver of innovation and growth.

It takes a more diverse workforce for companies to truly build for all customers. It also makes business sense. In The Business Case for Product Inclusion, you’ll hear from a panel of Google leaders and Google Cloud customers about demonstrating the business value of inclusive products. And in the Chief Diversity Officer Panel: Building Dynamic Inclusive Cultures, you’ll hear from a panel of Chief Diversity Officers about how they are advancing vibrant, inclusive cultures across their organizations.

Another amazing panel during Next ’19 will share the stories of female technical leaders across Google. In Women of Cloud: How to Grow our Clout 2.0, senior women will discuss their past year in both the field and their careers, and answer your questions about career development and company culture. They’ll likely touch on allyship, or advocating for groups who have been historically excluded from the tech industry. If you want to learn more about allyship, and practice it, Allyship: The Fundamentals will run on both Wednesday and Thursday.
This session will give you the chance to practice identity-based leadership by examining your position in social struggles and putting yourself in another’s shoes.

2. Building a more sustainable future

If you’re curious about your environmental impact as a cloud user, join us for Building Sustainability Into Our Infrastructure, Your Goals and New Products. We’ll share what it took to build a cloud with sustainability built in, and National Geographic will share how they translate a corporate focus on the earth into an IT one. SunPower will also join for an exciting announcement as part of their journey toward making home solar accessible to all.

Making renewable energy like solar a primary energy source for the globe is a big challenge, and so are the challenges posed by the global exploitation of the oceans. The oceans are big: 140 million square miles big, or about 70% of the earth’s surface. But less than 5% has been explored. That presents a problem for sustainable fishing management, particularly with dark vessels that do not have any associated location data and could be fishing illegally. In Making Planet-Scale GIS Possible with Google Earth Engine and BigQuery GIS, Google Cloud customer Global Fishing Watch will share how they use Google Earth Engine to automatically extract vessel locations from massive amounts of radar imagery, then use BigQuery GIS to identify the dark vessels.

Overfishing is just one example of the natural resource challenges we face. In 2018, the global demand for resources was 1.7 times what the earth can support in one year. Google Cloud and SAP came together to help address this challenge by hosting a sustainability contest for social entrepreneurs. In Circular Economy 2030: Cloud Computing for a Sustainable Revolution, you can learn more about how cloud computing can be mobilized for a sustainable future with responsible consumption and production, and hear the anticipated announcement of the five finalists of Circular Economy 2030.

3. Nonprofit organizations making a positive impact

Global nonprofit organizations are tackling big challenges with Google technology. One promising area is the use of data analytics and other new technologies to address problems such as unemployment and sustainable development. In Data for Good: Driving Social and Environmental Impact with Big Data Solutions, you’ll hear about how Google Cloud is working to empower nonprofits around the world, and how we’ve collaborated with organizations like the Global Partnership for Sustainable Development Data (GPSDD) to mobilize data for sustainable development across our Data Solutions for Change, Visualize 2030, and Circular Economy 2030 initiatives.

In Empowering Global Nonprofits to Drive Impact with G Suite, we’ll talk about how nonprofits are embracing technology to improve how they collaborate, engage with their community, and fundraise for their cause. You’ll hear from two Bay Area organizations making a positive impact on the lives of local youth.

4. Using technology to help the visually impaired

As part of a strategic initiative by the Library of Congress to support users who are visually impaired, one Google Cloud customer is building an app to make books more easily available. In Making Books Accessible to the Visually Impaired, SpringML will share how users can now search for and play an audiobook from a Google Home device. Hear about the development process SpringML went through to make almost 1 TB of audio content available via their application.

In addition to partnering with our customers on applications like the one SpringML is building, we’re working on improving accessibility with Google Cloud products. In Empowering Entrepreneurs and Employees With Disabilities Using G Suite, the Blind Institute of Technology will share how they used G Suite to establish workflows that are effective and efficient for their employees, some of whom happen to be visually impaired.
There’s more on this in the G Suite and Chrome Accessibility Features for an Inclusive Organization session, which will go into depth on the built-in accessibility features of G Suite and Chromebooks.

And finally, a session focused on those who will be building this new world in a few years. Did you know that more than half of school-aged children in the U.S. use Google in their classrooms? Join For Parents and Guardians: How Your Child Uses Google in Class to learn about the tools that are transforming learning outcomes, curriculum, and opportunities for children across the nation.

For more on what to expect at Google Cloud Next ’19, take a look at the session list here, and register here if you haven’t already. We’ll see you there.

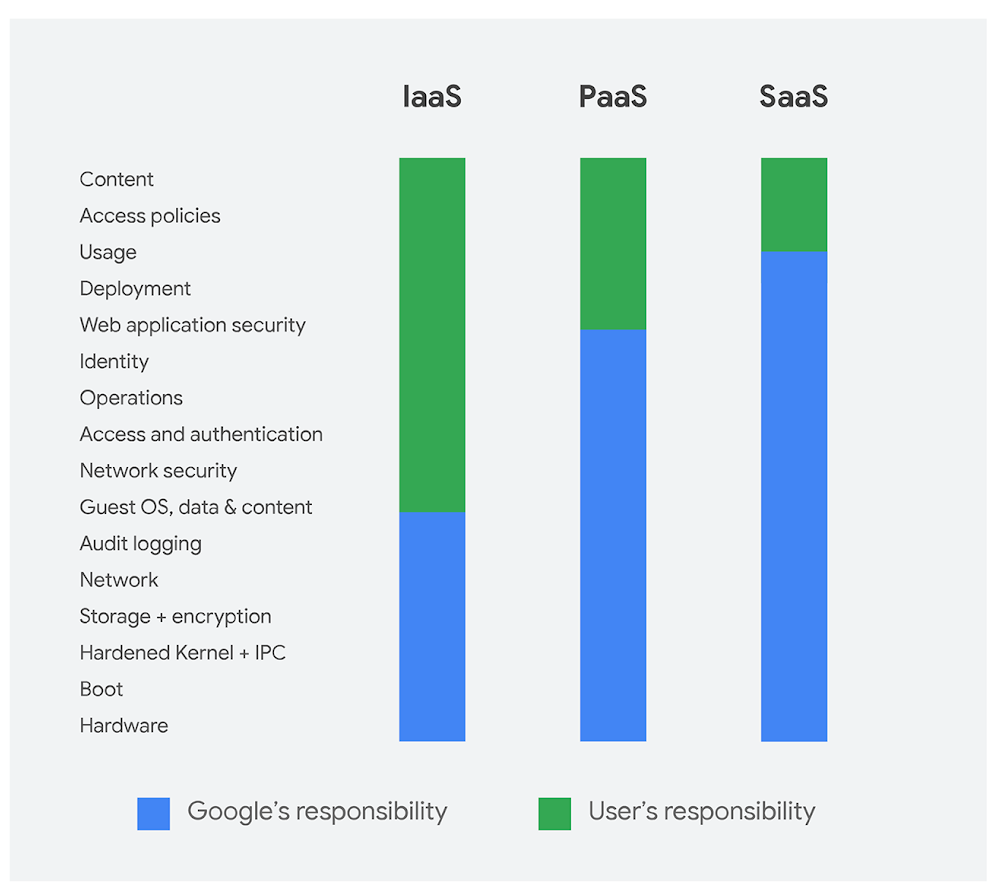

Source: Google Cloud Platform