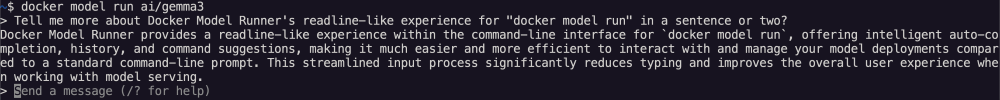

The command line is where developers live and breathe. A powerful and intuitive CLI can make the difference between a frustrating task and a joyful one. That’s why we’re excited to announce a major upgrade to the interactive chat experience in Docker Model Runner, our tool for running AI workloads locally.

We’ve rolled out a new, fully featured interactive prompt for the “docker model run” command. It brings a host of quality-of-life improvements that make chatting with your local models faster, easier, and more intuitive. Let’s dive into what’s new.

A True Readline-Style Prompt with Keyboard Shortcuts

The most significant change is the move to a new readline-like implementation. If you spend any time in a modern terminal, you’ll feel right at home. This brings advanced keyboard support for navigating and editing your prompts right on the command line.

You can now use familiar keyboard shortcuts to work with your text more efficiently. Here are some of the new key bindings you can start using immediately:

Move to Start/End: Use “Ctrl + a” to jump to the beginning of the line and “Ctrl + e” to jump to the end.

Word-by-Word Navigation: Quickly move through your prompt using “Alt + b” to go back one word and “Alt + f” to go forward one word.

Efficient Deletions:

“Ctrl + k”: Delete everything from the cursor to the end of the line.

“Ctrl + u”: Delete everything from the cursor to the beginning of the line.

“Ctrl + w”: Delete the word immediately before the cursor.

Screen and Session Management:

“Ctrl + l”: Clear the terminal screen to reduce clutter.

“Ctrl + d”: Exit the chat session, just like the /bye command.
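To make the deletion shortcuts concrete, here is a minimal Python sketch of what each one does to a line buffer. It is only a model of the behavior described above, not Docker Model Runner's code; the function names and the (text, cursor) representation are assumptions made for illustration.

```python
from typing import Tuple

# A line is modeled as (text, cursor), where cursor is an index into text.
Line = Tuple[str, int]


def kill_to_end(text: str, cursor: int) -> Line:
    """Ctrl + k: delete everything from the cursor to the end of the line."""
    return text[:cursor], cursor


def kill_to_start(text: str, cursor: int) -> Line:
    """Ctrl + u: delete everything from the cursor back to the start."""
    return text[cursor:], 0


def kill_word_back(text: str, cursor: int) -> Line:
    """Ctrl + w: delete the word immediately before the cursor."""
    start = cursor
    # Skip any whitespace directly before the cursor...
    while start > 0 and text[start - 1].isspace():
        start -= 1
    # ...then skip the word itself.
    while start > 0 and not text[start - 1].isspace():
        start -= 1
    return text[:start] + text[cursor:], start
```

For example, with the cursor at the end of "tell me a joke", kill_word_back removes "joke" and leaves the cursor after "tell me a ".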

Take Back Control with “Ctrl + c”

We’ve all been there: you send a prompt to a model, and it starts generating a long, incorrect, or unwanted response. Previously, you had to wait for it to finish. Not anymore.

You can now press “Ctrl + c” at any time while the model is generating a response to stop it immediately. We’ve implemented this using context cancellation in our client, which sends a signal to halt the streaming response from the model. This gives you full control over the interaction, saving you time and frustration. The same feature has been added to the basic interactive mode for users who may not be in a standard terminal environment. “Ctrl + c” stops the current response but does not exit the session; to exit “docker model run”, press “Ctrl + d”.
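The idea of cancelling a streaming response without ending the session can be sketched in a few lines. The snippet below is a Python analogue that uses a shared cancel flag. It is not the Docker Model Runner client, and the names stream_reply and cancel_on_ctrl_c are hypothetical.

```python
import signal
import threading
from typing import Iterable, List, Tuple


def stream_reply(tokens: Iterable[str], cancel: threading.Event) -> Tuple[str, bool]:
    """Collect streamed tokens until the stream ends or `cancel` is set.

    Returns (text_received, was_cancelled).
    """
    parts: List[str] = []
    for token in tokens:
        if cancel.is_set():
            # Stop consuming the stream; the chat session itself stays alive.
            return "".join(parts), True
        parts.append(token)
    return "".join(parts), False


def cancel_on_ctrl_c(cancel: threading.Event) -> None:
    """Route Ctrl + c (SIGINT) to the cancel flag instead of exiting.

    Must be called from the main thread, as Python requires for signal.signal.
    """
    signal.signal(signal.SIGINT, lambda signum, frame: cancel.set())
```

A chat loop built this way would clear the flag before each new prompt, so one “Ctrl + c” interrupts only the reply in progress and the next prompt starts fresh.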

Improved Multi-line and History Support

Working with multi-line prompts, such as pasted code snippets, is now much smoother. The prompt symbol changes from “>” to a more subtle “.” to indicate that you’re in multi-line mode.

Furthermore, the new prompt includes command history. Simply use the Up and Down arrow keys to cycle through your previous prompts, making it easy to experiment, correct mistakes, or ask follow-up questions without retyping everything. For privacy or scripting purposes, you can disable history writing by setting the DOCKER_MODEL_NOHISTORY environment variable.
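As a rough illustration of how Up/Down history navigation and an opt-out variable can fit together, here is a small Python sketch. The PromptHistory class and history_enabled function are hypothetical. The post does not say which values DOCKER_MODEL_NOHISTORY accepts, so treating any non-empty value as "disable history writing" is an assumption.

```python
from typing import List, Mapping


class PromptHistory:
    """In-memory prompt history with Up/Down navigation (illustrative only)."""

    def __init__(self) -> None:
        self.entries: List[str] = []
        self.pos = 0  # index into entries; len(entries) means "new, empty line"

    def add(self, prompt: str) -> None:
        """Record a submitted prompt and reset navigation to the newest position."""
        if prompt.strip():
            self.entries.append(prompt)
        self.pos = len(self.entries)

    def up(self) -> str:
        """Up arrow: step back to an older prompt, stopping at the oldest."""
        if self.pos > 0:
            self.pos -= 1
        return self.entries[self.pos] if self.entries else ""

    def down(self) -> str:
        """Down arrow: step forward; past the newest entry is an empty line."""
        if self.pos < len(self.entries):
            self.pos += 1
        return self.entries[self.pos] if self.pos < len(self.entries) else ""


def history_enabled(env: Mapping[str, str]) -> bool:
    """Assumption: any non-empty DOCKER_MODEL_NOHISTORY value disables writing."""
    return not env.get("DOCKER_MODEL_NOHISTORY")
```

Passing the environment in as a mapping (for example os.environ) keeps the check easy to test without touching the real process environment.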

Get Started Today!

These improvements make “docker model run” a more powerful and pleasant tool for all your local AI experiments. Pull a model from Docker Hub and start a chat to experience the new prompt yourself:

$ docker model run ai/gemma3

> Tell me a joke about docker containers.

Why did the Docker container break up with the Linux host?

… Because it said, "I need some space!"

—

Would you like to hear another one?

Help Us Build the Future of Local AI

Docker Model Runner is an open-source project, and we’re building it for the community. These updates are a direct result of our effort to create the best possible experience for developers working with AI.

We invite you to get involved!

Star, fork, and contribute to the project on GitHub: https://github.com/docker/model-runner

Report issues and suggest new features you’d like to see.

Share your feedback with us and the community.

Your contributions help shape the future of local AI development and make powerful tools accessible to everyone. We can’t wait to see what you build!

Learn more

Check out the Docker Model Runner General Availability announcement

Visit our Model Runner GitHub repo! Docker Model Runner is open-source, and we welcome collaboration and contributions from the community!

Get started with Docker Model Runner with a simple hello GenAI application

Source: https://blog.docker.com/feed/