G502 Hero X: The new generation of one of Logitech’s most popular mice

Logitech has changed little about the shape of the G502 Hero. Instead, the new gaming mouse is lighter, more powerful, and more expensive. (Logitech, input device)

Source: Golem

JBL has unveiled the successor to the Tour One. The new ANC headphones include a convenience feature that Sony introduced in its ANC products. (IFA 2022, audio/video)

Source: Golem

To better secure the software supply chain, Google is offering bug bounties for its open-source projects and their dependencies. (Google, applications)

Source: Golem

Drop is once again introducing a keyboard of its own: the Sense75 comes with high-quality components and a suspension mount, but it also costs 350 US dollars. (Keyboard, input device)

Source: Golem

The Asus PG42UQ and PG48UQ, with their 120 Hz panels, are meant to be suitable for games and other uses. They can be connected via DisplayPort. (Asus, display)

Source: Golem

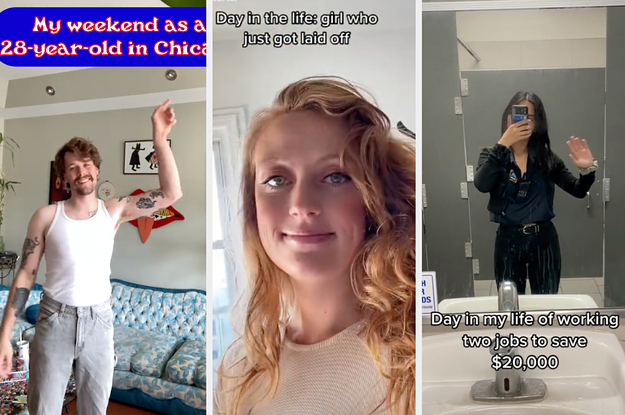

Source: BuzzFeed, “TikTokers Are Showing Their Typical Daily Life, But There’s Nothing Typical About It”

We get it. Life’s to-do list seems bottomless, and maybe building your own website isn’t anywhere near the top of it. And yet your business needs it. Yesterday, if possible. Your audience, your customers, are waiting.

If you’re in need of a professionally designed, budget-friendly, mobile-optimized website to showcase your business, product, or service, consider Built By WordPress.com Express.

1. Click here to get started.
2. Tell us a little bit about your business.
3. Select a design from our catalog of themes (or let us choose the right one for you!).
4. Provide your content, including your logo and any images.

Sit back and relax!

Our in-house experts will build the site for you, all in four business days or less. The cost is $499, plus an additional purchase of the WordPress.com Premium plan.

We’ve built sites for professional bloggers, local service professionals such as roofers, a surfing school, consultants, attorneys, nonprofits, churches, restaurants, even an online sports streaming network. If your primary goal is to tell the world about your project or business, or maybe you have a long wish list of things you’d like your website to do and just need a head start, Built By WordPress.com Express was created just for you.

We can’t wait to delight you.

Source: RedHat Stack

Organizations on a journey to containerize applications and run them on Kubernetes often reach a point where a single cluster no longer meets their needs. For example, you might want to bring your app closer to users in a new regional market. Adding a cluster in the new region does that and has the added benefit of increasing resiliency. Read the multi-cluster use cases overview if you want to learn more about the benefits and tradeoffs involved.

ArgoCD and Fleets offer a great way to ease the management of multi-cluster environments. They let you define your clusters’ state based on labels, which shifts the focus from unique clusters to profiles of clusters that are easily replaced.

This post shows you how to use ArgoCD and Argo Rollouts to automate the state of a Fleet of GKE clusters. The demo covers three potential journeys for a cluster operator:

- Add a new application cluster to the Fleet with zero touch beyond deploying the cluster and giving it a specific label. The new cluster should automatically install a baseline set of configurations for tooling and security, along with any applications tied to the cluster label.
- Deploy a new application to the Fleet that automatically inherits baseline multi-tenant configurations for the team that develops and delivers the application, and applies Kubernetes RBAC policies to that team’s Identity Group.
- Progressively roll out a new version of an application across groups, or waves, of clusters, with manual approval required between waves.

You can find the code used in this demo on GitHub.

Configuring the ArgoCD Fleet Architecture

ArgoCD is a CNCF tool that provides GitOps continuous delivery for Kubernetes. ArgoCD’s UI/UX is one of its most valuable features. To preserve the UI/UX across a Fleet of clusters, use a hub and spoke architecture. In a hub and spoke design, you use a centralized GKE cluster to host ArgoCD (the ArgoCD cluster).
You then add every GKE cluster that hosts applications as a Secret to the ArgoCD namespace in the ArgoCD cluster. You assign specific labels to each application cluster to identify it. ArgoCD config repo objects are created for each Git repository containing the Kubernetes configuration needed for your Fleet. ArgoCD’s sync agent continuously watches the config repo(s) defined in the ArgoCD applications and actuates those changes across the Fleet of application clusters, based on the cluster labels in each cluster’s Secret in the ArgoCD namespace.

Set up the underlying infrastructure

Before you start working with your application clusters, you need some foundational infrastructure. Follow the instructions in Fleet infra setup, which uses a Google-provided demo tool to set up your VPC, regional subnets, Pod and Service IP address ranges, and other underlying infrastructure. These steps also create the centralized ArgoCD cluster that’ll act as your control cluster.

Configure the ArgoCD cluster

With the infrastructure set up, you can configure the centralized ArgoCD cluster with Managed Anthos Service Mesh (ASM), Multi Cluster Ingress (MCI), and other controlling components. Let’s take a moment to talk about why ASM and MCI are so important to your Fleet.

MCI improves performance for all traffic routed into your cluster from external clients by giving you a single anycast IP in front of a global layer 7 load balancer that routes traffic to the GKE cluster in your Fleet that is closest to your clients. MCI also provides resiliency to regional failure. If your application is unreachable in the region closest to a client, the client is routed to the next closest region.

Along with mTLS, layer 7 metrics for your apps, and a few other great features, ASM provides a network that handles Pod-to-Pod traffic across your Fleet of GKE clusters.
This means that calls from your applications to other applications within the cluster can be automatically redirected to another cluster in your Fleet if the local call fails or has no endpoints.

Follow the instructions in Fleet cluster setup. The command runs a script that installs ArgoCD, creates ApplicationSets for application cluster tooling and configuration, and logs you into ArgoCD. It also configures ArgoCD to synchronize with a private repository on GitHub.

When you add a GKE application cluster as a Secret to the ArgoCD namespace and give it the label `env: "multi-cluster-controller"`, the multi-cluster-controller ApplicationSet generates applications based on the subdirectories and files in the multi-cluster-controllers folder. For this demo, the folder contains all of the config necessary to set up Multi Cluster Ingress for the ASM Ingress Gateways that will be installed in each application cluster.

When you add a GKE application cluster as a Secret to the ArgoCD namespace and give it the label `env: "prod"`, the app-clusters-tooling ApplicationSet generates applications for each subfolder in the app-clusters-config folder. For this demo, the app-clusters-config folder contains tooling needed for each application cluster. For example, the argo-rollouts folder contains the Argo Rollouts custom resource definitions that need to be installed across all application clusters.

At this point, you have the following:

- A centralized ArgoCD cluster that syncs to a GitHub repository.
- Multi Cluster Ingress and multi cluster Service objects that sync with the ArgoCD cluster.
- Multi Cluster Ingress and multi cluster Service controllers that configure the Google Cloud Load Balancer for each application cluster.
  The load balancer is only installed when the first application cluster gets added to the Fleet.
- Managed Anthos Service Mesh that watches Istio endpoints and objects across the Fleet and keeps Istio sidecars and Gateway objects updated.

The following diagram summarizes this status:

[Diagram not shown]

Connect an application cluster to the Fleet

With the ArgoCD control cluster set up, you can create and promote new clusters to the Fleet. These clusters run your applications. In the previous step, you configured multi-cluster networking with Multi Cluster Ingress and Anthos Service Mesh. Adding a new cluster to the ArgoCD cluster as a Secret with the label `env=prod` ensures that the new cluster automatically gets the baseline tooling it needs, such as Anthos Service Mesh Gateways.

To add any new cluster to ArgoCD, you add a Secret to the ArgoCD namespace in the control cluster. You can do this using the following methods:

- The `argocd cluster add` command, which automatically inserts a bearer token into the Secret that grants the control cluster `cluster-admin` permissions on the new application cluster.
- Connect Gateway and Fleet Workload Identity, which let you construct a Secret that has custom labels, such as labels to tell your ApplicationSets what to do, and configure ArgoCD to use a Google OAuth2 token to make authenticated API calls to the GKE control plane.

When you add a new cluster to ArgoCD, you can also mark it as being part of a specific rollout wave, which you can leverage when you start progressive rollouts later in the demo.

The following example Secret manifest shows a Connect Gateway authentication configuration and labels such as `env: prod` and `wave`:

[Secret manifest not shown]

For the demo, you can use a Google-provided script to add an application cluster to your ArgoCD configuration.
For instructions, refer to Promoting Application Clusters to the Fleet.

You can use the ArgoCD web interface to see the progress of the automated tooling setup in the clusters, such as in the following example image:

[Screenshot not shown]

Add a new team application and a new cluster

At this point, you have an application cluster in the Fleet that’s ready to serve apps. To deploy an app to the cluster, you create the application configurations and push them to the ArgoCD config repository. ArgoCD notices the push and automatically deploys and configures the application to start serving traffic through the Anthos Service Mesh Gateway.

For this demo, you can run a Google-provided script that creates a new application based on a template, in a new ArgoCD Team, `team-2`. For instructions, refer to Creating a new app from the app template. Creating the new application also configures an ApplicationSet for each progressive rollout wave, synced with a git branch for that wave.

Because that application cluster is labeled as wave one and is the only application cluster deployed so far, you should see only one Argo application for the app in the UI, similar to this:

[Screenshot not shown]

If you `curl` the endpoint, the app responds with some metadata, including the name of the Google Cloud zone in which it’s running:

[Sample output not shown]

You can also add a new application cluster in a different Google Cloud zone for higher availability. To do so, you create the cluster in the same VPC and add a new ArgoCD Secret with labels that match the existing ApplicationSets.

For this demo, you can use a Google-provided script to do the following:

- Add a new cluster in a different zone.
- Label the new cluster for wave two (the existing application cluster is labeled for wave one).
- Add the application-specific labels so that ArgoCD installs the baseline tooling.
- Deploy another instance of the sample application in that cluster.

For instructions, refer to Add another application cluster to the Fleet.
After you run the script, you can check the ArgoCD web interface for the new cluster and application instance. The interface is similar to this:

[Screenshot not shown]

If you `curl` the application endpoint, the GKE cluster with the lowest-latency path from the source of the curl serves the response. For example, curling from a Compute Engine instance in `us-west1` routes you to the `gke-std-west02` cluster.

You can experiment with the latency-based routing by accessing the endpoint from machines in different geographical locations.

At this point in the demo, you have the following:

- One application cluster labeled for wave one
- One application cluster labeled for wave two
- A single Team with an app deployed on both application clusters
- A control cluster with ArgoCD
- A backing configuration repository for you to push new changes

Progressively roll out apps across the Fleet

Argo Rollouts resources are similar to Kubernetes Deployments, with some additional fields to control the rollout. You can use a rollout to progressively deploy new versions of apps across the Fleet, manually approving the rollout’s wave-based progress by merging the new version from the `wave-1` git branch to the `wave-2` git branch, and then into `main`.

For this demo, you can use Google-provided scripts that do the following:

- Add a new application to both application clusters.
- Release a new application image version to the wave one cluster.
- Test the rolled out version for errors by gradually serving traffic from Pods with the new application image.
- Promote the rolled out version to the wave two cluster.
- Test the rolled out version.
- Promote the rolled out version as the new stable version in `main`.

For instructions, refer to Rolling out a new version of an app.

The following sample shows the fields that are unique to Argo Rollouts:

[Sample not shown]

The `strategy` field defines the rollout strategy to use. In this case, the strategy is canary, with two steps in the rollout.
The application cluster rollout controller checks for image changes to the rollout object and creates a new replica set with the updated image tag when you add a new image. The rollout controller then adjusts the Istio virtual service weight so that 20% of traffic to that cluster is routed to Pods that use the new image.

Each step runs for 4 minutes and calls an analysis template before moving on to the next step. The following example analysis template uses the Prometheus provider to run a query to check the success rate of the canary version of the rollout:

[Analysis template not shown]

If the success rate is 95% or greater, the rollout moves on to the next step. If the success rate is less than 95%, the rollout controller rolls the change back by setting the Istio virtual service weight to 100% for the Pods running the stable version of the image.

After all the analysis steps are completed, the rollout controller labels the new application’s deployment as stable, sets the Istio virtual service weight back to 100% for the stable version, and deletes the previous image version deployment.

Summary

In this post, you have learned how ArgoCD and Argo Rollouts can be used to automate the state of a Fleet of GKE clusters. This automation abstracts away the unique characteristics of any single GKE cluster and lets you promote and remove clusters as your needs change over time.
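The analysis gate described in the rollout section can be illustrated with a small Python sketch. This is not the Argo Rollouts controller or the demo’s analysis template: `run_canary` and its parameters are hypothetical names, and the success rates are passed in directly instead of being queried from Prometheus. Only the 95% threshold and the roll-back-to-stable behavior come from the text.

```python
from typing import List, Tuple

SUCCESS_THRESHOLD = 0.95  # from the text: 95% or greater passes a step


def run_canary(step_success_rates: List[float],
               threshold: float = SUCCESS_THRESHOLD) -> Tuple[str, int]:
    """Walk the canary steps in order (hypothetical helper).

    Returns ("promoted", n) when all n steps pass their analysis, or
    ("rolled_back", i) where i is the number of steps that passed before
    the failing one. A rollback corresponds to sending 100% of traffic
    back to the Pods running the stable image.
    """
    for passed, rate in enumerate(step_success_rates):
        if rate < threshold:
            return "rolled_back", passed
    return "promoted", len(step_success_rates)


if __name__ == "__main__":
    # Two canary steps, as in the demo's strategy.
    print(run_canary([0.99, 0.97]))
    print(run_canary([0.99, 0.90]))
```

Keeping the gate as a pure function of the measured success rates makes the promote-or-roll-back decision easy to reason about separately from how the metrics are collected.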
Here is a list of documents that will help you learn more about the services used to build this demo:

- Argo ApplicationSet controller: improved multi-cluster and multi-tenant support.
- Argo Rollouts: Kubernetes controller that provides advanced rollout capabilities such as blue-green and experimentation.
- Multi Cluster Ingress: map multiple GKE clusters to a single Google Cloud Load Balancer, with one cluster as the control point for the Ingress controller.
- Managed Anthos Service Mesh: centralized Google-managed control plane with features that spread your app across multiple clusters in the Fleet for high availability.
- Fleet Workload Identity: allow apps anywhere in your Fleet’s clusters that use Kubernetes service accounts to authenticate to Google Cloud APIs as IAM service accounts without needing to manage service account keys and other long-lived credentials.
- Connect Gateway: use the Google identity provider to authenticate to your cluster without needing VPNs, VPC Peering, or SSH tunnels.
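As a rough illustration of the label-driven model this post describes, the following Python sketch builds cluster Secret manifests as plain dictionaries and then selects clusters by label, the way an ApplicationSet targets `env: prod` or a rollout wave. It makes several assumptions: the function names, the Secret naming scheme, the `argocd` namespace name, and the placeholder server URLs are all hypothetical, and the `execProviderConfig` shape should be checked against the ArgoCD declarative-setup documentation before use. It is not the manifest or script from the demo repository.

```python
import json
from typing import Dict, List


def cluster_secret(name: str, server: str, labels: Dict[str, str]) -> dict:
    """Build an ArgoCD cluster Secret manifest as a plain dict (sketch)."""
    # Assumption: GKE authentication through ArgoCD's exec provider.
    config = {
        "execProviderConfig": {
            "command": "argocd-k8s-auth",
            "args": ["gcp"],
            "apiVersion": "client.authentication.k8s.io/v1beta1",
        }
    }
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {
            "name": f"{name}-cluster-secret",  # hypothetical naming scheme
            "namespace": "argocd",  # assumed name of the ArgoCD namespace
            # This label marks the Secret as a cluster definition for ArgoCD.
            "labels": {"argocd.argoproj.io/secret-type": "cluster", **labels},
        },
        "type": "Opaque",
        "stringData": {
            "name": name,
            "server": server,
            "config": json.dumps(config),
        },
    }


def select_clusters(secrets: List[dict], match_labels: Dict[str, str]) -> List[str]:
    """Return names of clusters whose Secret carries every requested label."""
    selected = []
    for secret in secrets:
        labels = secret["metadata"]["labels"]
        if all(labels.get(key) == value for key, value in match_labels.items()):
            selected.append(secret["stringData"]["name"])
    return selected


if __name__ == "__main__":
    # Placeholder cluster names and URLs, not real endpoints.
    fleet = [
        cluster_secret("cluster-a", "https://example.invalid/a",
                       {"env": "prod", "wave": "one"}),
        cluster_secret("cluster-b", "https://example.invalid/b",
                       {"env": "prod", "wave": "two"}),
    ]
    print(select_clusters(fleet, {"env": "prod"}))
    print(select_clusters(fleet, {"env": "prod", "wave": "two"}))
```

Because selection depends only on labels, replacing a cluster amounts to registering a new Secret with the same labels, which is the property the post relies on when it treats clusters as replaceable profiles.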

Source: Google Cloud Platform

Apigee is Google Cloud’s API management platform that enables organizations to build, operate, manage and monetize their APIs. Customers from industries around the world trust Apigee to build and scale their API programs.

While some organizations operate with mature API-first strategies, others might still be working on a modernization strategy. Even within an organization, different teams often end up with diverse use cases and choices for API management. In our conversations with customers, we increasingly hear the need to align our capabilities and pricing with such varied workloads.

We’re excited to introduce a Pay-as-you-go pricing model that lets customers unlock Apigee’s API management capabilities while retaining the flexibility to manage their own costs. Starting today, customers have the option to use Apigee by paying only for what they use. This new pricing model complements the existing Subscription plans and the option to evaluate Apigee for free.

Start small, but powerful with Pay-as-you-go pricing

The new Pay-as-you-go pricing model offers flexibility for organizations to:

- Unlock the value of Apigee with no upfront commitment: Get up and running quickly without any upfront purchasing or commitment.
- Maintain flexibility and control in costs: Adapt to ever-changing needs while keeping costs low. You can continue to scale automatically with Pay-as-you-go or switch to Subscription tiers based on your usage.
- Provide freedom to experiment: Every API management use case is different, and with Pay-as-you-go you can experiment with new use cases and unlock the value Apigee provides without a long-term commitment.

Pay-as-you-go pricing works just like the rest of your Google Cloud bill, allowing you to get started without any license commitment or upfront purchasing.
As part of the Pay-as-you-go pricing model, you are charged only for your consumption of the following:

- Apigee gateway nodes: You are charged for your API traffic based on the number of Apigee gateway nodes (a unit of environment that processes API traffic) used per minute. Any nodes that you provision are charged every minute and billed for a minimum of one minute.
- API analytics: You are charged for the total number of API requests analyzed per month. API requests, whether they are successful or not, are processed by Apigee analytics. Analytics data is preserved for three months.
- Networking usage: You are charged for networking (such as IP addresses, network egress, and forwarding rules) based on usage.

When is Pay-as-you-go pricing right for me?

Apigee offers three different pricing models:

- Evaluation plan to access Apigee’s capabilities at no cost for 60 days
- Subscription plans across Standard, Enterprise, or Enterprise Plus, based on your predictable but high-volume API needs
- Pay-as-you-go without any startup costs

Subscription plans are ideal for use cases with predictable workloads for a given time period, whereas Pay-as-you-go pricing is ideal if you are starting small with a high-value workload. Here are a few use cases where organizations would choose Pay-as-you-go:

- Establish usage patterns before choosing a Subscription model
- Evolve their API program by starting with high-value, low-volume API use cases
- Manage and protect applications built on Google Cloud infrastructure
- Migrate or modernize services gradually without disruption

Next steps

Organizations increasingly rely on APIs to build new applications, adopt modern architectures, or create new experiences.
In such transformation journeys, Apigee’s Pay-as-you-go pricing gives organizations the flexibility to start small and scale seamlessly with their API management needs.

To get started with Apigee’s Pay-as-you-go pricing, go to the console or try it for free here. Check out our documentation and pricing calculator for further details on Apigee’s Pay-as-you-go pricing for API management. For comparison and other information, take a look at our pricing page.
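To make the three billing components concrete, here is a minimal Python sketch of how a monthly estimate could be put together. All function and parameter names are hypothetical, and the rates are caller-supplied placeholders, not actual Apigee prices. The sketch also assumes that a partially used minute is rounded up to a full minute; the text only states per-minute charging with a one-minute minimum, so check the pricing documentation for the exact rule.

```python
import math
from typing import List


def billable_node_minutes(node_seconds: List[float]) -> int:
    """Total billable minutes across provisioned gateway nodes.

    Each entry is how long one node was provisioned, in seconds.
    Assumption: partial minutes round up; the minimum is one minute.
    """
    return sum(max(1, math.ceil(seconds / 60)) for seconds in node_seconds)


def estimate_monthly_cost(node_seconds: List[float],
                          api_requests: int,
                          rate_per_node_minute: float,
                          rate_per_million_requests: float,
                          networking_cost: float = 0.0) -> float:
    """Sum the three Pay-as-you-go components (placeholder rates)."""
    node_cost = billable_node_minutes(node_seconds) * rate_per_node_minute
    analytics_cost = api_requests / 1_000_000 * rate_per_million_requests
    return node_cost + analytics_cost + networking_cost


if __name__ == "__main__":
    # Placeholder inputs: three nodes, two million analyzed requests.
    usage = [30, 60, 61]
    print(billable_node_minutes(usage))
    print(estimate_monthly_cost(usage, 2_000_000, 0.5, 10.0, 3.0))
```

Passing the rates in as arguments keeps the sketch independent of any price list, so the same structure can be reused with the figures from the official pricing calculator.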

Source: Google Cloud Platform

Welcome to this month’s Cloud CISO Perspectives. This month, we’re focusing on the importance of vulnerability reward programs, also known as bug bounties. These programs, which reward independent security researchers for reporting zero-day vulnerabilities to the software vendor, first started appearing around 1995 and have since evolved into an integral part of the security landscape. Today, they can help organizations build more secure products and services. As I explain below, vulnerability reward programs also play a key role in digital transformation.

As with all Cloud CISO Perspectives, the contents of this newsletter will continue to be posted to the Google Cloud blog. If you’re reading this on the website and you’d like to receive the email version, you can subscribe here.

Why vulnerability rewards programs are vital to cloud services

I’d like to revisit a Google Cloud highlight from June that I believe sheds some light on an important aspect of how organizations build secure products and build security into their business systems. On June 3, we announced the winners of the 2021 Google Cloud Vulnerability Rewards Program prize. This is the third year that Google Cloud has participated in the VRP. The top six prize winners scored a combined $313,337 for the vulnerabilities they found. An integral part of the competition is that competitors publish a public write-up of their vulnerability reports, which we hope encourages even more people to participate in open research into cloud security. (You can learn more about Google’s Vulnerability Rewards Program here.)

Over the life of the program, we’ve increased the awards, a measure of the program’s success. And we’ve also increased the prize values in our companion Kubernetes Capture the Flag VRP. These increases benefit the research community, of course, and help us secure our products.
But they also help develop a mature, resilient security ecosystem in which our internal security teams are indelibly connected to external, independent security researchers. This conclusion has been borne out by my own experience with VRPs, and also by independent analysis. Researchers at the University of Houston and the Technical University of Munich concluded in a study of Chromium vulnerabilities published in 2021 that the diverse backgrounds and interests of external bug-hunters contributed to their ability to find different kinds of vulnerabilities. Specifically, they tracked down bugs in Chromium Stable releases and in user interface components. The researchers wrote that “external bug hunters provide security benefits by complementing internal security teams with diverse expertise and outside perspective, discovering different types of vulnerabilities.”

Although organizations have used VRPs since the 1990s to help fix their software, and their use continues to grow in popularity, they still require forethought and planning. At the very least, an organization should have a dedicated, publicly available and internally managed email address for researchers to submit their reports and claims. More than anything else, researchers want to be able to communicate their security concerns to somebody who will take them seriously.

That said, incoming vulnerability reports can set off klaxons if the preparations have not been put in place to properly manage them.
A more mature VRP will triage incoming reports and have more rigorous machinery in place, which includes determining who will receive the reports, how the interactions with the researcher who filed the report will be handled, which engineering teams will be notified and involved, how the report will be verified as accurate and authentic, and how customers will be supported.

There’s an opportunity for boards and organization leaders to take a more active role in kickstarting and guiding this process if their organization doesn’t have a VRP in place yet. Part of what makes VRPs so important is that they bring benefits beyond the obvious. They can help teams learn more, they can strengthen ties to the researcher community, they can provide feedback on updating internal processes, and they can create pathways to improve security and development team structures.

Ultimately, the business case for a VRP is simple. No matter how great you are at security, you are still going to have some vulnerabilities. You want those discovered as quickly as possible by people who will be incentivized to tell you. If you don’t, you run increasing risks that adversaries will either discover those vulnerabilities or acquire them from an illicit marketplace. As more organizations undergo their digital transformations, the need for VRPs will only increase. The web of interconnectedness between a company’s systems and the systems of its suppliers, partners, and customers will force them to expand the scope of their security concerns, so the most responsible behavior is for organizations to encourage their suppliers to adopt VRPs.

Google Cloud Security Talks

Security Talks is our ongoing program to bring together experts from the Google Cloud security team, including the Google Cybersecurity Action Team and Office of the CISO, and the industry at large to share information on our latest security products, innovations, and best practices. Our latest Security Talks, on Aug. 31, will focus on practitioner needs and how to use our products. Sign up here.

Google Cybersecurity Action Team highlights

Here are the latest updates, products, services and resources from our security teams this month:

Security

- How Google Cloud blocked the largest Layer 7 DDoS attack to date: On June 1, a Google Cloud Armor customer was targeted with a series of HTTPS DDoS attacks which peaked at 46 million requests per second. This is the largest Layer 7 DDoS reported to date, at least 76% larger than the previously reported record. Here’s how we stopped it.
- “Deception at scale,” VirusTotal’s latest report: VirusTotal’s most recent report on the state of malware explores how malicious hackers change up their malware techniques to bypass defenses and make social engineering attacks more effective. Read more.
- First-to-market Virtual Machine Threat Detection now generally available: Our unique Virtual Machine Threat Detection (VMTD) in Security Command Center is now generally available for all Google Cloud customers. Launched six months ago in public preview, VMTD is invisible to adversaries and draws on expertise from Google’s Threat Analysis Group and Google Cloud Threat Intelligence. Read more.
- How autonomic data security can help define cloud’s future: As data usage has undergone drastic expansion and changes in the past five years, so have your business needs for data. Google Cloud is positioned uniquely to define and lead the effort to adopt a modern approach to data security. We contend that the optimal way forward is with autonomic data security. Here’s why.
- How CISOs need to adapt their mental models for cloud security: Successful cloud security transformations can help better prepare CISOs for threats today, tomorrow, and beyond, but they require more than just a blueprint and a set of projects.
  CISOs and cybersecurity team leaders need to envision a new set of mental models for thinking about security, one that will require you to map your current security knowledge to cloud realities. Here’s why.
- How to help ensure smooth shift handoffs in security operations: Without proper planning, SOC shift handoffs can create knowledge gaps between team members. Fortunately, those gaps are not inevitable. Here are three ways to avoid them.
- Five must-know security and compliance features in Cloud Logging: As enterprise and public sector cloud adoption continues to accelerate, having an accurate picture of who did what in your cloud environment is important for security and compliance purposes. Here are five must-know Cloud Logging security and compliance features (including three new ones launched this year) that can help customers improve their security audits. Read more.
- Google Cloud Certificate Authority Service now supports on-premises Windows workloads: Organizations that have adopted cloud-based CAs increasingly want to extend the capabilities and value of their CA to their on-premises environments. They can now deploy a private CA through Google Cloud CAS along with a partner solution that simplifies, manages, and automates the digital certificate operations in on-prem use cases such as issuing certificates to routers, printers, and users. Read more.
- Easier de-identification of Cloud Storage data: Many organizations require effective processes and techniques for removing or obfuscating certain sensitive information in the data that they store, a process known as “de-identification.” We’ve now released a new action for Cloud Storage inspection jobs that makes this process easier. Read more.
- Introducing Google Cloud and Google Workspace support for multiple identity providers with Single Sign-On: Google has long provided customers with a choice of digital identity providers. For more than a decade, we have supported SSO via the SAML protocol.
  Currently, Google Cloud customers can enable a single identity provider for their users with the SAML 2.0 protocol. This release significantly enhances our SSO capabilities by supporting multiple SAML-based identity providers instead of just one. Read more.
- Curated detections come to Chronicle SecOps Suite: A critical component of any security operations team’s job is to deliver high-fidelity detections of potential threats across the breadth of adversary tactics. Today, we are putting the power of Google’s intelligence in the hands of security operations teams with high-quality, actionable, curated detections built by our Google Cloud Threat Intelligence team. Read more.
- Google Cloud’s Managed Microsoft Active Directory gets on-demand backup, schema extension support: We’ve added schema extension support and on-demand backups to our Managed Microsoft Active Directory to make it easier for customers to integrate with applications that rely on AD. Read more.
- Securing apps using Anthos Service Mesh: Our Anthos Service Mesh can help maintain a high level of security across numerous apps and services with minimal operational overhead, all while providing service owners granular traffic control. Here’s how it works.
- Our Security Voices blogging initiative highlights blogs from a diverse group of Google Cloud’s security professionals. Here, Jaffa Edwards explains how preventive security controls, also known as security “guardrails,” can help developers prevent misconfigurations before they can be exploited. Read more.

Industry updates

- How Vulnerability Exploitability eXchanges can help healthcare prioritize cybersecurity risk: In our latest blog on healthcare and cybersecurity resiliency, we discuss how a VEX can help bolster SBOM and SLSA with vital information for making risk-assessment decisions in healthcare organizations and beyond. Read more.
- MITRE and Google Cloud collaborate on cloud analytics: How can the cybersecurity industry improve its analysis of the already-tremendous and growing volumes of security data in order to better stop the dynamic threats we face? We’re excited to announce the release of the Cloud Analytics project by the MITRE Engenuity Center for Threat-Informed Defense, sponsored by Google Cloud and several other industry collaborators. Read more.

Compliance & Controls

- Using data advocacy to close the consumer privacy trust gap: As consumer data privacy regulations tighten and the end of third-party cookies looms, organizations of all sizes may be looking to carve a path toward consent-positive, privacy-centric ways of working. Organizations must begin to treat consumer data privacy as a pillar of their business. One way to do this is by implementing a cross-functional data advocacy panel. Read more.
- How to avoid cloud misconfigurations and move towards continuous compliance: Modern application security tools should be fully automated, largely invisible to developers, and minimize friction within the DevOps pipeline. Infrastructure continuous compliance can be achieved thanks to Google Cloud’s open and extensible architecture, which uses Security Command Center and open source solutions. Here’s how.
- Helping European education providers navigate privacy assessments: Navigating complex DPIA requirements under GDPR can be challenging for many of our customers, and while only customers, as controllers, can complete DPIAs, we are here to help meet these compliance obligations with our Cloud DPIA Resource Center. Read more.

Tips for security teams to share

As I noted in July’s newsletter, we published four helpful guides that month on Google Cloud’s security architecture. These explainers by our lead developer advocate Priyanka Vergadia are ready-made to share with IT colleagues, and come with colorful illustrations that break down how our security works. This month, we added two more.
- Make the most of your cloud deployment with Active Assist: This guide walks you through our Active Assist feature, which can help streamline information from your workloads’ usage, logs, and resource configuration, and then uses machine learning and business logic to help optimize deployments in exactly those areas that make the cloud compelling: cost, sustainability, performance, reliability, manageability, and security. Read more.
- Zero Trust and BeyondCorp: In this primer, we focus on how the need to mitigate the security risks created by implicitly trusting any part of a system has led to the rise of the Zero Trust security model. Read more.

Google Cloud Security Podcasts

In February 2021, we launched a new weekly podcast focusing on Cloud Security. Hosts Anton Chuvakin and Timothy Peacock chat with cybersecurity experts about the most important and challenging topics facing the industry today. This month, they discussed:

- Demystifying data sovereignty at Google Cloud: What data sovereignty is, why it matters, and how it will play a growing role in cloud technology, with Google’s C.J. Johnson. Listen here.
- A CISO walks into a cloud: Frustrations, successes, lessons, and risk, with David Stone, staff consultant at our Office of the CISO. Listen here.
- How to modernize data security with the Autonomic Data Security approach, with John Stone, staff consultant at our Office of the CISO. Listen here.
- What changes and what doesn’t when SOC meets cloud, with Gorka Sadowski, chief strategy officer at Exabeam. Listen here.
- Explore the magic (and operational realities) of SOAR, with Cyrus Robinson, SOC Director and IR Team lead at Ingalls Information Security. Listen here.

To have our Cloud CISO Perspectives post delivered every month to your inbox, sign up for our newsletter.
We’ll be back next month with more security-related updates.

Related article: Cloud CISO Perspectives: July 2022. Google Cloud CISO Phil Venables shares his thoughts on the important role and challenges of including cybersecurity in the boardroom, alo…

Source: Google Cloud Platform