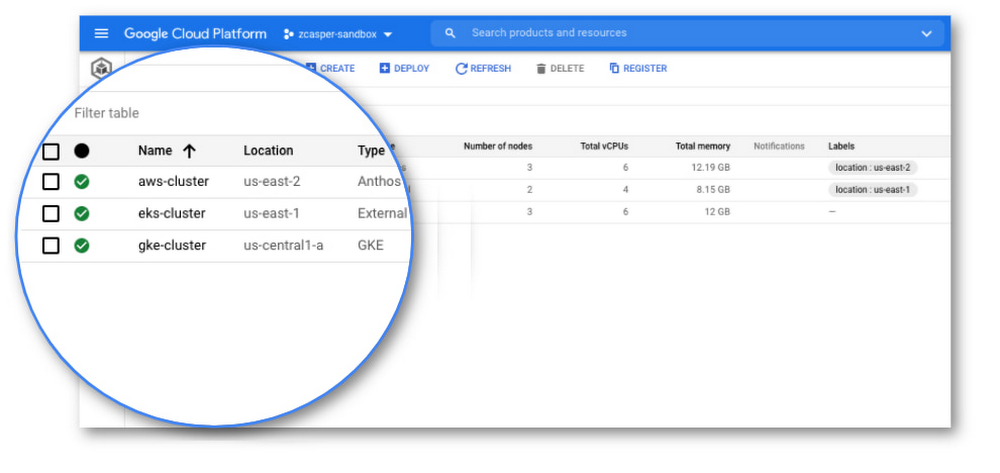

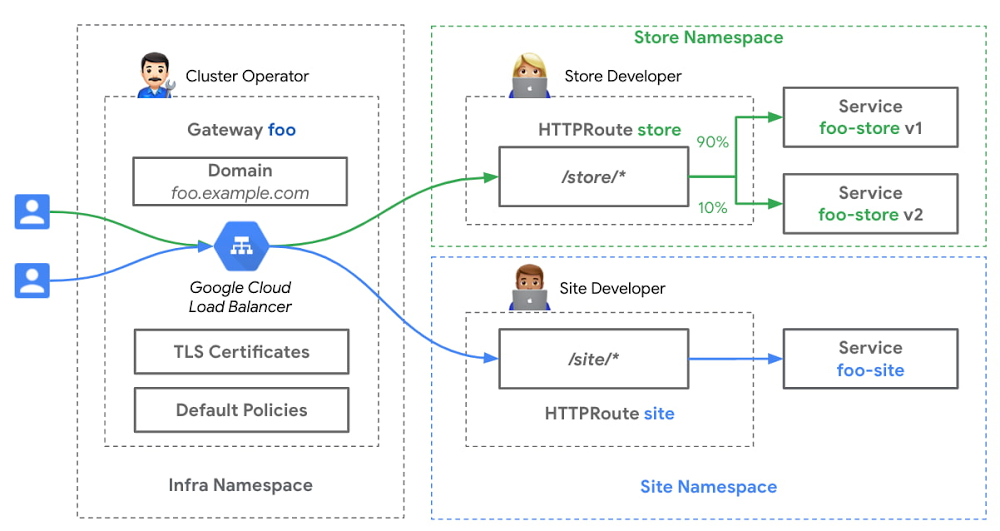

It’s that time of the year again: time to get excited about all things cloud-native, as we gear up to connect, share and learn from fellow developers and technologists around the world at KubeCon EU 2021 next week. Cloud-native technologies are mainstream and our creation, Kubernetes, is core to building and operating modern software. We’re working hard to create industry standards and services that make Kubernetes easy for everyone to use. Let’s take a look at what’s new in the world of Kubernetes at Google Cloud since the last KubeCon and how we’re making it easier for everyone to use and benefit from this foundational technology.

1. Run production-ready k8s like a pro

Google Kubernetes Engine (GKE), our managed Kubernetes service, has always been about making it easy for you to run your containerized applications, while still giving you the control you need. With GKE Autopilot, a new mode of operation for GKE, you have an automated Kubernetes experience that optimizes your clusters for production, reduces the operational cost of managing clusters, and delivers higher workload availability.

“Reducing the complexity while getting the most out of Kubernetes is key for us and GKE Autopilot does exactly that!” – STRABAG BRVZ

Customers who want advanced configuration flexibility continue to use GKE in the standard mode of operation. As customers scale up their production environments, application requirements for availability, reducing blast radius, or distributing different types of services have grown to necessitate deployment across multiple clusters. With the recently introduced GKE multi-cluster services, the Kubernetes Service object can now span multiple clusters in a zone, across multiple zones, or across multiple regions, with minimal configuration or overhead for managing the interconnection between clusters.
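To make the "minimal configuration" point concrete, here is a minimal sketch of what exporting a Service to other clusters can look like. It assumes the `ServiceExport` resource in the `net.gke.io/v1` API group and the `clusterset.local` DNS suffix as described in the GKE multi-cluster Services documentation; the Service name `checkout` and namespace `shop` are hypothetical, and the helper functions are illustrative rather than part of any Google library.

```python
import json


def service_export(name: str, namespace: str) -> dict:
    """Build a ServiceExport manifest for an existing Service.

    Assumption: the export must carry the same name and namespace as the
    Service it exports, as described in the GKE multi-cluster Services docs.
    """
    return {
        "apiVersion": "net.gke.io/v1",
        "kind": "ServiceExport",
        "metadata": {"name": name, "namespace": namespace},
    }


def clusterset_dns(name: str, namespace: str) -> str:
    """Return the DNS name clients in other clusters would use for the Service."""
    return f"{name}.{namespace}.svc.clusterset.local"


if __name__ == "__main__":
    # Hypothetical Service "checkout" in namespace "shop".
    # kubectl accepts JSON manifests, so this output could be applied as-is.
    print(json.dumps(service_export("checkout", "shop"), indent=2))
    print(clusterset_dns("checkout", "shop"))
```

Once a manifest like this is applied in the exporting cluster, workloads in the other clusters of the fleet would address the Service by its `clusterset.local` name instead of a cluster-specific one.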
GKE multi-cluster services enable you to focus on the needs of your application while GKE manages your multi-cluster topology.

“We have been running all our microservices in a single multi-tenant GKE cluster. For our next-generation Kubernetes infrastructure, we are designing multi-region homogeneous and heterogeneous clusters. Seamless inter-cluster east-west communication is a prerequisite and multi-cluster Services promise to deliver. Developers will not need to think about where the service is running. We are very excited at the prospect.” – Mercari

2. Create and scale CI/CD pipelines for GKE

Scaling continuous integration and continuous delivery (CI/CD) pipelines can be a time-consuming process, involving multiple manual steps: setting up the CI/CD server, ensuring configuration files are updated, and deploying images with the correct credentials to a Kubernetes cluster. Cloud Build, our serverless CI/CD platform, comes with a variety of features to make the process as easy for you as possible. For starters, Cloud Build natively supports buildpacks, which let you build containers without a Dockerfile. As a part of your build steps, you can bring your own container images or choose from pre-built images to save time. Additionally, since Cloud Build is serverless, there is no need to pre-provision servers or pay in advance for capacity. And with its built-in vulnerability scanning, you can perform deep security scans within the CI/CD pipeline to ensure only trusted artifacts make it to production. Finally, Cloud Build lets you create continuous delivery pipelines for GKE in a few clicks. These pipelines implement out-of-the-box best practices that we’ve developed at Google for handling Kubernetes deployments, further reducing the overhead of setting up and managing pipelines.

“Before moving to Google Cloud, the idea that we could take a customer’s feature request and put it into production in less than 24 hours was man-on-the-moon stuff.
Now, we do it all the time.” – Craig Van Arendonk, Director of IT – Customer and Sales, Gordon Food Service

3. Manage security and compliance on k8s with ease

Upstream Kubernetes, the open-source version that you get from a GitHub repository, isn’t a locked-down environment out of the box. Rather than solve for security, it’s designed to be very extensible, solving for flexibility and usability.

As such, Kubernetes security relies on extension points to integrate with other systems such as identity and authorization. And that’s okay! It means Kubernetes can fit lots of use cases. But it also means that you can’t assume that the defaults for upstream Kubernetes are correct for you. If you want to deploy Kubernetes with a “secure by default” mindset, there are several core components to keep in mind. As we discuss in the fundamentals of container security whitepaper, here are some of the GKE networking-related capabilities which help you make Kubernetes more secure:

Secure Pod networking – With Dataplane V2 (in GA), we enable Kubernetes Network Policy when you create a cluster. In addition, Network Policy logging (in GA) provides visibility into your cluster’s network so that you can see who is talking to whom.

Secure Service networking – The GKE Gateway controller (in preview) offers centralized control and security without sacrificing flexibility and developer autonomy, all through standard and declarative Kubernetes interfaces.

“Implementing Network Policy in k8s can be a daunting task, fraught with guess work and trial and error, as you work to understand how your applications behave on the wire. Additionally, many compliance and regulatory frameworks require evidence of a defensive posture in the form of control configuration and logging of violations. With GKE Network Policy Logging you have the ability to quickly isolate and resolve issues, as well as, providing the evidence required during audits.
This greatly simplifies the implementation and operation of enforcing Network Policies.” – Credit Karma

4. Get an integrated view of alerts, SLOs, metrics, and logs

Deep visibility into both applications and infrastructure is essential for troubleshooting and optimizing your production offerings. With GKE, when you deploy your app, it gets monitored automatically. GKE clusters come with pre-installed agents that collect telemetry data and automatically route it to observability services such as Cloud Logging and Cloud Monitoring. These services are integrated with one another as well as with GKE, so you get better insights and can act on them faster.

For example, the GKE dashboard offers a summary of metrics for clusters, namespaces, nodes, workloads, services, pods, and containers, as well as an integrated view of Kubernetes events and alerts across all of those entities. From alerts or dashboards you can go directly to the logs for a given resource, or to the resource itself, without having to navigate through unconnected tools from multiple vendors.

Likewise, since telemetry data is automatically routed to Google Cloud’s observability suite, you can immediately take advantage of tools based on Google’s Site Reliability Engineering (SRE) principles. For example, SLO Monitoring helps you drive greater accountability across your development and operations teams by creating error budgets and monitoring your service against those objectives. Ongoing investments in integrating OpenTelemetry will improve both platform and application telemetry collection.

“[With GKE] There’s zero setup required and the integration works across the board to find errors…. Having the direct integration with the cloud-native aspects gets us the information in a timely fashion.” – Erik Rogneby, Director of Platform Engineering, Gannett Media Corp.

5. Manage and run Kubernetes anywhere with Anthos

You can take advantage of GKE capabilities in your own datacenter or on other clouds through Anthos. With Anthos you can bring GKE, along with other key frameworks and services, to any infrastructure, while managing centrally from Google Cloud.

Creating secure environments within multiple cloud platforms is a challenge. With each platform having different security capabilities, identity systems, and risks, supporting an additional cloud platform often means doubling your security efforts. With Anthos, many of these challenges are solved. Rather than relying on your application or cloud infrastructure teams to build tools to operate across multiple platforms, Anthos provides these capabilities out-of-the-box. Anthos provides the core capabilities for deploying and operating infrastructure and services across Google Cloud, AWS, and soon Azure. We recently released Anthos 1.7, delivering an array of capabilities that make multicloud more accessible and sustainable.

Take a look at how our latest Anthos release tracks to a successful multicloud deployment.

“Using Anthos, we’ll be able to speed up our development and deliver new services faster” – PKO Bank Polski

6. ML at scale made simple

GKE brings flexibility, autoscaling, and management simplicity, while GPUs bring superior processing power. With the launch of support for multi-instance NVIDIA GPUs on GKE (in Preview), you can now partition a single NVIDIA A100 GPU into up to seven instances that each have their own high-bandwidth memory, cache and compute cores. Each instance can be allocated to one container, supporting up to seven containers per NVIDIA A100 GPU.
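The capacity arithmetic implied by that limit can be sketched in a few lines. This is an illustrative calculation only: the limit of seven instances per A100 and the one-container-per-instance rule come from the text above, while the function names and the idea of choosing a uniform partition count per GPU are assumptions made for the example.

```python
# Upper bound stated above: one A100 can be split into at most seven instances.
MAX_INSTANCES_PER_A100 = 7


def _check_partitions(instances_per_gpu: int) -> None:
    """Reject partition counts outside the 1..7 range."""
    if not 1 <= instances_per_gpu <= MAX_INSTANCES_PER_A100:
        raise ValueError("instances_per_gpu must be between 1 and 7")


def max_containers(gpu_count: int,
                   instances_per_gpu: int = MAX_INSTANCES_PER_A100) -> int:
    """Containers that fit when each GPU instance serves exactly one container."""
    if gpu_count < 0:
        raise ValueError("gpu_count must be non-negative")
    _check_partitions(instances_per_gpu)
    return gpu_count * instances_per_gpu


def gpus_needed(container_count: int,
                instances_per_gpu: int = MAX_INSTANCES_PER_A100) -> int:
    """Smallest number of A100s that can host the given number of containers."""
    if container_count < 0:
        raise ValueError("container_count must be non-negative")
    _check_partitions(instances_per_gpu)
    # Ceiling division without floating point.
    return -(-container_count // instances_per_gpu)


if __name__ == "__main__":
    print(max_containers(2))   # two fully partitioned A100s
    print(gpus_needed(15))     # one more container than two GPUs can hold
```

In practice the partition count trades instance size against density: fewer, larger instances give each container more memory and compute, so the right value depends on the model being served.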
Further, multi-instance GPUs provide hardware isolation between containers, and consistent and predictable QoS for all containers running on the GPU.

“By reducing the number of configuration hoops one has to jump through to attach a GPU to a resource, Google Cloud and NVIDIA have taken a needed leap to lower the barrier to deploying machine learning at scale. Alongside reduced configuration complexity, NVIDIA’s sheer GPU inference performance with the A100 is blazing fast. Partnering with Google Cloud has given us many exceptional options to deploy AI in the way that works best for us.” – Betterview

See you at KubeCon EU

On Monday, May 3, join us at Build with GKE + Anthos, co-located with KubeCon EU, to kickstart or accelerate your Kubernetes development journey. We’ll cover everything from how to code, build and run a containerized application, to how to operate, manage, and secure it. You’ll get access to technical demos that go deep into our Kubernetes services, developer tools, operations suite and security solutions. We look forward to partnering with you on your Kubernetes journey!

Source: Google Cloud Platform