This blog post was contributed to by Chin Lai The, Technical Specialist, SAP on Azure.

This is the first in a four-part blog series on designing a great SAP on Azure Architecture, and will focus on designing for security.

Great SAP on Azure Architectures are built on the pillars of security, performance and scalability, availability and recoverability, and efficiency and operations.

Microsoft investments in Azure Security

Microsoft invests $1 billion annually in security research and development and employs 3,500 security professionals across the company. Advanced AI is leveraged to analyze 6.5 trillion global signals from the Microsoft cloud platforms and to detect and respond to threats. Enterprise-grade security and privacy are built into the Azure platform, including ongoing, rigorous validation by real-world tests such as the Red Team exercises. These tests enable Microsoft to test breach detection and response and to accurately measure readiness for, and the impact of, real-world attacks. They are just one of the many operational processes that provide best-in-class security for Azure.

Azure is the platform of trust, with 90 compliance certifications spanning nations, regions, and specific industries such as health, finance, government, and manufacturing. Moreover, Azure Security and Compliance Blueprints can be used to easily create, deploy, and update your compliant environments.

Security – a shared responsibility

It’s important to understand the shared responsibility model between you as a customer and Microsoft. The division of responsibility depends on the cloud model used: SaaS, PaaS, or IaaS. As a customer, you are always responsible for your data, endpoints, and account and access management, irrespective of the chosen cloud deployment.

SAP on Azure is delivered using the IaaS cloud model, which means security protections are built into the service by Microsoft at the physical datacenter, physical network, and physical hosts. However, for all areas beyond the Azure hypervisor (that is, the operating systems and applications), customers need to ensure their enterprise security controls are implemented.

Key security considerations for deploying SAP on Azure

Role-based access control & resource locking

Role-based access control (RBAC) is an authorization system which provides fine-grained access for the management of Azure resources. RBAC can be used to limit access and control permissions on Azure resources for the various teams within your IT operations.

For example, SAP basis team members can be granted permission to deploy virtual machines (VMs) into Azure virtual networks (VNets) while being restricted from creating or configuring VNets. Conversely, members of the networking team can create and configure VNets but are prohibited from deploying or configuring VMs in VNets where SAP applications are running.

We recommend validating and testing the RBAC design early during the lifecycle of your SAP on Azure project.
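One way to reason about such a design before testing it in Azure is to model it in a few lines of code. The following is a minimal sketch, not Azure's actual evaluation engine: the two role definitions and the `is_permitted` helper are hypothetical, and the sketch ignores assignment scope (for example, restricting a role to particular VNets). It only mirrors the idea that a role's effective permissions are its `Actions` minus its `NotActions`, with `*` as a wildcard.

```python
from fnmatch import fnmatchcase

# Hypothetical custom roles for illustration only; names and action lists
# are assumptions, not roles defined in this post or built into Azure.
SAP_BASIS_ROLE = {
    "Name": "SAP Basis VM Deployer (hypothetical)",
    "Actions": [
        "Microsoft.Compute/virtualMachines/*",
        "Microsoft.Network/networkInterfaces/*",
        "Microsoft.Network/virtualNetworks/read",
        "Microsoft.Network/virtualNetworks/subnets/join/action",
    ],
    "NotActions": [],
}

NETWORK_TEAM_ROLE = {
    "Name": "SAP Network Operator (hypothetical)",
    "Actions": ["Microsoft.Network/*"],
    "NotActions": [],
}


def is_permitted(role, operation):
    """Return True if the operation matches Actions and no NotActions."""
    def matches(patterns):
        # Compare case-insensitively; '*' matches any run of characters.
        return any(fnmatchcase(operation.lower(), p.lower()) for p in patterns)

    return matches(role["Actions"]) and not matches(role["NotActions"])


if __name__ == "__main__":
    checks = [
        (SAP_BASIS_ROLE, "Microsoft.Compute/virtualMachines/write"),
        (SAP_BASIS_ROLE, "Microsoft.Network/virtualNetworks/write"),
        (NETWORK_TEAM_ROLE, "Microsoft.Network/virtualNetworks/write"),
        (NETWORK_TEAM_ROLE, "Microsoft.Compute/virtualMachines/write"),
    ]
    for role, operation in checks:
        print(role["Name"], operation, is_permitted(role, operation))
```

A table of expected allow and deny results like the `checks` list can double as the test plan when you validate the real role assignments.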

Another important consideration is Azure resource locking, which can be used to prevent accidental deletion or modification of Azure resources such as VMs and disks. We recommend creating the required Azure resources at the start of your SAP project. When all additions, moves, and changes are finished and the SAP on Azure deployment is operational, all resources can be locked. From then on, only a super administrator can unlock a resource and permit the resource (such as a VM) to be modified.
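To make the effect of a lock concrete, here is a small sketch of the two Azure lock levels, `CanNotDelete` and `ReadOnly`. The `operation_allowed` helper and the three coarse operation names are simplifications introduced for illustration; real locks apply to management-plane operations and have more nuance than this model captures.

```python
# Operations blocked by each lock level (simplified model).
# None stands for "no lock applied".
LOCK_BLOCKS = {
    None: set(),
    "CanNotDelete": {"delete"},
    "ReadOnly": {"delete", "modify"},
}


def operation_allowed(lock_level, operation):
    """Return True if 'read', 'modify' or 'delete' passes the given lock."""
    return operation not in LOCK_BLOCKS[lock_level]


if __name__ == "__main__":
    for lock in (None, "CanNotDelete", "ReadOnly"):
        allowed = [op for op in ("read", "modify", "delete")
                   if operation_allowed(lock, op)]
        print(lock, "->", allowed)
```

In this model, a `CanNotDelete` lock guards against accidental deletion only, while a `ReadOnly` lock also blocks modification, which is the behavior described above for an operational deployment.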

Secure authentication

Single sign-on (SSO) provides the foundation for integrating SAP and Microsoft products. For years, Kerberos tokens from Microsoft Active Directory have enabled this capability for both SAP GUI and web-browser-based applications when combined with third-party security products.

When a user logs on to their workstation and successfully authenticates against Microsoft Active Directory, they are issued a Kerberos token. A third-party security product can then use the Kerberos token to handle authentication to the SAP application without the user having to re-authenticate. Additionally, data in transit from the user's front end to the SAP application can be encrypted by integrating the security product with secure network communications (SNC) for DIAG (SAP GUI), RFC and SPNEGO for HTTPS.

Azure Active Directory (Azure AD) with SAML 2.0 can also be used to provide SSO to a range of SAP applications and platforms such as SAP NetWeaver, SAP HANA and the SAP Cloud Platform.

This video demonstrates the end-to-end enablement of SSO between Azure AD and SAP NetWeaver

Protecting your application and data from network vulnerabilities

Network security groups (NSG) contain a list of security rules that allow or deny network traffic to resources within your Azure VNet. NSGs can be associated to subnets or individual network interfaces attached to VMs. Security rules can be configured based on source/destination, port, and protocol.

NSGs influence network traffic for the SAP system. In the diagram below, three subnets are implemented, each having an NSG assigned: FE (Front-End), App and DB.

A public internet user can reach the SAP Web-Dispatcher over port 443

The SAP Web-Dispatcher can reach the SAP Application server over port 443

The App Subnet accepts traffic on port 443 from 10.0.0.0/24

The SAP Application server sends traffic on port 30015 to the SAP DB server

The DB subnet accepts traffic on port 30015 from 10.0.1.0/24.

Public Internet Access is blocked on both App Subnet and DB Subnet.
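The rules listed above can be sketched as a small simulation. This is an illustrative model only, not the Azure API: the `Rule` class and `evaluate` function are hypothetical, and the explicit deny-all rule at priority 4096 is an assumption added so that anything not listed is blocked. What the sketch does reflect is that NSG rules are processed in priority order (lowest number first) and the first matching rule decides.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional


@dataclass(frozen=True)
class Rule:
    priority: int          # lower number = evaluated first
    source: str            # source range in CIDR notation
    port: Optional[int]    # destination port; None matches any port
    allow: bool            # True = allow, False = deny


def evaluate(rules, source_ip, port):
    """Return the decision of the first rule that matches, by priority."""
    for rule in sorted(rules, key=lambda r: r.priority):
        source_matches = ip_address(source_ip) in ip_network(rule.source)
        port_matches = rule.port is None or rule.port == port
        if source_matches and port_matches:
            return rule.allow
    return False  # nothing matched: treat as denied in this sketch


# Inbound rules for the App subnet: HTTPS from the FE subnet only.
APP_NSG = [
    Rule(priority=100, source="10.0.0.0/24", port=443, allow=True),
    Rule(priority=4096, source="0.0.0.0/0", port=None, allow=False),
]

# Inbound rules for the DB subnet: port 30015 from the App subnet only.
DB_NSG = [
    Rule(priority=100, source="10.0.1.0/24", port=30015, allow=True),
    Rule(priority=4096, source="0.0.0.0/0", port=None, allow=False),
]

if __name__ == "__main__":
    print(evaluate(APP_NSG, "10.0.0.4", 443))      # Web Dispatcher to App
    print(evaluate(APP_NSG, "203.0.113.10", 443))  # internet to App
    print(evaluate(DB_NSG, "10.0.1.5", 30015))     # App server to DB
    print(evaluate(DB_NSG, "10.0.0.4", 30015))     # FE subnet to DB
```

The sample addresses such as `10.0.0.4` and `203.0.113.10` are made up for the example; only the two subnet ranges and the ports come from the list above.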

SAP deployments using the Azure virtual datacenter architecture will be implemented using a hub and spoke model. The hub VNet is the central point for connectivity, where an Azure Firewall or another type of network virtual appliance (NVA) is implemented to inspect and control the routing of traffic to the spoke VNet where your SAP applications reside.

Within your SAP on Azure project, we recommend validating that inspection devices and NSG security rules are working as desired. This will ensure that your SAP resources are shielded appropriately against network vulnerabilities.

Maintaining data integrity through encryption methods

Azure Storage service encryption is enabled by default on your Azure Storage account and cannot be disabled. Customer data at rest on Azure Storage is therefore secured by default, with data encrypted and decrypted transparently using 256-bit AES. The encryption and decryption process has no impact on Azure Storage performance and comes at no additional cost. You can let Microsoft manage the encryption keys, or you can manage your own keys with Azure Key Vault. Azure Key Vault can also be used to manage the SSL/TLS certificates that secure interfaces and internal communications within the SAP system.

Azure also offers virtual machine disk encryption using BitLocker for Windows and DM-Crypt for Linux to provide volume encryption for virtual machine operating system and data disks. Disk encryption is not enabled by default.

Our recommended approach to encrypting your SAP data at rest is as follows:

Azure Disk Encryption for SAP Application servers – operating system disk and data disks.

Azure Disk Encryption for SAP Database servers – operating system disks and those data disks not used by the DBMS.

SAP Database servers – leverage Transparent Data Encryption offered by the DBMS provider to secure your data and log files and to ensure the backups are also encrypted.
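The three recommendations above amount to a simple decision rule, sketched below. The function name `encryption_for` and the labels `"application"`, `"database"`, `"os"`, `"data"` and `"dbms"` are hypothetical names chosen for this illustration; the mapping itself restates the list above and does not call any Azure or DBMS API.

```python
def encryption_for(server_role, disk_use):
    """Map a server role and disk usage to the recommended encryption.

    server_role: "application" or "database"
    disk_use:    "os", "data" (not used by the DBMS) or "dbms"
    """
    if disk_use not in ("os", "data", "dbms"):
        raise ValueError(f"unknown disk use: {disk_use!r}")
    if server_role == "application":
        if disk_use == "dbms":
            raise ValueError("application servers hold no DBMS disks")
        return "Azure Disk Encryption"
    if server_role == "database":
        if disk_use == "dbms":
            # Data and log files, and therefore backups, are covered by
            # the encryption feature of the DBMS itself.
            return "Transparent Data Encryption (DBMS)"
        return "Azure Disk Encryption"
    raise ValueError(f"unknown server role: {server_role!r}")


if __name__ == "__main__":
    for role, use in [("application", "os"), ("application", "data"),
                      ("database", "os"), ("database", "data"),
                      ("database", "dbms")]:
        print(role, use, "->", encryption_for(role, use))
```

A check like this can be useful in deployment scripts to flag a disk whose planned encryption method does not match the recommendation.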

Hardening the operating system

Security is a shared responsibility between Microsoft and you as a customer: your customer-specific security controls need to be applied to the operating system, database, and the SAP application layer. For example, you need to ensure the operating system is hardened to eliminate vulnerabilities that could lead to attacks on the SAP database.

Windows, SUSE Linux, Red Hat Linux and others are supported for running SAP applications on Azure, and various images of these operating systems are available within the Azure Marketplace. You can further harden these images to comply with the security policies of your enterprise and with the guidance in the Center for Internet Security (CIS) Microsoft Azure Foundations Benchmark.

Enterprises generally have operational processes in place for updating and patching of their IT software including the operating system. Once an operating system vulnerability has been exposed, it is published in security advisories and usually remediated quickly. The operating system vendor regularly provides security updates and patches. You can use the Update Management solution in Azure Automation to manage operating system updates for your Windows and Linux VMs in Azure. A best practice approach is a selective installation of security updates for the operating system on a regular cadence and installation of other updates such as new features during maintenance windows.

Learn more

Within this blog we have touched upon a selection of security topics as they relate to deploying SAP on Azure. Incorporating solid security practices will lead to a secure SAP deployment on Azure.

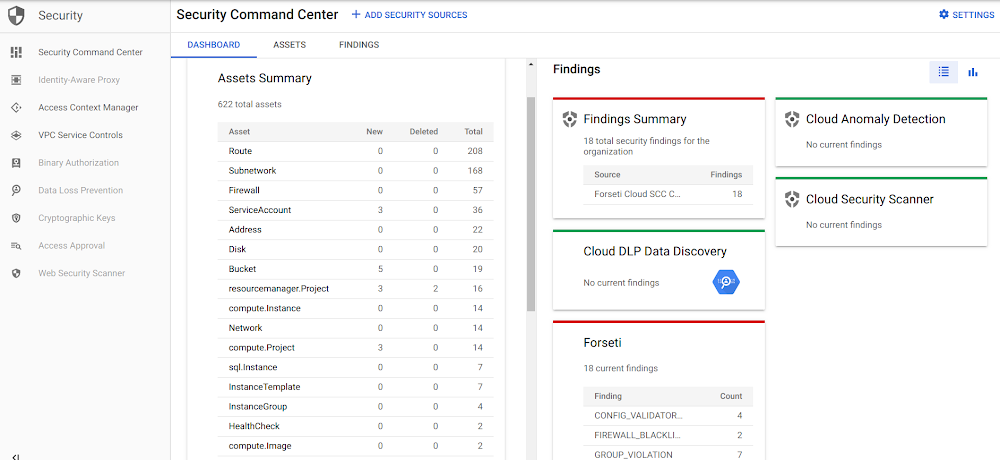

Azure Security Center is the place to learn about the best practices for securing and monitoring your Azure deployments. Also, please read the technical documentation for Azure Security Center and Azure Sentinel to understand how to detect vulnerabilities, generate alerts when exploitations have occurred, and get guidance on remediation.

In blog number two in our series we will cover designing for performance and scalability.

Source: Azure